A Developer’s Guide to Building and Deploying Serverless Multi-Modal AI Agents with Modal

The artificial intelligence landscape is rapidly evolving beyond text-based interactions. The new frontier is multi-modal AI, a paradigm where models can understand, process, and generate information across a diverse range of data types, including text, images, audio, and video. This leap forward, driven by innovations from research hubs like Google DeepMind News and Meta AI News, is unlocking a new class of applications, from sophisticated visual question-answering systems to AI agents that can perceive and interact with the world in a more human-like way. However, deploying these complex, resource-intensive models presents a significant engineering challenge.

This is where serverless GPU platforms enter the picture, and recent Modal News has highlighted its emergence as a powerful solution for developers. Modal simplifies the entire lifecycle of a machine learning application, from development to deployment, by abstracting away the complexities of infrastructure management. It allows developers to define their entire compute environment—including dependencies, hardware (like NVIDIA A100s or H100s), and secrets—directly in Python code. This article provides a comprehensive, hands-on guide to building and deploying a multi-modal AI agent using Modal, covering core concepts, practical implementation, advanced techniques, and optimization best practices.

Understanding the Core Concepts of Multi-Modal AI

At its heart, multi-modal AI is about teaching machines to process and relate information from different sources. Humans do this instinctively; when we see a picture of a cat and read the word “cat,” our brain connects the visual and linguistic information. For an AI, this requires sophisticated architectures capable of bridging the gap between these disparate data modalities.

From Raw Data to a Shared Understanding

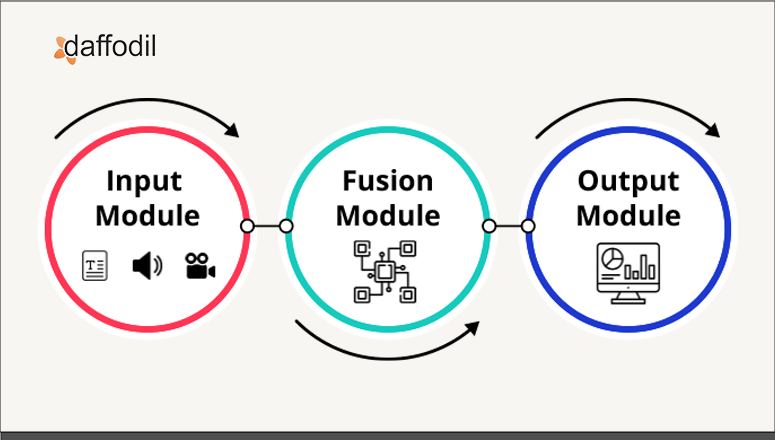

The fundamental challenge is that data from different modalities exists in fundamentally different formats. An image is a grid of pixel values, text is a sequence of tokens, and audio is a waveform. To make sense of them together, models must first convert them into a common mathematical representation, a process known as embedding.

- Embedding: Each modality is passed through a specialized encoder (e.g., a Vision Transformer or ViT for images, a BERT-like model for text) that transforms the raw data into a high-dimensional vector. This vector captures the semantic essence of the input.

- Fusion: Once the data is in this shared “embedding space,” the model can fuse the information. Early fusion combines raw or shallow features at the beginning of the model, while late fusion processes each modality independently and merges the results at the end. More advanced techniques, like cross-attention mechanisms popularized by the Transformer architecture, allow the model to dynamically weigh the importance of different parts of each modality in relation to the others.

Frameworks like PyTorch and TensorFlow provide the building blocks for these architectures, while the Hugging Face Transformers News continues to be dominated by the release of powerful, pre-trained multi-modal models like CLIP, BLIP, and Llava, which significantly lower the barrier to entry for developers.

A Simple Example: Image Captioning with Hugging Face

Before diving into Modal, let’s look at a basic example of using a pre-trained model for image captioning. We’ll use the Salesforce BLIP model, easily accessible through the Hugging Face Transformers library.

import requests

from PIL import Image

from transformers import BlipProcessor, BlipForConditionalGeneration

# Initialize the processor and model from Hugging Face

processor = BlipProcessor.from_pretrained("Salesforce/blip-image-captioning-large")

model = BlipForConditionalGeneration.from_pretrained("Salesforce/blip-image-captioning-large")

# Load an image from a URL

img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg'

raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB')

# Prepare the image for the model

inputs = processor(raw_image, return_tensors="pt")

# Generate the caption

out = model.generate(**inputs)

caption = processor.decode(out[0], skip_special_tokens=True)

print(f"Generated Caption: {caption}")

# Expected output: "a woman sitting on the beach with a dog"This snippet demonstrates the core workflow: load a model, process an input image, and generate a textual output. Now, let’s see how to take this local script and turn it into a scalable, serverless API using Modal.

Building a Serverless Multi-Modal API on Modal

Modal’s core philosophy is to let you define your cloud environment in code. This eliminates the need for manual configuration, Dockerfiles, and complex CI/CD pipelines for simple deployments. We’ll convert our image captioning script into a web endpoint that can be called from anywhere.

Defining the Modal Application

A Modal application consists of a few key components: a `Stub` to define the app, a `modal.Image` to define the container environment, and decorated functions that run on Modal’s infrastructure.

Here’s how to structure the image captioning service:

- Setup: We define a `Stub` named “multi-modal-agent”.

- Container Image: We create a `modal.Image` that specifies our Python dependencies. Modal will build this container image once and cache it for fast subsequent runs. This is a major advantage over platforms where container builds are slow. We include `torch`, `transformers`, `requests`, and `Pillow`.

- Model Loading: We create a class to hold the model. Using a class-based approach allows Modal to leverage container lifecycle functions. The model is loaded once when the container starts (`@modal.enter()`) and remains in GPU memory, ready to serve requests. This is crucial for minimizing cold-start latency.

- Web Endpoint: We define a function decorated with `@stub.function` to specify hardware requirements (e.g., a GPU) and `@modal.web_endpoint` to expose it as an API. This function will take an image URL, process it, and return the caption as JSON.

Full Implementation Code

The entire logic can be contained in a single Python file. This is a major point of Modal News; the simplicity is a stark contrast to the YAML-heavy configurations of AWS SageMaker or Azure Machine Learning for similar tasks.

import io

import requests

from modal import Image, Stub, web_endpoint, enter

# 1. Setup the Stub

stub = Stub("multi-modal-agent")

# 2. Define the container image with all necessary dependencies

model_image = Image.debian_slim().pip_install(

"torch",

"transformers",

"requests",

"Pillow"

)

# Use a GPU for faster inference. Modal offers various types.

GPU_CONFIG = "A10G"

@stub.cls(gpu=GPU_CONFIG, image=model_image)

class ImageCaptioner:

@enter()

def load_model(self):

"""

This method is called once when the container starts.

It downloads and loads the model into memory.

"""

from transformers import BlipProcessor, BlipForConditionalGeneration

from PIL import Image

print("Loading BLIP model...")

self.processor = BlipProcessor.from_pretrained("Salesforce/blip-image-captioning-large")

self.model = BlipForConditionalGeneration.from_pretrained("Salesforce/blip-image-captioning-large")

self.Image = Image

print("Model loaded successfully!")

@web_endpoint(method="POST")

def caption(self, request: dict):

"""

This is the web endpoint that receives requests.

It expects a JSON body with an "image_url" key.

"""

image_url = request.get("image_url")

if not image_url:

return {"error": "image_url not provided"}, 400

try:

# Download and process the image

response = requests.get(image_url, stream=True)

response.raise_for_status()

raw_image = self.Image.open(io.BytesIO(response.content)).convert('RGB')

# Perform inference

inputs = self.processor(raw_image, return_tensors="pt")

out = self.model.generate(**inputs)

caption = self.processor.decode(out[0], skip_special_tokens=True)

print(f"Generated caption for {image_url}: {caption}")

return {"caption": caption}

except Exception as e:

return {"error": str(e)}, 500

# To deploy this, run `modal deploy app.py` in your terminal.

# To run it temporarily, use `modal serve app.py`.To deploy this, you simply run `modal deploy app.py` from your terminal. Modal provides you with a public URL, and your serverless, GPU-powered API is live.

Advanced Techniques: Integrating Vector Databases and Agentic Frameworks

Simple image captioning is just the beginning. The true power of multi-modal agents is unlocked when they can search, reason, and act upon vast amounts of multi-modal data. This often involves integrating with vector databases and agentic frameworks.

Multi-Modal Search with Vector Databases

Imagine building a system where you can search a massive library of images using natural language queries. This requires a vector database like Pinecone, Milvus, or Weaviate. The workflow is as follows:

- Indexing: Process each image in your dataset with a multi-modal encoder (like CLIP) to generate an embedding vector. Store these vectors in the vector database, indexed by their image ID or URL.

- Querying: When a user provides a text query, use the same encoder to generate an embedding for the text.

- Retrieval: Use the text embedding to perform a similarity search (Approximate Nearest Neighbor search) in the vector database. The database returns the IDs of the most visually similar images.

You can easily build a Modal function to perform the query step. This function would take a text query, generate its embedding, and then query your Pinecone or Milvus instance.

from modal import Image, Stub, Secret, web_endpoint, enter

# Note: This is a conceptual example. You would need to have a populated

# Pinecone index for this to work.

stub = Stub("multimodal-search")

search_image = Image.debian_slim().pip_install(

"sentence-transformers", # For CLIP models

"pinecone-client"

)

@stub.cls(

image=search_image,

secrets=[Secret.from_name("my-pinecone-secret")] # Securely manage API keys

)

class VectorSearcher:

@enter()

def setup(self):

import os

import pinecone

from sentence_transformers import SentenceTransformer

# Load CLIP model

self.model = SentenceTransformer('clip-ViT-B-32')

# Connect to Pinecone

pinecone.init(

api_key=os.environ["PINECONE_API_KEY"],

environment=os.environ["PINECONE_ENVIRONMENT"]

)

self.index = pinecone.Index("image-index")

@web_endpoint(method="POST")

def search(self, request: dict):

query = request.get("query")

if not query:

return {"error": "query not provided"}, 400

# Encode the text query into a vector

query_embedding = self.model.encode(query).tolist()

# Query Pinecone for the top 5 most similar image vectors

results = self.index.query(

vector=query_embedding,

top_k=5,

include_metadata=True

)

# Format and return the results

matches = [

{"id": match.id, "score": match.score, "metadata": match.metadata}

for match in results['matches']

]

return {"results": matches}This architecture is incredibly powerful and forms the basis of many modern retrieval-augmented generation (RAG) systems. The latest LangChain News and LlamaIndex News are filled with examples of building such systems over both text and multi-modal data.

Best Practices and Performance Optimization

While Modal abstracts away much of the complexity, following best practices can significantly improve performance and reduce costs.

Optimizing Container Builds

Modal caches `modal.Image` builds. To leverage this, define your dependencies as specifically as possible. If you are experimenting, separate stable dependencies from rapidly changing ones into different `pip_install` calls or layers. This ensures that only the changed layers are rebuilt, saving significant time. For complex dependencies, especially those requiring system-level packages, using a custom Dockerfile with `Image.from_dockerfile()` is a robust option.

Managing Cold Starts

Cold starts are a reality of serverless computing. For a GPU application, this includes container startup time and model loading time.

- Use Container Lifecycle Methods: As shown in our examples, use the `@enter()` method to load your model. This ensures the model is loaded once and kept warm for subsequent requests, dramatically reducing latency for all but the very first request.

- Choose the Right Hardware: A larger, more powerful GPU (like an A100) might load a model faster than a smaller one (like a T4). Balance performance needs with cost.

- Model Caching: Modal automatically caches model weights downloaded from hubs like Hugging Face within the container image, preventing re-downloads on every cold start.

For ultra-low latency, you can explore inference optimization frameworks. Recent NVIDIA AI News often covers updates to tools like TensorRT and the Triton Inference Server, which can be packaged into a Modal container to serve models compiled into formats like ONNX for maximum performance. For large language models, using libraries like vLLM within Modal can provide a massive throughput boost.

Cost Management and Monitoring

Modal’s pay-per-second billing for GPU usage is cost-effective, but it’s important to be mindful.

- Set Concurrency Limits: Configure `container_idle_timeout` in your `@stub.function` or `@stub.cls` to control how long a warm container waits for new requests before shutting down.

- Use the Right Tool for the Job: Don’t use a powerful H100 GPU for a small model that could run efficiently on an A10G.

- Integrate MLOps Tools: Use tools like Weights & Biases or MLflow for experiment tracking. You can easily integrate their Python clients into your Modal functions by adding them as a dependency and using Modal’s `Secret` management for API keys. This helps track performance and resource utilization across different model versions.

Conclusion and Next Steps

Multi-modal AI represents a paradigm shift in how we build intelligent systems, moving us closer to applications that can perceive and reason about the world in a holistic way. However, the operational complexity of deploying these models has historically been a major bottleneck. Serverless GPU platforms, with Modal at the forefront, are fundamentally changing this dynamic.

By allowing developers to define their entire infrastructure stack in Python, Modal drastically reduces the barrier to entry for building and shipping production-grade, multi-modal AI applications. We’ve seen how to progress from a simple local script to a scalable web API and even explored advanced architectures involving vector search. The combination of powerful open-source models from the Hugging Face ecosystem, agentic frameworks like LangChain, and streamlined infrastructure from Modal creates an incredibly potent stack for innovation.

As a next step, consider applying these principles to your own projects. Explore different multi-modal models for tasks like Visual Question Answering (VQA), build a RAG pipeline over your own image dataset, or experiment with generative models that create images from text. With tools like Modal, the path from idea to deployed, scalable multi-modal agent has never been clearer or more accessible.