PyTorch

torch.compile in PyTorch 2.5: Where the Speedup Comes From and Where It Disappears

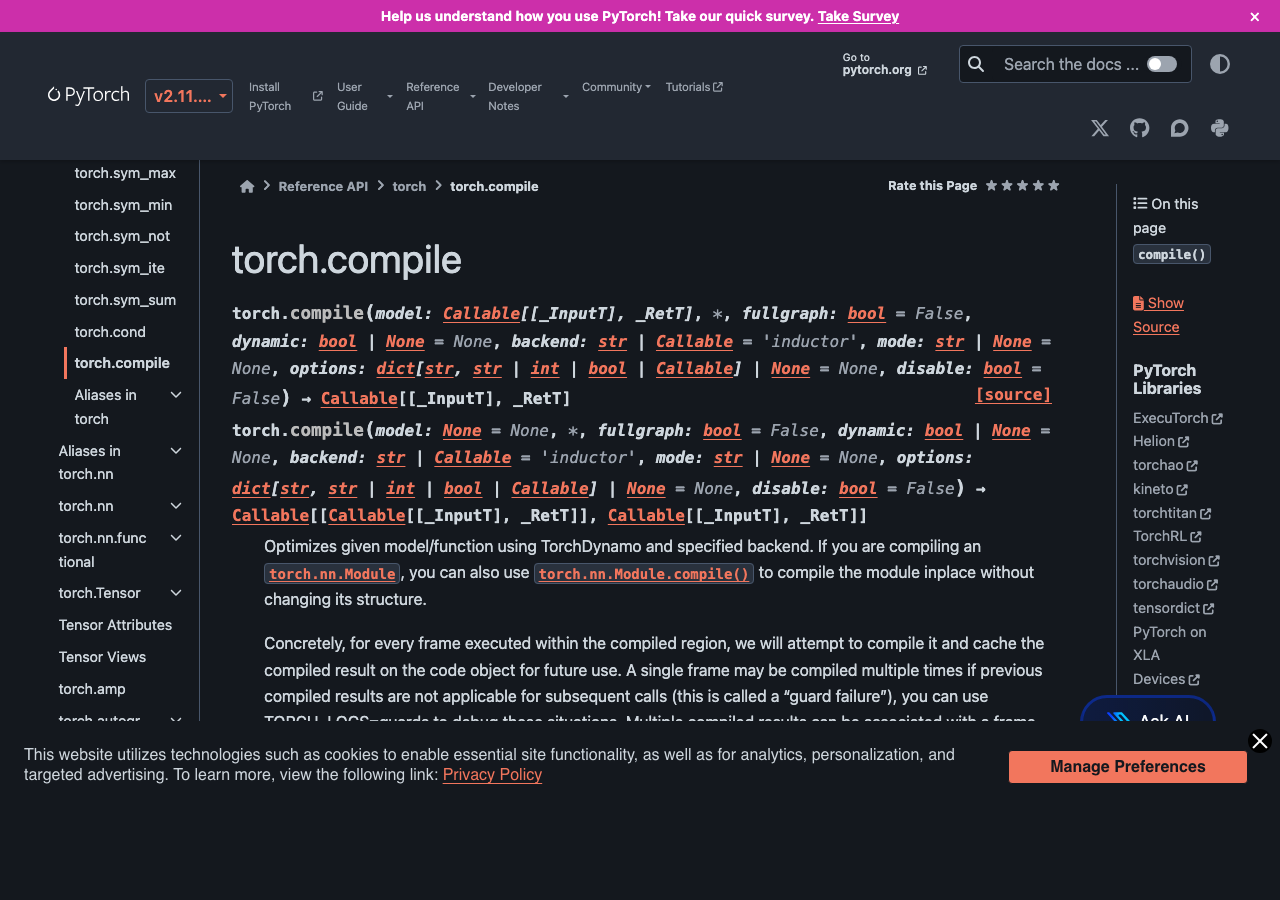

PyTorch 2.5 made torch.compile good enough that you can drop it into a real training script and expect a speedup most of the time.

Mastering Small Language Models: A Deep Dive into Pure PyTorch Implementations for Local AI

The landscape of artificial intelligence is undergoing a significant paradigm shift. While massive proprietary models, such as those from OpenAI, continue to grab headlines…

PyTorch 2.8: Supercharging LLM Inference on CPUs with Intel Optimizations

The world of artificial intelligence is in a constant state of flux, with major developments announced almost daily.

Unpacking PyTorch 2.8: A Deep Dive into CPU-Accelerated LLM Inference

The world of artificial intelligence has long been dominated by the narrative that high-performance computing, especially for Large Language Models…