Beyond Checkmate: How AI Chess Tournaments on Kaggle Are Redefining AI Reasoning

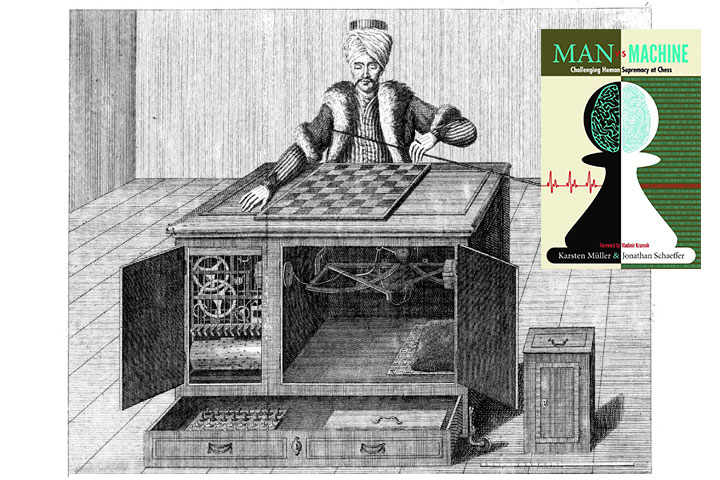

The world of artificial intelligence is in a perpetual state of evolution, constantly seeking more challenging benchmarks to measure its progress. For decades, the game of chess has served as the ultimate intellectual crucible for machine intelligence, from Deep Blue’s historic victory over Garry Kasparov to AlphaZero’s demonstration of self-taught superhuman ability. Today, as AI models, particularly Large Language Models (LLMs), claim increasingly sophisticated cognitive capabilities, the community is once again turning to the 64 squares. Platforms like Kaggle are becoming the modern arenas for these digital gladiators, hosting tournaments designed not just to find the strongest player, but to rigorously evaluate the nuanced reasoning, planning, and strategic depth of the world’s leading AI systems.

These new competitions represent a significant shift. The focus is expanding beyond pure computational power and brute-force search to probe the very nature of AI reasoning. Can an LLM trained on the vast corpus of human text truly understand strategic concepts like positional advantage or a long-term sacrificial attack? How do these models compare to specialized, game-tree-searching engines? This article delves into the technical underpinnings of building and evaluating chess-playing AI, exploring the core concepts, practical implementations, and advanced strategies that define this exciting frontier. We will examine the tools and techniques, from foundational libraries to cutting-edge MLOps practices, that are powering this new wave of AI evaluation, providing a glimpse into the future of machine cognition.

The New Grandmasters: Core Concepts of AI Chess

At its heart, programming an AI to play chess involves three fundamental components: board representation, move generation, and move selection. Understanding these building blocks is crucial before tackling more complex strategies, and the systems coming out of labs such as Google DeepMind and Meta AI build on the same foundations.

1. Board Representation and Game State

Before an AI can “think,” it needs to “see” the board. This involves creating a data structure that accurately represents the position of all pieces, whose turn it is, castling rights, and the remaining game state such as en passant targets and move counters. In Python, the python-chess library is the de facto standard, providing a robust and efficient way to manage the game’s logic.
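As a minimal sketch of what that game state contains, the snippet below splits the FEN string python-chess produces for the starting position into its six standard fields, then reads the same information back through board attributes. The variable names are illustrative only.

```python
import chess

board = chess.Board()  # standard starting position

# A FEN string has six space-separated fields.
placement, side, castling, en_passant, halfmove, fullmove = board.fen().split()

print(placement)   # piece placement, listed from rank 8 down to rank 1
print(side)        # "w" or "b": whose turn it is
print(castling)    # remaining castling rights, e.g. "KQkq"
print(en_passant)  # en passant target square, or "-" if there is none
print(halfmove)    # half-moves since the last capture or pawn move
print(fullmove)    # full-move counter, starting at 1

# The same state is also exposed directly on the board object.
white_to_move = board.turn == chess.WHITE
white_can_castle_short = board.has_kingside_castling_rights(chess.WHITE)
no_en_passant = board.ep_square is None
```

Keeping the whole state in one string is convenient for logging positions or passing them to another process, which is how the LLM example later in this article hands the board to a model.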

This library handles all the complex rules of chess, allowing developers to focus on the decision-making logic. You can easily set up a board, push moves in various notations (like Standard Algebraic Notation or SAN), and check for game-ending conditions like checkmate or stalemate.

    # Filename: chess_basics.py
    # Description: A simple demonstration of the python-chess library to manage a game state.
    import chess


    def demonstrate_board_basics():
        """
        Initializes a chess board, makes a few moves, and prints the final state.
        """
        # Create a new board object in the standard starting position
        board = chess.Board()
        print("Initial board state (FEN):")
        print(board.fen())
        print(board)

        # --- Making some moves ---
        # push_san() validates each move against the current position.
        # An illegal move would raise an exception.

        # 1. e4
        board.push_san("e4")
        # 1... c5 (Sicilian Defense)
        board.push_san("c5")
        # 2. Nf3
        board.push_san("Nf3")

        print("\nBoard state after 1. e4 c5 2. Nf3:")
        print(board.fen())
        print(board)

        # --- Checking game status ---
        print(f"\nIs the game over? {board.is_game_over()}")
        print(f"Is it white's turn? {board.turn == chess.WHITE}")
        print(f"List of legal moves: {[board.san(move) for move in board.legal_moves]}")


    if __name__ == "__main__":
        demonstrate_board_basics()

2. Move Generation and Evaluation

Once the board is represented, the AI needs to know what its options are. The `board.legal_moves` attribute from `python-chess` is a lazily evaluated iterable over every legal move in the current position; wrap it in `list()` when you need to index or count the moves. The real challenge, however, is choosing the *best* move among them. This is where the “evaluation function” comes in. An evaluation function assigns a numerical score to a given board position, typically from the perspective of one player (e.g., positive for White’s advantage, negative for Black’s). A very simple function might just count the material value of the pieces on each side.
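To make the idea concrete, here is a small sketch that lists the legal moves in the starting position and scores two positions by material alone. The piece values are the conventional textbook ones and are an assumption rather than anything python-chess provides; `material_balance` is a hypothetical helper name.

```python
import chess

# Conventional material values in pawns (an assumption; engines tune these).
VALUES = {chess.PAWN: 1, chess.KNIGHT: 3, chess.BISHOP: 3, chess.ROOK: 5, chess.QUEEN: 9}


def material_balance(board: chess.Board) -> int:
    """Material score from White's point of view: positive favors White."""
    balance = 0
    for piece_type, value in VALUES.items():
        balance += value * len(board.pieces(piece_type, chess.WHITE))
        balance -= value * len(board.pieces(piece_type, chess.BLACK))
    return balance


start = chess.Board()
moves = list(start.legal_moves)   # materialize the lazy generator
print(len(moves))                 # 20 legal moves in the starting position
print(material_balance(start))    # 0: material is level

# The starting position with Black's queen removed from d8.
queen_up = chess.Board("rnb1kbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR w KQkq - 0 1")
print(material_balance(queen_up))  # 9: White is a queen ahead
```

A pure material count ignores everything positional (king safety, piece activity, pawn structure), which is why it only serves as a starting point.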

Building Your Digital Chess Contender: A Practical Implementation

With the core concepts in place, we can construct a basic chess-playing agent. The simplest form of such an agent uses a search algorithm to look ahead a few moves, applying its evaluation function to the resulting positions to make a decision. The Minimax algorithm is a classic choice for this kind of adversarial search.

Implementing a Simple Minimax Agent

The Minimax algorithm explores the game tree to a certain depth. It assumes that you (the “maximizer”) will always choose the move that leads to the best possible score for you, while your opponent (the “minimizer”) will always choose the move that leads to the worst score for you (and the best for them). By propagating these scores back up the tree, the algorithm can select the optimal move at the current position, given its limited search depth.
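The recursion described above can be sketched in a few lines. This is an illustrative depth-limited version under simple assumptions, not a tuned engine: it scores leaf positions by material only, and the names `material`, `minimax`, `best_move`, and the `MATE` constant are hypothetical.

```python
import chess

VALUES = {chess.PAWN: 1, chess.KNIGHT: 3, chess.BISHOP: 3, chess.ROOK: 5, chess.QUEEN: 9}
MATE = 1000  # arbitrary score larger than any possible material balance


def material(board):
    """Material balance from White's point of view."""
    return sum(
        value * (len(board.pieces(pt, chess.WHITE)) - len(board.pieces(pt, chess.BLACK)))
        for pt, value in VALUES.items()
    )


def minimax(board, depth):
    """Score of `board` after `depth` plies of best play by both sides."""
    if board.is_checkmate():
        # The side to move has been mated.
        return -MATE if board.turn == chess.WHITE else MATE
    if board.is_game_over():
        return 0  # stalemate and other draws
    if depth == 0:
        return material(board)
    scores = []
    for move in list(board.legal_moves):
        board.push(move)
        scores.append(minimax(board, depth - 1))
        board.pop()
    # White is the maximizer, Black the minimizer.
    return max(scores) if board.turn == chess.WHITE else min(scores)


def best_move(board, depth):
    """Pick the move whose resulting position has the best minimax score."""
    white = board.turn == chess.WHITE
    best, best_score = None, None
    for move in list(board.legal_moves):
        board.push(move)
        score = minimax(board, depth - 1)
        board.pop()
        if best is None or (score > best_score if white else score < best_score):
            best, best_score = move, score
    return best


# Position after 1. e4 e5 2. Nf3 Nc6, White to move.
board = chess.Board("r1bqkbnr/pppp1ppp/2n5/4p3/4P3/5N2/PPPP1PPP/RNBQKB1R w KQkq - 2 3")
print(board.san(best_move(board, 1)))  # Nxe5: grabs a pawn, blind to the recapture
print(board.san(best_move(board, 2)))  # a move that does not lose material
```

At depth 1 the search takes the e5 pawn because it never looks at Black's reply. At depth 2 it sees the knight being recaptured and settles for a move that keeps material level, which is the practical payoff of searching deeper.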

Let’s implement a simple agent that uses a material-based evaluation function and a one-ply (one half-move) search to pick the best move. This is Minimax at depth one: it never considers the opponent’s reply, so it will happily grab material that can be recaptured. It still forms the basis for more complex engines, whose evaluation networks might be trained with frameworks such as PyTorch or TensorFlow and tracked with tools like MLflow or Weights & Biases.

    # Filename: simple_chess_agent.py
    # Description: A basic chess agent that uses a material evaluation function to select a move.
    import random
    from typing import Optional

    import chess

    # A simple mapping of piece types to their approximate material value
    PIECE_VALUES = {
        chess.PAWN: 1,
        chess.KNIGHT: 3,
        chess.BISHOP: 3,
        chess.ROOK: 5,
        chess.QUEEN: 9,
        chess.KING: 0,  # Kings are never captured, so they are left out of the count
    }


    def evaluate_board(board: chess.Board) -> float:
        """
        Calculates the material advantage of the current board state.
        Positive score means White is ahead, negative means Black is ahead.
        """
        total_score = 0.0
        for square in chess.SQUARES:
            piece = board.piece_at(square)
            if piece:
                value = PIECE_VALUES[piece.piece_type]
                if piece.color == chess.WHITE:
                    total_score += value
                else:
                    total_score -= value
        return total_score


    def find_best_move_simple(board: chess.Board) -> Optional[chess.Move]:
        """
        Finds the best move for the current player using a simple one-move lookahead.
        Returns None if the side to move has no legal moves.
        """
        legal_moves = list(board.legal_moves)
        if not legal_moves:
            return None

        # White wants to maximize the score, Black wants to minimize it.
        # Flipping the sign for Black lets one loop handle both sides.
        sign = 1 if board.turn == chess.WHITE else -1
        best_score = -float("inf")
        best_moves = []
        for move in legal_moves:
            board.push(move)
            score = sign * evaluate_board(board)
            board.pop()  # Backtrack to the original position
            if score > best_score:
                best_score = score
                best_moves = [move]
            elif score == best_score:
                best_moves.append(move)

        # Many moves tie on material; pick one of the best at random
        return random.choice(best_moves)


    if __name__ == "__main__":
        # A common opening position after 1. e4 e5 2. Nf3 Nc6
        board = chess.Board("r1bqkbnr/pppp1ppp/2n5/4p3/4P3/5N2/PPPP1PPP/RNBQKB1R w KQkq - 2 3")
        print("Current board:")
        print(board)

        best_move_for_white = find_best_move_simple(board)
        print(f"\nThe simple agent suggests the move: {board.san(best_move_for_white)}")

        board.push(best_move_for_white)
        print("\nBoard after the suggested move:")
        print(board)

Advanced Strategies and the LLM Gambit

While simple evaluation functions and Minimax are foundational, state-of-the-art chess AI employs far more sophisticated techniques. Stockfish pairs a highly optimized alpha-beta search with an efficiently updatable neural network (NNUE) evaluation, which has replaced the hand-crafted evaluation of its earlier versions. Neural network-based engines like AlphaZero and Leela Chess Zero instead combine a deep network, trained through self-play reinforcement learning, with Monte Carlo tree search, and they have produced strategic ideas that surprised even top human players. For engines of this kind, GPU tooling from the NVIDIA ecosystem, like CUDA and TensorRT, becomes critical for performance.
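Alpha-beta pruning, mentioned above, returns the same value as plain Minimax while skipping branches that cannot change the result. The sketch below shows the idea on a toy game tree written as nested lists rather than on real chess positions. The function name `alphabeta` and the `visited` bookkeeping are illustrative assumptions, not code from any engine.

```python
import math


def alphabeta(node, maximizing, alpha=-math.inf, beta=math.inf, visited=None):
    """Minimax value of a nested-list game tree, with alpha-beta cutoffs.

    A leaf is a number (a static evaluation); an inner node is a list of children.
    `visited`, if given, collects the leaves that were actually evaluated.
    """
    if not isinstance(node, list):
        if visited is not None:
            visited.append(node)
        return node
    best = -math.inf if maximizing else math.inf
    for child in node:
        value = alphabeta(child, not maximizing, alpha, beta, visited)
        if maximizing:
            best = max(best, value)
            alpha = max(alpha, best)
        else:
            best = min(best, value)
            beta = min(beta, best)
        if alpha >= beta:
            break  # the other side already has a better option elsewhere
    return best


# Root is a maximizing node with two minimizing children.
tree = [[3, 5], [2, 9]]
seen = []
print(alphabeta(tree, maximizing=True, visited=seen))  # 3
print(seen)  # [3, 5, 2]: the leaf 9 is never evaluated
```

Once the first branch guarantees the maximizer a score of 3, the second branch is abandoned as soon as it shows a 2, because the minimizer would never let it score higher than that. On real chess trees the savings depend heavily on examining strong moves first.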

The Rise of LLMs in Strategic Games

The most recent and fascinating development is the application of LLMs to chess. Unlike specialized engines, general-purpose models from providers such as OpenAI, Anthropic, or Mistral AI are not explicitly programmed with chess rules or search algorithms. Instead, they attempt to play based on the patterns they have learned from ingesting immense amounts of text, including large numbers of recorded chess games. This tests their ability to reason symbolically and strategically.

A key challenge is that LLMs can "hallucinate" and suggest illegal moves. Therefore, a practical implementation requires a validation layer. We can prompt an LLM for a move and then use `python-chess` to verify its legality before executing it. This hybrid approach leverages the potential strategic insight of the LLM while ensuring the integrity of the game. Frameworks like LangChain or LlamaIndex can help structure these multi-step interactions with models.
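One practical wrinkle with such a validation layer is that real model replies often wrap the move in extra prose, even when the prompt asks for the move alone. The sketch below is one possible way to cope with that: it scans the reply token by token and returns the first token that parses as a legal SAN move. The helper name `extract_move` and the punctuation it strips are assumptions made for illustration.

```python
import re
from typing import Optional

import chess


def extract_move(board: chess.Board, reply: str) -> Optional[chess.Move]:
    """Return the first token in `reply` that parses as a legal SAN move, else None."""
    for token in reply.split():
        # Drop a leading move number ("3." or "3...") and surrounding punctuation.
        candidate = re.sub(r"^\d+\.+", "", token).strip(".,;:!?\"'()")
        if not candidate:
            continue
        try:
            move = board.parse_san(candidate)
        except ValueError:
            continue  # not SAN at all, or not legal in this position
        # parse_san also accepts null-move notation such as "--"; reject it here.
        if move and board.is_legal(move):
            return move
    return None


board = chess.Board()
print(extract_move(board, "I would play 1.Nf3, developing the knight."))  # g1f3
print(extract_move(board, "Qxf7# wins on the spot!"))  # None
```

This heuristic accepts any square-like token, so a reply such as "the pawn on e4 is weak" would be read as the move e4 if that move happens to be legal. A stricter setup would ask the model for a structured reply and parse only the designated field.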

    # Filename: llm_chess_agent.py
    # Description: A conceptual example of how to interface an LLM with a chess board.
    # NOTE: This is a mock implementation for demonstration purposes. A real version
    # would replace query_llm_for_move() with a call to an LLM client library.
    import random
    from typing import Optional

    import chess


    # MOCK FUNCTION: In a real application, this would make an API call.
    def query_llm_for_move(board_fen: str, player_color: str) -> str:
        """
        Mocks a call to an LLM to get a chess move in SAN format.
        """
        print("\n--- Querying Mock LLM ---")
        print(f"Board FEN: {board_fen}")
        prompt = (
            f"You are a world-class chess grandmaster. It is {player_color}'s turn to move. "
            f"The board state in FEN is: {board_fen}. "
            "What is the best move in Standard Algebraic Notation (SAN)? "
            "Respond with only the move."
        )
        print(f"Prompt: {prompt}")

        # In a real scenario, the LLM might return various text.
        # We are mocking a plausible, but not always legal, response.
        mock_responses = ["Nf3", "e5", "d4", "Be2", "Axf7#", "O-O"]  # Legal, illegal, and malformed moves
        chosen_move = random.choice(mock_responses)
        print(f"Mock LLM Response: '{chosen_move}'")
        print("-------------------------\n")
        return chosen_move


    def get_llm_move(board: chess.Board) -> Optional[chess.Move]:
        """
        Queries an LLM for a move and validates it. Retries if the move is illegal,
        then falls back to a random legal move. Returns None if no legal move exists.
        """
        legal_moves = list(board.legal_moves)
        if not legal_moves:
            return None

        player_color = "White" if board.turn == chess.WHITE else "Black"
        for _ in range(3):  # Try up to 3 times to get a legal move
            san_move = query_llm_for_move(board.fen(), player_color)
            try:
                return board.parse_san(san_move)
            except ValueError as e:
                # python-chess reports malformed, illegal, and ambiguous SAN
                # with ValueError or one of its subclasses.
                print(f"LLM suggested an invalid move ('{san_move}'). Error: {e}. Retrying...")

        print("LLM failed to provide a legal move after 3 attempts. Falling back to random move.")
        return random.choice(legal_moves)


    if __name__ == "__main__":
        board = chess.Board()
        print("Initial board:")
        print(board)

        # Get a move from our LLM agent for White
        llm_suggested_move = get_llm_move(board)
        if llm_suggested_move:
            print(f"Validated LLM move for White: {board.san(llm_suggested_move)}")
            board.push(llm_suggested_move)
            print("\nBoard after LLM's move:")
            print(board)

Best Practices and Optimization for Competition

Success in a Kaggle competition or any AI chess tournament requires more than just a clever algorithm. It demands rigorous engineering, optimization, and adherence to best practices throughout the MLOps lifecycle.

1. Environment and Reproducibility

Competitions run in standardized environments. It's crucial to develop your agent using tools that ensure reproducibility. Containerization with Docker is standard practice. Platforms like Google Colab and AWS SageMaker provide managed environments that help streamline development, while MLOps platforms like Vertex AI and Azure Machine Learning offer robust pipelines for training and deployment.

2. Performance and Inference Optimization

In timed matches, every millisecond of inference comes straight off your clock. For neural network models developed with Keras or JAX, this is where optimization tooling earns its place. ONNX (Open Neural Network Exchange) provides a standard format for models, which can then be accelerated using runtimes like OpenVINO on Intel hardware or TensorRT on NVIDIA GPUs. For LLMs, specialized inference servers like Triton Inference Server or libraries like vLLM can substantially increase throughput.
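Fast inference only helps if the agent also knows when to stop thinking. One common way to respect a clock, sketched here as an assumption rather than something a particular tournament prescribes, is iterative deepening: search at depth 1, then 2, and so on, keeping the result of the last search that finished in time. The names `search_with_budget` and `fake_search` are hypothetical, and the clock is passed in as a parameter so the logic can be tested without real delays.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def search_with_budget(
    search_at_depth: Callable[[int], T],
    budget_seconds: float,
    max_depth: int = 64,
    clock: Callable[[], float] = time.monotonic,
) -> Optional[T]:
    """Run ever-deeper searches and return the result of the last completed one.

    The clock is only checked between depths, so one slow iteration can still
    overrun the budget; a production agent would also check inside the search.
    """
    deadline = clock() + budget_seconds
    result = None
    for depth in range(1, max_depth + 1):
        if depth > 1 and clock() >= deadline:
            break  # out of time: keep the deepest finished result
        result = search_at_depth(depth)
    return result


# Toy stand-in for a real search: pretend each depth costs `depth` milliseconds.
def fake_search(depth: int) -> str:
    time.sleep(depth / 1000)
    return f"best move found at depth {depth}"


print(search_with_budget(fake_search, budget_seconds=0.05))
```

Depth 1 always runs, so the agent has some move to play even under a tiny budget. In a real agent, `search_at_depth` would wrap a depth-limited search over the current board.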

3. Experiment Tracking and Hyperparameter Tuning

Building a strong AI is an iterative process. Tools like Comet ML and ClearML are invaluable for logging experiments, comparing model versions, and tracking metrics. When tuning the many hyperparameters in a deep learning model or a search algorithm, automated tools quickly become a necessity. Optuna is a popular, framework-agnostic hyperparameter optimization library that can help systematically find the best settings for your agent.

4. Choosing the Right Tools

The modern AI stack is rich and diverse. For data processing at scale, one might look to Apache Spark MLlib. For distributed training of massive models, Ray and DeepSpeed are leading choices. When building interactive demos of your agent, libraries like Gradio or Streamlit make it simple. The key is to select the right tool for each stage, from data ingestion to final deployment, so that the workflow from concept to competitive agent stays manageable.

Conclusion: The Checkered Future of AI Reasoning

The emergence of AI chess tournaments on platforms like Kaggle marks a pivotal moment in the evaluation of artificial intelligence. These competitions are pushing the boundaries beyond raw predictive accuracy, forcing us to develop and benchmark models on their ability to plan, strategize, and reason in complex, adversarial environments. By combining foundational algorithms with cutting-edge deep learning and the novel capabilities of LLMs, developers are creating a new generation of digital grandmasters.

For data scientists and AI engineers, this presents a unique and exciting challenge. The path to building a competitive chess AI involves a mastery of diverse tools, from the `python-chess` library for game logic to MLOps platforms like MLflow and inference optimizers like TensorRT. As models from Cohere, Stability AI, and others continue to advance, these tournaments will serve as a crucial, transparent, and unforgiving measure of their true reasoning capabilities. The ultimate prize isn't just winning a game, but advancing our collective understanding of intelligence itself.