ONNX News: Python 3.13 Support Paves the Way for Next-Gen AI Deployments

In the rapidly evolving landscape of artificial intelligence, interoperability remains a cornerstone of innovation and practical deployment. The ability to train a model in one framework and seamlessly deploy it in another is not just a convenience; it’s a critical requirement for building robust, scalable, and efficient AI systems. At the heart of this interoperability lies the Open Neural Network Exchange (ONNX), an open standard that has become the lingua franca for AI models. As the AI community pushes the boundaries of what’s possible, the foundational tools that support it must also evolve. The latest exciting development in this space is the proactive work to bring ONNX support to Python 3.13. This move, while seemingly an incremental update, is a significant enabler for the entire ecosystem, unlocking performance enhancements and new language features that will shape the future of AI development and deployment. This article explores the core concepts of ONNX, its practical implementation, and why its compatibility with upcoming Python versions is pivotal news for developers and organizations alike.

The Indispensable Role of ONNX in a Multi-Framework World

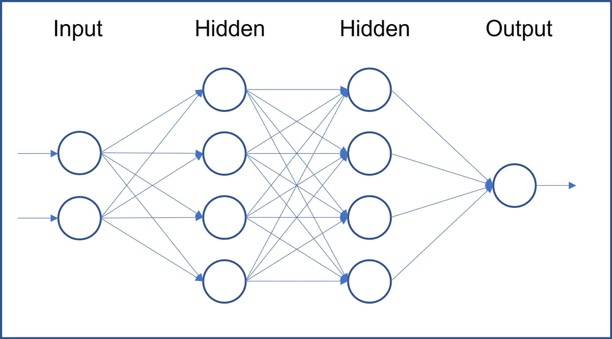

The modern AI ecosystem is a vibrant but fragmented collection of powerful frameworks. Teams may leverage PyTorch for its research-friendly flexibility, TensorFlow or Keras for production-scale training, and JAX for high-performance, hardware-accelerated computation. This specialization is powerful but creates a significant challenge: how do you move a model from a research environment built on PyTorch to a production inference server optimized with NVIDIA’s TensorRT or Intel’s OpenVINO? Rewriting models is time-consuming, error-prone, and inefficient. This is precisely the problem ONNX was created to solve.

What is ONNX and Why Does It Matter?

ONNX is an open-source format for representing machine learning models. It defines a common set of operators (the building blocks of ML models) and a standard file format (.onnx) to structure them. Think of it as a universal translator. A model, once converted to the ONNX format, becomes a self-contained, portable asset that captures the model’s architecture (the computational graph) and its learned parameters (the weights) in a framework-agnostic way. This decoupling of training from inference is transformative. A data scientist can experiment with the latest PyTorch or TensorFlow releases, then hand off a single ONNX file to an MLOps engineer, who can deploy it on a variety of targets, from cloud servers running on Azure AI or AWS SageMaker to resource-constrained edge devices, without ever touching the original training code.

A Practical Export Example: From PyTorch to ONNX

The process of converting a model to ONNX is straightforward in most major frameworks. Let’s look at a simple example of exporting a basic convolutional neural network (CNN) from PyTorch. This is often the first step in any ONNX-based deployment pipeline.

```python
import torch
import torch.nn as nn
import onnx

# 1. Define a simple PyTorch model
class SimpleCNN(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(1, 16, kernel_size=3, stride=1, padding=1)
        self.relu = nn.ReLU()
        self.pool = nn.MaxPool2d(kernel_size=2, stride=2)
        self.fc1 = nn.Linear(16 * 14 * 14, 10)  # Assuming 28x28 input image

    def forward(self, x):
        x = self.conv1(x)
        x = self.relu(x)
        x = self.pool(x)
        x = x.view(-1, 16 * 14 * 14)
        x = self.fc1(x)
        return x

# 2. Instantiate the model and set it to evaluation mode
model = SimpleCNN()
model.eval()

# 3. Create a dummy input tensor with the correct shape
# The batch size is set to 1, but can be made dynamic
dummy_input = torch.randn(1, 1, 28, 28)
onnx_model_path = "simple_cnn.onnx"

# 4. Export the model to ONNX format
torch.onnx.export(
    model,                     # The model to be exported
    dummy_input,               # A sample input to trace the model's execution
    onnx_model_path,           # Where to save the model
    export_params=True,        # Store the trained weights within the model file
    opset_version=12,          # The ONNX operator set version to use
    do_constant_folding=True,  # Execute constant folding for optimization
    input_names=['input'],     # The model's input names
    output_names=['output'],   # The model's output names
    dynamic_axes={             # Specify dynamic axes for variable batch size
        'input': {0: 'batch_size'},
        'output': {0: 'batch_size'}
    }
)

print(f"Model has been successfully exported to {onnx_model_path}")

# Optional: Verify the exported model
onnx_model = onnx.load(onnx_model_path)
onnx.checker.check_model(onnx_model)
print("ONNX model check passed!")
```

Bridging the Gap: ONNX Runtime and Cross-Platform Inference

Exporting a model to the .onnx format is only the first half of the journey. To actually execute this model and perform inference, you need a specialized engine that can read, interpret, and run the ONNX graph. This is where ONNX Runtime (ORT) comes in. Developed and maintained by Microsoft, ONNX Runtime is a high-performance, cross-platform inference engine designed specifically for ONNX models.

Introducing ONNX Runtime (ORT)

ONNX Runtime is engineered for flexibility and speed. Its key advantage is its extensible architecture of “Execution Providers,” which allows it to leverage hardware-specific acceleration libraries. This means the same ONNX model can be run on a CPU, an NVIDIA GPU using CUDA or TensorRT, an Intel CPU/GPU using OpenVINO, or even on mobile and web platforms. This abstracts away the complexity of hardware optimization from the developer. You provide the ONNX model and list the execution providers you want in priority order, and ORT handles the rest. This makes ORT a core component of many production systems, including deployments on NVIDIA GPUs and in Azure Machine Learning, where performance is paramount.
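As a rough sketch of how provider selection might look in practice, the snippet below filters a preferred list against what the local ONNX Runtime build reports through get_available_providers(). The helper name choose_providers and the preference order are assumptions made for illustration; the sketch falls back to a CPU-only list if onnxruntime is not installed so that it still runs.

```python
def choose_providers(preferred, available):
    """Return the preferred providers that are available, keeping priority order.

    CPUExecutionProvider is appended as a last-resort fallback.
    """
    chosen = [name for name in preferred if name in available]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


# Ask ONNX Runtime which providers this installation supports. If the package
# is not installed, assume CPU only so the sketch still runs.
try:
    import onnxruntime as ort
    available = ort.get_available_providers()
except ImportError:
    available = ["CPUExecutionProvider"]

# Hypothetical preference order: fastest accelerator first, CPU last.
preferred = ["TensorrtExecutionProvider", "CUDAExecutionProvider", "CPUExecutionProvider"]
providers = choose_providers(preferred, available)
print("Providers to request:", providers)
```

The resulting list can be passed to InferenceSession through its providers argument, so the same script runs unchanged on a GPU server and on a CPU-only laptop.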

Running Inference with ONNX Runtime in Python

Once you have your .onnx file, using ONNX Runtime in Python is remarkably simple. The onnxruntime library provides a clean and intuitive API for loading models and running predictions. Let’s use the simple_cnn.onnx model we exported earlier.

```python
import onnxruntime as ort
import numpy as np

# 1. Set the path to the ONNX model
onnx_model_path = "simple_cnn.onnx"

# 2. Create an inference session
# You can specify execution providers, e.g., ['CUDAExecutionProvider', 'CPUExecutionProvider']
session = ort.InferenceSession(onnx_model_path, providers=['CPUExecutionProvider'])

# 3. Get model input and output names
input_name = session.get_inputs()[0].name
output_name = session.get_outputs()[0].name
print(f"Input Name: {input_name}")
print(f"Output Name: {output_name}")

# 4. Prepare a sample input tensor
# The input must be a NumPy array. Let's create a batch of 4 random images.
batch_size = 4
input_data = np.random.randn(batch_size, 1, 28, 28).astype(np.float32)

# 5. Run inference
# The input is provided as a dictionary mapping input names to NumPy arrays
results = session.run([output_name], {input_name: input_data})

# 6. Process the output
# The result is a list of NumPy arrays
output_tensor = results[0]
print(f"Output shape: {output_tensor.shape}")  # Should be (4, 10) for our batch of 4
print("Inference completed successfully.")

# Example of getting the prediction for the first image in the batch
predicted_class = np.argmax(output_tensor[0])
print(f"Predicted class for the first image: {predicted_class}")
```

The Road to Python 3.13: A Game-Changer for the ONNX Ecosystem

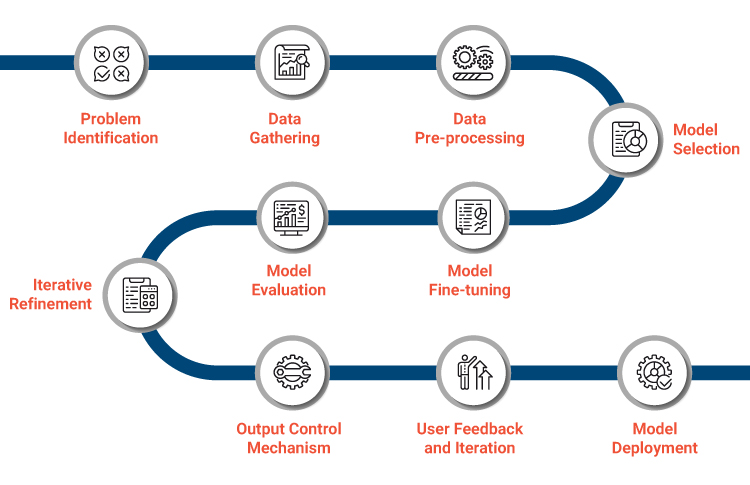

In any complex software stack, especially in machine learning, dependency management is a critical challenge. The entire ecosystem, from data processing libraries to model training frameworks and deployment tools, is interconnected. A single foundational library failing to keep pace with language updates can create a significant bottleneck, preventing entire projects from adopting new, more efficient versions of Python. ONNX is one such cornerstone library: many projects depend on it directly or transitively, which can include applications built with popular frameworks such as LangChain and LlamaIndex.

What’s New in Python 3.13 and Why It Matters for AI

Python 3.13, currently in its alpha stage, promises several features that are particularly exciting for the AI and high-performance computing communities:

- Experimental JIT Compiler: A new copy-and-patch Just-In-Time (JIT) compiler is being introduced. It is experimental and disabled by default, so early gains are expected to be modest, but it lays the groundwork for translating hot Python bytecode into machine code at runtime. ONNX Runtime’s kernels already run as native code, so any benefit would come from the Python-based parts of an inference pipeline, such as pre- and post-processing.

- No-GIL Mode (Experimental): Python 3.13 will offer an experimental build option (--disable-gil) that removes the infamous Global Interpreter Lock (GIL). The GIL has historically prevented Python threads from executing bytecode in parallel on multi-core CPUs. ONNX Runtime already parallelizes the work inside a single inference call using native threads, so the likely gain is on the Python side: with a GIL-free build, multiple threads could handle requests, pre-processing, and post-processing around ONNX Runtime calls in parallel.

- Improved Typing and Performance: General improvements to the language runtime and enhanced static typing features contribute to building more robust, maintainable, and efficient ML systems.
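As a small illustration of the no-GIL point above, the following sketch reports whether the running interpreter is a free-threaded build and then fans out some pure-Python work across threads. The preprocess function is a hypothetical stand-in for per-request pre-processing; on a standard build the threads take turns under the GIL, while on a free-threaded 3.13 build they can run in parallel.

```python
import sys
import sysconfig
from concurrent.futures import ThreadPoolExecutor


def gil_status():
    """Return (free_threaded_build, gil_enabled) for the running interpreter."""
    # Py_GIL_DISABLED is set to 1 only on free-threaded builds of CPython.
    free_threaded_build = bool(sysconfig.get_config_var("Py_GIL_DISABLED"))
    # sys._is_gil_enabled() was added in Python 3.13; older versions always have the GIL.
    check = getattr(sys, "_is_gil_enabled", None)
    gil_enabled = check() if check is not None else True
    return free_threaded_build, gil_enabled


def preprocess(request_id):
    """Hypothetical stand-in for CPU-bound, pure-Python pre-processing."""
    return request_id, sum(i * i for i in range(50_000))


free_threaded, gil_enabled = gil_status()
print(f"Python {sys.version_info.major}.{sys.version_info.minor}: "
      f"free-threaded build={free_threaded}, GIL enabled={gil_enabled}")

# The same code runs on any build; only the degree of real parallelism differs.
with ThreadPoolExecutor(max_workers=4) as pool:
    results = list(pool.map(preprocess, range(8)))
print(f"Processed {len(results)} requests")
```

Timing this loop on a standard build and on a free-threaded build is a simple way to see whether a given workload would benefit.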

The proactive work by the ONNX community to build and test wheels for Python 3.13 ensures that as soon as the new version is released, developers can immediately start experimenting with and benefiting from these features without being blocked by their core model deployment library. This foresight is what keeps the open-source ecosystem healthy and dynamic.

```bash
# Example of how a developer might install a pre-release wheel for a new Python version.
# These commands are illustrative and depend on the project's release process.

# First, ensure you are using a Python 3.13 environment
# python3.13 -m venv venv-313
# source venv-313/bin/activate

echo "Attempting to install pre-release ONNX for Python 3.13..."

# --pre tells pip to also consider pre-release versions
pip install --pre --upgrade onnx onnxruntime

# If official pre-releases aren't on PyPI yet, one might point pip at an extra index.
# The exact URL would be provided by the ONNX maintainers during testing phases.
# pip install onnx --extra-index-url <index URL from the maintainers>

echo "Installation complete. You can now test ONNX with Python 3.13 features."
```

Best Practices and Advanced ONNX Techniques

Beyond basic model conversion and inference, the ONNX ecosystem provides powerful tools for optimization and debugging. Adopting these best practices is crucial for moving from a functional prototype to a production-grade deployment.

Model Optimization and Quantization

Raw exported models are often not optimized for inference. ONNX Runtime includes sophisticated graph optimization capabilities that can be applied automatically. These include techniques like node fusion (merging multiple operations into a single, more efficient one), constant folding, and operator elimination. Furthermore, for deployment on edge devices or to reduce latency, quantization is a powerful technique. It involves converting a model’s weights from 32-bit floating-point (FP32) to lower-precision formats like 8-bit integer (INT8). This drastically reduces model size and can lead to significant speedups on compatible hardware.
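To show what the FP32-to-INT8 conversion does numerically, here is a simplified NumPy sketch of symmetric quantization on a random weight matrix. It is an illustration of the general idea under assumed settings (a single per-tensor scale, random weights), not a reproduction of ONNX Runtime's exact algorithm.

```python
import numpy as np

rng = np.random.default_rng(0)
weights_fp32 = rng.standard_normal((16, 8)).astype(np.float32)

# Symmetric quantization: map [-max_abs, +max_abs] onto the integer range [-127, 127].
max_abs = float(np.abs(weights_fp32).max())
scale = max_abs / 127.0

# Each weight is stored as a single signed byte plus one shared scale factor.
weights_int8 = np.clip(np.round(weights_fp32 / scale), -127, 127).astype(np.int8)

# Dequantize to see how much precision was lost in the round trip.
restored = weights_int8.astype(np.float32) * scale
max_error = float(np.abs(weights_fp32 - restored).max())

print(f"FP32 size: {weights_fp32.nbytes} bytes, INT8 size: {weights_int8.nbytes} bytes")
print(f"scale = {scale:.6f}, max round-trip error = {max_error:.6f}")
```

The stored weights shrink by a factor of four, and the round-trip error stays within about half a quantization step (scale / 2), which is why accuracy often degrades only slightly.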

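With ONNX Runtime, dynamic quantization of an exported model takes a single call:

```python
from onnxruntime.quantization import quantize_dynamic, QuantType

# Path to the original FP32 model
onnx_model_path = "simple_cnn.onnx"

# Path for the new quantized INT8 model
quantized_model_path = "simple_cnn_quant.onnx"

print("Starting dynamic quantization...")

# Perform dynamic quantization.
# Note: ONNX Runtime's documentation generally recommends dynamic quantization
# for RNN and transformer models and static quantization for CNNs, so treat
# this as a minimal demonstration and confirm that the quantized model loads
# and runs on your target execution provider.
quantize_dynamic(
    model_input=onnx_model_path,
    model_output=quantized_model_path,
    weight_type=QuantType.QInt8
)

print(f"Quantized model saved to {quantized_model_path}")
print("Compare the file sizes of the original and quantized models to see the reduction.")
```

Debugging and Visualization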

When an export fails or a model behaves unexpectedly, it’s essential to have tools to inspect the ONNX graph. The most popular tool for this is Netron, a web-based and desktop visualizer for neural network models. By simply opening your .onnx file in Netron, you can explore every node, view its properties, and understand the data flow, which is invaluable for debugging. Additionally, always use the built-in ONNX checker to validate your model’s structure immediately after export.
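When a model loads but behaves unexpectedly, a common complementary check is to compare its outputs against the original framework on the same input. The sketch below shows one way to do that with NumPy; the helper name compare_outputs, the tolerances, and the stand-in arrays are assumptions chosen for illustration.

```python
import numpy as np


def compare_outputs(reference, candidate, rtol=1e-3, atol=1e-5):
    """Compare two output arrays and return (matches, max_abs_diff)."""
    reference = np.asarray(reference, dtype=np.float32)
    candidate = np.asarray(candidate, dtype=np.float32)
    if reference.shape != candidate.shape:
        # A shape mismatch usually points at an export or dynamic-axes problem.
        return False, float("inf")
    max_abs_diff = float(np.abs(reference - candidate).max())
    matches = bool(np.allclose(candidate, reference, rtol=rtol, atol=atol))
    return matches, max_abs_diff


# Stand-in data: in practice `reference` would come from the original framework
# and `candidate` from session.run(...) on the exported model.
reference = np.array([[0.10, 2.50, -1.30]], dtype=np.float32)
candidate = reference + np.float32(1e-6)  # tiny numerical drift
matches, diff = compare_outputs(reference, candidate)
print(f"Outputs match: {matches} (max abs diff: {diff:.2e})")
```

Small differences are expected because kernels differ between runtimes; large ones usually indicate a problem worth inspecting in Netron.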

The structural check itself takes only a few lines:

```python
import onnx

# A simple but crucial best practice after any model export
onnx_model_path = "simple_cnn.onnx"

try:
    onnx_model = onnx.load(onnx_model_path)
    onnx.checker.check_model(onnx_model)
    print("ONNX model is well-formed and valid.")
except Exception as e:
    print(f"ONNX model check failed: {e}")
```

Conclusion: The Future is Open and Interoperable

The continuous evolution of foundational technologies like ONNX is a testament to the strength and collaborative spirit of the open-source AI community. The upcoming support for Python 3.13 is more than just a version bump; it’s a forward-looking move that lets the ecosystem adopt new language features for better performance and productivity as soon as they arrive. By maintaining its role as a common standard for model representation, ONNX empowers developers to choose the best tools for their tasks, from the latest Hugging Face Transformers models to efficient deployment platforms like Triton Inference Server. As AI becomes more integrated into our world, the principles of openness, interoperability, and proactive development championed by projects like ONNX will be more critical than ever in driving the next wave of innovation.