LangSmith Reaches General Availability: A Deep Dive into Production-Grade LLM Observability

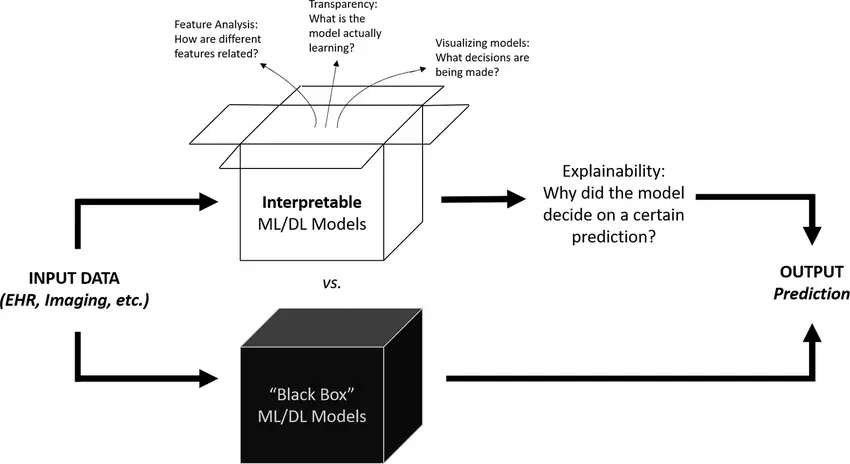

The landscape of Generative AI has shifted dramatically in recent months. We have moved past the initial phase of experimentation, where “vibes-based” evaluation was acceptable, into a mature era of enterprise adoption. With LangChain’s recent announcement of its Series A funding and the General Availability (GA) of LangSmith, the industry now has a production-ready answer to the “black box” problem of Large Language Models (LLMs). For developers and data scientists following LangSmith, this milestone represents a critical evolution in the LLM technology stack.

While frameworks like LangChain and LlamaIndex democratized the construction of LLM applications, taking those applications to production has remained fraught with challenges. Non-deterministic outputs, latency spikes, and hallucination risks make traditional software testing insufficient. This article explores the technical architecture of LangSmith, how it integrates with the broader AI ecosystem (from OpenAI to Hugging Face), and practical implementation strategies for engineering teams aiming to deploy reliable AI agents.

The Core Philosophy: Tracing and Observability

At its heart, LangSmith is an observability platform designed specifically for the complexities of chains and agents. Unlike traditional ML tracking tools such as MLflow or Weights & Biases, which have historically focused on loss curves and training metrics, LangSmith focuses on the interaction layer: it shows exactly what happens between the user input and the final response.

Understanding the Trace

A “trace” in LangSmith visualizes the execution path of an LLM application. Whether you are running a simple prompt-response pair or a complex RAG (Retrieval-Augmented Generation) pipeline backed by a vector store such as Pinecone or Weaviate, LangSmith logs every step. This includes:

- Inputs and Outputs: The exact text sent to and received from the model.

- Latency: How long each step (retrieval, formatting, generation) took.

- Token Usage: Critical for cost estimation with providers like Anthropic or OpenAI.

- Metadata: Custom tags to track environments (staging vs. production) or user IDs.
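To make those kinds of data concrete, here is a stdlib-only sketch of what individual trace steps might record. The `TraceStep` schema, the `traced` decorator, and the two pipeline functions are hypothetical illustrations, not LangSmith’s data model; the LangSmith Python SDK ships its own `traceable` decorator for instrumenting arbitrary functions, and real traces are nested trees rather than the flat list used here. Token usage is omitted because the stand-in functions make no model calls.

```python
import functools
import time
import uuid
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class TraceStep:
    """One recorded step of a trace (hypothetical, simplified schema)."""
    name: str
    inputs: dict
    outputs: Any
    latency_s: float
    metadata: dict = field(default_factory=dict)
    run_id: str = field(default_factory=lambda: str(uuid.uuid4()))


TRACE: list = []  # flat list of steps; real traces nest child runs under parents


def traced(name: str, **metadata: Any) -> Callable:
    """Decorator that records a step's inputs, outputs, latency, and metadata."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(**kwargs: Any) -> Any:
            start = time.perf_counter()
            result = fn(**kwargs)
            TRACE.append(TraceStep(
                name=name,
                inputs=kwargs,
                outputs=result,
                latency_s=time.perf_counter() - start,
                metadata=metadata,
            ))
            return result
        return wrapper
    return decorator


@traced("retrieve", env="staging")
def retrieve(question: str) -> list:
    # Stand-in for a vector-store lookup (e.g. Pinecone or Weaviate)
    return ["LangSmith is an observability platform for LLM applications."]


@traced("generate", env="staging")
def generate(question: str, context: list) -> str:
    # Stand-in for a model call; a real tracer would also record token usage here
    return f"Based on {len(context)} document(s): {context[0]}"


question = "What is LangSmith?"
answer = generate(question=question, context=retrieve(question=question))
for step in TRACE:
    print(f"{step.name:<9} {step.latency_s * 1000:.3f} ms  {step.metadata}")
```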

To start tracing, you typically only need to set a few environment variables; LangChain’s built-in integration then logs every chain run automatically. Here is how you initialize a basic tracing setup from within a Python application:

```python
import os
from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.output_parsers import StrOutputParser

# 1. Set Environment Variables for LangSmith Tracing
os.environ["LANGCHAIN_TRACING_V2"] = "true"
os.environ["LANGCHAIN_API_KEY"] = "ls__your_api_key_here"
os.environ["LANGCHAIN_PROJECT"] = "production-chatbot-v1"

# 2. Define a simple chain
model = ChatOpenAI(model="gpt-4-turbo")
prompt = ChatPromptTemplate.from_template("Explain {topic} to a {audience}.")
output_parser = StrOutputParser()
chain = prompt | model | output_parser

# 3. Invoke the chain (This run is automatically logged to LangSmith)
try:
    response = chain.invoke({"topic": "quantum computing", "audience": "5th grader"})
    print(f"Response: {response}")
except Exception as e:
    print(f"Error during invocation: {e}")
```

This simplicity is deceptive. Behind the scenes, LangSmith captures every step of the chain: the formatted prompt, the model call, and the parsed output. If you swapped the model for a local one served by Ollama, or for an open-source model loaded through Hugging Face Transformers, the tracing mechanism would stay the same, giving you a unified view of your application’s performance regardless of the underlying model architecture.

Evaluation-Driven Development (EDD)

The most significant leap forward with LangSmith’s general availability is its robust evaluation framework. In traditional software, we write unit tests with assertions. In LLM development, “assertions” are fuzzy: Does the summary capture the key points? Is the tone polite?

LangSmith addresses this by letting developers create Datasets (pairs of inputs and expected outputs) and run Evaluators against them. This mirrors a broader shift, visible on platforms such as Azure Machine Learning and AWS SageMaker, from MLOps toward LLMOps.
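Stripped of any SDK, the pattern is small: a dataset is a list of inputs paired with reference outputs, an evaluator scores one prediction against its reference, and an experiment runs the application over every example and aggregates the scores. The sketch below assumes a deliberately crude, hypothetical keyword-recall evaluator and a canned `toy_app` in place of a real chain; LangSmith’s built-in evaluators, including the LLM-as-a-Judge ones, are considerably more capable.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, List


@dataclass
class Example:
    inputs: dict
    reference: str


def keyword_recall(prediction: str, reference: str) -> float:
    """Fraction of the reference's longer words (4+ letters) that appear in the prediction."""
    words = (w.strip(".,?!").lower() for w in reference.split())
    keywords = {w for w in words if len(w) > 3}
    if not keywords:
        return 1.0
    hits = sum(1 for k in keywords if k in prediction.lower())
    return hits / len(keywords)


def run_experiment(app: Callable[[dict], str], dataset: List[Example],
                   evaluator: Callable[[str, str], float]) -> Dict[str, object]:
    """Run the app on every example and aggregate the evaluator's scores."""
    scores = [evaluator(app(ex.inputs), ex.reference) for ex in dataset]
    return {"scores": scores, "mean": mean(scores)}


dataset = [
    Example({"question": "What is LangSmith?"}, "LangSmith is a platform for LLM observability."),
    Example({"question": "How do I optimize latency?"}, "Use caching and smaller models."),
]


def toy_app(inputs: dict) -> str:
    # Hypothetical stand-in for a real chain: returns canned answers
    canned = {
        "What is LangSmith?": "LangSmith is an observability platform for LLM apps.",
        "How do I optimize latency?": "Add caching.",
    }
    return canned[inputs["question"]]


result = run_experiment(toy_app, dataset, keyword_recall)
print(result)
```

Because the evaluator only sees strings, the same harness works unchanged if `toy_app` is replaced by any other callable that maps inputs to an answer.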

Implementing Automated Evaluation

To catch hallucinations in your RAG pipeline, you can use an “LLM-as-a-Judge” evaluator. This approach uses a strong model (like GPT-4) to grade your application’s output against specific criteria (correctness, relevance, harmfulness).
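The mechanics of LLM-as-a-Judge fit in a few lines: format a grading prompt containing the question, the reference answer, and the application’s answer; ask the judge model for a verdict in a fixed format; and parse that verdict into a score. In this sketch the judge is any `str -> str` callable, and `stub_judge` is a hypothetical deterministic stand-in for a strong model such as GPT-4 so the example runs offline. The prompt wording and the `GRADE:` reply format are assumptions, not the prompt LangSmith’s built-in `qa` evaluator uses.

```python
import re
from typing import Callable

JUDGE_TEMPLATE = """You are grading an answer to a question.
Question: {question}
Reference answer: {reference}
Student answer: {prediction}
Is the student answer factually consistent with the reference?
Reply with exactly one line: GRADE: CORRECT or GRADE: INCORRECT"""


def judge_correctness(question: str, reference: str, prediction: str,
                      judge: Callable[[str], str]) -> dict:
    """Ask a judge model for a verdict and map it to a 0/1 correctness score."""
    prompt = JUDGE_TEMPLATE.format(question=question, reference=reference, prediction=prediction)
    reply = judge(prompt)
    match = re.search(r"GRADE:\s*(CORRECT|INCORRECT)", reply, flags=re.IGNORECASE)
    if match is None:
        # Unparseable verdicts are surfaced rather than silently counted as pass or fail
        return {"key": "correctness", "score": None, "comment": reply}
    verdict = match.group(1).upper()
    return {"key": "correctness", "score": 1.0 if verdict == "CORRECT" else 0.0, "comment": reply}


def stub_judge(prompt: str) -> str:
    # Hypothetical stand-in for a strong model; grades by a trivial keyword rule
    student = prompt.split("Student answer: ")[1].split("\n")[0]
    return "GRADE: CORRECT" if "caching" in student.lower() else "GRADE: INCORRECT"


reference = "Use caching and smaller models."
good = judge_correctness("How do I optimize latency?", reference,
                         "Cache repeated queries: caching helps.", stub_judge)
bad = judge_correctness("How do I optimize latency?", reference,
                        "Buy a faster GPU.", stub_judge)
print(good["score"], bad["score"])
```

Returning `None` for an unparseable reply keeps judge formatting errors visible in aggregate results instead of quietly skewing the score.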

The following example demonstrates how to create a dataset and run an evaluation with LangChain’s built-in LLM-as-a-Judge evaluators through the LangSmith SDK:

```python
from langsmith import Client
from langchain.smith import RunEvalConfig, run_on_dataset
from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.output_parsers import StrOutputParser

client = Client()

# 1. Create a Dataset (or reuse it if it already exists)
dataset_name = "RAG_QA_Golden_Set"

if not client.has_dataset(dataset_name=dataset_name):
    dataset = client.create_dataset(dataset_name=dataset_name, description="QA pairs for RAG")
    client.create_examples(
        inputs=[
            {"question": "What is LangSmith?"},
            {"question": "How do I optimize latency?"},
        ],
        outputs=[
            {"answer": "LangSmith is a platform for building production-grade LLM applications."},
            {"answer": "Use caching and smaller models where possible."},
        ],
        dataset_id=dataset.id,
    )

# 2. Define the factory function for your app
def construct_chain():
    # In a real scenario, this would return your actual RAG chain
    model = ChatOpenAI(model="gpt-3.5-turbo")
    prompt = ChatPromptTemplate.from_template("Answer this: {question}")
    return prompt | model | StrOutputParser()

# 3. Configure Evaluators
# 'qa' grades correctness against the reference answer; 'cot_qa' does the same
# but asks the grader to reason step by step (Chain of Thought) first
eval_config = RunEvalConfig(
    evaluators=["qa", "cot_qa"],
)

# 4. Run the evaluation
results = run_on_dataset(
    client=client,
    dataset_name=dataset_name,
    llm_or_chain_factory=construct_chain,
    evaluation=eval_config,
)

print(f"Evaluation Results: {results}")
```

This code snippet highlights the shift from manual inspection to automated metrics. By integrating this evaluation into your CI/CD pipeline, you can catch regressions before they ship: if a prompt change causes the “correctness” score to drop below 90%, the deployment can be halted. This mirrors the model-governance practices promoted by platforms such as DataRobot and Vertex AI.
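A deployment gate of that kind reduces to comparing an aggregate score with a threshold and returning a nonzero exit code when it falls short, which most CI systems treat as a failed step. The sketch below assumes the per-example correctness scores have already been extracted from the evaluation results as a list of floats; the `deployment_gate` name is hypothetical and the 90% threshold mirrors the figure in the text.

```python
from statistics import mean

THRESHOLD = 0.90  # illustrative minimum mean correctness


def deployment_gate(scores: list, threshold: float = THRESHOLD) -> int:
    """Return a process exit code: 0 allows deployment, 1 blocks it."""
    if not scores:
        print("No evaluation scores found; blocking deployment.")
        return 1
    avg = mean(scores)
    if avg < threshold:
        print(f"Mean correctness {avg:.1%} is below {threshold:.0%}; blocking deployment.")
        return 1
    print(f"Mean correctness {avg:.1%} meets {threshold:.0%}; deployment allowed.")
    return 0


# In a CI script you would finish with: sys.exit(deployment_gate(scores))
blocked = deployment_gate([1.0, 1.0, 0.0, 1.0])   # mean 75%
allowed = deployment_gate([1.0, 1.0, 1.0, 0.9])   # mean 97.5%
```

Treating an empty score list as a failure is a deliberate choice: a broken evaluation run should not silently let a release through.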

Advanced Techniques: Feedback Loops and Fine-Tuning

Once your application is live, the challenge shifts to monitoring and improvement. LangSmith allows you to capture user feedback (thumbs up/down) and associate it with specific traces. This data is extremely valuable for fine-tuning, a workflow supported by tools such as LlamaFactory and by model providers such as Mistral AI.

Closing the Data Flywheel

By filtering traces that received positive feedback, you can export high-quality examples to fine-tune a smaller, cheaper model (such as Meta’s Llama 3, or a model hosted on Replicate) that approaches the quality of a larger model on your specific use case. This process is a key lever for cost optimization.
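The filtering-and-export step might look like the following sketch. It assumes runs have already been fetched (for example with the LangSmith SDK’s `Client.list_runs`) and flattened into plain dictionaries; the field names are hypothetical, not LangSmith’s export schema. The output uses the chat-style `messages` JSONL layout accepted by OpenAI’s fine-tuning API and many open-source trainers, but check the exact format your fine-tuning tool expects.

```python
import json

# Hypothetical runs, already flattened; field names are illustrative only
runs = [
    {"id": "run-1", "inputs": {"question": "What is LangSmith?"},
     "outputs": {"answer": "An observability and evaluation platform for LLM apps."},
     "feedback": {"user_score": 1.0}},
    {"id": "run-2", "inputs": {"question": "How do I optimize latency?"},
     "outputs": {"answer": "Buy more GPUs."},
     "feedback": {"user_score": 0.0}},
    {"id": "run-3", "inputs": {"question": "What does a trace record?"},
     "outputs": {"answer": "Inputs, outputs, latency, token usage, and metadata."},
     "feedback": {}},  # no feedback collected for this run
]


def to_finetune_jsonl(runs: list, min_score: float = 1.0) -> str:
    """Keep runs rated at or above min_score and emit chat-format JSONL lines."""
    lines = []
    for run in runs:
        score = run["feedback"].get("user_score")
        if score is None or score < min_score:
            continue  # skip unrated and negatively rated runs
        record = {"messages": [
            {"role": "user", "content": run["inputs"]["question"]},
            {"role": "assistant", "content": run["outputs"]["answer"]},
        ]}
        lines.append(json.dumps(record))
    return "\n".join(lines)


jsonl = to_finetune_jsonl(runs)
print(jsonl)
```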

Here is how you might programmatically attach feedback to runs in a web application (e.g., one built with FastAPI or Streamlit):

```python
from langsmith import Client

client = Client()

def log_user_feedback(run_id, score, comment):
    """
    Logs user feedback to a specific run in LangSmith.

    Args:
        run_id (str): The ID of the run (trace) generated during the chat.
        score (float): 0.0 to 1.0 (or -1 to 1) representing sentiment.
        comment (str): Text feedback from the user.
    """
    try:
        client.create_feedback(
            run_id,
            key="user_score",
            score=score,
            comment=comment,
        )
        print(f"Feedback logged for run {run_id}")
    except Exception as e:
        print(f"Failed to log feedback: {e}")

# Example usage within a web route
# Assuming 'run_id' was stored in the user's session during generation
# User clicks 'Thumbs Up'
log_user_feedback(
    run_id="a1b2c3d4-e5f6-...",
    score=1.0,
    comment="Great answer, very helpful!",
)
```

This feedback mechanism allows you to query LangSmith for all runs with a `user_score` of 0 (negative feedback), inspect them, correct the ground truth, and add them to your evaluation dataset. This creates a virtuous cycle of improvement, distinguishing a prototype from a production system.
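The triage step can be sketched in plain Python: select runs whose `user_score` is 0, pair each with a human-corrected answer, and turn the result into new dataset examples. The run dictionaries and the `corrections` mapping (standing in for a review queue) are hypothetical; the resulting `inputs` and `outputs` lists have the shape that `client.create_examples` takes in the evaluation example earlier in this article.

```python
# Hypothetical flattened runs with feedback attached
runs = [
    {"id": "run-1", "inputs": {"question": "What is LangSmith?"},
     "outputs": {"answer": "An LLM observability platform."},
     "feedback": {"user_score": 1.0}},
    {"id": "run-2", "inputs": {"question": "How do I optimize latency?"},
     "outputs": {"answer": "Buy more GPUs."},
     "feedback": {"user_score": 0.0}},
    {"id": "run-4", "inputs": {"question": "Summarize this ticket."},
     "outputs": {"answer": "I cannot help with that."},
     "feedback": {"user_score": 0.0}},
]

# Hypothetical reviewer corrections keyed by run ID; run-4 is still awaiting review
corrections = {"run-2": "Use caching and smaller models where possible."}


def build_regression_examples(runs: list, corrections: dict) -> list:
    """Turn negatively rated runs that have a human correction into dataset examples."""
    examples = []
    for run in runs:
        if run["feedback"].get("user_score") != 0:
            continue  # only negative feedback is triaged here
        fixed = corrections.get(run["id"])
        if fixed is None:
            continue  # not reviewed yet; leave it in the queue
        examples.append({"inputs": run["inputs"], "outputs": {"answer": fixed}})
    return examples


examples = build_regression_examples(runs, corrections)
inputs = [ex["inputs"] for ex in examples]
outputs = [ex["outputs"] for ex in examples]
print(inputs, outputs)
```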

Best Practices and Ecosystem Integration

As you scale, simply logging everything is not enough. You must adopt practices that manage the volume of data and integrate with the rest of your stack, such as vector databases like Qdrant or Milvus.

1. Managing Costs and Privacy

Tracing every token in high-throughput applications can be expensive and noisy, so LangSmith supports sampling a fraction of traces. Furthermore, for industries dealing with sensitive data (Finance, Healthcare), masking PII before it reaches the trace logs is vital. While platforms like Snowflake Cortex emphasize keeping data inside a secure environment, LangSmith offers project-level isolation and RBAC (Role-Based Access Control) in its enterprise tier.
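Both controls are simple to reason about in isolation. The sketch below shows head-based sampling (decide once per request whether to trace it) and a recursive, regex-based masking function with a dict-in, dict-out shape; recent versions of the LangSmith Python SDK accept callables of that shape via `Client(hide_inputs=..., hide_outputs=...)`, but check your SDK version’s documentation. The regexes are deliberately crude and hypothetical: they will miss many PII formats and also catch some non-PII digit runs such as dates, so production systems should use a dedicated PII detector.

```python
import random
import re

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(value):
    """Recursively replace e-mail addresses and phone-like digit runs in strings."""
    if isinstance(value, str):
        return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", value))
    if isinstance(value, dict):
        return {key: mask_pii(item) for key, item in value.items()}
    if isinstance(value, list):
        return [mask_pii(item) for item in value]
    return value  # numbers, booleans, None, etc. pass through unchanged


def should_trace(sample_rate: float, rng: random.Random) -> bool:
    """Head-based sampling: trace roughly `sample_rate` of all requests."""
    return rng.random() < sample_rate


masked = mask_pii({"question": "Email jane.doe@example.com or call +1 (555) 123-4567", "turn": 3})
print(masked)

rng = random.Random(0)  # seeded so the sample count is reproducible
kept = sum(should_trace(0.1, rng) for _ in range(10_000))
print(f"Traced {kept} of 10000 requests")
```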

2. The “Prompt Hub” Workflow

Hardcoding prompts in Python files is an anti-pattern. LangSmith includes a Prompt Hub (similar in concept to the model registries in Comet ML or ClearML) where non-technical stakeholders can edit prompts. Developers then pull the latest prompt version via the API.

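```python
from langchain import hub
from langchain_openai import ChatOpenAI
from langchain_core.output_parsers import StrOutputParser

# Pull the latest version of a prompt managed in LangSmith
# (append ":<commit_hash>" to the name to pin a specific version instead)
# This decouples prompt engineering from code deployment
prompt = hub.pull("my-org/customer-support-agent")

# Use it in your chain immediately
model = ChatOpenAI(model="gpt-4-turbo")
output_parser = StrOutputParser()
chain = prompt | model | output_parser
```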
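What decouples prompts from deployments is versioning: every edit becomes a new immutable version, development environments can track the newest one, and production can pin an exact version. LangChain’s `hub.pull` supports pinning with an `owner/repo:commit_hash` reference. The toy in-memory `PromptRegistry` below is a hypothetical illustration of those semantics, not how LangSmith stores prompts; the short content-hash commit IDs are an assumption made for the example.

```python
import hashlib


class PromptRegistry:
    """Toy, in-memory stand-in for a prompt hub (hypothetical, illustration only)."""

    def __init__(self) -> None:
        self._history = {}  # prompt name -> list of (commit_id, template), oldest first

    def push(self, name: str, template: str) -> str:
        """Store a new immutable version and return its short commit ID."""
        commit_id = hashlib.sha256(template.encode("utf-8")).hexdigest()[:8]
        self._history.setdefault(name, []).append((commit_id, template))
        return commit_id

    def pull(self, ref: str) -> str:
        """Resolve 'name' to the latest version, or 'name:commit_id' to a pinned one."""
        name, _, commit_id = ref.partition(":")
        versions = self._history[name]
        if not commit_id:
            return versions[-1][1]
        for stored_id, template in versions:
            if stored_id == commit_id:
                return template
        raise KeyError(f"No version {commit_id!r} for prompt {name!r}")


registry = PromptRegistry()
v1 = registry.push("my-org/customer-support-agent", "Answer politely: {question}")
v2 = registry.push("my-org/customer-support-agent", "Answer politely and concisely: {question}")

print(registry.pull("my-org/customer-support-agent"))         # latest (v2)
print(registry.pull(f"my-org/customer-support-agent:{v1}"))   # pinned (v1)
```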

3. Integration with Local and Cloud Models

LangSmith is model-agnostic. Whether you are using proprietary models such as Google DeepMind’s Gemini and Anthropic’s Claude, or serving open-weights models with vLLM or on RunPod, tracing works the same way. This flexibility is crucial because the “best” model changes almost weekly in the current AI climate, and it allows teams to switch backends without losing their testing history.
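One way to keep that testing history comparable is to make the evaluation harness depend only on a plain `question -> answer` callable, so swapping providers changes the callable and nothing else. In the sketch below, `provider_a` and `provider_b` are hypothetical canned stand-ins for real API clients, and the keyword check is a deliberately simple placeholder for a real evaluator.

```python
from statistics import mean
from typing import Callable, Dict

Backend = Callable[[str], str]

# Shared golden set: (question, keyword the answer should contain)
DATASET = [
    ("How do I optimize latency?", "caching"),
    ("What is LangSmith?", "observability"),
]


def provider_a(question: str) -> str:
    # Hypothetical stand-in for one model provider
    canned = {
        "How do I optimize latency?": "Use caching and smaller models.",
        "What is LangSmith?": "An LLM observability platform.",
    }
    return canned.get(question, "")


def provider_b(question: str) -> str:
    # Hypothetical stand-in for a second provider
    canned = {
        "How do I optimize latency?": "Try a bigger GPU.",
        "What is LangSmith?": "A platform for observability and evaluation.",
    }
    return canned.get(question, "")


def keyword_score(answer: str, keyword: str) -> float:
    return 1.0 if keyword in answer.lower() else 0.0


def compare(backends: Dict[str, Backend]) -> Dict[str, float]:
    """Score every backend on the same dataset with the same evaluator."""
    return {
        name: mean(keyword_score(backend(q), kw) for q, kw in DATASET)
        for name, backend in backends.items()
    }


results = compare({"provider-a": provider_a, "provider-b": provider_b})
print(results)
```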

Conclusion

The transition of LangSmith to General Availability marks a pivotal moment in the AI engineering timeline. It signals that the tools for building LLM applications are catching up to the capabilities of the models themselves. By providing a unified platform for tracing, evaluation, and monitoring, LangSmith addresses a fragmentation that the TensorFlow and PyTorch ecosystems, traditionally focused on training rather than inference orchestration, were not designed to solve.

For developers, the takeaway is clear: Observability is not an afterthought; it is a requirement. Whether you are building with LlamaIndex patterns or standard LangChain agents, a robust evaluation pipeline built on LangSmith can separate successful products from failed experiments. As the ecosystem expands, from NVIDIA hardware acceleration to managed services such as Amazon Bedrock, LangSmith acts as the glue that helps keep your application logic sound, performant, and reliable.

Start by integrating tracing into your development environment today, build your first golden dataset, and move toward a metric-driven development lifecycle. The era of “blind” LLM deployment is over.