Unlocking High-Performance AI Inference: A Deep Dive into the Latest NVIDIA Triton Inference Server Updates

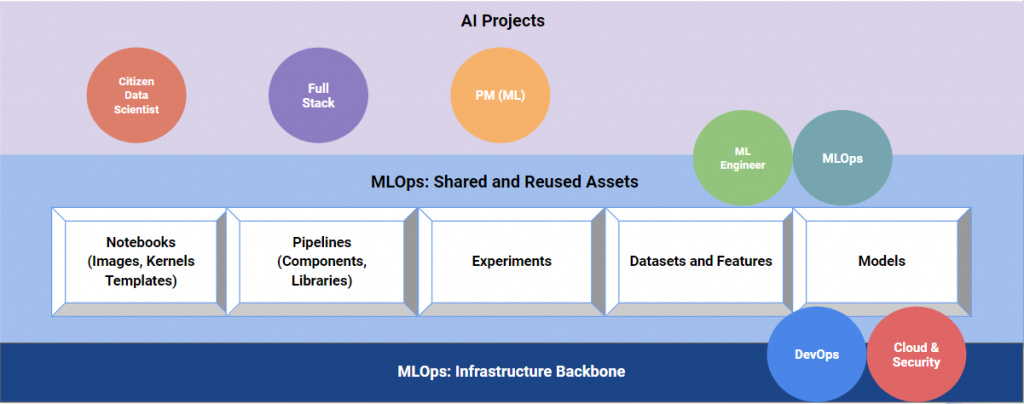

In the rapidly evolving landscape of artificial intelligence, the journey from a trained model to a scalable, production-ready application is fraught with challenges. Latency, throughput, and resource utilization are critical metrics that can make or break an AI-powered service. As models, particularly Large Language Models (LLMs), grow in complexity, the need for a robust, flexible, and high-performance serving solution has never been more acute. This is where NVIDIA Triton Inference Server emerges as a cornerstone of modern MLOps infrastructure.

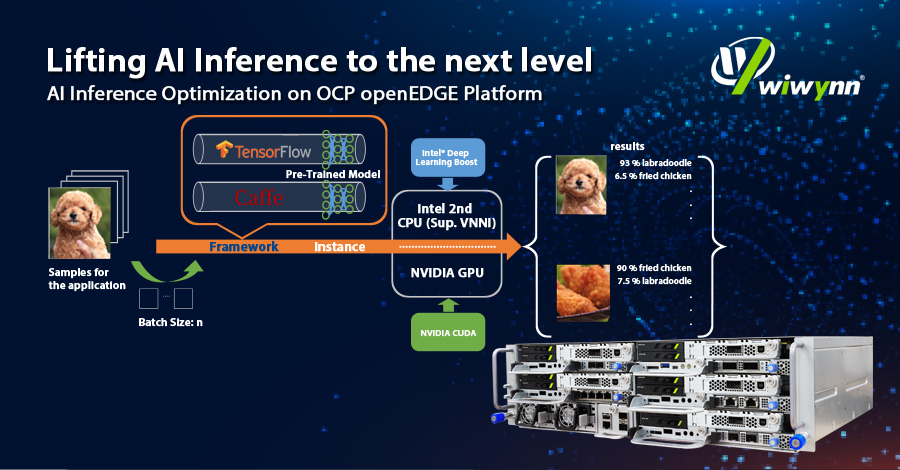

Originally developed to streamline inference for NVIDIA’s internal teams, Triton is now an open-source, production-grade inference server that simplifies the deployment of AI models at scale. It offers a unified interface to serve models from virtually any framework, on any GPU- or CPU-based infrastructure, whether in the cloud, on-premises, or at the edge. Recent developments have significantly expanded its capabilities, solidifying its position as a go-to solution for everything from classic computer vision tasks to cutting-edge generative AI. This article explores the core concepts of Triton, dives into practical implementation details, and highlights the latest news and advanced features that are shaping the future of AI inference.

The Core of Triton: Flexible, High-Throughput Inference

At its heart, Triton Inference Server is designed to solve the operational complexities of deploying diverse AI models. It acts as a standardized bridge between client applications and the underlying hardware, abstracting away the specifics of different model frameworks and optimization libraries. This allows data science and engineering teams to focus on building great models and applications rather than getting bogged down in bespoke deployment code.

What is Triton Inference Server?

Triton is a microservice that listens for inference requests via standard network protocols (gRPC and HTTP/REST). When a request arrives, Triton routes it to the appropriate model, manages batching for optimal hardware utilization, and returns the results. Its power lies in its backend system, a pluggable architecture that allows it to run models from nearly any framework. This matters to PyTorch and TensorFlow users in particular, because models exported from these frameworks can usually be served without changes to the model code. Triton natively supports:

- TensorRT: For highly optimized NVIDIA GPU inference.

- TensorFlow: Both GraphDef and SavedModel formats.

- PyTorch: TorchScript and, more recently, eager-mode models via the Python backend.

- ONNX: The Open Neural Network Exchange format for framework interoperability.

- OpenVINO: For optimized inference on Intel hardware.

- Python & C++: For custom backends that can execute arbitrary logic.
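To make the request path concrete, here is a minimal sketch of what an HTTP inference request looks like before it reaches any backend. Triton's HTTP/REST endpoint follows the KServe v2 inference protocol; the helper `build_infer_request`, the model name `add_sub`, and the tensor names are illustrative assumptions, and nothing is sent over the network.

```python
import json


def build_infer_request(model_name, inputs, host="localhost:8000", version=None):
    """Return (url, body) for a KServe v2 style inference request.

    `inputs` maps a tensor name to (datatype, shape, flat_list_of_values).
    This only builds the payload; it does not contact a server.
    """
    path = f"/v2/models/{model_name}"
    if version is not None:
        path += f"/versions/{version}"
    url = f"http://{host}{path}/infer"

    body = {
        "inputs": [
            {"name": name, "datatype": dtype, "shape": list(shape), "data": list(data)}
            for name, (dtype, shape, data) in inputs.items()
        ]
    }
    return url, json.dumps(body)


# Hypothetical model with two FP32 inputs of shape [1, 4].
url, body = build_infer_request(
    "add_sub",
    {
        "INPUT0": ("FP32", [1, 4], [1.0, 2.0, 3.0, 4.0]),
        "INPUT1": ("FP32", [1, 4], [0.5, 0.5, 0.5, 0.5]),
    },
    version="1",
)
print(url)
print(body)
```

The same request shape applies regardless of which backend serves the model, which is what lets client code stay unchanged when a model is swapped from, say, ONNX to TensorRT.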

Key Features Explained

Three core features set Triton apart and are central to its performance:

- Concurrent Model Execution: Triton can load and run multiple models (or multiple instances of the same model) simultaneously on a single GPU or across multiple GPUs. This helps keep expensive hardware fully utilized.

- Dynamic Batching: This is Triton’s “secret sauce.” It automatically groups individual inference requests from different clients into a single batch on the server side. Processing a batch of inputs is far more efficient for GPUs than processing them one by one. Triton intelligently waits for a configurable amount of time to accumulate a batch, balancing latency against throughput.

- Model Ensembles and Pipelines: Triton can chain models together to create complex inference pipelines. A single request can trigger a sequence of models, where the output of one becomes the input of the next, all handled within the server.

Your First Triton Request: A Simple Client Example

Interacting with a running Triton server is straightforward using its client libraries. The following Python example demonstrates how to send a request to a simple “add_sub” model that takes two input vectors and returns their sum and difference.

    import sys

    import numpy as np
    import tritonclient.http as httpclient

    # Define the server URL
    TRITON_SERVER_URL = "localhost:8000"

    # Create a Triton client
    try:
        triton_client = httpclient.InferenceServerClient(url=TRITON_SERVER_URL)
    except Exception as e:
        print("Channel creation failed: " + str(e))
        sys.exit(1)

    # Define the model name and version
    MODEL_NAME = "add_sub"
    MODEL_VERSION = "1"

    # Create some input data. The array shape must match the shape declared
    # on the InferInput below, otherwise set_data_from_numpy raises an error.
    input0_data = np.random.rand(1, 16).astype(np.float32)
    input1_data = np.random.rand(1, 16).astype(np.float32)

    # Set up the input tensors
    inputs = [
        httpclient.InferInput("INPUT0", [1, 16], "FP32"),
        httpclient.InferInput("INPUT1", [1, 16], "FP32"),
    ]
    inputs[0].set_data_from_numpy(input0_data, binary_data=True)
    inputs[1].set_data_from_numpy(input1_data, binary_data=True)

    # Set up the output tensors
    outputs = [
        httpclient.InferRequestedOutput("OUTPUT0", binary_data=True),
        httpclient.InferRequestedOutput("OUTPUT1", binary_data=True),
    ]

    # Send the inference request
    results = triton_client.infer(
        model_name=MODEL_NAME,
        model_version=MODEL_VERSION,
        inputs=inputs,
        outputs=outputs,
    )

    # Process the results
    output0_data = results.as_numpy("OUTPUT0")
    output1_data = results.as_numpy("OUTPUT1")

    print(f"INPUT0: {input0_data}")
    print(f"INPUT1: {input1_data}")
    print("-" * 20)
    print(f"OUTPUT0 (Sum): {output0_data}")
    print(f"OUTPUT1 (Difference): {output1_data}")

From Model to Production: A Practical Guide to Triton Deployment

Deploying a model with Triton involves creating a specific directory structure known as the “model repository.” By default, Triton loads the models in this repository once at startup. When the server is started in polling mode (`--model-control-mode=poll`), it periodically rechecks the repository, loading new or updated models and unloading removed ones without a restart; an explicit mode lets you load and unload models through the API instead. This is useful for CI/CD workflows in MLOps, since models tracked in platforms such as MLflow or Weights & Biases can be packaged for Triton deployment.

The Model Repository: Structure and Configuration

Every model in the repository must have a specific structure. At a minimum, it requires a directory for the model name and a subdirectory for each version.

    model_repository/
    └── my_model/
        ├── config.pbtxt
        └── 1/
            └── model.plan  # Or model.pt, model.onnx, etc.
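As a rough illustration of the layout above, the following sketch creates the directories and runs a basic sanity check on them. The helper names `scaffold_model_repository` and `check_layout` are hypothetical and not part of Triton; the check only looks at the two structural rules just described.

```python
import tempfile
from pathlib import Path


def scaffold_model_repository(root, model_name, version="1", model_filename="model.onnx"):
    """Create <root>/<model_name>/<version>/ and return the expected file paths."""
    model_dir = Path(root) / model_name
    version_dir = model_dir / version
    version_dir.mkdir(parents=True, exist_ok=True)
    return {
        "config": model_dir / "config.pbtxt",        # model configuration
        "model_file": version_dir / model_filename,  # serialized model
    }


def check_layout(root, model_name):
    """Return a list of layout problems for one model (empty if none found)."""
    model_dir = Path(root) / model_name
    if not model_dir.is_dir():
        return [f"missing model directory: {model_dir}"]
    problems = []
    versions = [p for p in model_dir.iterdir() if p.is_dir() and p.name.isdigit()]
    if not versions:
        problems.append("no numeric version subdirectory found")
    if not (model_dir / "config.pbtxt").is_file():
        problems.append("config.pbtxt not found")
    return problems


with tempfile.TemporaryDirectory() as repo:
    paths = scaffold_model_repository(repo, "my_model")
    print(check_layout(repo, "my_model"))  # config.pbtxt has not been written yet
    paths["config"].write_text('name: "my_model"\n')
    print(check_layout(repo, "my_model"))
```

A check like this can run in a CI job before a model directory is copied into the repository that the server reads from.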

The config.pbtxt file is the most important piece. It’s a Protocol Buffer text file that tells Triton everything it needs to know about the model: its name, the backend to use, the names and data types of its inputs and outputs, and performance-tuning settings like dynamic batching.

    # config.pbtxt for a TensorRT image classification model
    name: "densenet_onnx"
    platform: "tensorrt_plan"
    max_batch_size: 128

    input [
      {
        name: "input__0"
        data_type: TYPE_FP32
        dims: [ 3, 224, 224 ]
      }
    ]

    output [
      {
        name: "output__0"
        data_type: TYPE_FP32
        dims: [ 1000 ]
      }
    ]

    # Enable dynamic batching
    dynamic_batching {
      preferred_batch_size: [4, 8, 16, 32]
      max_queue_delay_microseconds: 100
    }

    # Specify instance groups for GPU execution
    instance_group [
      {
        count: 1
        kind: KIND_GPU
      }
    ]

This configuration defines a model named “densenet_onnx” that uses the TensorRT backend. It specifies one input tensor and one output tensor with their respective names, data types, and shapes. Crucially, it enables dynamic batching with preferred batch sizes and a maximum queue delay of 100 microseconds, allowing Triton to optimize for throughput. This level of control is why Triton is a popular choice on platforms like AWS SageMaker, Azure Machine Learning, and Google’s Vertex AI.

Beyond the Basics: Leveraging Triton’s Advanced Capabilities for Modern AI

While Triton excels at serving traditional models, recent updates have focused on enhancing its flexibility and performance for the new wave of generative AI and complex, multi-stage pipelines. This is where the latest Triton developments get particularly interesting.

The Python Backend: Custom Logic Inside the Server

One of Triton’s most powerful features is the Python backend. It allows you to run arbitrary Python code as a model, effectively turning Triton into a highly performant Python application server. This is ideal for tasks that are difficult to express in traditional model graphs, such as:

- Preprocessing and Postprocessing: Tokenizing text, resizing images, or decoding model outputs into human-readable formats.

- Business Logic: Integrating rule-based systems or calling external APIs as part of an inference pipeline.

- Running Pure Python Models: Serving models from libraries like scikit-learn, XGBoost, or even custom code without needing to convert them to another format.

Here’s an example of a model.py file for a simple tokenizer model using the Hugging Face Transformers library.

    import json

    import numpy as np
    import triton_python_backend_utils as pb_utils
    from transformers import AutoTokenizer


    class TritonPythonModel:
        def initialize(self, args):
            """
            Called once when the model is loaded.
            """
            self.model_dir = args['model_repository']
            self.tokenizer = AutoTokenizer.from_pretrained("bert-base-uncased")
            self.model_config = json.loads(args['model_config'])

        def execute(self, requests):
            """
            This function is called for every batch of inference requests.
            """
            responses = []
            for request in requests:
                # Decode the input tensor (text). Flatten first, because a
                # batched BYTES tensor may arrive shaped [batch, 1].
                input_tensor = pb_utils.get_input_tensor_by_name(request, "TEXT")
                texts = [t.decode('utf-8') for t in input_tensor.as_numpy().reshape(-1)]

                # Tokenize the text
                tokens = self.tokenizer(texts, padding=True, truncation=True, return_tensors="np")

                # Create output tensors
                output_ids = pb_utils.Tensor("input_ids", tokens["input_ids"].astype(np.int64))
                attention_mask = pb_utils.Tensor("attention_mask", tokens["attention_mask"].astype(np.int64))

                # Create a response object
                inference_response = pb_utils.InferenceResponse(output_tensors=[output_ids, attention_mask])
                responses.append(inference_response)

            # Return exactly one response per request, in the same order
            return responses

        def finalize(self):
            """
            Called once when the model is unloaded.
            """
            print('Cleaning up...')

Revolutionizing LLM Serving: vLLM Backend and Generative AI

Perhaps the most significant recent development is the integration of specialized backends for Large Language Models, most notably the vLLM backend. vLLM implements PagedAttention and continuous batching, which can substantially increase throughput for LLM inference. By integrating it as a Triton backend, users get the best of both worlds: vLLM’s performance and Triton’s robust, enterprise-grade serving features. This makes serving open-weight models from sources like Hugging Face, OpenAI, or Mistral AI far more efficient. This integration also supports streaming responses, which is essential for creating responsive chatbots and other interactive generative AI applications built with frameworks like LangChain or LlamaIndex.
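As a rough sketch of how a client might talk to an LLM served this way, the snippet below builds a request for Triton's generate endpoints and extracts text from a streamed reply. It assumes a model deployed under the hypothetical name `vllm_model`, a request body with `text_input` and `parameters` fields, and a stream of `data: {...}` lines carrying a `text_output` field; verify these against the documentation for your Triton and vLLM backend versions. The sample stream lines are made up for illustration, and nothing is sent over the network.

```python
import json


def build_generate_request(model_name, prompt, stream=False, host="localhost:8000", **sampling):
    """Return (url, body) for a generate or generate_stream request."""
    endpoint = "generate_stream" if stream else "generate"
    url = f"http://{host}/v2/models/{model_name}/{endpoint}"
    body = {"text_input": prompt, "parameters": {"stream": stream, **sampling}}
    return url, json.dumps(body)


def iter_stream_text(lines):
    """Yield text fragments from server-sent-event style lines ("data: {...}")."""
    for line in lines:
        line = line.strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and any other fields
        payload = json.loads(line[len("data:"):])
        if "text_output" in payload:
            yield payload["text_output"]


url, body = build_generate_request(
    "vllm_model", "What is Triton Inference Server?", stream=True, temperature=0
)
print(url)
print(body)

# Made-up sample of what a streamed reply could look like.
sample_stream = [
    'data: {"model_name": "vllm_model", "text_output": "Triton "}',
    "",
    'data: {"model_name": "vllm_model", "text_output": "is an inference server."}',
]
print("".join(iter_stream_text(sample_stream)))
```

Consuming the stream fragment by fragment is what lets a chat interface show tokens as they are produced instead of waiting for the full completion.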

Model Ensembles for Complex Pipelines

Triton’s ensemble feature allows you to define a directed acyclic graph (DAG) of model executions. This is configured entirely in the `config.pbtxt` file, which reduces the need for an external orchestrator like Ray or a separate service built with FastAPI to glue models together. For example, you could build a pipeline that first uses a text-to-vector model (like one from Sentence Transformers) and then feeds the resulting vector into a vector database search model that interacts with Milvus or Pinecone.

Here is a conceptual `config.pbtxt` for an ensemble that chains a preprocessing model with a main inference model:

    name: "end_to_end_pipeline"
    platform: "ensemble"
    max_batch_size: 128

    input [
      {
        name: "RAW_IMAGE"
        data_type: TYPE_UINT8
        dims: [ -1 ]  # Variable size image bytes
      }
    ]

    output [
      {
        name: "PREDICTIONS"
        data_type: TYPE_FP32
        dims: [ 1000 ]
      }
    ]

    ensemble_scheduling {
      step [
        {
          model_name: "image_preprocessor"
          model_version: -1  # Latest version
          input_map {
            key: "IMAGE_IN"
            value: "RAW_IMAGE"
          }
          output_map {
            key: "IMAGE_OUT"
            value: "preprocessed_tensor"
          }
        },
        {
          model_name: "densenet_classifier"
          model_version: -1
          input_map {
            key: "input__0"
            value: "preprocessed_tensor"
          }
          output_map {
            key: "output__0"
            value: "PREDICTIONS"
          }
        }
      ]
    }

Optimizing Your Triton Deployment: Best Practices for Peak Performance

Deploying Triton is one thing; optimizing it is another. To extract maximum performance, several best practices should be followed.

Mastering Dynamic Batching

The single most important tuning parameter is dynamic batching. Setting `max_batch_size` too low will underutilize the GPU, while setting it too high can lead to excessive memory consumption. The `preferred_batch_size` array tells Triton which batch sizes your model is most optimized for (e.g., powers of 2). The `max_queue_delay_microseconds` parameter is a critical lever to trade latency for throughput. A lower value means less waiting and lower latency, while a higher value allows Triton to build larger, more efficient batches, increasing throughput.
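The latency and throughput trade-off can be seen in a toy simulation. The sketch below is a deliberately simplified model, not Triton's actual scheduler: it assumes a batch opens when its first request arrives and is dispatched either when it reaches the maximum batch size or when that first request has waited for the queue delay, and it ignores compute time and preferred batch sizes. The function names are hypothetical.

```python
def form_batches(arrivals_us, max_batch_size, max_queue_delay_us):
    """Group arrival timestamps (microseconds) into batches.

    Returns a list of (dispatch_time_us, [arrival_times]) tuples.
    """
    batches = []
    current = []
    for t in sorted(arrivals_us):
        if current and t > current[0] + max_queue_delay_us:
            # The oldest request's deadline passed before this one arrived.
            batches.append((current[0] + max_queue_delay_us, current))
            current = []
        current.append(t)
        if len(current) == max_batch_size:
            # Batch is full: dispatch at this arrival time.
            batches.append((t, current))
            current = []
    if current:
        batches.append((current[0] + max_queue_delay_us, current))
    return batches


def summarize(batches):
    """Return batch count, average batch size, and average queue wait."""
    waits = [dispatch - t for dispatch, batch in batches for t in batch]
    total = len(waits)
    return {
        "batches": len(batches),
        "avg_batch_size": total / len(batches),
        "avg_wait_us": sum(waits) / total,
    }


# One request every 50 microseconds, 20 requests in total.
arrivals = list(range(0, 1000, 50))
for delay in (0, 100, 500):
    print(delay, summarize(form_batches(arrivals, max_batch_size=8, max_queue_delay_us=delay)))
```

In this toy model, raising the delay produces fewer, larger batches at the cost of a longer average wait, which is the same direction of trade-off described above. Real tuning should rely on measurements against the deployed model rather than a simulation like this.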

Model Formats and Optimizations

The choice of model format has a large impact on performance. While Triton can serve native PyTorch and TensorFlow models, performance is usually better with an optimized format. Converting models to ONNX often provides a portable performance boost, but for NVIDIA GPUs, compiling to a TensorRT engine typically yields the highest throughput and lowest latency. Tools like DeepSpeed can also be used to optimize models before they are prepared for Triton.

Monitoring and Warm-up

Triton exposes a Prometheus metrics endpoint out of the box. Monitoring metrics like GPU utilization, inference latency (queue time, compute time), and request count is essential for understanding performance bottlenecks. Furthermore, loading a model can be time-consuming. Triton’s model warm-up feature allows you to provide sample input data that will be used to run mock inferences as soon as the model is loaded. This ensures the GPU is “warm” and ready for traffic, preventing initial requests from experiencing cold-start latency.
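To show how these metrics can be turned into something actionable, the sketch below parses Prometheus text output and derives average queue and compute time per successful request. It assumes the counter names `nv_inference_request_success`, `nv_inference_queue_duration_us`, and `nv_inference_compute_infer_duration_us` with `model` and `version` labels; the sample text and its numbers are made up for illustration rather than captured from a server, and the parser handles only this simple line shape.

```python
import re
from collections import defaultdict

_LINE = re.compile(r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>.*)\})?\s+(?P<value>\S+)$')
_LABEL = re.compile(r'(\w+)="([^"]*)"')


def parse_metrics(text):
    """Parse Prometheus text into {metric_name: {(model, version): value}}."""
    out = defaultdict(dict)
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = _LINE.match(line)
        if not match:
            continue
        labels = dict(_LABEL.findall(match.group("labels") or ""))
        key = (labels.get("model", ""), labels.get("version", ""))
        out[match.group("name")][key] = float(match.group("value"))
    return out


def avg_per_request_us(metrics, duration_metric):
    """Divide a cumulative duration counter by the successful request count."""
    successes = metrics.get("nv_inference_request_success", {})
    durations = metrics.get(duration_metric, {})
    return {
        key: durations[key] / count
        for key, count in successes.items()
        if count > 0 and key in durations
    }


# Illustrative sample only; real output comes from the server's metrics endpoint.
SAMPLE = """
# TYPE nv_inference_request_success counter
nv_inference_request_success{model="densenet_onnx",version="1"} 200
nv_inference_queue_duration_us{model="densenet_onnx",version="1"} 30000
nv_inference_compute_infer_duration_us{model="densenet_onnx",version="1"} 500000
"""

metrics = parse_metrics(SAMPLE)
print(avg_per_request_us(metrics, "nv_inference_queue_duration_us"))
print(avg_per_request_us(metrics, "nv_inference_compute_infer_duration_us"))
```

A queue time that is large relative to compute time usually points at too few model instances or an overly long batching delay, while the opposite suggests the model itself is the bottleneck.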

Conclusion: The Future of Scalable AI Inference

NVIDIA Triton Inference Server has firmly established itself as a critical component in the production AI stack. Its multi-framework support, powerful performance features like dynamic batching, and a robust architecture make it an ideal choice for a wide range of inference workloads. The latest updates, particularly the deep integration with LLM-optimized backends like vLLM and the unmatched flexibility of the Python backend, demonstrate a clear commitment to supporting the next generation of AI applications.

By abstracting the complexity of hardware optimization and model serving, Triton empowers teams to deploy faster, scale more efficiently, and innovate with confidence. For any organization serious about deploying AI in production, mastering Triton is no longer just an option—it’s a strategic advantage. As a next step, explore the official documentation to deploy your first model, experiment with the Python backend for custom preprocessing, and investigate the performance gains offered by the TensorRT and vLLM backends for your specific use case.