Navigating the Frontier of AI Safety: A Technical Deep Dive into DataRobot’s Governance and Trust Features

The Imperative of AI Safety in the Age of Generative AI

The rapid proliferation of artificial intelligence, supercharged by advancements in large language models (LLMs), has shifted the conversation from “what can AI do?” to “how can we ensure AI acts safely and responsibly?” As organizations race to deploy AI solutions, the risks of bias, lack of transparency, hallucinations, and security vulnerabilities have become critical business concerns. Following announcements from industry leaders such as OpenAI, Anthropic, and Google DeepMind is no longer sufficient. Enterprises need a robust, operational framework for AI governance and safety. This is where platforms like DataRobot are carving out a role, providing an end-to-end solution designed to embed trust and safety directly into the AI lifecycle.

Building a powerful model with the latest release of PyTorch or TensorFlow is only half the battle. The real challenge lies in deploying these models confidently, ensuring they align with ethical principles, comply with emerging regulations, and deliver reliable value without introducing unforeseen risks. This article provides a technical deep dive into how DataRobot addresses these challenges. We will explore its core features for bias detection and explainability, its mechanisms for scalable governance, and its safeguards for Generative AI, with conceptual code examples for implementation.

Section 1: Foundations of Trustworthy AI: Bias, Fairness, and Explainability

Before an AI model can be trusted, it must be understood. The foundational pillars of trustworthy AI are fairness, transparency, and explainability. DataRobot integrates these principles directly into its automated machine learning (AutoML) workflow, allowing teams to build not just accurate models, but also equitable and interpretable ones.

Automated Bias and Fairness Detection

AI models trained on historical data can inadvertently learn and amplify existing societal biases. Identifying and mitigating this bias is a critical first step. DataRobot automates this process by allowing users to define “protected features” (e.g., race, gender, age) and then calculating a suite of fairness metrics to assess model behavior across different groups.

Key fairness metrics include:

- Proportional Parity: Measures whether each group receives the favorable predicted outcome at a similar rate.

- Equal Opportunity: Assesses if the model has an equal true positive rate for all groups.

- Predictive Equality: Checks for an equal false positive rate across groups.
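To make these three definitions concrete, here is a minimal sketch that computes the per-group rates behind them from scored records. It uses only the standard library; the `group_rates` helper, the field names, and the sample records are hypothetical and are not part of the DataRobot client.

```python
from collections import defaultdict


def group_rates(records, group_key, label_key="actual", pred_key="predicted"):
    """Return per-group favorable rate, true positive rate, and false positive rate."""
    counts = defaultdict(lambda: {"n": 0, "pred_pos": 0, "tp": 0, "fp": 0, "pos": 0, "neg": 0})
    for row in records:
        c = counts[row[group_key]]
        actual, predicted = row[label_key], row[pred_key]
        c["n"] += 1
        c["pred_pos"] += predicted
        if actual == 1:
            c["pos"] += 1
            c["tp"] += predicted
        else:
            c["neg"] += 1
            c["fp"] += predicted

    rates = {}
    for group, c in counts.items():
        rates[group] = {
            # Proportional parity compares this rate across groups.
            "favorable_rate": c["pred_pos"] / c["n"],
            # Equal opportunity compares the true positive rate.
            "tpr": c["tp"] / c["pos"] if c["pos"] else None,
            # Predictive equality compares the false positive rate.
            "fpr": c["fp"] / c["neg"] if c["neg"] else None,
        }
    return rates


# Hypothetical scored loan applications (1 = approved).
records = [
    {"gender": "F", "actual": 1, "predicted": 1},
    {"gender": "F", "actual": 1, "predicted": 0},
    {"gender": "F", "actual": 0, "predicted": 0},
    {"gender": "F", "actual": 0, "predicted": 0},
    {"gender": "M", "actual": 1, "predicted": 1},
    {"gender": "M", "actual": 1, "predicted": 1},
    {"gender": "M", "actual": 0, "predicted": 1},
    {"gender": "M", "actual": 0, "predicted": 0},
]

rates = group_rates(records, "gender")
for group, values in sorted(rates.items()):
    print(group, values)
```

A large gap between groups on any of the three rates is the signal that a fairness review is needed; which metric matters most depends on the use case.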

Using the DataRobot Python client, you can programmatically kick off a project and later retrieve these fairness insights. This enables integration into automated testing and validation pipelines.

# Example: Initiating a DataRobot project and accessing fairness metrics
# Note: This is a conceptual example. You need an active DataRobot instance and API key.
# The fairness-related names below (FairnessOptions, run_fairness, get_fairness) are
# illustrative. In the public Python client, bias and fairness settings are typically
# passed through dr.AdvancedOptions, so verify every call against your client version.
import datarobot as dr
import pandas as pd

# 1. Connect to DataRobot
# dr.Client(token='YOUR_API_TOKEN', endpoint='https://app.datarobot.com/api/v2')

# 2. Load sample data (e.g., a loan application dataset)
# Fictional data for demonstration; a real project needs far more rows
data = {
    'loan_amount': [10000, 25000, 5000, 30000],
    'credit_score': [720, 650, 750, 680],
    'applicant_gender': ['Male', 'Female', 'Female', 'Male'],
    'loan_approved': [1, 0, 1, 1]
}
df = pd.DataFrame(data)

# 3. Create a project in DataRobot
# project = dr.Project.create(sourcedata=df, project_name='Loan Approval Fairness')

# 4. Set the target and modeling mode
# project.set_target(
#     target='loan_approved',
#     mode=dr.enums.AUTOPILOT_MODE.FULL_AUTO,
#     worker_count=-1
# )

# 5. Define protected features and run fairness calculations
# fairness_options = dr.FairnessOptions(
#     protected_columns=['applicant_gender'],
#     favorable_outcome='1'  # '1' means the loan was approved
# )
# project.run_fairness(fairness_options)
# project.wait_for_autopilot()

# 6. Retrieve fairness scores for a specific model
# best_model = project.get_models()[0]
# fairness = best_model.get_fairness()
# print(f"Fairness metrics for model: {best_model.model_type}")
# for metric in fairness:
#     print(f"- Metric: {metric.name}, Score: {metric.score}")
#     for per_class_metric in metric.per_class_metrics:
#         print(f"  - Class: {per_class_metric.class_name}, Value: {per_class_metric.value}")

Deep Explainability with SHAP and Prediction Explanations

A model that cannot be explained is a black box that cannot be trusted. DataRobot provides multiple layers of Explainable AI (XAI) to demystify model behavior. It automatically generates Feature Impact and Feature Effects plots, showing which variables are most influential. For individual predictions, it provides Prediction Explanations powered by techniques like SHAP (SHapley Additive exPlanations), which assign a contribution value to each feature for a specific prediction. This level of transparency is crucial for debugging, stakeholder communication, and regulatory compliance. Explainability is a common theme across the MLOps world, and tools such as MLflow and Weights & Biases also support model interpretability workflows.
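The idea of a per-feature contribution can be illustrated with exact Shapley values on a toy model. The sketch below is a brute-force calculation that is only practical for a handful of features; SHAP libraries use faster approximations. The `shapley_values` helper, the `toy_score` model, and the sample values are hypothetical and do not reflect how DataRobot computes its explanations internally.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values; features outside a coalition take their baseline value."""
    n = len(instance)
    features = range(n)

    def value(coalition):
        x = [instance[i] if i in coalition else baseline[i] for i in features]
        return predict(x)

    contributions = []
    for i in features:
        others = [j for j in features if j != i]
        phi = 0.0
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (value(s | {i}) - value(s))
        contributions.append(phi)
    return contributions


def toy_score(x):
    """Hypothetical scoring function with one interaction term."""
    income, debt, years = x
    return 0.5 * income - 0.8 * debt + 0.1 * years + 0.2 * income * years


instance = [4.0, 1.0, 3.0]   # the prediction being explained
baseline = [2.0, 2.0, 1.0]   # reference point, e.g. feature averages
phi = shapley_values(toy_score, instance, baseline)

for name, contribution in zip(["income", "debt", "years"], phi):
    print(f"{name}: {contribution:+.3f}")
```

The contributions sum to the difference between the prediction for `instance` and the prediction for `baseline`, which is the property that makes these values useful for explaining a single prediction.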

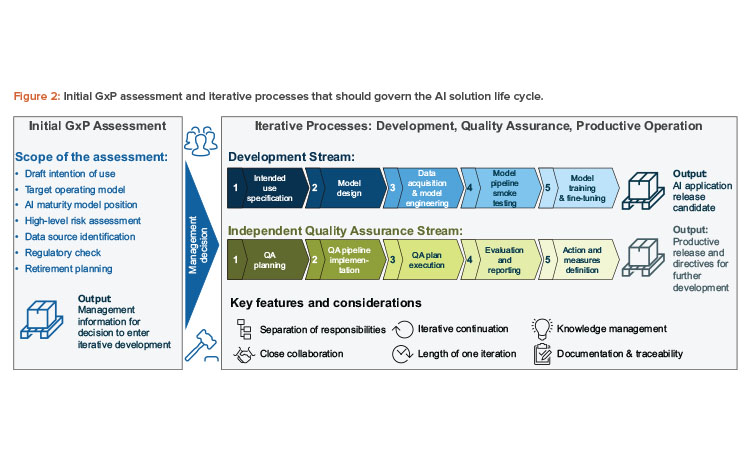

Section 2: Implementing AI Governance and Compliance at Scale

As an organization’s AI footprint grows, managing hundreds or thousands of models becomes a significant governance challenge. Without a centralized system, teams risk deploying redundant, non-compliant, or undocumented models. DataRobot provides the infrastructure to manage this complexity through its centralized Model Registry and automated documentation features.

Centralized Model Registry for Any Model

The DataRobot Model Registry serves as a single source of truth for all predictive models across an enterprise, regardless of their origin. This is a critical feature, as many organizations use a diverse set of tools. One team might build a model with Hugging Face Transformers, another with AWS SageMaker, and a third with DataRobot’s AutoML. The registry can ingest these “external” models, allowing them to be governed under a unified framework.

Registering an external model allows you to track its metadata, version it, and attach compliance documentation, creating a comprehensive inventory for audit and review. You can even deploy and monitor these external models through DataRobot’s MLOps infrastructure.

# Example: Registering an external Scikit-learn model to DataRobot's Model Registry
# This demonstrates the platform's openness to the broader Python ecosystem.
# Note: The DataRobot calls are commented out because they need a live connection.
# Class and method names follow the public datarobot client as best understood;
# confirm them against the documentation for your installed version.
import pickle

import datarobot as dr
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# 1. Train a simple external model
X, y = make_classification(n_samples=100, n_features=10, random_state=42)
model = RandomForestClassifier(random_state=42)
model.fit(X, y)

# 2. Serialize the model object
model_filename = 'external_rf_model.pkl'
with open(model_filename, 'wb') as f:
    pickle.dump(model, f)

# 3. Create the custom model entry, including its target metadata
# Assume connection to DataRobot client is already established
# custom_model = dr.CustomInferenceModel.create(
#     name="External Random Forest",
#     target_type=dr.enums.CUSTOM_MODEL_TARGET_TYPE.BINARY,
#     target_name='classification_target',
#     positive_class_label='1',
#     negative_class_label='0',
#     description="A sample Scikit-learn model registered from an external environment."
# )

# 4. Create a new custom model version from the serialized model and a custom.py
#    script that provides the prediction hooks
# custom_model_version = dr.CustomModelVersion.create_clean(
#     custom_model_id=custom_model.id,
#     base_environment_id="YOUR_PYTHON_ENV_ID",  # Provided by DataRobot
#     files=[(model_filename, model_filename), ("custom.py", "custom.py")]
# )
#
# print(f"Successfully registered model '{custom_model.name}' with version '{custom_model_version.id}'")

Automated Compliance and Model Documentation

Meeting regulatory requirements such as GDPR or the EU AI Act requires meticulous documentation. Manually creating these reports is time-consuming and prone to error. DataRobot automates this by generating comprehensive compliance documentation for any model under its management. This report includes details on the model’s architecture, training data, feature processing steps, performance metrics, and fairness assessments. This “auto-doc” feature significantly reduces the manual burden on data science teams and provides a standardized, auditable record for compliance and risk management teams.
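As a rough illustration of what a standardized report enforces, the sketch below renders a plain-text document from model metadata and refuses to produce one if a section is missing. The section list mirrors the contents named above; the `render_model_report` function and the sample values are hypothetical and do not represent DataRobot's report format.

```python
from datetime import date

# Sections every report must contain, taken from the description above.
REQUIRED_SECTIONS = (
    "architecture",
    "training_data",
    "feature_processing",
    "performance",
    "fairness",
)


def render_model_report(name, version, sections, generated_on=None):
    """Render a plain-text report; raise if any required section is empty or absent."""
    missing = [s for s in REQUIRED_SECTIONS if not sections.get(s)]
    if missing:
        raise ValueError(f"Missing documentation sections: {', '.join(missing)}")

    generated_on = generated_on or date.today()
    lines = [
        f"Model documentation: {name} (version {version})",
        f"Generated: {generated_on.isoformat()}",
        "",
    ]
    for section in REQUIRED_SECTIONS:
        lines.append(section.replace("_", " ").title())
        lines.append(str(sections[section]))
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical metadata for demonstration.
sections = {
    "architecture": "Random forest, 100 trees",
    "training_data": "Loan applications, 2019 to 2023",
    "feature_processing": "Median imputation; one-hot encoding",
    "performance": "AUC 0.81 on holdout",
    "fairness": "Proportional parity ratio 0.92 for applicant_gender",
}
report = render_model_report("Loan Approval", "1.2", sections, generated_on=date(2024, 1, 1))
print(report)
```

Failing loudly on a missing section is the design choice worth copying: an incomplete record is caught when the report is generated rather than during an audit.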

Section 3: Advanced Safeguards for Generative AI and LLMs

The rise of Generative AI brings a new set of challenges. LLMs can hallucinate, produce toxic content, or leak sensitive information. Traditional monitoring is insufficient. DataRobot extends its governance framework to GenAI, providing specialized tools to build, monitor, and secure LLM-based applications.

Implementing Guardrails for LLM Applications

To safely deploy LLMs, you need “guardrails”: policies and technical controls that constrain the model’s behavior. DataRobot allows you to configure multiple layers of guardrails for your LLM applications, whether they are built on models from Cohere, hosted on Amazon Bedrock, or based on fine-tuned open-source models from providers such as Mistral AI.

These guardrails can:

- Detect and block toxic language: Prevent the model from generating harmful or inappropriate content.

- Enforce topic relevance: Ensure the LLM’s responses stay within a predefined domain (e.g., only answer questions about financial products).

- Prevent PII leakage: Scan outputs for personally identifiable information and redact it.

- Check for hallucinations: Integrate with knowledge bases or vector databases such as Pinecone or Weaviate to ground responses in factual data.

These policies can be defined declaratively and applied at deployment time, providing a crucial safety net for production applications.

// Conceptual JSON for defining an LLM guardrail policy in DataRobot
// This policy would be applied to an LLM-powered chatbot deployment.
{
  "deploymentId": "chatbot-prod-v1",
  "guardrails": [
    {
      "name": "ToxicityFilter",
      "enabled": true,
      "parameters": {
        "threshold": 0.85,
        "action": "block_response",
        "message": "Response flagged as potentially harmful. Please rephrase your query."
      }
    },
    {
      "name": "TopicEnforcement",
      "enabled": true,
      "parameters": {
        "allowed_topics": ["customer_support", "product_info", "billing"],
        "action": "redirect",
        "message": "I can only help with customer support, product information, or billing questions."
      }
    },
    {
      "name": "PIIRedaction",
      "enabled": true,
      "parameters": {
        "pii_types": ["EMAIL", "PHONE_NUMBER", "SSN"],
        "action": "redact"
      }
    }
  ]
}
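A policy of this shape could be enforced by a small function that walks the enabled guardrails in order. The sketch below is a simplified illustration, not DataRobot's implementation: `apply_guardrails` is a hypothetical helper, the regular expressions are deliberately simple US-centric patterns, and the toxicity score and topic are assumed to come from upstream classifiers.

```python
import re

# Simplified, US-centric patterns for illustration only.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE_NUMBER": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Python mirror of the conceptual policy above (actions omitted for brevity).
policy = {
    "guardrails": [
        {"name": "ToxicityFilter", "enabled": True, "parameters": {
            "threshold": 0.85,
            "message": "Response flagged as potentially harmful. Please rephrase your query."}},
        {"name": "TopicEnforcement", "enabled": True, "parameters": {
            "allowed_topics": ["customer_support", "product_info", "billing"],
            "message": "I can only help with customer support, product information, or billing questions."}},
        {"name": "PIIRedaction", "enabled": True, "parameters": {
            "pii_types": ["EMAIL", "PHONE_NUMBER", "SSN"]}},
    ]
}


def apply_guardrails(response, policy, toxicity_score, topic):
    """Apply enabled guardrails in order and return the text that is safe to send."""
    for rail in policy["guardrails"]:
        if not rail.get("enabled", False):
            continue
        params = rail["parameters"]
        if rail["name"] == "ToxicityFilter" and toxicity_score >= params["threshold"]:
            return params["message"]          # block the response entirely
        if rail["name"] == "TopicEnforcement" and topic not in params["allowed_topics"]:
            return params["message"]          # redirect to supported topics
        if rail["name"] == "PIIRedaction":
            for pii_type in params["pii_types"]:
                response = PII_PATTERNS[pii_type].sub(f"[{pii_type}]", response)
    return response


safe = apply_guardrails(
    "Email jane.doe@example.com or call 555-123-4567.",
    policy, toxicity_score=0.1, topic="billing",
)
print(safe)
```

Blocking checks run before redaction so that a blocked response never leaks partial content; ordering the guardrail list is therefore part of the policy.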

Custom Metrics and Performance Monitoring for GenAI

Evaluating LLMs requires a new set of metrics. DataRobot allows you to define and track custom metrics tailored to your GenAI application. For a RAG (Retrieval-Augmented Generation) system, you might track metrics for context relevance and answer faithfulness. For a summarization tool, you could monitor for factual consistency. This is an area of active development across the industry, with tools like LangSmith also providing deep insights into LLM chains. DataRobot’s platform allows you to implement custom Python functions to calculate these metrics and visualize them over time.

# Example: A custom evaluation function to check for response evasiveness
# This function could be uploaded to DataRobot to monitor an LLM deployment.
import pandas as pd


def check_for_evasiveness(y_true: pd.Series, y_pred: pd.Series) -> float:
    """
    Custom metric to calculate the percentage of LLM responses that are evasive.

    An evasive response is defined as one containing phrases like "I cannot answer"
    or "I do not have information".

    Args:
        y_true (pd.Series): Ground truth (not used in this metric but required by signature).
        y_pred (pd.Series): The predicted responses from the LLM.

    Returns:
        float: The percentage of evasive responses.
    """
    evasive_phrases = [
        "i cannot answer",
        "i don't know",
        "i do not have information",
        "as an ai model"
    ]

    evasive_count = 0
    total_responses = len(y_pred)

    if total_responses == 0:
        return 0.0

    for response in y_pred:
        response_lower = str(response).lower()
        if any(phrase in response_lower for phrase in evasive_phrases):
            evasive_count += 1

    return (evasive_count / total_responses) * 100

# You would then register this function within the DataRobot platform
# and assign it to a specific LLM deployment for continuous monitoring.
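The answer faithfulness metric mentioned for RAG systems can be approximated in the same spirit. The sketch below is a crude lexical proxy that measures how many of the answer's tokens also appear in the retrieved context; production systems usually rely on entailment models or LLM-based judges instead. The `answer_faithfulness` function and the sample texts are hypothetical.

```python
import re


def _tokens(text):
    """Lowercase alphanumeric tokens, as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def answer_faithfulness(answer, context_passages):
    """Fraction of answer tokens that also appear in the retrieved context (0.0 to 1.0)."""
    answer_tokens = _tokens(answer)
    if not answer_tokens:
        return 0.0
    context_tokens = set()
    for passage in context_passages:
        context_tokens |= _tokens(passage)
    return len(answer_tokens & context_tokens) / len(answer_tokens)


context = ["The refund window is 30 days from delivery."]
grounded = answer_faithfulness("The refund window is 30 days.", context)
ungrounded = answer_faithfulness("Refunds take 90 days", context)
print(grounded, ungrounded)
```

A low score does not prove a hallucination, but tracking the distribution over time can flag deployments whose answers are drifting away from their retrieved sources.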

Section 4: Best Practices and Integration with the Modern AI Stack

Effectively leveraging a platform like DataRobot requires more than just using its features; it involves integrating it into a broader MLOps strategy and adhering to best practices for responsible AI.

Key Best Practices

- Human-in-the-Loop is Non-Negotiable: While automation accelerates development, human oversight is essential. Use DataRobot’s insights to guide decisions, not replace them. Domain experts should always validate fairness assessments and review prediction explanations for critical use cases.

- Monitor Continuously and Holistically: AI safety is not a one-time check. Models drift, data changes, and new vulnerabilities emerge. Use DataRobot MLOps to continuously monitor for performance degradation, data drift, and fairness violations in production.

- Foster Cross-Functional Collaboration: A centralized platform breaks down silos. Use the Model Registry and automated documentation to facilitate collaboration between data scientists, MLOps engineers, legal teams, and business leaders, ensuring everyone is aligned on AI risk and performance.
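To show what a data drift check involves, here is a minimal sketch of the population stability index (PSI), one common drift statistic that compares a feature's distribution at training time with its distribution in production. The `population_stability_index` function, the binning scheme, and the sample data are illustrative assumptions rather than a description of how DataRobot MLOps computes drift.

```python
import math


def population_stability_index(expected, actual, bins=10, epsilon=1e-6):
    """PSI between a baseline sample and a production sample of one numeric feature."""
    if not expected or not actual:
        raise ValueError("Both samples must be non-empty")

    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant baseline

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1   # clamp out-of-range values
        # epsilon avoids log(0) for empty buckets
        return [max(c / len(values), epsilon) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


training = [i / 100 for i in range(100)]            # roughly uniform on [0, 1)
production = [0.5 + i / 200 for i in range(100)]    # shifted toward higher values

psi_same = population_stability_index(training, training)
psi_shifted = population_stability_index(training, production)
print(psi_same, psi_shifted)
```

Identical distributions give a PSI of zero, and the value grows as production data moves away from the baseline, so a scheduled job can alert when it crosses a threshold the team has agreed on.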

Ecosystem Integration

No tool exists in a vacuum. DataRobot is designed to be a central hub in the modern AI stack. It connects to data warehouses and platforms such as Snowflake, pulls code from repositories, and deploys models to various targets. Its API-first approach means it can be integrated into CI/CD pipelines, allowing for automated model validation and deployment triggers.

# Example: A hypothetical CI/CD pipeline step, written in GitLab CI syntax
# (the same idea applies to GitHub Actions with a different file layout).
# This YAML snippet shows how a DataRobot command-line step could deploy a model
# after it passes automated tests, demonstrating integration into DevOps workflows.
# The "mlops-agent deploy" command and its flags are illustrative placeholders;
# substitute the CLI or API call that your DataRobot installation provides.
deploy_to_staging:
  stage: deploy
  script:
    - echo "Deploying model to DataRobot Staging Environment..."
    - pip install datarobot-mlops
    # Set DR_API_TOKEN and DR_ENDPOINT as environment variables in the CI/CD runner
    - mlops-agent deploy --model-id "65a9b8c7d6e5f4a3b21c0d9e" --deployment-name "CreditRiskModel-Staging" --environment "Staging"
    - echo "Deployment initiated. Check the DataRobot UI for status."
  rules:
    - if: '$CI_COMMIT_BRANCH == "main"'
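The automated validation that precedes a deployment step like this can be as simple as a script that compares candidate model metrics against agreed limits. The sketch below is one possible shape for such a gate; the `evaluate_release_gate` function, the metric names, and the threshold values are hypothetical and would need to match whatever your pipeline actually exports.

```python
def evaluate_release_gate(metrics, thresholds):
    """Return a list of human-readable failures; an empty list means the gate passes."""
    failures = []
    for name, (comparison, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: metric missing")
        elif comparison == "min" and value < limit:
            failures.append(f"{name}: {value} is below minimum {limit}")
        elif comparison == "max" and value > limit:
            failures.append(f"{name}: {value} is above maximum {limit}")
    return failures


# Hypothetical limits agreed between data science and risk teams.
thresholds = {
    "auc": ("min", 0.75),
    "fairness_ratio": ("min", 0.80),
    "psi": ("max", 0.25),
}

passing = evaluate_release_gate({"auc": 0.81, "fairness_ratio": 0.92, "psi": 0.05}, thresholds)
failing = evaluate_release_gate({"auc": 0.81, "fairness_ratio": 0.70}, thresholds)

for failure in failing:
    print("GATE FAILURE:", failure)
```

In a pipeline, the script would exit with a non-zero status when the failure list is non-empty, which stops the deploy job from running.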

Conclusion: Building a Future of Trusted AI

As AI becomes more powerful and integrated into our lives, the need for robust safety and governance frameworks is paramount. DataRobot’s feature set reflects a clear industry trend: moving from theoretical AI ethics to practical, scalable, and automated solutions for managing AI risk. By providing integrated tools for fairness, explainability, governance, and LLM safety, platforms like DataRobot empower organizations to innovate responsibly.

The key takeaway is that AI safety cannot be an afterthought; it must be a core, continuous discipline woven into every stage of the AI lifecycle. By leveraging centralized platforms and adopting a culture of responsible development, organizations can unlock the immense potential of AI while building a future where these powerful systems are safe, fair, and worthy of our trust.