Bridging Worlds: Mastering PyTorch and Keras Interoperability in R with the Latest Updates

Introduction: Unifying the Deep Learning Landscape in R

Deep learning tooling moves quickly, and the major frameworks have settled into distinct niches. TensorFlow and Keras have long been mainstays for production-ready models, while PyTorch has won over researchers with its flexibility and intuitive design. For data scientists and developers working in the R ecosystem, being able to draw on the strengths of all these frameworks is a strategic advantage, not just a convenience. The challenge has always been interoperability: how do you integrate a state-of-the-art PyTorch model, perhaps one published through Hugging Face Transformers, into a robust Keras pipeline built in R?

This is where the Keras interface for R comes in. It acts as a bridge that lets R users build, train, and deploy complex deep learning models, and a recent update strengthens that bridge for PyTorch modules specifically. This article looks at a significant enhancement to the layer_torch_module_wrapper() function, a change that makes combining PyTorch and Keras workflows more reliable and predictable. We will explore the core concepts behind the feature, walk through practical code examples, discuss advanced applications, and outline best practices for building hybrid models from your R environment.

Section 1: The Core Challenge of Shape Inference in Hybrid Models

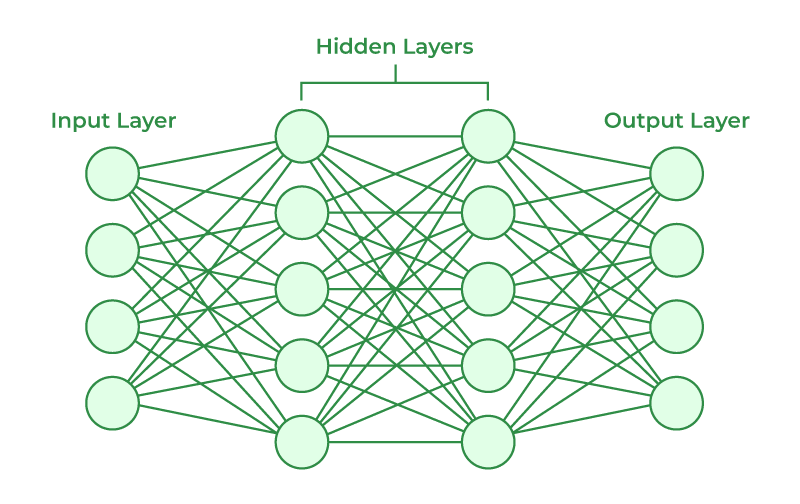

At the heart of any deep learning framework is the concept of a computational graph. Keras, particularly when using the TensorFlow backend, excels at building and optimizing static computation graphs. A key requirement for this process is knowing the shape (i.e., the dimensions) of the tensors that will flow between layers. When you stack standard Keras layers, the framework can automatically infer the output shape of one layer to determine the input shape of the next. For example, it knows that a layer_dense(units = 64) will output a tensor with a final dimension of 64.

However, this automatic inference breaks down when you introduce an external, “black box” component like a custom PyTorch module. When Keras encounters a wrapped PyTorch layer, it can’t simply look inside to understand its internal logic and predict its output shape. The framework would have to perform a forward pass with dummy data to guess the shape, a process that can be inefficient, unreliable, or sometimes impossible, especially with modules that have complex, data-dependent control flow.
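To see why an opaque layer is a problem, it can help to picture shape inference as a chain of per-layer shape rules. The base-R sketch below is a toy illustration only, not how Keras is implemented: dense_rule(), flatten_rule(), opaque_rule(), and propagate_shape() are hypothetical helpers introduced here, and NA stands in for the unknown batch dimension.

```r
# Toy model of shape inference: each layer is a function from an input shape
# to an output shape. NA stands in for the unknown batch dimension.
# All helper names here are hypothetical, for illustration only.

# A dense layer replaces the last dimension with its number of units.
dense_rule <- function(units) {
  function(shape) c(shape[-length(shape)], units)
}

# A flatten layer collapses every non-batch dimension into one.
flatten_rule <- function() {
  function(shape) c(shape[1], prod(shape[-1]))
}

# A wrapped "black box" module has no rule the framework can read,
# unless the developer declares its non-batch output shape.
opaque_rule <- function(declared = NULL) {
  function(shape) {
    if (is.null(declared)) return(NULL)
    c(shape[1], declared)
  }
}

# Push a shape through the rules in order; stop as soon as one is unknown.
propagate_shape <- function(input_shape, rules) {
  shape <- input_shape
  for (rule in rules) {
    shape <- rule(shape)
    if (is.null(shape)) return(NULL)
  }
  shape
}

known    <- propagate_shape(c(NA, 8, 4), list(flatten_rule(), dense_rule(64)))
unknown  <- propagate_shape(c(NA, 8, 4), list(opaque_rule(), dense_rule(64)))
declared <- propagate_shape(c(NA, 8, 4), list(opaque_rule(16), dense_rule(64)))

print(known)     # NA 64
print(unknown)   # NULL
print(declared)  # NA 64
```

The chain breaks at the first layer whose rule is unknown, and everything downstream inherits that uncertainty. Supplying the missing shape by hand restores the chain without ever running the module.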

Introducing layer_torch_module_wrapper()

The keras3 R package provides layer_torch_module_wrapper() precisely for this purpose: it takes a PyTorch nn.Module and encapsulates it so that it behaves like a standard Keras layer. Because the wrapped module runs on PyTorch, the wrapper is only available when Keras itself is running on the PyTorch backend. For R users this is a major feature, as it unlocks the large ecosystem of PyTorch models and custom components: you can define a complex attention mechanism in PyTorch and slot it directly into a keras_model_sequential() pipeline.

The original implementation relied heavily on this “best-effort” automatic shape inference. While functional for many simple cases, it created a point of fragility. A recent update addresses this head-on by introducing an output_shape argument. This simple but powerful addition allows the developer to explicitly tell Keras the exact shape of the tensor that the PyTorch module will return. This eliminates guesswork, makes model construction more robust, and prevents cryptic runtime errors related to shape mismatches.

A Simple PyTorch Module in R

To make this concrete, let’s build a small PyTorch module from R. layer_torch_module_wrapper() wraps a Python torch.nn.Module, so the module is created through reticulate rather than with nn_module() from the R torch package, whose modules are R objects and not Python nn.Module instances. The module below flattens its input, applies a linear transformation, and finishes with a ReLU.

    # Load necessary libraries
    library(keras3)
    library(reticulate)

    # The torch module wrapper only works with the PyTorch backend;
    # select it before Keras is first used
    use_backend("torch")

    # Ensure you have torch installed in your Python environment
    # reticulate::py_install("torch")

    # Import PyTorch's neural network building blocks from Python
    nn <- import("torch.nn")

    # Constructor for a simple PyTorch nn.Module: flatten, linear, relu
    SimpleTorchModule <- function(input_features, output_features) {
      nn$Sequential(
        # Flatten everything after the batch dimension (Python dims are 0-based)
        nn$Flatten(start_dim = 1L),
        # Layer sizes must reach Python as integers, not R doubles
        nn$Linear(as.integer(input_features), as.integer(output_features)),
        nn$ReLU()
      )
    }

In this code, SimpleTorchModule() is a small R constructor that returns a torch.nn.Sequential, which is itself an nn.Module. It chains a flatten step, a linear layer, and a ReLU activation. This is the component we want to integrate into a Keras model.

Section 2: Practical Implementation: From Ambiguity to Explicitness

Now, let’s see how the new output_shape argument transforms the integration process. We will build a simple Keras model that uses our custom PyTorch module, first illustrating the potential ambiguity of the old method and then demonstrating the clarity of the new approach.

The Challenge: Relying on Implicit Inference

Without the output_shape argument, Keras must try to infer the output shape of the wrapped PyTorch layer. For a model expecting a 2D input tensor of shape (batch_size, features), this might work. But what if our input is a 3D tensor, like (batch_size, timesteps, features)? Our PyTorch module flattens the last two dimensions. Keras needs to know this to correctly connect the subsequent layer.

Let’s instantiate our module. Suppose our input to the Keras model will be of shape (NULL, 10, 5), where 10 is the number of timesteps and 5 is the number of features. Our PyTorch module will flatten this to (NULL, 50) and then apply a linear layer to produce, say, 32 output features.

    # Instantiate the PyTorch module
    # It takes 10*5 = 50 input features and outputs 32
    torch_module_instance <- SimpleTorchModule(input_features = 50, output_features = 32)

    # Build the Keras model
    # Note: This relies on Keras correctly inferring the shape after the wrapper
    model_implicit <- keras_model_sequential(input_shape = c(10, 5)) %>%
      # Wrap the PyTorch module
      layer_torch_module_wrapper(module = torch_module_instance) %>%
      # Add a final Keras layer
      layer_dense(units = 1, activation = "sigmoid")

    # Let's try to get a summary
    # This might fail or produce warnings in certain complex cases
    summary(model_implicit)

In this case, Keras might successfully run a trace and work out that the output has shape (NULL, 32). In more complex scenarios involving dynamic operations or multiple outputs, however, this inference can fail and lead to frustrating debugging sessions. It is a common pain point in framework interoperability, one that also surfaces in ONNX conversion and in custom ops for TensorFlow.

The Solution: Explicit Shape Declaration with output_shape

The new output_shape argument provides a robust and explicit solution. We can now directly inform the Keras graph-building engine about the shape contract of our PyTorch layer. This makes the model definition more readable, deterministic, and less prone to errors.

Let’s rebuild the same model, but this time using the new argument. We know our module will output a tensor of shape (NULL, 32), so we specify the non-batch dimension, which is c(32).

    # Instantiate the PyTorch module again
    torch_module_instance <- SimpleTorchModule(input_features = 50, output_features = 32)

    # Build the Keras model with the explicit output_shape
    model_explicit <- keras_model_sequential(input_shape = c(10, 5)) %>%
      # Wrap the PyTorch module, providing the output shape
      layer_torch_module_wrapper(
        module = torch_module_instance,
        output_shape = c(32)
      ) %>%
      # Add a final Keras layer
      layer_dense(units = 1, activation = "sigmoid")

    # The summary now reflects the declared shape instead of relying on inference
    print("Model Summary with Explicit Shape:")
    summary(model_explicit)

    # We can now compile and train this model like any other Keras model
    model_explicit %>% compile(
      optimizer = "adam",
      loss = "binary_crossentropy",
      metrics = "accuracy"
    )

By providing output_shape = c(32), we have removed the ambiguity. Keras immediately knows that the tensor emerging from our wrapped layer will have 32 features, which lets it correctly initialize the subsequent layer_dense. The declaration is only as good as the value you supply, so it must match what the module actually returns. This small change is a real quality-of-life improvement, making hybrid model development feel much closer to a pure Keras workflow.

Section 3: Advanced Techniques and Real-World Applications

The true power of this feature is realized when dealing with more complex, real-world modules. Many cutting-edge architectures, including those released by labs such as Google DeepMind or Meta AI, are first implemented in PyTorch. The ability to wrap them reliably from R opens up new options for R users.

Wrapping a Module with Multiple Inputs/Outputs

Consider a PyTorch module that implements a custom attention mechanism. It might take multiple inputs (query, key, value) and produce multiple outputs (the attended features and the attention weights). While the wrapper’s support for multiple I/O is a deeper topic, specifying the output_shape becomes absolutely critical. If the output is a list of tensors, output_shape would be a list of shape tuples, providing Keras with a complete structural map of the layer’s output.

Integrating Pre-Trained Models from the Python Ecosystem

Perhaps the most compelling use case is leveraging the vast repository of pre-trained models available in the Python ecosystem, especially through libraries like Hugging Face Transformers. While the keras package offers many native models, you might find a niche, state-of-the-art model on Hugging Face Hub that only has a PyTorch implementation. Using reticulate, you can load this model and wrap it.

Let’s imagine we want to use a specific block from a pre-trained PyTorch vision transformer (ViT). We can load the model, extract the specific encoder block, and wrap it as a Keras layer. This allows us to use it for feature extraction within a larger Keras-based image classification pipeline.

    # This is a conceptual example. Requires `timm` library in Python.
    # reticulate::py_install("timm")
    library(keras3)
    library(reticulate)

    # The torch module wrapper requires the PyTorch backend
    use_backend("torch")

    # Import the Python library
    timm <- import("timm")

    # Load a pre-trained Vision Transformer model in PyTorch
    vit_model <- timm$create_model("vit_base_patch16_224", pretrained = TRUE)

    # Extract a single component, for example, the first encoder block
    # (Python containers are 0-based, and the index must be an integer)
    first_encoder_block <- py_get_item(vit_model$blocks, 0L)

    # Define the input and output shape for this block
    # For ViT base, the patch embedding dimension is 768
    input_shape <- c(197, 768) # (num_patches + class_token, embedding_dim)
    output_shape <- c(197, 768) # Encoder blocks preserve shape

    # Now, we can build a Keras model using this block
    feature_extractor <- keras_model_sequential(input_shape = input_shape) %>%
      layer_torch_module_wrapper(
        module = first_encoder_block,
        output_shape = output_shape
      ) %>%
      layer_global_average_pooling_1d() %>%
      layer_dense(units = 10, activation = "softmax") # For a 10-class problem

    print("ViT Feature Extractor Summary:")
    summary(feature_extractor)

In this advanced scenario, manually specifying output_shape is not just a best practice; it is effectively a necessity. Keras has no way of knowing the internal architecture of the timm ViT block. By providing the shape explicitly, we can combine powerful pre-trained PyTorch components with the familiar Keras API for fine-tuning and deployment. This opens the door to using the latest models from industry labs or research papers directly in R.
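The 197 in the input shape above follows from the model name: a 224×224 image cut into 16×16 patches gives 14 × 14 = 196 patch tokens, plus one class token. The small base-R helper below makes that arithmetic explicit. vit_token_shape() is a hypothetical name introduced here, and the sketch assumes a square image, square patches, and a single class token, matching the comments in the example above.

```r
# Hypothetical helper: token-sequence shape (non-batch) for a ViT-style model.
# Assumes a square image, square non-overlapping patches, and one class token.
vit_token_shape <- function(image_size = 224, patch_size = 16,
                            embed_dim = 768, class_token = TRUE) {
  # The image must divide evenly into patches
  stopifnot(image_size %% patch_size == 0)

  patches_per_side <- image_size / patch_size   # 224 / 16 = 14
  n_patches <- patches_per_side^2               # 14 * 14 = 196
  n_tokens <- n_patches + as.integer(class_token)

  c(n_tokens, embed_dim)
}

base_shape <- vit_token_shape()
print(base_shape)  # 197 768
```

Deriving the shape this way, instead of hard-coding it, makes it harder for the declared output_shape to drift out of sync if you later switch to a variant with a different patch size or embedding width.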

Section 4: Best Practices, Optimization, and Ecosystem Context

To make the most of this powerful interoperability feature, it’s important to follow some best practices and understand the broader context of model deployment and optimization.

Best Practices for Wrapping Modules

- Always Specify output_shape: Even if you think Keras can infer the shape, explicitly defining it makes your code more readable, robust, and future-proof. It serves as documentation for the next developer (or your future self).

- Manage Devices Carefully: When you mix PyTorch modules into a Keras model, be mindful of the device (CPU/GPU) on which tensors reside. Ensure that the wrapped module and the data flowing through it are on the same device to avoid performance bottlenecks or errors.

- Keep Wrapped Components Cohesive: It’s generally better to wrap a single, cohesive PyTorch module rather than many tiny ones. Each wrapper adds a layer of indirection and another shape contract to keep correct.

- Thoroughly Test Shapes: Before building a large model, instantiate your PyTorch module and pass a sample tensor through it to manually verify its output shape. This confirms that the shape you provide to the Keras wrapper is correct.
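The last check in the list can be made mechanical. The sketch below assumes you have already run a sample batch through the module and read off the dimensions of the result. check_declared_shape() is a hypothetical base-R helper, and it follows this article's convention that output_shape lists only the non-batch dimensions.

```r
# Hypothetical helper: compare the dimensions observed on a sample forward
# pass against the shape you plan to declare to the wrapper.
# observed_dims includes the batch dimension first; declared_shape does not.
check_declared_shape <- function(observed_dims, declared_shape) {
  stopifnot(length(observed_dims) >= 1)
  non_batch <- observed_dims[-1]
  length(non_batch) == length(declared_shape) &&
    all(as.integer(non_batch) == as.integer(declared_shape))
}

# A batch of 4 samples through the flatten + linear module has dims (4, 32),
# which agrees with a declared shape of c(32).
ok <- check_declared_shape(observed_dims = c(4, 32), declared_shape = c(32))

# Forgetting that the module flattens its input is caught immediately.
bad <- check_declared_shape(observed_dims = c(4, 10, 5), declared_shape = c(50))

print(ok)   # TRUE
print(bad)  # FALSE
```

Running a check like this once, before the model is assembled, is cheaper than tracing a shape mismatch back through a finished pipeline.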

Performance and Deployment Considerations

While wrapping provides a great deal of flexibility during development, it’s essential to consider the deployment pipeline. The overhead of driving a Python-hosted PyTorch runtime from R through Keras might be acceptable for research but could be a concern for high-performance inference. For production, consider these alternatives:

- Model Conversion with ONNX: The Open Neural Network Exchange (ONNX) format is an industry standard for model interoperability. You can export your PyTorch model to ONNX and then import it into a TensorFlow/Keras environment or serve it with an ONNX-compatible runtime. This gives you a single, framework-agnostic model artifact, which makes ONNX worth following for anyone working in a multi-framework environment.

- Inference Optimization: Once your model is finalized, use tools like NVIDIA TensorRT or Intel’s OpenVINO for optimization. These tools take a saved model (often in ONNX or TensorFlow’s SavedModel format) and compile it for specific hardware, dramatically improving inference speed.

- MLOps Platforms: Integrating such hybrid models into a production workflow requires robust MLOps tools. Platforms like MLflow or Weights & Biases can track experiments involving these mixed models, while deployment platforms like AWS SageMaker, Google’s Vertex AI, or Azure Machine Learning can serve them.

Conclusion: A More Connected Future for R and AI

The addition of the output_shape argument to layer_torch_module_wrapper() is more than a minor API update; it’s a significant step towards a more unified and powerful deep learning ecosystem for R users. By removing the ambiguity of shape inference, this feature makes building hybrid Keras/PyTorch models more reliable, predictable, and developer-friendly. It lets R developers reach into the PyTorch ecosystem with confidence, pick the exact component they need, whether a custom layer or a state-of-the-art model from Hugging Face, and integrate it into their familiar Keras workflows.

As the AI landscape continues to evolve with rapid advancements from industry leaders and the open-source community, the importance of such interoperability cannot be overstated. This update ensures that the R community remains a first-class citizen in the world of deep learning, equipped with the flexible tools needed to build the next generation of intelligent applications. The next step for developers is to explore this feature, experiment with wrapping novel PyTorch architectures, and push the boundaries of what’s possible at the intersection of R, Keras, and PyTorch.