Orchestrating High-Compute Workloads: How Modal is Redefining AI Agent Capabilities

Introduction

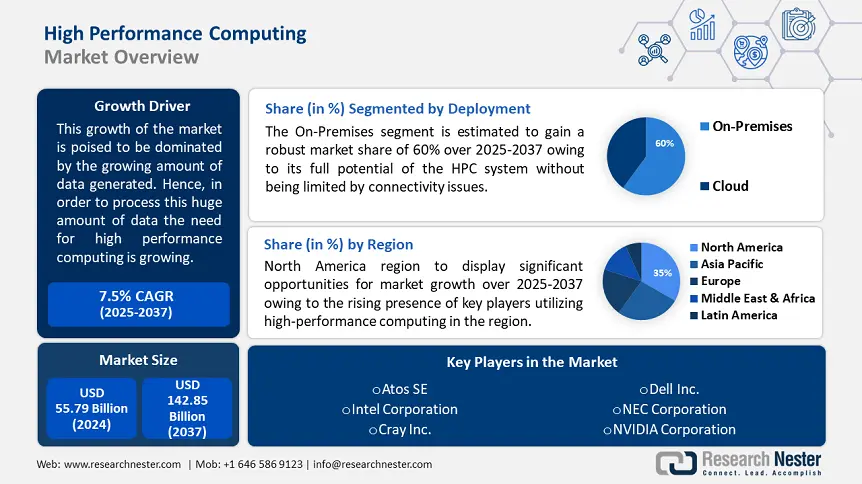

The landscape of artificial intelligence is shifting. We are moving rapidly from an era of passive chatbots to an era of active agents: systems capable not just of generating text, but of executing complex tasks, analyzing massive datasets, and performing scientific computations. However, a significant bottleneck remains: the compute environment. While Large Language Models (LLMs) hosted by providers like Anthropic or OpenAI are powerful reasoning engines, they cannot natively run heavy scientific simulations or train neural networks within the chat interface. This is where Modal becomes relevant for developers and data scientists alike.

The integration of serverless infrastructure with AI agents represents the next frontier in software architecture. By decoupling the reasoning capabilities of an LLM from the execution environment, developers can now empower agents to spin up hundreds of CPUs or GPUs on demand. This paradigm allows a “brain” (like Claude or GPT-4) to offload “muscle” work to the cloud within seconds. In this guide, we will explore how Modal can serve as the backend for these high-compute workloads, scaling scientific skills and data processing on demand. We will cover the technical architecture, practical implementations, and the broader ecosystem, including PyTorch, TensorFlow, and emerging agentic frameworks.

Section 1: The Serverless Compute Paradigm for AI

Traditionally, running high-performance computing (HPC) workloads required provisioning virtual machines on platforms like AWS or Google Cloud, configuring Docker containers, and managing Kubernetes clusters. For an AI agent trying to execute a task autonomously, this overhead is prohibitive. The agent needs an environment that is ephemeral, fast to start, and able to scale on demand. This is Modal's core value proposition.

Understanding the Architecture

Modal allows developers to define infrastructure as code directly within their Python scripts. There is no separate YAML configuration to maintain; you simply decorate a function, and Modal handles the containerization, deployment, and scaling. Platforms such as AWS SageMaker or Vertex AI focus largely on enterprise pipelines. Modal, by contrast, focuses on granular, function-level execution, which is ideal for agentic workflows.

This architecture is particularly useful when integrating with agent frameworks such as LangChain or LlamaIndex. An agent can determine that it needs to run a complex Monte Carlo simulation, generate a Python script, and execute it on a remote machine with 64GB of RAM, all within seconds. This capability bridges the gap between local prototyping and cloud-scale production.
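To make the "brain offloads muscle work" idea concrete, here is a minimal sketch of a tool dispatcher. It assumes the LLM emits a JSON tool call with `name` and `arguments` fields; the `SKILLS` registry, the `dispatch` helper, and the `monte_carlo_pi` skill are hypothetical names chosen for illustration. The skill runs locally here so the sketch stays self-contained; with Modal, the registry entry would instead point at a remote function call.

```python
import json
import random
from typing import Callable, Dict


def monte_carlo_pi(samples: int, seed: int = 0) -> float:
    """Estimate pi by sampling points in the unit square."""
    rng = random.Random(seed)
    inside = sum(
        1 for _ in range(samples)
        if rng.random() ** 2 + rng.random() ** 2 <= 1.0
    )
    return 4.0 * inside / samples


# Hypothetical registry: skill name -> callable.
# In a Modal setup the value would be a remote call on a decorated
# function, so the work executes in a cloud container instead of locally.
SKILLS: Dict[str, Callable[..., float]] = {
    "monte_carlo_pi": monte_carlo_pi,
}


def dispatch(tool_call_json: str) -> float:
    """Run the skill named in an LLM-produced tool call."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in SKILLS:
        raise KeyError(f"Unknown skill: {name}")
    return SKILLS[name](**call.get("arguments", {}))


if __name__ == "__main__":
    request = '{"name": "monte_carlo_pi", "arguments": {"samples": 200000}}'
    print(dispatch(request))
```

Keeping the registry explicit means the agent can only invoke skills you have deliberately exposed, which matters once those skills can provision real hardware.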

Basic Implementation: The Remote Function

Let’s look at how simple it is to turn a local function into a remote, scalable workload. This is the foundational building block for giving AI agents “skills.”

```python
import modal

# Define the container image with the necessary dependencies.
# This is where you would add further libraries such as SciPy.
image = modal.Image.debian_slim().pip_install("numpy", "pandas")

app = modal.App("scientific-skills-demo")


@app.function(image=image)
def heavy_computation(data_size):
    # Imports live inside the function so they resolve in the container
    import numpy as np
    import pandas as pd

    print(f"Generating a {data_size} x 100 random matrix...")

    # Simulate a heavy workload
    data = np.random.rand(data_size, 100)
    df = pd.DataFrame(data)

    # Aggregate: mean of the per-column means
    result = df.mean().mean()
    return float(result)


@app.local_entrypoint()
def main():
    # This runs locally, but triggers the function in the cloud
    print("Starting remote execution...")
    result = heavy_computation.remote(1000000)
    print(f"Remote calculation result: {result}")
```

In the example above, the `heavy_computation` function never runs on your local laptop. The `modal` client serializes the function, pushes it to the cloud, spins up a container with NumPy and Pandas installed, executes the code, and returns the result. This seamless transition is what makes Modal attractive to developers building tools for agents based on Anthropic or OpenAI models.

Section 2: GPU Acceleration and Deep Learning Integration

While CPU tasks are useful, the heart of modern AI lies in GPU acceleration. Whether you are waiting on NVIDIA's latest H100 chips or trying out the newest open-source models on Hugging Face, access to GPUs is often a bottleneck. Agents equipped with scientific skills often need to run inference on self-hosted models or fine-tune networks dynamically.

Seamless GPU Provisioning

One of the most powerful features of Modal is the ability to request specific GPU hardware with a single line of code. This is significantly easier than managing CUDA drivers manually. For an AI agent, this means it can decide to “upgrade” its hardware for a specific task. If an agent needs to generate an image or transcribe audio using Whisper, it can provision a GPU for the duration of that task and shut it down immediately afterwards, which keeps costs down, a recurring concern in FinOps discussions.
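One way an agent might decide which hardware to request is sketched below. It estimates the memory needed for a model's float16 weights and picks the smallest GPU that fits. The `pick_gpu` helper, the 20% headroom factor, and the candidate list are illustrative assumptions, not Modal recommendations; the memory figures are nominal values for each GPU type, and the estimate ignores activations and caches.

```python
from typing import Optional

# Nominal memory in GB per GPU type, ordered smallest first.
# The list and its ordering are assumptions made for this sketch.
GPU_MEMORY_GB = [("T4", 16), ("A10G", 24), ("A100", 40), ("H100", 80)]


def estimate_weights_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Memory for the weights alone (2 bytes per parameter for float16)."""
    return num_params * bytes_per_param / 1e9


def pick_gpu(num_params: float, headroom: float = 1.2) -> Optional[str]:
    """Return the smallest listed GPU that fits the model, or None."""
    needed = estimate_weights_gb(num_params) * headroom
    for name, mem_gb in GPU_MEMORY_GB:
        if needed <= mem_gb:
            return name
    return None


if __name__ == "__main__":
    # Roughly 82 million parameters, about the size of distilgpt2
    print(pick_gpu(82e6))
    # A 7-billion-parameter model in float16
    print(pick_gpu(7e9))
```

The returned label could then be passed as the `gpu` argument when defining the function, assuming the label matches a GPU type the platform currently offers.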

Below is an example of running a PyTorch model on a GPU. This setup is relevant to anyone using PyTorch or DeepSpeed to optimize inference.

```python
import modal

# Define an image with PyTorch and CUDA support.
# We also install Hugging Face transformers and accelerate.
image = (
    modal.Image.debian_slim()
    .pip_install("torch", "transformers", "accelerate")
)

app = modal.App("gpu-inference-skill")


# Request an A10G GPU for this function
@app.function(image=image, gpu="A10G")
def run_inference(prompt: str):
    import torch
    from transformers import pipeline

    print("Loading model onto GPU...")

    # Using a lightweight model for demonstration
    generator = pipeline(
        "text-generation",
        model="distilgpt2",
        device=0,  # first CUDA device in the container
        torch_dtype=torch.float16,
    )

    output = generator(prompt, max_length=50, num_return_sequences=1)
    return output[0]["generated_text"]


@app.local_entrypoint()
def main():
    prompt = "The future of serverless AI is"
    print(f"Prompt: {prompt}")
    response = run_inference.remote(prompt)
    print(f"Generated: {response}")
```

This code snippet demonstrates how an agent could use a “creative writing skill” backed by a private model running on Modal. The same approach applies to image generation models, such as those from Stability AI, which can be hosted ephemerally.

Section 3: Advanced Techniques – Orchestrating Distributed Systems

The true power of integrating Modal with scientific skills lies in parallelism. Frameworks such as Ray and Dask are built around distributed computing. Modal simplifies this by allowing you to map a function over a list of inputs, automatically scaling the number of containers to match the workload. This is crucial for tasks like hyperparameter tuning (for example with Optuna) or processing massive datasets for RAG (Retrieval-Augmented Generation).

Building a Map-Reduce Style Skill

Imagine an AI agent tasked with analyzing sentiment across 10,000 documents. Doing this sequentially is too slow, and doing it locally may require too much RAM. By calling `.map()` on a Modal function, the agent can process hundreds of documents in parallel. This kind of scalability is comparable to what Apache Spark MLlib offers, but with a much lower barrier to entry.

```python
import modal

image = modal.Image.debian_slim().pip_install("nltk")

app = modal.App("distributed-analysis")


@app.function(image=image)
def analyze_sentiment(text):
    import nltk
    from nltk.sentiment import SentimentIntensityAnalyzer

    # Ensure the VADER lexicon is available in the container
    try:
        nltk.data.find("sentiment/vader_lexicon.zip")
    except LookupError:
        nltk.download("vader_lexicon", quiet=True)

    sia = SentimentIntensityAnalyzer()
    score = sia.polarity_scores(text)
    return score["compound"]


@app.local_entrypoint()
def main():
    # Simulate a large dataset
    texts = [
        "Modal is incredibly fast and efficient.",
        "Waiting for servers to provision is frustrating.",
        "AI agents are the future of work.",
        "Serverless GPUs make deep learning accessible.",
    ] * 25  # Scale up to 100 items for demonstration

    print("Distributing work across containers...")

    # .map() runs the function in parallel across multiple containers
    results = list(analyze_sentiment.map(texts))

    average_sentiment = sum(results) / len(results)
    print(f"Processed {len(results)} texts.")
    print(f"Average Sentiment Score: {average_sentiment:.4f}")
```

In this scenario, Modal might spin up 20, 50, or even 100 containers simultaneously to process the list, depending on the concurrency limits. This rapid scaling is what makes the “Scientific Skills” concept feasible for real-time interaction with LLMs.
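When inputs are small, as in the sentiment example, it can help to group them into batches so that each remote call does a meaningful amount of work. The sketch below shows the map-reduce shape in plain Python: each batch returns a partial `(sum, count)` pair and a reduce step merges them into a global mean. The helper names are hypothetical, text length is a placeholder for a real sentiment score, and the built-in `map()` stands in for a remote `.map()` call.

```python
from typing import Iterable, Iterator, List, Tuple


def chunked(items: List[str], size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of `items` with at most `size` elements."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield items[start:start + size]


def score_batch(batch: List[str]) -> Tuple[float, int]:
    """Map step: return (sum of scores, count) for one batch.

    Text length is a placeholder score standing in for a real model.
    """
    return float(sum(len(text) for text in batch)), len(batch)


def combine(partials: Iterable[Tuple[float, int]]) -> float:
    """Reduce step: merge partial sums into a global mean."""
    total, count = 0.0, 0
    for part_sum, part_count in partials:
        total += part_sum
        count += part_count
    return total / count if count else 0.0


if __name__ == "__main__":
    texts = ["good", "bad!", "fine"] * 10
    # Built-in map() is a local stand-in for a remote .map() call
    partials = map(score_batch, chunked(texts, 8))
    print(combine(partials))
```

Returning sums and counts rather than per-batch averages keeps the final mean correct even when the last batch is smaller than the others.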

Integration with Vector Databases and RAG

Modern AI applications rely heavily on vector databases. Whether you use Pinecone, Weaviate, Milvus, or Qdrant, the challenge is often the ingestion pipeline: embedding thousands of documents. Modal functions can generate embeddings using libraries like Sentence Transformers and push them to these databases in parallel. This creates a robust ingestion pipeline for LlamaIndex workflows.

Section 4: Best Practices and Ecosystem Integration

Implementing high-compute workloads via Modal requires adherence to best practices to ensure security, cost-efficiency, and reliability. As we combine these tools with the broader ecosystem, such as research code from Google DeepMind or open-source releases from Meta AI, architectural discipline becomes paramount.

1. Environment Management and Containerization

Unlike Google Colab or Kaggle, where the environment is interactive but largely predefined, Modal environments are defined by code. It is best practice to pin the versions of your libraries. If you are using JAX or Flax, ensure your CUDA versions match the underlying hardware provided by Modal. Custom Docker images are also supported, which allows for compatibility with OpenVINO or ONNX runtimes for optimized inference.
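A simple guard for the pinning advice is to check the requirement list before it is handed to the image definition. The following is a minimal sketch: the `unpinned` helper and its regular expression are assumptions for illustration and cover only the common `name==version` form (with optional extras), not the full requirement specifier syntax.

```python
import re
from typing import List

# Matches "name==version", optionally with extras such as "jax[cuda12]==0.4.30"
_PINNED = re.compile(
    r"^[A-Za-z0-9._-]+(\[[A-Za-z0-9._,-]+\])?==[A-Za-z0-9._+!-]+$"
)


def unpinned(requirements: List[str]) -> List[str]:
    """Return the requirement strings that lack an exact '==' pin."""
    return [req for req in requirements if not _PINNED.match(req.strip())]


if __name__ == "__main__":
    reqs = ["numpy==1.26.4", "pandas", "torch>=2.0", "jax[cuda12]==0.4.30"]
    loose = unpinned(reqs)
    if loose:
        print(f"Pin these before building the image: {loose}")
```

Running a check like this in CI catches a loose requirement before it silently changes the container between deployments.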

2. Handling State and Storage

Serverless functions are stateless, but scientific workloads often require state. For this, Modal provides `Volume` and `NetworkFileSystem`. If you are training a model (for example with fast.ai, or while tracking runs in Weights & Biases), you should mount a volume to save checkpoints. This prevents data loss if a spot instance is preempted.

```python
import modal

vol = modal.Volume.from_name("model-checkpoints", create_if_missing=True)

app = modal.App("training-job")


@app.function(volumes={"/data": vol})
def train_step(epoch_data):
    # ... training logic ...

    # Save checkpoint to the persistent volume
    with open("/data/checkpoint.pt", "w") as f:
        f.write("model_weights_data")

    vol.commit()  # Ensure data is persisted
```

3. Observability and Monitoring

When an agent triggers a remote job, you need to know whether it succeeded, so integrating with observability tools is crucial. While Modal has built-in logs, enterprise applications might stream these logs to platforms such as Datadog or Splunk. For AI-specific monitoring, integration with LangSmith or MLflow allows you to track the inputs and outputs of the agent’s tool usage, ensuring the “scientific skill” is performing as expected.
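Independently of which platform receives the logs, the inputs, outputs, and duration of each tool call can be captured at the call site. Below is a minimal sketch using only the standard library; the `traced` decorator and the `agent.tools` logger name are hypothetical, and the `add` function is a stand-in for a real remote skill.

```python
import functools
import logging
import time
from typing import Any, Callable

logger = logging.getLogger("agent.tools")


def traced(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Log arguments, outcome, and duration of each tool call."""

    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        try:
            result = fn(*args, **kwargs)
        except Exception:
            elapsed = time.perf_counter() - start
            logger.exception(
                "tool=%s status=error elapsed=%.3fs args=%r kwargs=%r",
                fn.__name__, elapsed, args, kwargs,
            )
            raise
        elapsed = time.perf_counter() - start
        logger.info(
            "tool=%s status=ok elapsed=%.3fs args=%r kwargs=%r result=%r",
            fn.__name__, elapsed, args, kwargs, result,
        )
        return result

    return wrapper


@traced
def add(a: int, b: int) -> int:
    # Hypothetical stand-in for a remote skill call
    return a + b


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    print(add(2, 3))
```

Because the output goes through the standard `logging` module, any handler that forwards records to an external platform can be attached without touching the skills themselves.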

4. Security and Secrets

Never hardcode API keys. Whether you are accessing Cohere APIs, Mistral AI endpoints, or Replicate services, use Modal’s `Secret` management. This injects environment variables securely into the container at runtime.
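Inside the container, an injected secret is read like any other environment variable. A minimal sketch of a fail-fast lookup follows; the `require_env` helper, the `MissingSecretError` class, and the `DEMO_API_KEY` variable name are hypothetical. On the Modal side, the secret would be attached to the function, for example through `modal.Secret.from_name(...)` passed in the decorator's `secrets` list.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is absent from the environment."""


def require_env(name: str) -> str:
    """Return the value of an environment variable or fail with a clear error."""
    value = os.environ.get(name, "").strip()
    if not value:
        raise MissingSecretError(f"Environment variable {name} is not set")
    return value


if __name__ == "__main__":
    # Set here only so the demo runs; in production the platform injects it
    os.environ.setdefault("DEMO_API_KEY", "example-value")
    api_key = require_env("DEMO_API_KEY")
    print(f"Loaded a key of length {len(api_key)}")
```

Failing at startup with the variable name in the message is usually easier to debug than an authentication error from a downstream API several calls later.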

The Broader AI Ecosystem

The rise of Modal as a backend for AI agents connects various disparate parts of the machine learning landscape. By providing a unified compute layer, it acts as the glue between:

- Model Registries: Fetching models from Hugging Face or W&B.

- Serving Frameworks: Hosting vLLM- or Ollama-compatible endpoints.

- Frontend Interfaces: Powering backends for Streamlit, Gradio, or Chainlit applications.

- AutoML: Running parallel trials for AutoML frameworks.

Even users of enterprise platforms like IBM Watson, Amazon Bedrock, or Snowflake Cortex can find value in offloading specific, custom Python workloads to Modal because of its flexibility and startup speed.

Conclusion

The integration of high-compute capabilities into AI agents marks a turning point in how we interact with artificial intelligence. We are moving away from simple text generation toward comprehensive problem solving. By leveraging platforms like Modal, developers can give their agents “Scientific Skills”: the ability to crunch numbers, run simulations, and process vast datasets on hundreds of CPUs or GPUs on demand.

As Modal continues to evolve, the barrier to entry for large-scale computing keeps lowering. Whether you are a researcher implementing the latest papers from Google DeepMind, or an engineer building the next generation of autonomous agents using LangChain, the ability to scale compute programmatically is your most powerful tool. The code examples provided here serve as a starting point. The next step is to build your own “skills” and empower your agents to leave the chat window and start working in the real world.