Simplifying Enterprise AI: A Deep Dive into Amazon Bedrock’s New AgentCore Gateway

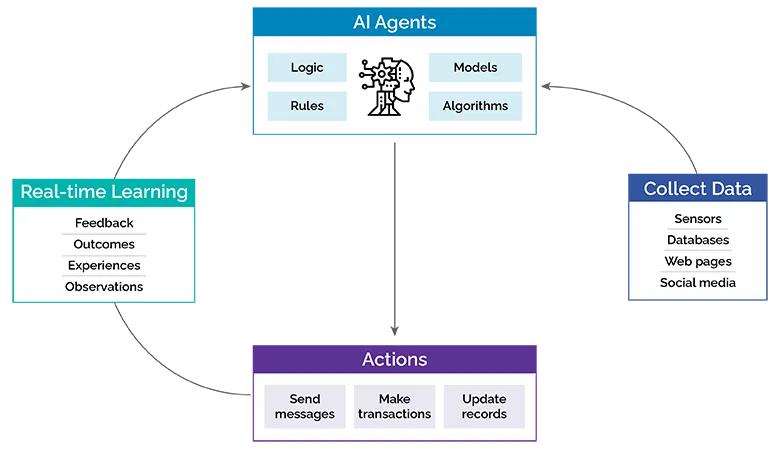

The rapid evolution of generative AI has ushered in a new era of application development, with autonomous agents at the forefront. These agents, powered by large language models (LLMs), promise to automate complex tasks, streamline workflows, and create more intuitive user experiences. However, building and deploying enterprise-grade AI agents is fraught with challenges. Developers grapple with complex tool integrations, brittle API connections, inconsistent security models, and a lack of centralized management. As the number of tools and agents grows, this complexity can quickly become unmanageable, stifling innovation and increasing operational overhead. This is a common pain point for teams building on Amazon Bedrock and across the wider AI community.

Addressing this critical gap, Amazon Web Services (AWS) has introduced a transformative new service: the Amazon Bedrock AgentCore Gateway. This managed service acts as a centralized, secure, and scalable bridge between Bedrock Agents and their underlying tools, such as APIs, databases, and AWS Lambda functions. By decoupling the agent’s logic from the implementation details of its tools, the AgentCore Gateway simplifies development, enhances security, and provides a robust framework for managing agent capabilities at scale. This article provides a comprehensive technical deep dive into this groundbreaking service, exploring its core concepts, implementation details, advanced features, and best practices for building next-generation enterprise AI agents.

Understanding the Core Concepts of the AgentCore Gateway

At its heart, the AgentCore Gateway is an abstraction layer. Instead of an agent invoking a tool’s API endpoint directly, it makes a request to the gateway, which then securely handles the invocation, authentication, and response handling. This seemingly simple change has profound implications for agent architecture. It shifts the burden of managing tool connectivity, credentials, and access policies from the individual agent’s business logic to a dedicated, managed infrastructure component. This aligns with modern microservices principles, in which cross-cutting concerns such as authentication and routing live in shared infrastructure rather than in each service’s code.

Key Components and Architecture

The AgentCore Gateway is built around a few key concepts:

- Tool Registry: A centralized catalog where you define and register all the tools (APIs) available to your agents. Each tool is defined using an OpenAPI specification, which provides a structured schema that the foundation model uses to understand the tool’s functionality, inputs, and outputs.

- Managed Authentication: The gateway integrates seamlessly with AWS Secrets Manager and IAM to securely store and manage API keys, OAuth tokens, and other credentials. The agent itself never needs to handle sensitive credentials directly.

- Invocation Policies: Fine-grained IAM policies control which agents or roles are permitted to invoke specific tools or even specific paths within a tool’s API. This provides a robust security posture essential for enterprise environments.

- Centralized Logging and Monitoring: All tool invocations are logged to Amazon CloudWatch, and tracing can be enabled with AWS X-Ray. This provides a single pane of glass for monitoring agent performance, debugging errors, and auditing tool usage, a capability often sought after by users of tools like LangSmith and MLflow.
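To make the managed-authentication idea more concrete, the following is a minimal sketch of the kind of work the gateway takes off the agent: looking up a credential and attaching it to an outbound request. It is not the gateway’s implementation. `get_secret_value` is the Secrets Manager operation exposed by boto3; the helper name, the `api_key` field inside the secret, the `x-api-key` header, and the fake client (used so the sketch runs without AWS access) are all assumptions for illustration.

```python
import json


def build_authenticated_headers(secrets_client, secret_id, header_name="x-api-key"):
    """Fetch an API key from Secrets Manager and return request headers.

    `secrets_client` is expected to behave like boto3.client("secretsmanager").
    The JSON field "api_key" and the header name are assumptions.
    """
    secret = secrets_client.get_secret_value(SecretId=secret_id)
    payload = json.loads(secret["SecretString"])
    return {header_name: payload["api_key"], "Accept": "application/json"}


class FakeSecretsClient:
    """Stand-in for the real client so the sketch runs without AWS access."""

    def get_secret_value(self, SecretId):
        return {"SecretString": json.dumps({"api_key": "demo-key-123"})}


headers = build_authenticated_headers(FakeSecretsClient(), "my-inventory-api-key")
print(headers)
```

The point of the pattern is that the credential lookup happens in one place, outside the agent, so rotating the secret does not require touching any agent code.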

To register a new tool, you first define its contract using an OpenAPI 3.0 schema. This schema is crucial, as it’s what the agent’s underlying LLM, whether an Anthropic model such as Claude 3 or a Meta model such as Llama 3, will use for reasoning and function calling.

Here is an illustrative sketch of how registering a new “InventoryCheck” tool with the gateway might look using the boto3 SDK in Python. The `create_agent_tool` call and its `toolSpecification` and `gatewayConfiguration` parameters are hypothetical names used for this walkthrough; consult the current boto3 reference for the real operation names and request shape:

```python
import boto3
import json

# Initialize the Bedrock Agent client
bedrock_agent_client = boto3.client('bedrock-agent')

# Define the OpenAPI schema for the inventory checking tool
openapi_schema = {
    "openapi": "3.0.0",
    "info": {
        "title": "Inventory Management API",
        "version": "1.0.0",
        "description": "API for checking product inventory levels."
    },
    "paths": {
        "/inventory/{productId}": {
            "get": {
                "summary": "Get stock level for a specific product",
                "parameters": [
                    {
                        "name": "productId",
                        "in": "path",
                        "required": True,
                        "schema": {
                            "type": "string"
                        }
                    }
                ],
                "responses": {
                    "200": {
                        "description": "Successful response with stock count",
                        "content": {
                            "application/json": {
                                "schema": {
                                    "type": "object",
                                    "properties": {
                                        "productId": {"type": "string"},
                                        "stockCount": {"type": "integer"}
                                    }
                                }
                            }
                        }
                    }
                }
            }
        }
    }
}

# Base URL of our internal inventory service; the schema paths are appended to it
api_endpoint_url = "https://api.internal.mycompany.com/v1"

# Register the tool with the AgentCore Gateway.
# NOTE: create_agent_tool, toolSpecification, and gatewayConfiguration are
# hypothetical names for this walkthrough, not confirmed boto3 operations.
response = bedrock_agent_client.create_agent_tool(
    agentId='YOUR_AGENT_ID',
    agentVersion='DRAFT',
    toolName='InventoryCheckTool',
    description='A tool to check the current stock level of a product.',
    toolSpecification={
        'openApiSchema': {
            # Passed inline for this example (hypothetical parameter);
            # in a real scenario, you'd upload the schema to S3
            'payload': json.dumps(openapi_schema)
        }
    },
    # This is where the AgentCore Gateway configuration would go
    gatewayConfiguration={
        'endpoint': api_endpoint_url,
        'authenticationType': 'AWS_SECRETS_MANAGER',
        'secretArn': 'arn:aws:secretsmanager:us-east-1:123456789012:secret:my-inventory-api-key-AbCdEf'
    }
)

print(f"Tool 'InventoryCheckTool' created with ID: {response['agentTool']['toolId']}")
```

Implementing an Agent with the Gateway

Once your tools are registered in the AgentCore Gateway, building an agent that uses them becomes remarkably simple. The agent’s configuration no longer needs to contain complex logic for API calls, authentication, or error handling for each tool. Instead, you simply associate the agent with the registered tools from the gateway.

Configuring the Bedrock Agent

When you create or update a Bedrock Agent, you can now specify the tools by referencing their registered names in the gateway. The agent’s underlying orchestration logic automatically understands how to route tool invocation requests through the gateway. This is a significant improvement over traditional methods where developers often rely on frameworks like LangChain or LlamaIndex to manually wire up tools.

Let’s imagine we have a simple internal order management service built with FastAPI. This service exposes endpoints for checking inventory and placing orders.

```python
from fastapi import FastAPI
from pydantic import BaseModel, Field

app = FastAPI()


class Order(BaseModel):
    productId: str
    # Reject zero or negative quantities, which would otherwise add stock
    quantity: int = Field(gt=0)


# Dummy in-memory database
inventory_db = {"prod_123": 100, "prod_456": 50}
orders_db = []


@app.get("/inventory/{product_id}")
def get_inventory(product_id: str):
    # Unknown products are reported as having zero stock
    stock_count = inventory_db.get(product_id, 0)
    return {"productId": product_id, "stockCount": stock_count}


@app.post("/orders")
def place_order(order: Order):
    if inventory_db.get(order.productId, 0) >= order.quantity:
        inventory_db[order.productId] -= order.quantity
        # model_dump() is the Pydantic v2 name; on Pydantic v1 use order.dict()
        orders_db.append(order.model_dump())
        return {"status": "success", "orderId": len(orders_db)}
    else:
        return {"status": "failed", "reason": "Insufficient stock"}

# To run this: uvicorn main:app --reload
```

After deploying this FastAPI application and registering its endpoints as tools in the AgentCore Gateway, you can create a Bedrock Agent. In the agent’s configuration, you would simply enable the “InventoryCheckTool” and “PlaceOrderTool”. The agent’s instruction prompt could be as simple as: “You are a helpful assistant that can check inventory and place orders.”
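The walkthrough above only spells out the inventory schema, so here is a sketch of what a matching OpenAPI contract for the `/orders` endpoint might look like. The field names mirror the `Order` model and the responses in the FastAPI code; the title, summary, and description strings are assumptions, and how this schema is attached to “PlaceOrderTool” depends on the registration call you use.

```python
import json

# OpenAPI 3.0 contract for POST /orders, mirroring the FastAPI service above.
place_order_schema = {
    "openapi": "3.0.0",
    "info": {
        "title": "Order Management API",
        "version": "1.0.0",
        "description": "API for placing product orders.",
    },
    "paths": {
        "/orders": {
            "post": {
                "summary": "Place an order for a product",
                "requestBody": {
                    "required": True,
                    "content": {
                        "application/json": {
                            "schema": {
                                "type": "object",
                                "required": ["productId", "quantity"],
                                "properties": {
                                    "productId": {
                                        "type": "string",
                                        "description": "The unique ID of the product to order.",
                                    },
                                    "quantity": {
                                        "type": "integer",
                                        "description": "Number of units to order.",
                                    },
                                },
                            }
                        }
                    },
                },
                "responses": {
                    "200": {
                        "description": "Outcome of the order attempt",
                        "content": {
                            "application/json": {
                                "schema": {
                                    "type": "object",
                                    "properties": {
                                        "status": {"type": "string"},
                                        "orderId": {"type": "integer"},
                                        "reason": {"type": "string"},
                                    },
                                }
                            }
                        },
                    }
                },
            }
        }
    },
}

print(json.dumps(place_order_schema, indent=2))
```

Giving each request field its own description matters here because the model has to decide, from the schema alone, which value in the user’s message is the product and which is the quantity.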

When a user prompts the agent with, “Is product prod_123 in stock and can you order 5 for me?”, the agent’s reasoning process is as follows:

- The LLM parses the user’s request and identifies two distinct tasks: checking inventory and placing an order.

- It consults the available tool schemas provided by the AgentCore Gateway and determines that “InventoryCheckTool” and “PlaceOrderTool” are the appropriate functions.

- It formulates a plan: first, call `InventoryCheckTool` with `productId='prod_123'`.

- The agent runtime sends this invocation request to the AgentCore Gateway.

- The gateway authenticates, calls the actual FastAPI endpoint, and returns the stock count to the agent.

- The agent receives the response (e.g., `{"stockCount": 100}`).

- Seeing sufficient stock, the LLM then formulates the second call: `PlaceOrderTool` with `productId='prod_123'` and `quantity=5`.

- The gateway processes this second call, and the agent confirms the order placement to the user.
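The sequence above can be sketched in a few lines of plain Python. This is a simulation only: `FakeGateway` is an in-memory stand-in invented for this example, and `check_and_order` hard-codes the plan that the LLM would normally produce on its own. The tool names and response shapes follow the ones used earlier in this article.

```python
class FakeGateway:
    """In-memory stand-in for the gateway: routes tool names to simple logic."""

    def __init__(self):
        self.inventory = {"prod_123": 100}
        self.orders = []
        self.call_log = []  # Every invocation is recorded, like a central audit log

    def invoke(self, tool_name, arguments):
        self.call_log.append((tool_name, dict(arguments)))
        if tool_name == "InventoryCheckTool":
            pid = arguments["productId"]
            return {"productId": pid, "stockCount": self.inventory.get(pid, 0)}
        if tool_name == "PlaceOrderTool":
            pid, qty = arguments["productId"], arguments["quantity"]
            if self.inventory.get(pid, 0) < qty:
                return {"status": "failed", "reason": "Insufficient stock"}
            self.inventory[pid] -= qty
            self.orders.append({"productId": pid, "quantity": qty})
            return {"status": "success", "orderId": len(self.orders)}
        raise ValueError(f"Unknown tool: {tool_name}")


def check_and_order(gateway, product_id, quantity):
    """Hard-coded version of the plan the LLM would derive from the prompt."""
    stock = gateway.invoke("InventoryCheckTool", {"productId": product_id})
    if stock["stockCount"] < quantity:
        return f"Only {stock['stockCount']} units of {product_id} are in stock."
    order = gateway.invoke(
        "PlaceOrderTool", {"productId": product_id, "quantity": quantity}
    )
    if order["status"] != "success":
        return f"Order failed: {order['reason']}"
    return f"Order {order['orderId']} placed for {quantity} x {product_id}."


gateway = FakeGateway()
reply = check_and_order(gateway, "prod_123", 5)
print(reply)  # Order 1 placed for 5 x prod_123.
```

Note that the agent-side function never sees a URL or a credential; it only names a tool and passes arguments, which is the decoupling the gateway is meant to provide.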

Advanced Techniques and Enterprise Integration

The true power of the AgentCore Gateway shines in complex enterprise scenarios. It enables advanced patterns that are difficult to implement with traditional agent architectures, such as cross-account tool invocation and centrally governed data retrieval.

Dynamic Tool Invocation and Cross-Account Access

In large organizations, services are often distributed across multiple AWS accounts. The AgentCore Gateway facilitates secure cross-account tool invocation. An agent running in a central “AI” account can be granted IAM permissions to invoke a tool (e.g., a Lambda function) registered in a departmental “Finance” account. The gateway handles the cross-account role assumption securely, abstracting this complexity from the agent developer.
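For readers who have not wired this up by hand, the sketch below shows roughly what cross-account role assumption involves when done manually, which is the step the gateway is described as abstracting away. `assume_role` with `RoleArn` and `RoleSessionName` is the AWS STS operation exposed by boto3; the helper name, the role ARN, the session name, and the fake STS client (used so the sketch runs without AWS access) are assumptions for illustration.

```python
def assume_role_credentials(sts_client, role_arn, session_name):
    """Assume a role in another account and return boto3 client keyword args.

    `sts_client` is expected to behave like boto3.client("sts").
    """
    response = sts_client.assume_role(RoleArn=role_arn, RoleSessionName=session_name)
    creds = response["Credentials"]
    return {
        "aws_access_key_id": creds["AccessKeyId"],
        "aws_secret_access_key": creds["SecretAccessKey"],
        "aws_session_token": creds["SessionToken"],
    }


class FakeSTS:
    """Stand-in for the real STS client so the sketch runs offline."""

    def assume_role(self, RoleArn, RoleSessionName):
        return {
            "Credentials": {
                "AccessKeyId": "AKIAEXAMPLE",
                "SecretAccessKey": "example-secret",
                "SessionToken": "example-token",
            }
        }


# Hypothetical role in the "Finance" account that is allowed to invoke the tool
finance_role_arn = "arn:aws:iam::111122223333:role/FinanceToolInvoker"
client_kwargs = assume_role_credentials(FakeSTS(), finance_role_arn, "agent-tool-call")
print(sorted(client_kwargs))
```

With real AWS access you would pass `boto3.client("sts")` instead of the fake and then create the target client with `boto3.client("lambda", **client_kwargs)`. The role in the target account also needs a trust policy that names the calling account or role.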

Integration with Vector Databases for Advanced RAG

While the gateway excels at transactional tool use, it can also be used to manage access to data sources for Retrieval-Augmented Generation (RAG). You can create a tool that queries a vector database like Pinecone, Milvus, or Weaviate. By routing this through the gateway, you can centralize access control, log all data retrieval queries, and manage credentials for the vector database securely. This is a more robust approach than embedding database connection logic directly into the agent’s code.

Here’s a conceptual example of a Lambda function, registered as a tool, that queries a vector database for RAG.

```python
import json
import os

from pinecone import Pinecone
from sentence_transformers import SentenceTransformer

# Initialized outside the handler so warm invocations reuse the clients.
# This uses the Pinecone client v3+ style; older clients used pinecone.init(...).
pc = Pinecone(api_key=os.environ["PINECONE_API_KEY"])
index = pc.Index("company-knowledge-base")
model = SentenceTransformer('all-MiniLM-L6-v2')


def lambda_handler(event, context):
    """
    This Lambda function is a tool for querying the company knowledge base.
    It's registered in the AgentCore Gateway.
    """
    try:
        # The agent provides the query in the event body
        body = json.loads(event['body'])
        query_text = body['query']

        # Embed the query
        query_vector = model.encode(query_text).tolist()

        # Query the vector database
        query_results = index.query(
            vector=query_vector,
            top_k=3,
            include_metadata=True
        )

        # Format the results for the agent
        context_snippets = [
            match['metadata']['text'] for match in query_results['matches']
        ]

        return {
            'statusCode': 200,
            'body': json.dumps({"context": context_snippets})
        }
    except Exception as e:
        # Return the error to the agent instead of failing the invocation
        return {
            'statusCode': 500,
            'body': json.dumps({"error": str(e)})
        }
```

Best Practices and Optimization Strategies

To maximize the benefits of the Amazon Bedrock AgentCore Gateway, developers should adhere to several best practices.

1. Design Clear and Concise OpenAPI Schemas

The quality of your tool schemas directly impacts the agent’s performance. The LLM relies entirely on the schema’s descriptions, summaries, and parameter names to decide which tool to use. Use clear, descriptive language. For example, instead of a parameter named `p_id`, name it `productIdentifier` and add a description: “The unique SKU or ID of the product.”
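One lightweight way to enforce this is to lint schemas before registering them. The sketch below is a small, assumed helper (not part of any AWS SDK) that walks an OpenAPI document held as a Python dict and reports operations and parameters that lack descriptive text.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options"}


def find_schema_gaps(schema):
    """Return warnings for operations or parameters that lack descriptions."""
    warnings = []
    for path, operations in schema.get("paths", {}).items():
        for method, operation in operations.items():
            if method not in HTTP_METHODS:
                continue  # Skip non-operation keys such as path-level "parameters"
            label = f"{method.upper()} {path}"
            if not (operation.get("summary") or operation.get("description")):
                warnings.append(f"{label}: missing summary/description")
            for param in operation.get("parameters", []):
                if not param.get("description"):
                    warnings.append(
                        f"{label}: parameter '{param.get('name')}' has no description"
                    )
    return warnings


# A deliberately under-documented schema, using the terse name from the text
schema = {
    "paths": {
        "/inventory/{p_id}": {
            "get": {
                "parameters": [
                    {"name": "p_id", "in": "path", "required": True,
                     "schema": {"type": "string"}}
                ]
            }
        }
    }
}

gaps = find_schema_gaps(schema)
for gap in gaps:
    print(gap)
```

A check like this catches missing text but not unhelpful text, so it complements rather than replaces a human review of the wording.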

2. Implement Idempotent Tools Where Possible

Network issues can cause an agent to retry a tool invocation. If a tool performs a state-changing action (e.g., creating an order), design it to be idempotent. This means that calling it multiple times with the same input will produce the same result as calling it once. For example, include a unique `transactionId` in the request and have the API check if an order with that ID has already been created.
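As a minimal sketch of the `transactionId` approach, the class below remembers the result of each transaction and replays it on a retry instead of creating a second order. The class and method names are assumptions for illustration, and the in-memory dict stands in for whatever durable store a real service would use.

```python
class OrderService:
    """Order placement that is safe to retry with the same transaction ID."""

    def __init__(self, inventory):
        self.inventory = dict(inventory)
        self.results_by_transaction = {}
        self.next_order_id = 1

    def place_order(self, transaction_id, product_id, quantity):
        # A retry with a known transaction ID replays the stored result
        if transaction_id in self.results_by_transaction:
            return self.results_by_transaction[transaction_id]

        if self.inventory.get(product_id, 0) < quantity:
            result = {"status": "failed", "reason": "Insufficient stock"}
        else:
            self.inventory[product_id] -= quantity
            result = {"status": "success", "orderId": self.next_order_id}
            self.next_order_id += 1

        self.results_by_transaction[transaction_id] = result
        return result


service = OrderService({"prod_123": 100})
first = service.place_order("txn-001", "prod_123", 5)
retry = service.place_order("txn-001", "prod_123", 5)  # Simulated network retry
print(first == retry, service.inventory["prod_123"])
```

Here the retry returns the original order and stock is only decremented once. A production version would need the lookup and the write to happen atomically so that two concurrent retries cannot both pass the check.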

3. Leverage Fine-Grained IAM Policies

Use the principle of least privilege. Create specific IAM policies that grant an agent permission to use only the tools it absolutely needs. You can even restrict access to specific API paths within a tool. This minimizes the potential impact of a misbehaving or compromised agent.
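The deny-by-default idea behind such policies can be illustrated without any AWS specifics. The sketch below is a local stand-in, not IAM syntax: the agent names, the permission table, and the `is_allowed` helper are all assumptions, and real enforcement would be expressed as IAM policy statements evaluated by AWS.

```python
from fnmatch import fnmatchcase

# Explicit allow-list: (tool name, HTTP method, path pattern) per agent.
AGENT_PERMISSIONS = {
    "support-agent": [
        ("InventoryCheckTool", "GET", "/inventory/*"),
    ],
    "sales-agent": [
        ("InventoryCheckTool", "GET", "/inventory/*"),
        ("PlaceOrderTool", "POST", "/orders"),
    ],
}


def is_allowed(agent, tool, method, path):
    """Deny by default; allow only when an explicit rule matches."""
    for allowed_tool, allowed_method, pattern in AGENT_PERMISSIONS.get(agent, []):
        # Note: fnmatch's "*" also matches "/" characters, so keep patterns narrow
        if tool == allowed_tool and method == allowed_method and fnmatchcase(path, pattern):
            return True
    return False


print(is_allowed("support-agent", "InventoryCheckTool", "GET", "/inventory/prod_123"))
print(is_allowed("support-agent", "PlaceOrderTool", "POST", "/orders"))
```

The support agent can read stock levels but cannot place orders, so a prompt-injection attack against it has no path to a state-changing call.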

4. Monitor and Optimize for Cost and Latency

Use the CloudWatch logs generated by the gateway to monitor which tools are used most frequently and which have the highest latency. This data is invaluable for optimization. If a tool is called constantly with the same parameters, consider implementing a caching layer. If a tool is slow, it will degrade the entire user experience of the agent, so it should be a priority for performance tuning. These MLOps principles are also central to platforms like Amazon SageMaker and Weights & Biases.
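A caching layer for a read-only tool can be quite small. The following sketch wraps a tool function in a time-to-live cache keyed on its arguments and counts hits and misses; `CachedTool` and `slow_inventory_lookup` are names invented for this example, and the 30-second TTL is an arbitrary assumption that you would tune to how stale the data may be.

```python
import time


class CachedTool:
    """Wrap a read-only tool function with a simple TTL cache."""

    def __init__(self, tool_fn, ttl_seconds=30.0, clock=time.monotonic):
        self._tool_fn = tool_fn
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache = {}  # key -> (timestamp, result)
        self.hits = 0
        self.misses = 0

    def __call__(self, **kwargs):
        # Argument values must be hashable to form a cache key
        key = tuple(sorted(kwargs.items()))
        now = self._clock()
        entry = self._cache.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            self.hits += 1
            return entry[1]
        self.misses += 1
        result = self._tool_fn(**kwargs)
        self._cache[key] = (now, result)
        return result


backend_calls = []


def slow_inventory_lookup(productId):
    """Pretend backend call; records each real invocation."""
    backend_calls.append(productId)
    return {"productId": productId, "stockCount": 100}


cached_lookup = CachedTool(slow_inventory_lookup, ttl_seconds=30.0)
cached_lookup(productId="prod_123")
cached_lookup(productId="prod_123")  # Served from the cache
print(cached_lookup.hits, cached_lookup.misses, len(backend_calls))  # 1 1 1
```

Only cache tools that read data. Wrapping a state-changing tool such as order placement this way would silently drop the second call.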

Conclusion: The Future of Enterprise Agent Development

The Amazon Bedrock AgentCore Gateway represents a significant leap forward in the field of enterprise AI. By abstracting away the complexity of tool integration, it allows developers to focus on what truly matters: designing intelligent, capable, and reliable AI agents that solve real-world business problems. The gateway’s emphasis on security, scalability, and observability provides the foundational infrastructure that enterprises need to deploy agents with confidence.

As the AI landscape continues to evolve, with constant advances in hardware from vendors like NVIDIA and in models from the Hugging Face ecosystem, the importance of robust, flexible, and manageable agent architectures will only grow. The AgentCore Gateway is more than just a new feature; it’s a strategic component that positions Amazon Bedrock as a premier platform for building the next generation of sophisticated, enterprise-ready AI applications. For any organization serious about leveraging autonomous agents, exploring and adopting this new service should be a top priority.