Supercharging LLM Inference: A Deep Dive into TensorRT Optimization for Streaming Applications

Unlocking Blazing-Fast LLM Inference with NVIDIA TensorRT

The proliferation of Large Language Models (LLMs) has revolutionized countless industries, but their deployment in real-time, interactive applications remains a significant engineering hurdle. The immense computational cost of LLM inference often leads to high latency, hindering user experience in applications like chatbots, live coding assistants, and interactive content generation. While frameworks like PyTorch and TensorFlow are exceptional for training, they are not always optimized for the bare-metal performance required for production inference. This is where NVIDIA TensorRT, a high-performance deep learning inference optimizer and runtime library, enters the picture, promising to drastically reduce latency and increase throughput on NVIDIA GPUs.

This article provides a technical guide to using TensorRT for LLM inference. We will explore the core optimization principles, walk through a practical example of converting a Hugging Face model, cover advanced techniques essential for streaming applications, and discuss best practices for production deployment. By understanding and applying these strategies, developers can reduce latency and raise throughput enough to make responsive, scalable AI applications practical.

The Foundations: What Makes TensorRT So Fast?

At its core, TensorRT is not a training framework but a post-training optimizer. It takes a model trained in a framework like PyTorch or TensorFlow (usually via an intermediate ONNX format) and compiles it into a highly optimized runtime “engine” specifically tuned for a target NVIDIA GPU architecture. This process involves a suite of sophisticated optimizations that work in concert to maximize performance.

Key Optimization Techniques

TensorRT’s power comes from several key automated optimizations:

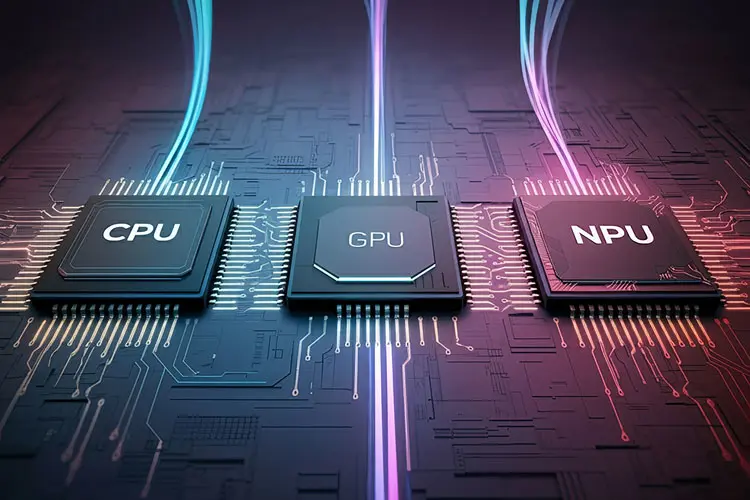

- Precision Calibration (Quantization): One of the most effective techniques is reducing the numerical precision of weights and activations from 32-bit floating point (FP32) to FP16, or even 8-bit integers (INT8). Lower precision shrinks the model’s memory footprint, decreases memory bandwidth requirements, and lets the Tensor Cores on NVIDIA GPUs deliver much higher arithmetic throughput. INT8 post-training quantization requires a calibration step with a representative dataset to choose scaling factors that minimize accuracy loss.

- Layer and Tensor Fusion: TensorRT fuses multiple individual layers into a single kernel. For example, a sequence of Convolution, Bias, and ReLU (CBR) layers can be merged into one operation. This “vertical fusion” reduces kernel launch overhead and global memory traffic, because intermediate results can stay in registers or on-chip memory instead of being written back to and read from global GPU memory.

- Kernel Auto-Tuning: There are often multiple ways to implement a given deep learning operation (e.g., a convolution or matrix multiplication), and the fastest one depends on tensor shapes, precision, and the GPU. During the build, TensorRT times candidate kernels on the target GPU (including, depending on the TensorRT version, kernels from libraries like cuDNN or cuBLAS) and selects the most efficient one for each layer.

- Dynamic Tensor Memory: TensorRT optimizes memory management by reusing memory for tensors whose lifecycles do not overlap, significantly reducing the overall memory footprint of the model during inference.
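To make the calibration idea from the quantization bullet concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization using simple max calibration. It is an illustration in plain Python, not TensorRT’s implementation: the function names and sample values are made up for this example, and TensorRT’s own calibrators may pick scales differently.

```python
# Illustrative sketch only: symmetric per-tensor INT8 quantization with
# max calibration. All names and values here are hypothetical.

def calibrate_scale(samples):
    """Choose a scale so the largest observed magnitude maps to 127."""
    max_abs = max(abs(v) for v in samples)
    return max_abs / 127.0 if max_abs > 0 else 1.0

def quantize(values, scale):
    """Map floats to integers, clamping to the range [-127, 127]."""
    return [max(-127, min(127, round(v / scale))) for v in values]

def dequantize(q_values, scale):
    """Map quantized integers back to approximate floats."""
    return [q * scale for q in q_values]

# "Representative" data seen during calibration (made-up numbers).
calibration_data = [-2.54, -0.5, 0.0, 0.75, 1.27, 2.0]
scale = calibrate_scale(calibration_data)

# 5.0 lies outside the calibrated range, so it is clipped to the maximum.
values = [0.1, -1.0, 2.54, 5.0]
q = quantize(values, scale)
restored = dequantize(q, scale)

for original, approx in zip(values, restored):
    print(f"{original:+.3f} -> {approx:+.3f}")
```

The clipped last value shows why the calibration set needs to be representative: anything larger than what calibration observed saturates, which is one way accuracy can degrade.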

The Crucial Role of ONNX

To apply these optimizations, TensorRT needs a standardized way to understand the model’s architecture. The Open Neural Network Exchange (ONNX) format serves as this bridge. Exporting a trained PyTorch or TensorFlow model to ONNX creates a graph-based, framework-agnostic representation that TensorRT can parse and optimize. This interoperability is a cornerstone of modern MLOps pipelines.

# Example: Exporting a simple PyTorch model to ONNX
import torch
import torch.nn as nn


# 1. Define a simple model
class SimpleModel(nn.Module):
    def __init__(self):
        super().__init__()
        self.linear1 = nn.Linear(128, 256)
        self.relu = nn.ReLU()
        self.linear2 = nn.Linear(256, 10)

    def forward(self, x):
        x = self.linear1(x)
        x = self.relu(x)
        x = self.linear2(x)
        return x


# 2. Instantiate the model and create a dummy input
model = SimpleModel()
model.eval()  # Set model to evaluation mode
dummy_input = torch.randn(1, 128)  # (batch_size, input_features)

# 3. Export to ONNX; the graph is written to simple_model.onnx
torch.onnx.export(
    model,
    dummy_input,
    "simple_model.onnx",
    input_names=["input"],
    output_names=["output"],
)
print("Model has been successfully exported to simple_model.onnx")

A Practical Walkthrough: Optimizing a Hugging Face Model

Let’s move from theory to practice by optimizing a pre-trained model from the Hugging Face Hub. We’ll use the `transformers` library to load a model, export it to ONNX, and then use the TensorRT Python API to build a high-performance engine.

Step 1: Exporting a Transformer Model to ONNX

Exporting a complex model like a Transformer requires careful handling of its inputs and outputs. The Hugging Face Optimum library simplifies this process, providing robust tools for ONNX export.

# Ensure you have optimum and transformers installed:
# pip install "optimum[onnxruntime]" transformers
from optimum.exporters.onnx import main_export

# The main_export function provides a command-line-like interface
# to export models easily.
model_name = "distilbert-base-uncased-finetuned-sst-2-english"
output_path = "distilbert_onnx"

main_export(
    model_name_or_path=model_name,
    output=output_path,
    task="text-classification",
    # You can add more options like opset version here
)
print(f"Model {model_name} exported to ONNX format in ./{output_path}")

Step 2: Building the TensorRT Engine

With the ONNX model ready, we can use the TensorRT Python API to build the optimized engine. This step performs all the heavy lifting: parsing the ONNX graph, applying fusions, selecting kernels, and tuning for the specific GPU.

# Ensure tensorrt is installed:
# pip install tensorrt
import tensorrt as trt

TRT_LOGGER = trt.Logger(trt.Logger.WARNING)
onnx_model_path = "distilbert_onnx/model.onnx"
engine_file_path = "distilbert.engine"


def build_engine(onnx_path, engine_path):
    # 1. Create a builder, network, and parser
    builder = trt.Builder(TRT_LOGGER)
    # EXPLICIT_BATCH is needed on older TensorRT releases; newer ones deprecate
    # the flag because explicit batch is the only supported mode.
    network = builder.create_network(1 << int(trt.NetworkDefinitionCreationFlag.EXPLICIT_BATCH))
    parser = trt.OnnxParser(network, TRT_LOGGER)

    # 2. Set up builder configuration
    config = builder.create_builder_config()
    config.set_memory_pool_limit(trt.MemoryPoolType.WORKSPACE, 1 << 30)  # 1 GiB workspace
    # For better performance, you can enable FP16
    if builder.platform_has_fast_fp16:
        config.set_flag(trt.BuilderFlag.FP16)

    # 3. Parse the ONNX model
    with open(onnx_path, "rb") as model:
        if not parser.parse(model.read()):
            for error in range(parser.num_errors):
                print(parser.get_error(error))
            return None
    print(f"Completed parsing ONNX file from {onnx_path}")

    # 4. The exported model has dynamic (batch, sequence) input dimensions, so
    #    the builder needs an optimization profile. The (min, opt, max) shapes
    #    below are assumptions; adjust them to your workload.
    profile = builder.create_optimization_profile()
    for i in range(network.num_inputs):
        profile.set_shape(network.get_input(i).name, (1, 1), (1, 128), (8, 512))
    config.add_optimization_profile(profile)

    # 5. Build the serialized engine
    # This step can take a while as TensorRT performs optimizations
    plan = builder.build_serialized_network(network, config)
    if plan is None:
        return None
    with open(engine_path, "wb") as f:
        f.write(plan)
    print(f"Completed building engine and saved to {engine_path}")
    return plan


build_engine(onnx_model_path, engine_file_path)

Advanced Techniques for Streaming LLM Inference

For interactive LLM applications, optimizing a single forward pass isn’t enough. Token generation is autoregressive: each new token depends on all previous tokens, so a response is produced by many sequential forward passes rather than one. This is where advanced techniques like in-flight batching and efficient KV cache management become critical. They are core features of LLM serving stacks such as NVIDIA’s TensorRT-LLM (commonly served through the Triton Inference Server) and vLLM.

The Challenge: Managing the KV Cache

During generation, an LLM calculates Key (K) and Value (V) tensors for each token in its attention layers. To avoid re-computing these for the entire sequence at every step, they are stored in a “KV cache.” For long sequences, this cache can become enormous, creating a memory bottleneck. Furthermore, traditional static batching is inefficient for chat applications, as different requests in a batch may have vastly different lengths and finish at different times, leaving GPU resources idle.
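To get a feel for how large the cache can become, the following back-of-the-envelope sketch estimates KV cache size from the model shape. The configuration values (32 layers, 32 KV heads, head dimension 128, FP16) are illustrative assumptions in the range of a 7B-class decoder, not figures taken from any specific model card, and the function name is made up for this example.

```python
# Rough KV cache size estimate. All configuration values are illustrative
# assumptions; substitute the numbers for your own model.

def kv_cache_bytes(num_layers, num_kv_heads, head_dim,
                   seq_len, batch_size, bytes_per_elem=2):
    """Bytes needed to cache K and V for every layer, head, and token."""
    # The leading factor of 2 accounts for one K and one V tensor per layer.
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_elem)

# Cost of caching a single token for a single sequence (FP16 = 2 bytes).
per_token = kv_cache_bytes(32, 32, 128, seq_len=1, batch_size=1)

# Cost of 8 concurrent sequences of 4096 tokens each.
total = kv_cache_bytes(32, 32, 128, seq_len=4096, batch_size=8)

print(f"per token: {per_token / 2**20:.2f} MiB")
print(f"8 x 4096 tokens: {total / 2**30:.1f} GiB")
```

Under these assumptions each cached token costs half a mebibyte, so a handful of long conversations can consume more memory than the FP16 weights of the model itself.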

In-Flight Batching and PagedAttention

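Modern inference servers solve this with in-flight batching (also known as continuous batching). Instead of waiting for every sequence in a batch to finish, the scheduler works at the granularity of a single generation step: finished sequences leave the batch immediately, and waiting requests take their slots as soon as space is available. This keeps the GPU busy and shortens queueing time for new requests.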
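The difference between the two scheduling strategies can be illustrated with a toy simulation. The sketch below counts decoding steps only; it assumes every step costs the same regardless of batch occupancy and ignores prompt processing, so it is a simplified model rather than a description of how any particular server schedules work. The function names and request lengths are invented for this example.

```python
from collections import deque

# Toy scheduling model: one "step" generates one token for every active
# request. Prefill cost and per-step timing differences are ignored.

def static_batching_steps(output_lengths, batch_size):
    """Each batch runs until its longest request finishes."""
    total = 0
    for i in range(0, len(output_lengths), batch_size):
        total += max(output_lengths[i:i + batch_size])
    return total

def continuous_batching_steps(output_lengths, batch_size):
    """Free slots are refilled from the queue before every step."""
    waiting = deque(output_lengths)
    active = []  # remaining tokens for each running request
    steps = 0
    while waiting or active:
        while waiting and len(active) < batch_size:
            active.append(waiting.popleft())
        steps += 1
        # Every active request emits one token; drop the finished ones.
        active = [remaining - 1 for remaining in active if remaining > 1]
    return steps

# Two long requests mixed with short ones (tokens to generate per request).
lengths = [100, 5, 5, 5, 100, 5, 5, 5]
static_steps = static_batching_steps(lengths, batch_size=4)
continuous_steps = continuous_batching_steps(lengths, batch_size=4)
print(static_steps, continuous_steps)
```

In this toy workload the static scheduler spends most of its steps with three of four slots idle while the long request finishes, whereas the continuous scheduler starts the second long request as soon as a short one completes.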

In-flight batching is often paired with an optimized KV cache management system like PagedAttention, introduced by the vLLM project. Inspired by virtual memory and paging in operating systems, PagedAttention stores the KV cache in fixed-size blocks (pages) that do not need to be contiguous in GPU memory. This allows for much more flexible memory management: it reduces fragmentation and makes it possible to share cached context between requests (e.g., a common prompt prefix across multi-turn conversations).
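The bookkeeping behind a paged cache can be sketched in a few lines. The class below is a hypothetical, simplified block allocator written for illustration: it tracks only which physical block holds each token slot, stores no tensors, and does not implement prefix sharing. It is not the vLLM or TensorRT-LLM implementation.

```python
# Hypothetical sketch of paged KV cache bookkeeping (no tensors involved).

class PagedKVCache:
    def __init__(self, num_blocks, block_size):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))  # physical block ids
        self.block_tables = {}  # request id -> list of physical block ids
        self.lengths = {}       # request id -> number of cached tokens

    def append_token(self, request_id):
        """Reserve a slot for one token; returns (physical_block, offset)."""
        table = self.block_tables.setdefault(request_id, [])
        length = self.lengths.get(request_id, 0)
        if length % self.block_size == 0:
            # The last block is full (or none exists yet): grab any free one.
            if not self.free_blocks:
                raise MemoryError("KV cache exhausted")
            table.append(self.free_blocks.pop())
        self.lengths[request_id] = length + 1
        return table[-1], length % self.block_size

    def release(self, request_id):
        """Return all blocks of a finished request to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(request_id, []))
        self.lengths.pop(request_id, None)


cache = PagedKVCache(num_blocks=4, block_size=2)
for _ in range(3):
    cache.append_token("a")      # request "a" needs two blocks for 3 tokens
cache.append_token("b")          # request "b" needs one block
free_while_running = len(cache.free_blocks)
cache.release("a")               # blocks become reusable immediately
free_after_release = len(cache.free_blocks)
print(free_while_running, free_after_release)
```

Because a request only ever holds whole blocks and returns them when it finishes, the memory wasted per request is bounded by one partially filled block, which is the property that makes this scheme attractive alongside in-flight batching.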

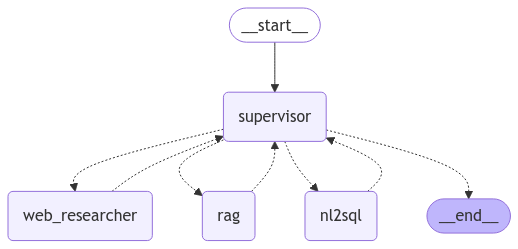

Conceptual Streaming Pipeline with TensorRT-LLM

While implementing these systems from scratch is complex, libraries like NVIDIA’s TensorRT-LLM provide pre-optimized components for building such pipelines. The following conceptual code illustrates the logic of a streaming generation loop.

# Conceptual code for a streaming LLM pipeline using a TensorRT-LLM-like API
# This is a simplified representation to illustrate the flow.
# Assume 'model' is a loaded TensorRT-LLM model and 'tokenizer' is available.
# start_generation(), is_done(), and step() are hypothetical method names,
# not part of the actual TensorRT-LLM API.

prompt = "The future of AI is"
input_ids = tokenizer.encode(prompt, return_tensors="pt").cuda()

# Initialize the generation process, which sets up the KV cache
generation_session = model.start_generation(input_ids)
print(f"Prompt: {prompt}", end="")

# Streaming loop: generate one token at a time
while not generation_session.is_done():
    # The 'step' function performs one forward pass, using and updating the KV cache
    new_token_id = generation_session.step()

    # Decode the new token and print it immediately
    new_token_text = tokenizer.decode(new_token_id)
    print(new_token_text, end="", flush=True)

print("\nGeneration complete.")

Best Practices and Production Considerations

Successfully deploying a TensorRT-optimized model requires attention to detail. Here are some best practices and considerations for integrating this workflow into your MLOps pipeline, which often involves tools like MLflow or platforms like AWS SageMaker and Azure Machine Learning.

Tips for Optimal Performance

- Precision is Key: Always benchmark FP16 and INT8 performance against your FP32 baseline. For most LLMs, FP16 provides a great balance of speed and accuracy. INT8 can offer further speedups but requires a careful calibration process with a representative dataset to avoid significant quality degradation.

- Handle Dynamic Shapes: LLM inputs (batch size and sequence length) are often variable. Use Optimization Profiles in TensorRT to define a range of expected input dimensions. This allows TensorRT to generate kernels that perform well across that range, though it may be slightly less optimal than a fixed-shape engine.

- Build Once, Deploy Many Times: The TensorRT engine build process is time-consuming but only needs to be done once for a specific combination of model, precision, GPU architecture, and TensorRT version. Always serialize and save the resulting engine file, and rebuild it when any of those change. In production, your application should simply load this pre-built engine to start the inference server.

- Leverage High-Level Toolkits: For common LLMs like Llama, Mistral, or GPT, consider using NVIDIA’s TensorRT-LLM. It is an open-source library that contains pre-built components and recipes for optimizing these models, abstracting away much of the complexity of manual ONNX export and layer-by-layer configuration.
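One way to apply the “build once, deploy many times” tip is to derive the engine file name from everything the engine depends on, so a stale engine is never loaded by accident. The helper below is a sketch under that assumption: `engine_cache_path` and its parameters are hypothetical names, and the GPU name and TensorRT version strings are placeholders that a real application would query at runtime (for example from `tensorrt.__version__`).

```python
import hashlib
from pathlib import Path

# Hypothetical helper: derive a cache path that changes whenever the model,
# precision, GPU, or TensorRT version changes.

def engine_cache_path(cache_dir, onnx_bytes, precision, gpu_name, trt_version):
    digest = hashlib.sha256()
    digest.update(onnx_bytes)
    for part in (precision, gpu_name, trt_version):
        # A separator byte keeps adjacent fields from running together.
        digest.update(b"\0" + part.encode("utf-8"))
    return Path(cache_dir) / f"model-{digest.hexdigest()[:16]}.engine"


# Placeholder inputs; in practice onnx_bytes would be the ONNX file contents.
onnx_bytes = b"placeholder for the ONNX file contents"
path_a = engine_cache_path("engines", onnx_bytes, "fp16", "GPU-A", "10.0.1")
path_b = engine_cache_path("engines", onnx_bytes, "fp16", "GPU-A", "10.0.1")
path_c = engine_cache_path("engines", onnx_bytes, "fp16", "GPU-A", "10.1.0")

print(path_a == path_b)  # identical inputs map to the same file
print(path_a == path_c)  # a different TensorRT version maps to a new file
```

At startup the application can check whether the computed path exists, load it if so, and fall back to a rebuild otherwise.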

Integration with the AI Ecosystem

A TensorRT engine is a deployment artifact. It should be versioned and stored in a model registry like MLflow or Weights & Biases. Deployment platforms like Vertex AI or custom solutions built with Triton Inference Server can then pull this engine for serving. This ensures a reproducible and scalable path from research to production.

Conclusion and Next Steps

NVIDIA TensorRT is a valuable tool for anyone deploying Large Language Models in performance-critical applications on NVIDIA GPUs. By converting trained models into optimized runtime engines, developers can achieve substantial improvements in latency and throughput, with the size of the gain depending on the model, precision, and hardware. The journey from a PyTorch model to a TensorRT engine involves a clear, multi-step process: exporting to the ONNX format, building the engine with specific configurations, and running inference through the optimized runtime.

For streaming and interactive use cases, advanced techniques like in-flight batching and optimized KV cache management are essential. As the AI landscape evolves and new models are released at a rapid pace, the ability to serve them efficiently will become an even greater competitive advantage. By mastering tools like TensorRT and integrating them into robust MLOps workflows, you can ensure your AI applications are not only capable but also fast and responsive.