Mistral AI: A Technical Deep Dive into Europe’s Generative AI Powerhouse

The Meteoric Rise of Mistral AI: Beyond the Hype

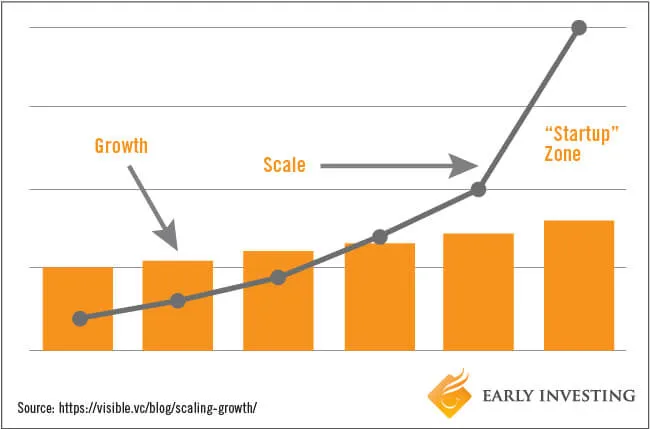

The generative AI landscape is witnessing a seismic shift, and much of the recent tremor originates from Paris. Mistral AI, a European startup, has rapidly evolved from a promising newcomer to a formidable contender, challenging the dominance of established players. The latest Mistral AI news isn’t just about record-breaking funding; it’s about the technical innovations and open-model philosophy driving this ascent. While the headlines often focus on the competition with giants, the real story for developers and engineers lies in the architecture, efficiency, and accessibility of Mistral’s models.

This article provides a technical breakdown of what makes Mistral AI’s models, such as Mistral 7B and Mixtral 8x7B, so compelling. We will move beyond surface-level comparisons with OpenAI and Anthropic to explore the core architectural decisions, practical implementation strategies, and optimization techniques that let developers put these models to work. From local inference on a laptop to scalable production deployments, we’ll cover the full spectrum of using Mistral models, complete with code examples and best practices. It is a guide for anyone looking to understand open-weight language models and integrate them into their applications.

Section 1: Deconstructing Mistral’s Core Innovations

Mistral AI’s success is not accidental; it’s built on a foundation of clever engineering and architectural choices that address key limitations in traditional Transformer models. Two concepts are central to understanding their flagship open-source models: Sliding Window Attention (SWA) and Sparse Mixture of Experts (SMoE).

Sliding Window Attention (SWA) in Mistral 7B

The original Transformer architecture uses full attention, where every token attends to every other token in the input (in a decoder-only model, to every preceding token). This is computationally expensive, with cost scaling quadratically (O(n²)) in the sequence length n. Mistral 7B uses Sliding Window Attention (SWA) to tackle this. In SWA, each token attends only to a fixed number of preceding tokens (a “window” of size w, e.g., 4096 tokens). This reduces the attention cost to O(n·w), which is linear in the sequence length for a fixed window, and it bounds the size of the key-value cache, so the model can handle longer contexts without a catastrophic increase in memory or processing time. Because attention layers are stacked, information can still propagate beyond a single window: each additional layer lets a token reach roughly one window further back. It is a practical answer to a long-standing scaling problem.
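The windowing rule described above can be sketched as an attention mask. This is a minimal illustration in plain Python, not Mistral's implementation: the function names are hypothetical, and the boundary convention (the window counts the current token plus the `window - 1` tokens before it) is an assumption made for the example.

```python
def sliding_window_mask(seq_len: int, window: int) -> list[list[bool]]:
    """mask[i][j] is True when query position i may attend to key position j."""
    return [
        # Causal (j <= i) and within the window (j > i - window).
        [i - window < j <= i for j in range(seq_len)]
        for i in range(seq_len)
    ]


def count_attended_pairs(mask: list[list[bool]]) -> int:
    """Number of (query, key) pairs that attention has to score."""
    return sum(sum(row) for row in mask)


seq_len, window = 8, 3
# A window as long as the sequence is ordinary full causal attention.
full_causal = sliding_window_mask(seq_len, window=seq_len)
windowed = sliding_window_mask(seq_len, window)

# Print the windowed mask: "x" marks an allowed (query, key) pair.
for row in windowed:
    print("".join("x" if allowed else "." for allowed in row))

print("full causal pairs:", count_attended_pairs(full_causal))
print("windowed pairs:   ", count_attended_pairs(windowed))
```

With full causal attention the number of scored pairs grows roughly with n²/2, while with a window it grows roughly with n × w, which is where the savings on long sequences come from.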

Sparse Mixture of Experts (SMoE) in Mixtral 8x7B

The Mixtral 8x7B model takes a different approach to scale. It employs a Sparse Mixture of Experts (SMoE) architecture. Instead of a single, dense feed-forward network in each layer, an SMoE model has multiple “expert” networks. For each token at each layer, a router network selects a small subset of these experts (in Mixtral’s case, two out of eight) to process the token and combines their outputs. This means that while the model has a large number of total parameters (around 47B), only a fraction of them (about 13B) are used for any given token. The result is a model with the knowledge capacity of a much larger model but per-token compute closer to that of a 13B dense model. Note that all experts must still be loaded, so the memory footprint remains that of the full 47B parameters. This design offers an efficient way to scale model capacity without a proportional increase in inference compute.
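To make the routing step concrete, here is a minimal sketch of top-2 gating in plain Python. It is a toy under stated assumptions: the "experts" are simple scaling functions standing in for feed-forward networks, the router scores are made up, and the names `top_k_route` and `moe_layer` are hypothetical. The gating rule (softmax over the scores of the selected experts only) follows the description in the Mixtral paper.

```python
import math


def top_k_route(router_logits: list[float], k: int = 2) -> list[tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax-normalise their scores.

    Returns (expert_index, weight) pairs whose weights sum to 1.
    """
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    max_logit = max(router_logits[i] for i in top)  # for numerical stability
    exps = [math.exp(router_logits[i] - max_logit) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_layer(x: float, router_logits: list[float], experts) -> float:
    """Weighted sum of the selected experts' outputs; the other experts never run."""
    return sum(weight * experts[i](x) for i, weight in top_k_route(router_logits))


# Eight toy "experts": expert s simply multiplies its input by s (1..8).
experts = [lambda x, s=s: s * x for s in range(1, 9)]
# Hypothetical router scores for a single token.
router_logits = [0.1, 2.0, -1.0, 0.3, 2.0, 0.0, -0.5, 0.2]

routes = top_k_route(router_logits)
print("selected experts and weights:", routes)
print("layer output:", moe_layer(1.0, router_logits, experts))
```

Only two of the eight expert functions are called per token, which mirrors why roughly 13B of Mixtral's 47B parameters are active for any given token.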

Getting started with a Mistral model is straightforward using the Hugging Face `transformers` library. Here’s how you can run a simple inference with Mistral 7B.

    # Make sure you have transformers and torch installed:
    # pip install transformers torch accelerate
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # Define the model ID from Hugging Face Hub
    model_id = "mistralai/Mistral-7B-Instruct-v0.2"

    # Load the tokenizer and model
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.bfloat16,  # Use bfloat16 for efficiency
        device_map="auto",  # Automatically use GPU if available
    )

    # Create a conversation prompt
    messages = [
        {"role": "user", "content": "What is the difference between Sliding Window Attention and a Sparse Mixture of Experts?"},
    ]

    # Apply the chat template and tokenize the input.
    # Move the tensors to the device the model was placed on instead of hard-coding "cuda".
    model_inputs = tokenizer.apply_chat_template(messages, return_tensors="pt").to(model.device)

    # Generate a response
    generated_ids = model.generate(model_inputs, max_new_tokens=256, do_sample=True)

    # Decode and print the response (the decoded text includes the prompt)
    response = tokenizer.batch_decode(generated_ids)[0]
    print(response)

Section 2: Practical Implementation with RAG and Local Deployment

Beyond simple text generation, the true power of models like Mixtral is unlocked when they are integrated into larger systems, such as Retrieval-Augmented Generation (RAG) pipelines. RAG enhances LLMs by providing them with external knowledge from a custom dataset, reducing hallucinations and enabling them to answer questions about specific, private data. The open nature of Mistral models makes them ideal for such applications, especially when data privacy is a concern.

Building a RAG Pipeline with LangChain and Mistral

Frameworks like LangChain and LlamaIndex have become central to building LLM applications, and both support open-source models. Here’s a practical example of how to build a basic RAG pipeline using LangChain, the `Ollama` framework for local model serving, and a vector database like ChromaDB.

First, ensure you have Ollama installed and have pulled the Mixtral model by running `ollama pull mixtral` in your terminal (`ollama run mixtral` also downloads the model, but then opens an interactive session). Ollama then makes the model available through a local API endpoint.

    # Install necessary libraries:
    # pip install langchain langchain_community langchain_core chromadb pypdf
    from langchain_community.llms import Ollama
    from langchain_community.document_loaders import PyPDFLoader
    from langchain.text_splitter import RecursiveCharacterTextSplitter
    from langchain_community.embeddings import OllamaEmbeddings
    from langchain_community.vectorstores import Chroma
    from langchain_core.prompts import ChatPromptTemplate
    from langchain.chains import create_retrieval_chain
    from langchain.chains.combine_documents import create_stuff_documents_chain

    # 1. Initialize the local Mixtral model via Ollama
    llm = Ollama(model="mixtral")
    embeddings = OllamaEmbeddings(model="mixtral")

    # 2. Load and process a document
    loader = PyPDFLoader("your_document.pdf")  # Replace with your document
    docs = loader.load()
    text_splitter = RecursiveCharacterTextSplitter(chunk_size=1000, chunk_overlap=200)
    splits = text_splitter.split_documents(docs)

    # 3. Create a vector store (e.g., ChromaDB)
    # Each chunk is embedded and stored so it can be found by similarity search
    vectorstore = Chroma.from_documents(documents=splits, embedding=embeddings)
    retriever = vectorstore.as_retriever()

    # 4. Create a prompt template
    prompt_template = """Answer the following question based only on the provided context:

    <context>
    {context}
    </context>

    Question: {input}"""
    prompt = ChatPromptTemplate.from_template(prompt_template)

    # 5. Create the RAG chain
    question_answer_chain = create_stuff_documents_chain(llm, prompt)
    rag_chain = create_retrieval_chain(retriever, question_answer_chain)

    # 6. Invoke the chain and get a response
    question = "What are the key findings from the document?"
    response = rag_chain.invoke({"input": question})
    print(response["answer"])

This example demonstrates an end-to-end workflow: loading a document, splitting it into chunks, embedding those chunks, storing them in a vector database, and finally using a Mistral model to answer a question based on the retrieved context. This pattern is well suited to building internal knowledge bases, customer support bots, and research assistants.
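The retrieval step at the heart of this workflow can be illustrated without any external services. The sketch below is a deliberately simplified stand-in, not what LangChain or Chroma do internally: the "embedding" is a bag-of-words count instead of a neural embedding, and the helper names (`embed`, `cosine`, `retrieve`, `build_prompt`) and sample chunks are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts. Real pipelines use a neural model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(question: str, context_chunks: list[str]) -> str:
    """Stuff the retrieved chunks into a context-constrained prompt."""
    context = "\n".join(context_chunks)
    return (
        "Answer the following question based only on the provided context:\n"
        f"<context>\n{context}\n</context>\n"
        f"Question: {question}"
    )


chunks = [
    "Mixtral routes each token to two of eight experts.",
    "Chroma stores embedding vectors for similarity search.",
    "Sliding window attention limits each token to a fixed window.",
]
question = "How many experts does Mixtral use for each token?"
top_chunks = retrieve(question, chunks, k=1)
prompt_text = build_prompt(question, top_chunks)
print(prompt_text)
```

In the full pipeline, the vector store plays the role of `retrieve`, and the resulting prompt is what the language model actually sees.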

Section 3: Advanced Techniques for Optimization and Performance

While Mistral’s models are efficient, running them effectively—especially on consumer-grade hardware or in high-throughput production environments—requires advanced optimization techniques. Two key areas are model quantization and high-performance inference serving.

Model Quantization for Reduced Memory Footprint

Quantization is the process of reducing the precision of a model’s weights (e.g., from 16-bit floating-point to 4-bit integers). This dramatically reduces the model’s memory footprint, usually at the cost of a small loss in accuracy, making it possible to run large models like Mixtral on GPUs with limited VRAM. The `bitsandbytes` library, which provides CUDA kernels for low-bit quantization, is a popular choice for this.
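As a rough illustration of the idea, the sketch below quantizes a handful of weights to signed 4-bit integers with a single shared scale, then estimates weight memory at different precisions. It is a simplified uniform ("absmax") scheme chosen for clarity, and is an assumption of this example: the NF4 format used by `bitsandbytes` relies on non-uniform levels and block-wise scaling. The function names are hypothetical, and the memory estimate uses the article's figure of roughly 47B parameters while ignoring quantization metadata, activations, and the KV cache.

```python
def quantize_absmax(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest else 1.0  # avoid dividing by zero
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Memory needed for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


weights = [0.7, -0.3, 0.12, 0.0]
quantized, scale = quantize_absmax(weights)
restored = dequantize(quantized, scale)
print("quantized:", quantized)
print("restored: ", [round(w, 3) for w in restored])

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights for ~47B parameters: "
          f"{weight_memory_gb(47e9, bits):.1f} GB")
```

The round trip shows where the accuracy loss comes from: every weight is snapped to the nearest of 15 representable levels, so the error per weight is at most half a quantization step.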

Here’s how you can load a 4-bit quantized version of Mixtral using `transformers`:

    # Install libraries for quantization:
    # pip install transformers torch accelerate bitsandbytes
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    model_id = "mistralai/Mixtral-8x7B-Instruct-v0.1"

    # Define the quantization configuration
    quantization_config = BitsAndBytesConfig(
        load_in_4bit=True,
        bnb_4bit_compute_dtype=torch.bfloat16,
        bnb_4bit_quant_type="nf4",
        bnb_4bit_use_double_quant=True,
    )

    # Load the tokenizer
    tokenizer = AutoTokenizer.from_pretrained(model_id)

    # Load the quantized model
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        quantization_config=quantization_config,
        device_map="auto",
    )

    # Now you can use the model for inference as usual, but with a much smaller memory footprint
    messages = [
        {"role": "user", "content": "Explain the benefits of model quantization for deploying LLMs."},
    ]
    model_inputs = tokenizer.apply_chat_template(messages, return_tensors="pt").to(model.device)
    generated_ids = model.generate(model_inputs, max_new_tokens=200)
    response = tokenizer.batch_decode(generated_ids)[0]
    print(response)

High-Performance Inference with vLLM

For production environments that demand high throughput and low latency, specialized inference servers are essential. vLLM is an open-source engine built for high-throughput LLM serving. It uses a memory management technique called PagedAttention, which stores the key-value cache in fixed-size blocks rather than one contiguous region per request, to minimize memory waste and enable much larger batch sizes, dramatically increasing throughput. It can serve Mistral models out of the box.
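The memory-saving idea behind paged KV-cache management can be shown with a toy allocator. This is only a sketch of the concept in plain Python and makes no claim about vLLM's internals: the class `PagedKVCache`, the helper functions, and the block size and sequence lengths are all hypothetical choices for illustration.

```python
import math


def contiguous_slots(seq_lens: list[int], max_len: int) -> int:
    """Cache slots reserved when every request pre-allocates max_len tokens."""
    return len(seq_lens) * max_len


def paged_slots(seq_lens: list[int], block_size: int) -> int:
    """Cache slots reserved when memory is handed out in fixed-size blocks on demand."""
    return sum(math.ceil(n / block_size) * block_size for n in seq_lens)


class PagedKVCache:
    """Toy block allocator: each sequence maps to a list of physical block ids."""

    def __init__(self, num_blocks: int, block_size: int) -> None:
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))
        self.block_tables: dict[str, list[int]] = {}
        self.lengths: dict[str, int] = {}

    def append_token(self, seq_id: str) -> None:
        """Reserve a new block only when the sequence's last block is full."""
        length = self.lengths.get(seq_id, 0)
        if length % self.block_size == 0:
            if not self.free_blocks:
                raise MemoryError("no free KV-cache blocks")
            self.block_tables.setdefault(seq_id, []).append(self.free_blocks.pop(0))
        self.lengths[seq_id] = length + 1

    def free(self, seq_id: str) -> None:
        """Return a finished sequence's blocks to the pool for reuse."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


cache = PagedKVCache(num_blocks=8, block_size=4)
for _ in range(5):
    cache.append_token("request-a")  # 5 tokens need 2 blocks
for _ in range(3):
    cache.append_token("request-b")  # 3 tokens need 1 block
print("block tables:", cache.block_tables)

seq_lens = [5, 3]
print("contiguous slots reserved:", contiguous_slots(seq_lens, max_len=32))
print("paged slots reserved:     ", paged_slots(seq_lens, block_size=4))
```

Because blocks are allocated only as tokens arrive and are returned as soon as a request finishes, far less memory sits reserved but unused, which is what leaves room for larger batches.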

Here is a simple example of running batched offline inference on a Mistral model with the vLLM engine:

    # Install vLLM:
    # pip install vllm
    from vllm import LLM, SamplingParams

    # A list of prompts to process in a batch
    prompts = [
        "The latest Google DeepMind News is about",
        "A key update from Stability AI News involves",
        "What is the core technology behind Amazon Bedrock?",
    ]

    # Create sampling parameters
    sampling_params = SamplingParams(temperature=0.7, top_p=0.95, max_tokens=50)

    # Initialize the vLLM engine with a Mistral model
    # vLLM automatically downloads the model from Hugging Face
    llm = LLM(model="mistralai/Mistral-7B-Instruct-v0.2")

    # Generate responses for the prompts
    outputs = llm.generate(prompts, sampling_params)

    # Print the results
    for output in outputs:
        prompt = output.prompt
        generated_text = output.outputs[0].text
        print(f"Prompt: {prompt!r}, Generated: {generated_text!r}")

Using tools like vLLM, or alternatives like NVIDIA’s Triton Inference Server with TensorRT optimization, is crucial for moving from a prototype on a platform like Google Colab to a production-ready service on AWS SageMaker or Azure Machine Learning.

Section 4: Best Practices and the Competitive Ecosystem

Effectively using Mistral models requires more than just code; it involves strategic thinking about model selection, prompt engineering, and understanding the broader ecosystem.

Choosing the Right Model

- Mistral 7B: Ideal for tasks that require high speed and low resource consumption. It’s well suited to simple classification, summarization, and constrained chatbot applications. Mistral reported that it outperforms the larger Llama 2 13B on the benchmarks it evaluated.

- Mixtral 8x7B: The workhorse model. Its SMoE architecture provides the performance of a much larger model at a fraction of the inference cost. It excels at complex reasoning, coding, and multilingual tasks. It is the go-to choice for most RAG and agent-based applications.

- Mistral Large: The flagship proprietary model, competing directly with GPT-4 and Claude 3. It offers top-tier reasoning capabilities and is available via API. This is the choice for mission-critical applications where maximum performance is non-negotiable, and it is becoming more accessible through platforms like Azure AI and Amazon Bedrock.

Prompt Engineering and Fine-Tuning

Mistral’s “Instruct” models are fine-tuned to follow instructions within a specific chat template. Always use the `tokenizer.apply_chat_template` method, as shown in the examples, to ensure your prompts are formatted correctly. For specialized tasks, fine-tuning a model like Mistral 7B on your own data can yield incredible performance. Tools like `LlamaFactory` and platforms like Weights & Biases or MLflow are invaluable for managing these fine-tuning experiments.
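To show what a chat template does, here is a hand-written approximation of the `[INST] ... [/INST]` layout used by Mistral’s Instruct models. Treat it as an assumption for illustration only: the function name is hypothetical, and the exact whitespace and special-token handling are defined by each model’s tokenizer and differ between versions, which is precisely why `tokenizer.apply_chat_template` should be used in real code.

```python
def format_mistral_instruct(messages: list[dict[str, str]]) -> str:
    """Approximate the [INST] layout: user turns are wrapped, assistant turns end with </s>."""
    prompt = "<s>"  # beginning-of-sequence token
    for index, message in enumerate(messages):
        expected_role = "user" if index % 2 == 0 else "assistant"
        if message["role"] != expected_role:
            raise ValueError("roles must alternate user/assistant, starting with user")
        if message["role"] == "user":
            prompt += f"[INST] {message['content']} [/INST]"
        else:
            prompt += f"{message['content']}</s>"
    return prompt


conversation = [
    {"role": "user", "content": "What is quantization?"},
    {"role": "assistant", "content": "Storing weights at lower precision."},
    {"role": "user", "content": "Why does it help?"},
]
print(format_mistral_instruct(conversation))
```

A prompt that skips this wrapping is still accepted by the model, but the model was fine-tuned on the wrapped form, so answers tend to degrade when the format does not match.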

The Open-Source Advantage

Mistral’s commitment to open models is its key differentiator from competitors like OpenAI and Anthropic. This openness fosters a vibrant community, accelerates innovation, and gives organizations full control over their data and infrastructure. While proprietary models offer convenience, open models provide transparency, customizability, and long-term strategic value, a trend that Meta is also advancing with its Llama models.

Conclusion: The New Era of Open and Powerful AI

Mistral AI’s rise signals more than just financial success; it validates a powerful technical vision. By combining architectural choices like SWA and SMoE with a commitment to open models, Mistral AI has given developers a capable and accessible toolkit. We’ve seen how to harness these models for everything from simple generation to complex, retrieval-augmented pipelines, and how to optimize them for efficient deployment using quantization and high-performance servers.

The key takeaway is that state-of-the-art AI is no longer confined to the APIs of a few large companies. With tools like Hugging Face, LangChain, and vLLM, developers can now build and deploy applications with reasoning capabilities that were science fiction just a few years ago, all on their own infrastructure. As the ecosystem continues to evolve, the next steps for any forward-thinking developer are clear: download a Mistral model, experiment with a RAG pipeline, and start exploring how this new level of open, accessible intelligence can transform your own projects.