Accelerating AI Development: A Deep Dive into the Fast.ai Ecosystem

In today’s rapidly evolving technological landscape, the pace of artificial intelligence innovation is breathtaking. We see a constant stream of news about smarter devices, enhanced camera systems, and AI-powered features that seem to emerge overnight. While headlines are often dominated by large-scale model releases from sources like OpenAI News or Google DeepMind News, the real magic often happens in the tooling that empowers developers to harness this power. One of the most potent, yet sometimes overlooked, libraries in this domain is Fast.ai.

Fast.ai is more than just a library; it’s a philosophy. Built on top of PyTorch, it aims to “make neural nets uncool again” by democratizing deep learning. It achieves this by providing a high-level, expressive API that encapsulates best practices, allowing developers to achieve state-of-the-art results with minimal code. However, its layered design also provides the flexibility for deep customization when needed. This article will serve as a comprehensive guide to the Fast.ai ecosystem, exploring its core concepts, advanced techniques, and its place in the modern AI stack, which includes everything from Hugging Face Transformers News to MLOps platforms like MLflow News and Weights & Biases News.

The Fast.ai Philosophy: Simplicity and Power

The core philosophy of Fast.ai is to make deep learning accessible without sacrificing performance. It achieves this through a carefully designed, layered API and a powerful, declarative system for handling data pipelines. This approach contrasts with the verbosity of raw PyTorch or the sometimes rigid structure of other frameworks, offering a unique blend of ease of use and granular control.

The Layered API: From High-Level Abstraction to Low-Level Control

Fast.ai’s architecture is built in layers, allowing you to operate at the level of abstraction that best suits your task:

- High-Level API: This is where most users start. It consists of `Learner` objects tailored for specific applications (e.g., `vision_learner`, `text_learner`). With just a few lines of code, you can load data, create a state-of-the-art model, and start training using proven techniques.

- Mid-Level API: This layer exposes the core components used by the high-level API, such as transforms, data blocks, and callbacks. It provides the tools to build custom data pipelines and modify the training loop for unique requirements.

- Low-Level API: At its base, Fast.ai is a set of optimized and extended PyTorch components. This layer gives you full control, allowing you to create new primitives and integrate them seamlessly into the Fast.ai framework. This close integration is a key reason why developments in the PyTorch News world are so relevant to Fast.ai users.

The DataBlock API: A Declarative Approach to Data Loading

One of the most significant hurdles in any deep learning project is data processing. The `DataBlock` API is Fast.ai’s elegant solution. It allows you to declaratively define the steps of your data pipeline: where to get the data, how to split it, how to label it, and what transformations to apply. This structure is incredibly flexible and makes debugging data issues significantly easier.

Practical Example: Building a State-of-the-Art Image Classifier

Let’s see how these concepts come together. Here’s how you can train a world-class pet image classifier on the popular Oxford-IIIT Pet Dataset in just a few lines of code.

# Import necessary libraries from fastai

from fastai.vision.all import *

# Download and untar the dataset

path = untar_data(URLs.PETS)

# Define the DataBlock

# 1. blocks: Specify input (ImageBlock) and output (CategoryBlock) types

# 2. get_items: Function to get all image files

# 3. splitter: How to split data into training and validation sets (RandomSplitter)

# 4. get_y: Function to extract the label from the filename

# 5. item_tfms: Transformations applied to each item (e.g., resize)

# 6. batch_tfms: Transformations applied to a batch on the GPU (e.g., data augmentation)

pets = DataBlock(blocks=(ImageBlock, CategoryBlock),

get_items=get_image_files,

splitter=RandomSplitter(seed=42),

get_y=using_attr(RegexLabeller(r'(.+)_\d+.jpg$'), 'name'),

item_tfms=Resize(460),

batch_tfms=aug_transforms(size=224, min_scale=0.75))

# Create DataLoaders from the DataBlock

dls = pets.dataloaders(path/"images")

# Create a vision learner with a pre-trained ResNet34 model

# The learner automatically downloads the model and sets up the loss function and metrics

learn = vision_learner(dls, resnet34, metrics=error_rate)

# Fine-tune the model using best practices

learn.fine_tune(2)In this short snippet, Fast.ai handles downloading the data, structuring it into training and validation sets, applying state-of-the-art data augmentation, and creating a `Learner` with a pre-trained convolutional neural network. The `fine_tune` method automatically implements best practices for transfer learning, making the process incredibly efficient.

Tailoring Fast.ai for Your Unique Problems

While the high-level API is perfect for standard tasks, real-world applications often require more customization. This is where the mid-level API shines, allowing you to adapt Fast.ai to novel problems, from audio classification to processing specialized medical imagery, and even integrating with models from the wider ecosystem, such as those highlighted in Hugging Face News.

Customizing Data Pipelines for Non-Standard Tasks

The `DataBlock` API is not limited to images. You can create custom blocks and `get_y` functions to handle virtually any data type. This flexibility is crucial for research and for building applications that go beyond simple classification. For example, you could create a pipeline for a regression task on tabular data, a segmentation task on satellite images, or, as shown below, an audio classification task.

Practical Example: A Custom DataBlock for Audio Spectrograms

Let’s imagine you want to classify urban sounds. The `fastai.audio` module provides the necessary tools. We can define a `DataBlock` to convert audio files into spectrograms (a visual representation of sound) and classify them.

# Make sure you have fastaudio installed: pip install fastaudio

from fastai.audio.all import *

# Path to your audio dataset (e.g., UrbanSound8K)

path = untar_data(URLs.URBAN8K)

# Define a custom DataBlock for audio

audio_block = DataBlock(blocks=(AudioBlock, CategoryBlock),

get_items=get_audio_files,

splitter=RandomSplitter(seed=42),

get_y=parent_label, # Labels are folder names

item_tfms=Resize(224), # Resize spectrograms

batch_tfms=[RemoveSilence(), ToSpectrogram()])

# Create DataLoaders

dls = audio_block.dataloaders(path/"audio", bs=64)

# Create an audio learner

# We use a vision model because we are classifying spectrogram images

learn = vision_learner(dls, resnet34, metrics=accuracy)

# Train the model

learn.fine_tune(3)This example demonstrates the power of Fast.ai’s composable API. We reuse the `vision_learner` because, after converting audio to spectrograms, the problem becomes an image classification task. This kind of cross-domain flexibility is a hallmark of the library’s design and is a recurring theme in modern AI, as seen in updates from Meta AI News and other leading research labs.

Unleashing Peak Performance with Advanced Training Techniques

Fast.ai isn’t just about simplicity; it’s about embedding deep learning best practices directly into the framework. It pioneered the widespread adoption of several powerful training techniques that are now considered standard practice, helping developers train models faster and achieve better accuracy. These methods are crucial for anyone looking to get the most out of their hardware, a topic frequently covered in NVIDIA AI News.

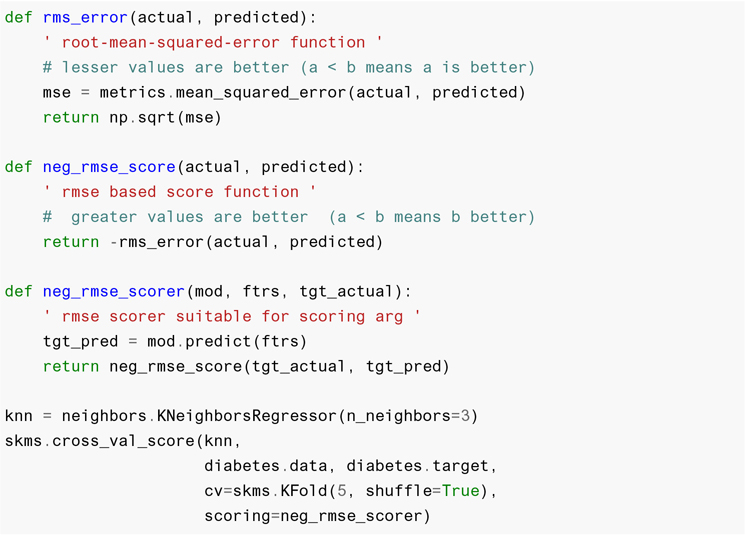

Finding the Optimal Learning Rate with `lr_find`

The learning rate is arguably the most important hyperparameter to tune. Set it too high, and the model diverges; set it too low, and training takes forever. Fast.ai provides a learning rate finder, `learn.lr_find()`, which implements the technique from Leslie Smith’s paper. It trains the model for a few iterations while gradually increasing the learning rate, then plots the loss. The optimal learning rate is typically found just before the loss starts to increase.

The One-Cycle Policy: Faster Training, Better Generalization

Instead of using a fixed or step-decaying learning rate, the one-cycle policy (`fit_one_cycle`) varies the learning rate cyclically. It starts low, increases to a maximum value (found via `lr_find`), and then decreases again. This approach acts as a form of regularization, helping the model avoid sharp minima and generalize better, often resulting in significantly faster training convergence. This is a practical application of research that makes advanced techniques accessible, similar to how frameworks like JAX News are pushing the boundaries of performance.

Practical Example: Applying Advanced Training Methods

Let’s refine our initial pet classifier by using these advanced techniques to achieve better performance.

from fastai.vision.all import *

# Setup DataLoaders as before

path = untar_data(URLs.PETS)

pets = DataBlock(blocks=(ImageBlock, CategoryBlock),

get_items=get_image_files,

splitter=RandomSplitter(seed=42),

get_y=using_attr(RegexLabeller(r'(.+)_\d+.jpg$'), 'name'),

item_tfms=Resize(460),

batch_tfms=aug_transforms(size=224, min_scale=0.75))

dls = pets.dataloaders(path/"images")

# Create the learner

learn = vision_learner(dls, resnet34, metrics=error_rate)

# 1. Use the Learning Rate Finder

# This will output a graph and suggest a learning rate

lr_steepest = learn.lr_find(suggest_funcs=(steep, valley))

print(f"Suggested learning rate: {lr_steepest.steep}")

# 2. Train using the one-cycle policy with the suggested learning rate

learn.fine_tune(5, base_lr=lr_steepest.steep)

# 3. Save the trained model

learn.save('pets-stage-1')By using `lr_find` and `fine_tune` (which uses the one-cycle policy by default), we are no longer guessing hyperparameters. We are using a systematic, proven approach to find optimal settings, leading to more robust and higher-performing models. This is a form of lightweight AutoML News, integrated directly into the training workflow.

From Development to Deployment: Best Practices and the MLOps Landscape

Training a model is only half the battle. A model’s true value is realized when it’s deployed in a production environment. Fast.ai provides tools for exporting models and integrating with the broader MLOps ecosystem, including cloud platforms like AWS SageMaker, Vertex AI, and Azure Machine Learning.

Model Export and Inference Optimization

Once you’re satisfied with your model, you need to prepare it for inference. The `learn.export()` method saves the model’s architecture and weights into a single file. This exported model can then be loaded for inference using `load_learner`, which doesn’t require the original data pipeline, making it lightweight and portable. For high-performance scenarios, you can further optimize this model by converting it to formats like ONNX News, which can then be accelerated using tools like NVIDIA’s TensorRT News or Intel’s OpenVINO News.

Practical Example: Exporting a Model for Production

Exporting a trained model is a one-line command.

# Assuming 'learn' is your trained learner object from the previous example

learn.export('pet_classifier.pkl')

# Later, in a different environment (e.g., a web server)

# You can load the model for inference without the original data

from fastai.vision.all import *

infer_learn = load_learner('pet_classifier.pkl')

# Make a prediction on a new image

prediction = infer_learn.predict('path/to/new/cat_image.jpg')

print(prediction)Best Practices for Reproducibility and Experiment Tracking

As projects grow, it becomes critical to track experiments, manage models, and ensure reproducibility. Fast.ai’s callback system makes it easy to integrate with popular MLOps tools. Callbacks like `WandbCallback` or `MLflowCallback` can automatically log metrics, hyperparameters, and model artifacts to platforms like Weights & Biases News or MLflow News. This ensures that your development process is transparent and your results are reproducible, which is essential for both individual developers and large teams.

Fast.ai in the Modern AI Stack

Fast.ai occupies a unique position in the AI landscape. While frameworks like Keras News offer similar high-level abstractions over TensorFlow, Fast.ai’s deep integration with PyTorch and its opinionated inclusion of best practices set it apart. For building complex applications, a Fast.ai model can serve as the core component of a larger system. For instance, an image classifier built with Fast.ai could be part of a RAG (Retrieval-Augmented Generation) pipeline orchestrated by tools from the LangChain News or LlamaIndex News ecosystems, where image embeddings are stored in a vector database like Pinecone News or Milvus News. This modularity makes it a valuable tool for developers building full-stack AI applications with tools like FastAPI or Streamlit News.

Conclusion: Your Fast Track to State-of-the-Art AI

In a field that moves at lightning speed, Fast.ai remains a cornerstone for practical, results-driven deep learning. It successfully bridges the gap between cutting-edge research and real-world application, providing a toolkit that is both incredibly powerful and remarkably accessible. By embedding best practices directly into its API, it empowers developers to build high-performance models for vision, text, audio, and more with unprecedented speed.

While the latest Mistral AI News or Anthropic News might capture the public’s imagination, it is frameworks like Fast.ai that enable the global community of developers to build upon these breakthroughs. Whether you are a seasoned researcher prototyping a new idea or a developer building your first AI-powered feature, Fast.ai offers a clear, efficient, and powerful path to turning your vision into reality. The next step is to dive in, explore the documentation, and start building.