Build a Real-Time Network Traffic Dashboard with Python and Streamlit

In today’s data-driven world, the ability to monitor and understand network traffic is no longer a luxury reserved for large enterprises with expensive, proprietary software. Thanks to the power and simplicity of Python, developers and network engineers can build their own custom, real-time monitoring tools. This article provides a comprehensive guide to creating a dynamic, interactive network traffic dashboard from scratch. We will leverage the packet manipulation capabilities of Scapy and the rapid web application development framework, Streamlit.

By combining these libraries, you can gain valuable insight into your network’s activity, identify potential bottlenecks, detect unusual patterns, and troubleshoot issues more quickly. This hands-on tutorial walks you through capturing raw network packets, processing them into a structured format, and visualizing the data in a live-updating web interface. Whether you’re a network professional, a cybersecurity enthusiast, or a Python developer looking to build practical applications, this guide will equip you to turn raw network data into actionable intelligence.

Understanding the Core Components: Scapy and Streamlit

Before diving into the implementation, it’s essential to understand the two primary tools we’ll be using. Scapy is our data collection engine, capturing packets directly from the network interface, while Streamlit serves as our user-friendly front-end, rendering the data in an interactive dashboard.

Scapy for Network Packet Sniffing

Scapy is a powerful Python library that lets you send, sniff, dissect, and forge network packets. It’s like a Swiss Army knife for network manipulation. Unlike tools that separate capture from analysis, Scapy lets you do both within a single Python script. Its key strength is its ability to decode a vast number of protocols and present packet data in a clean, accessible format.

To get started, you first need to install Scapy:

```bash
pip install scapy
```

Here’s a simple example that captures five packets from your network interface and prints a summary of each. Note that packet sniffing typically requires administrative or root privileges to access the network interface in promiscuous mode.

```python
from scapy.all import sniff


def packet_callback(packet):
    """
    This function is called for each captured packet.
    It prints a summary of the packet's layers.
    """
    print(packet.summary())


def main():
    """
    Starts the packet sniffer.
    """
    print("Starting packet sniffer... (Capturing 5 packets)")
    # Sniff 5 packets and call packet_callback for each one
    sniff(count=5, prn=packet_callback)
    print("Sniffing complete.")


if __name__ == "__main__":
    main()
```

Streamlit for Rapid UI Development

Streamlit is an open-source Python library that makes it simple to create and share custom web apps for machine learning and data science. Its script-like approach means you can build a functional UI with just a few lines of Python, without any front-end web development experience (HTML, CSS, or JavaScript). Frameworks like Dash or Gradio also serve this space, but Streamlit’s “write Python, get a web app” philosophy makes it especially well suited to rapid prototyping.

First, install Streamlit and other necessary libraries for our project:

```bash
pip install streamlit pandas plotly
```

A basic “Hello, World!” Streamlit app looks like this. Save it as a .py file (e.g., app.py) and run it from your terminal with `streamlit run app.py`.

```python
import time

import streamlit as st

st.title("My First Streamlit App")
st.write("Hello, Streamlit World!")

# Demonstrate a simple interactive element
if st.button("Click Me"):
    st.success("Button clicked!")

# Show how data can be displayed (one placeholder updated in place)
st.header("Displaying a counter")
placeholder = st.empty()
for i in range(10):
    placeholder.metric(label="Counter", value=i)
    time.sleep(0.5)
```

Implementation: Building the Real-Time Dashboard

Now we’ll combine Scapy and Streamlit to build our dashboard. The main challenge is that Scapy’s sniff() function is blocking, meaning it will halt the execution of the Streamlit script. To solve this, we’ll run the packet sniffer in a separate thread and use a shared data structure to pass information back to the main Streamlit thread for visualization.

Step 1: Setting Up the Packet Sniffer Thread

We’ll create a function that captures packets, extracts key information (timestamp, source IP, destination IP, protocol, length), and appends it to a shared buffer. To ensure thread safety when modifying this buffer from one thread and reading it from another, we’ll use a threading.Lock.

Our application will store captured packet data in a pandas DataFrame, which is ideal for analysis and visualization. The sniffer thread will populate a bounded deque of dictionaries, which we will periodically convert into a DataFrame in the main thread.
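The locking pattern described above can be tried out with the standard library alone before any packets are involved. In this minimal sketch, a hypothetical `fake_producer` thread stands in for the Scapy sniffer: it appends records to a bounded deque under the lock, and the consumer copies the deque while holding the lock and does any slow work only after releasing it.

```python
import threading
import time
from collections import deque

packet_data = deque(maxlen=1000)  # bounded buffer shared by both threads
data_lock = threading.Lock()


def fake_producer(n_packets: int) -> None:
    """Hypothetical stand-in for the sniffer: appends n_packets records."""
    for i in range(n_packets):
        record = {"Timestamp": time.time(), "Protocol": "TCP", "Length": 60 + i}
        with data_lock:  # hold the lock only for the append itself
            packet_data.append(record)


def take_snapshot() -> list:
    """Copy the shared buffer under the lock; process the copy afterwards."""
    with data_lock:
        return list(packet_data)


producer = threading.Thread(target=fake_producer, args=(1500,), daemon=True)
producer.start()
producer.join()

snapshot = take_snapshot()
# 1500 appends into a maxlen=1000 deque: only the newest 1000 remain
print(len(snapshot), snapshot[0]["Length"], snapshot[-1]["Length"])
```

In the dashboard, `pd.DataFrame(snapshot)` would then be built from the copy, outside the lock, so rendering never delays the capture thread.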

import streamlit as st

import pandas as pd

import plotly.express as px

from scapy.all import sniff, IP, TCP, UDP

import threading

import time

from collections import deque

# --- Global variables for thread-safe data sharing ---

# Use a deque for efficient appends and pops from both ends

packet_data = deque(maxlen=1000) # Store max 1000 packets

data_lock = threading.Lock()

stop_sniffing = threading.Event()

# --- Packet Sniffing Logic ---

def packet_callback(packet):

"""

Processes each captured packet and adds its info to the global list.

"""

if IP in packet:

timestamp = time.time()

src_ip = packet[IP].src

dst_ip = packet[IP].dst

length = len(packet)

protocol = "Other"

if TCP in packet:

protocol = "TCP"

elif UDP in packet:

protocol = "UDP"

with data_lock:

packet_data.append({

"Timestamp": timestamp,

"Source IP": src_ip,

"Destination IP": dst_ip,

"Protocol": protocol,

"Length": length

})

def start_sniffer():

"""

Starts the Scapy sniffer in a non-blocking way.

"""

# Use stop_filter to allow the sniff function to stop gracefully

sniff(prn=packet_callback, store=False, stop_filter=lambda p: stop_sniffing.is_set())

# --- Streamlit App Layout ---

st.set_page_config(layout="wide", page_title="Real-Time Network Traffic Dashboard")

st.title("Live Network Traffic Monitor")

# --- Start/Stop Controls ---

if 'sniffing' not in st.session_state:

st.session_state.sniffing = False

st.session_state.sniffer_thread = None

col1, col2 = st.columns(2)

with col1:

if st.button("Start Sniffing", disabled=st.session_state.sniffing):

st.session_state.sniffing = True

stop_sniffing.clear()

# Start sniffer in a new thread

st.session_state.sniffer_thread = threading.Thread(target=start_sniffer, daemon=True)

st.session_state.sniffer_thread.start()

st.success("Packet sniffing started!")

with col2:

if st.button("Stop Sniffing", disabled=not st.session_state.sniffing):

st.session_state.sniffing = False

stop_sniffing.set()

# Wait for the thread to finish

if st.session_state.sniffer_thread:

st.session_state.sniffer_thread.join(timeout=1)

st.warning("Packet sniffing stopped.")

# --- Real-Time Data Display ---

placeholder = st.empty()

while True:

with data_lock:

if not packet_data:

# If no data, wait a bit before trying again

time.sleep(1)

continue

# Create a DataFrame from a copy of the current packet data

df = pd.DataFrame(list(packet_data))

with placeholder.container():

# --- Key Metrics ---

total_packets = len(df)

total_volume_mb = df['Length'].sum() / (1024 * 1024)

kpi1, kpi2 = st.columns(2)

kpi1.metric(label="Total Packets Captured", value=f"{total_packets}")

kpi2.metric(label="Total Volume (MB)", value=f"{total_volume_mb:.2f}")

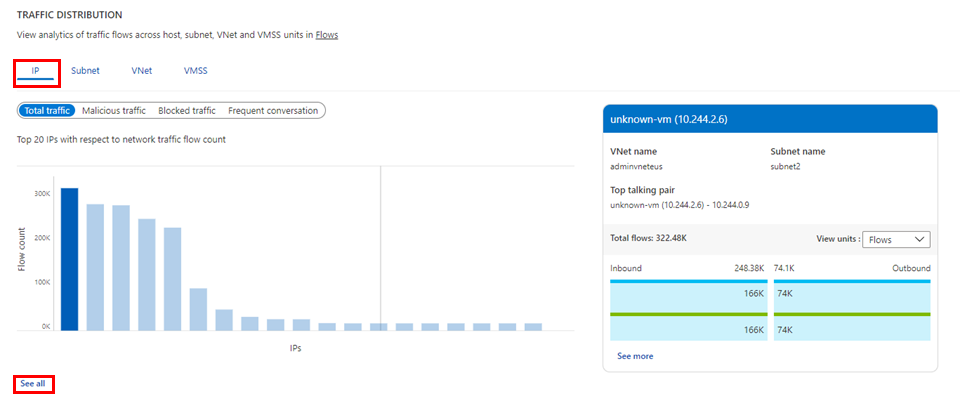

# --- Visualizations ---

st.subheader("Traffic by Protocol")

protocol_counts = df['Protocol'].value_counts().reset_index()

protocol_counts.columns = ['Protocol', 'Count']

fig_protocol = px.pie(protocol_counts, names='Protocol', values='Count', hole=.3)

st.plotly_chart(fig_protocol, use_container_width=True)

st.subheader("Traffic Over Time")

# Resample data to show packets per second

df['Timestamp'] = pd.to_datetime(df['Timestamp'], unit='s')

df.set_index('Timestamp', inplace=True)

traffic_per_second = df['Length'].resample('S').sum().reset_index()

fig_time = px.line(traffic_per_second, x='Timestamp', y='Length', labels={'Length': 'Bytes per Second'})

st.plotly_chart(fig_time, use_container_width=True)

st.subheader("Recent Packets")

st.dataframe(df.tail(10)) # Display the last 10 packets

# Refresh interval

time.sleep(2)

To run this complete application, save the code as dashboard.py and execute `streamlit run dashboard.py` in your terminal. Because packet sniffing needs elevated privileges, you may need to launch it with `sudo` (for example, `sudo streamlit run dashboard.py`) or, on Windows, from an Administrator terminal.

Advanced Techniques and Customizations

Once you have the basic dashboard running, you can enhance it with more advanced features that provide deeper insights. This is where you can integrate more complex data analysis and even machine learning models built with frameworks such as PyTorch or TensorFlow.

Adding Interactive Filters

Streamlit’s interactive widgets make it easy to add filters. For example, you can allow users to filter the displayed traffic by protocol or IP address. This helps in isolating specific conversations or troubleshooting issues related to a particular service.

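Create the filter widget once, before the `while True` loop. Streamlit widgets must be unique within a script run, so calling st.sidebar.selectbox inside the loop would raise a duplicate-widget error on the second iteration. Because packet_callback only assigns TCP, UDP, or Other, the options can be fixed up front:

```python
# ... before the 'while True' loop ...
# Add a protocol filter (created once per script run)
selected_protocol = st.sidebar.selectbox(
    "Filter by Protocol:", options=["All", "TCP", "UDP", "Other"]
)

# ... inside the 'while True' loop, after creating the DataFrame ...
if selected_protocol != "All":
    df_filtered = df[df["Protocol"] == selected_protocol]
else:
    df_filtered = df

# Use df_filtered for all subsequent charts and tables
st.subheader("Recent Packets (Filtered)")
st.dataframe(df_filtered.tail(10))
```

GeoIP Lookups for Source/Destination Mapping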

To make your dashboard more visually compelling, you can perform GeoIP lookups on the source and destination IP addresses to visualize traffic on a world map. Libraries like geoip2 can be used for this. You would first download a GeoIP database (like the free GeoLite2 database from MaxMind) and then create a function to look up the country or city for a given IP address. This data can then be plotted on a map using Plotly.
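A lookup helper could look like the minimal sketch below. It assumes a geoip2 `Reader` opened on a GeoLite2 Country database (or any object with a compatible `country(ip)` method) is passed in, and it skips private, loopback, and other non-global addresses using the standard-library ipaddress module, since those never appear in a public GeoIP database and usually make up much of a LAN capture. The helper names `is_public_ip` and `country_for` are hypothetical.

```python
import ipaddress
from typing import Optional


def is_public_ip(ip: str) -> bool:
    """True for globally routable addresses; False for private, loopback, or invalid ones."""
    try:
        return ipaddress.ip_address(ip).is_global
    except ValueError:  # not a valid IPv4/IPv6 string
        return False


def country_for(reader, ip: str) -> Optional[str]:
    """
    Return the ISO country code for ip, or None if it cannot be resolved.

    `reader` is assumed to be a geoip2.database.Reader opened on a GeoLite2
    Country database (or any object with a compatible .country() method).
    """
    if not is_public_ip(ip):
        return None
    try:
        return reader.country(ip).country.iso_code
    except Exception:  # e.g. geoip2.errors.AddressNotFoundError for unknown IPs
        return None
```

In the dashboard you might open the reader once (for example with `geoip2.database.Reader("GeoLite2-Country.mmdb")`, where the file path is an assumption) and add a column with `df["Source IP"].map(lambda ip: country_for(reader, ip))` before plotting it on a Plotly map.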

Anomaly Detection

For a more advanced security-oriented dashboard, you can implement a basic anomaly detection system. This could involve:

- Volume-based alerts: Trigger an alert using st.warning() or st.error() if the number of packets or total data volume in a short time window exceeds a predefined threshold.

- Protocol-based alerts: Flag unusual shifts in protocol distribution, such as a sudden spike in ICMP traffic, which could indicate a network scan. Note that packet_callback as written labels ICMP as “Other”, so it would need an extra branch to track ICMP separately.

- ML-powered detection: For more sophisticated analysis, you could use frameworks from Google DeepMind or Meta AI to train a model on “normal” traffic patterns and use it to flag deviations. Once trained, such models could be exported to the ONNX format for efficient inference.
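The first two alert types can be sketched with plain Python over the list of packet dictionaries the dashboard already snapshots. This is a minimal sketch, not a tuned detector: the window length and the thresholds (`max_packets`, `max_bytes`, `max_share`) are hypothetical values to adjust for your network, and `protocol_name` shows one way to label ICMP using the IP header’s protocol number (available in Scapy as `packet[IP].proto`).

```python
import time
from collections import Counter
from typing import Optional

# IANA protocol numbers for the protocols labelled explicitly
PROTO_NAMES = {1: "ICMP", 6: "TCP", 17: "UDP"}


def protocol_name(proto_number: int) -> str:
    """Map an IP protocol number (e.g. packet[IP].proto in Scapy) to a label."""
    return PROTO_NAMES.get(proto_number, "Other")


def recent(records, window_s: float, now: float) -> list:
    """Records whose Timestamp (seconds since the epoch) falls in the last window_s."""
    return [r for r in records if r["Timestamp"] >= now - window_s]


def volume_alert(records, window_s=5.0, max_packets=500, max_bytes=1_000_000,
                 now: Optional[float] = None) -> Optional[str]:
    """Return an alert message if packet count or byte volume in the window is too high."""
    now = time.time() if now is None else now
    window = recent(records, window_s, now)
    total_bytes = sum(r["Length"] for r in window)
    if len(window) > max_packets or total_bytes > max_bytes:
        return f"{len(window)} packets / {total_bytes} bytes in the last {window_s:g}s"
    return None


def protocol_share_alert(records, protocol="ICMP", window_s=5.0, max_share=0.2,
                         now: Optional[float] = None) -> Optional[str]:
    """Return an alert message if `protocol` exceeds max_share of recent packets."""
    now = time.time() if now is None else now
    window = recent(records, window_s, now)
    if not window:
        return None
    share = Counter(r["Protocol"] for r in window)[protocol] / len(window)
    if share > max_share:
        return f"{protocol} is {share:.0%} of packets in the last {window_s:g}s"
    return None
```

Inside the refresh loop, you could then call `msg = volume_alert(snapshot)` and show it with `st.error(msg)` whenever it is not None.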

Best Practices and Optimization

Building a real-time application comes with its own set of challenges, particularly around performance and stability. Following these best practices will ensure your dashboard remains responsive and reliable.

1. Use Scapy Filters for Efficient Capturing

Sniffing every single packet on a busy network can overwhelm your Python script. It’s far more efficient to filter packets at capture time using Berkeley Packet Filter (BPF) syntax, passed through sniff’s filter argument. The filter is applied below Python (in the operating system kernel or capture driver), so non-matching packets are discarded before your callback ever sees them, significantly reducing the processing load.

```python
# store=False keeps sniff() from accumulating every packet in memory
sniff(filter="tcp port 80 or tcp port 443", prn=packet_callback, store=False)
```
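If you want to assemble the filter string from settings rather than hard-coding it, a small helper like the hypothetical `build_bpf` below could be used. As an assumption about a typical setup, it also excludes Streamlit’s default port 8501 by default: when you view the dashboard from another machine, the traffic between your browser and the app would otherwise be captured and charted by the dashboard itself.

```python
from typing import Iterable, Optional

STREAMLIT_DEFAULT_PORT = 8501


def build_bpf(include_ports: Iterable[int] = (),
              exclude_ports: Iterable[int] = (STREAMLIT_DEFAULT_PORT,),
              proto: str = "tcp") -> Optional[str]:
    """
    Build a BPF expression for sniff(filter=...).

    include_ports: if given, capture only these ports for `proto`.
    exclude_ports: always drop these ports (by default Streamlit's own port).
    Returns None when no filtering is requested.
    """
    parts = []
    include = [int(p) for p in include_ports]
    if include:
        parts.append("(" + " or ".join(f"{proto} port {p}" for p in include) + ")")
    parts.extend(f"not port {int(p)}" for p in exclude_ports)
    return " and ".join(parts) or None
```

For example, `sniff(filter=build_bpf([80, 443]), prn=packet_callback, store=False)` captures web traffic only, while `build_bpf()` alone just removes the dashboard’s own port.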

2. Manage Memory Usage

Storing an unbounded number of packets will eventually consume all available memory. In our code, we used a collections.deque with a maxlen to automatically discard old packets as new ones arrive. This is a crucial technique for long-running monitoring applications.
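If you would rather keep a time window (say, the last 60 seconds) than a fixed packet count, you can keep maxlen as a hard cap and additionally prune old records from the left of the deque. A minimal sketch, assuming records are appended in timestamp order and that the caller holds data_lock while pruning; `prune_older_than` is a hypothetical helper name:

```python
import time
from collections import deque
from typing import Optional


def prune_older_than(buffer: deque, max_age_s: float, now: Optional[float] = None) -> int:
    """
    Drop records older than max_age_s from the left of `buffer`.

    Assumes records were appended in time order, so the oldest sit at the left.
    Returns the number of records removed.
    """
    now = time.time() if now is None else now
    cutoff = now - max_age_s
    removed = 0
    while buffer and buffer[0]["Timestamp"] < cutoff:
        buffer.popleft()  # O(1) removal from the left end of a deque
        removed += 1
    return removed


buf = deque(({"Timestamp": t} for t in (1.0, 2.0, 50.0, 99.0)), maxlen=1000)
removed = prune_older_than(buf, max_age_s=10, now=100.0)
print(removed, [r["Timestamp"] for r in buf])
```

Because the loop stops at the first record that is still fresh, each call only touches the expired entries instead of scanning the whole buffer.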

3. Optimize Data Refresh

Updating the entire Streamlit UI too frequently can cause flickering and high CPU usage. A refresh interval of 1-3 seconds is often sufficient for a “real-time” feel without being resource-intensive. Our example uses time.sleep(2) to control this.

4. Handle Threading Gracefully

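When the user stops the sniffing process, it’s important that the sniffer thread terminates cleanly. We used a threading.Event (stop_sniffing) as a flag. The sniff function’s stop_filter parameter evaluates this event each time a packet is captured, so sniff exits on the first packet that arrives after the event is set. On a busy network that is almost immediate, but on a quiet interface the thread keeps waiting until more traffic appears.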
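One way to avoid waiting indefinitely is to bound each blocking wait with a timeout and re-check the stop event between waits. With Scapy, that could mean a loop such as `while not stop_event.is_set(): sniff(prn=packet_callback, store=False, timeout=1)`, at the cost of briefly reopening the capture between calls, so a few packets may be missed; Scapy’s AsyncSniffer class, which has its own start() and stop() methods, is another option worth checking. The sketch below shows the general pattern with the standard library only, using a queue.Queue as a hypothetical stand-in for the packet source.

```python
import queue
import threading
import time


def worker(source: queue.Queue, stop: threading.Event, seen: list, poll_s: float = 0.1) -> None:
    """Consume items until `stop` is set, never blocking longer than poll_s at a time."""
    while not stop.is_set():
        try:
            # Bounded wait: returns an item or raises queue.Empty after poll_s
            item = source.get(timeout=poll_s)
        except queue.Empty:
            continue  # idle source; loop back and re-check the stop flag
        seen.append(item)


source: queue.Queue = queue.Queue()
stop = threading.Event()
seen: list = []
t = threading.Thread(target=worker, args=(source, stop, seen), daemon=True)
t.start()

source.put("packet-1")
time.sleep(0.3)  # give the worker time to consume the item
stop.set()       # request a stop while the source is idle
t.join(timeout=1)
print(t.is_alive(), seen)
```

The thread exits within roughly one poll interval of `stop.set()` even though no further items arrive, which is exactly the case where a per-packet stop_filter would keep waiting.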

5. Consider Deployment and Security

Packet sniffing requires elevated permissions, which poses a security risk. Never run such a tool on an untrusted network. When deploying, consider running the application in a containerized environment with restricted permissions, for example by granting only the capabilities raw capture needs (such as NET_RAW and NET_ADMIN on Linux) instead of full root access. For sharing your dashboard, Streamlit Community Cloud is an excellent option, though live packet sniffing from a cloud environment would require a more complex setup in which a local agent captures traffic and sends the processed data to the cloud-hosted app.

Conclusion and Next Steps

You have successfully learned how to build a powerful, real-time network traffic dashboard using Python, Scapy, and Streamlit. We’ve covered the entire process from capturing raw network packets and processing them in a separate thread to visualizing the data in an interactive web application. This project demonstrates the incredible synergy between specialized libraries, turning a complex task into a manageable and rewarding endeavor.

The true power of this approach lies in its extensibility. You can now build upon this foundation to create a tool perfectly tailored to your needs. Consider these next steps:

- Long-Term Storage: Integrate a database like InfluxDB or PostgreSQL to store historical traffic data for trend analysis.

- Advanced Alerting: Set up email or Slack notifications for custom-defined alert conditions.

- Deeper Packet Inspection: Extend the packet callback to extract application-layer data (e.g., HTTP headers, DNS queries) for more granular insights.

- Integrate MLOps: Use tools like MLflow or Weights & Biases to track experiments if you decide to build and iterate on anomaly detection models.
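As a starting point for long-term storage, the sketch below uses Python’s built-in sqlite3 module as a lightweight stand-in for InfluxDB or PostgreSQL; the table name `packets` and its schema are assumptions. It inserts packet dictionaries with the same keys the dashboard uses and aggregates bytes per protocol in SQL.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS packets (
    ts REAL NOT NULL,
    src_ip TEXT NOT NULL,
    dst_ip TEXT NOT NULL,
    protocol TEXT NOT NULL,
    length INTEGER NOT NULL
)
"""


def store_records(conn: sqlite3.Connection, records) -> None:
    """Insert packet dicts (same keys as the dashboard's records) in one transaction."""
    rows = [
        (r["Timestamp"], r["Source IP"], r["Destination IP"], r["Protocol"], r["Length"])
        for r in records
    ]
    with conn:  # commits on success, rolls back on error
        conn.executemany("INSERT INTO packets VALUES (?, ?, ?, ?, ?)", rows)


def bytes_by_protocol(conn: sqlite3.Connection) -> dict:
    """Total bytes per protocol across everything stored so far."""
    cur = conn.execute("SELECT protocol, SUM(length) FROM packets GROUP BY protocol")
    return dict(cur.fetchall())


conn = sqlite3.connect(":memory:")  # use a file path for persistent storage
conn.execute(SCHEMA)
store_records(conn, [
    {"Timestamp": 1.0, "Source IP": "10.0.0.2", "Destination IP": "8.8.8.8", "Protocol": "UDP", "Length": 80},
    {"Timestamp": 2.0, "Source IP": "10.0.0.2", "Destination IP": "1.1.1.1", "Protocol": "TCP", "Length": 1500},
    {"Timestamp": 3.0, "Source IP": "1.1.1.1", "Destination IP": "10.0.0.2", "Protocol": "TCP", "Length": 60},
])
print(bytes_by_protocol(conn))
```

Because each dashboard refresh snapshots overlapping records, each packet should be written once (for example from a dedicated writer thread) rather than re-inserting every snapshot; by default a sqlite3 connection should also be used only from the thread that created it.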

By combining the simplicity of Streamlit with the power of network analysis libraries, you are well-equipped to unlock deep insights from your network data, moving from passive observation to active, intelligent monitoring.