DataRobot and NVIDIA: Supercharging Enterprise AI with GPU-Accelerated AutoML and MLOps

The artificial intelligence landscape is evolving rapidly. Enterprises are no longer just experimenting with AI; they are actively deploying it to solve critical business problems, from fraud detection and supply chain optimization to customer churn prediction and generative AI applications. This shift from experimentation to production has exposed a significant challenge: the immense computational power required to train, optimize, and serve state-of-the-art models at scale. Recent developments, highlighted by strategic collaborations like the one between DataRobot and NVIDIA, underscore a pivotal trend: the fusion of automated machine learning (AutoML) platforms with high-performance GPU hardware and software is becoming the cornerstone of enterprise-ready AI. This synergy promises to democratize access to powerful AI, reduce time-to-value, and unlock new frontiers of innovation.

This article delves into the technical implications of this powerful combination. We will explore how GPU acceleration is transforming the capabilities of platforms like DataRobot, examine practical code examples for leveraging these integrated systems, and discuss the advanced techniques and best practices necessary to build and deploy robust, scalable, and efficient AI solutions in the modern enterprise. From traditional machine learning to the latest in generative AI, the integration of specialized hardware and sophisticated MLOps platforms is setting a new standard for what’s possible.

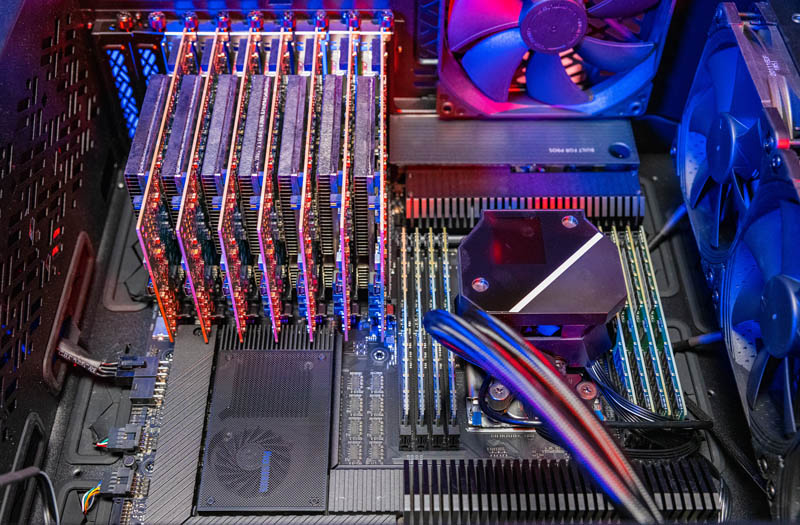

The Core Synergy: AutoML Meets Accelerated Computing

At its heart, the collaboration between a platform like DataRobot and a hardware leader like NVIDIA is about removing friction from the AI lifecycle. AutoML platforms excel at automating the repetitive, time-consuming tasks of model building: feature engineering, algorithm selection, hyperparameter tuning, and model validation. NVIDIA’s GPUs and software ecosystem, including CUDA, cuDNN, and higher-level tools, provide the raw power to execute these tasks orders of magnitude faster than traditional CPU-based infrastructures.

Understanding the Technical Stack

The power of this integration lies in a deeply integrated stack:

- Application Layer (DataRobot): This is the user-facing platform that provides the AutoML and MLOps capabilities. It orchestrates the entire workflow, from data ingestion to model deployment and monitoring. This is where a data scientist or ML engineer interacts with the system.

- Software Acceleration Layer (NVIDIA AI Enterprise): This is a suite of software that optimizes AI workloads for NVIDIA GPUs. Key components relevant here include TensorRT for optimizing model inference, and Triton Inference Server for deploying models at scale with high throughput. This layer ensures that models built with popular frameworks like TensorFlow, PyTorch, or JAX are run in the most efficient way possible on the underlying hardware.

- Hardware Layer (NVIDIA GPUs): The foundation of the stack, providing the parallel processing power necessary for training deep learning models and handling large-scale data transformations.

For a developer, interacting with this stack is often abstracted through a client library. The DataRobot Python client, for instance, allows you to programmatically control the entire platform, from starting a project to deploying a model, without needing to manage the underlying Kubernetes clusters or GPU drivers directly.

Connecting and Initiating an AutoML Project

Let’s start with a foundational code example. The first step in any project is to connect to the DataRobot platform and set up a new project. This script demonstrates how to use the `datarobot` Python client to connect via an API token, upload a dataset, and kick off the automated modeling process, specifying a target variable.

```python
import os

import datarobot as dr
import pandas as pd

# --- Configuration ---
# Best practice: keep credentials in environment variables, for example:
#   export DATAROBOT_API_TOKEN='YOUR_API_TOKEN'
#   export DATAROBOT_ENDPOINT='https://app.datarobot.com/api/v2'
API_TOKEN = os.environ.get('DATAROBOT_API_TOKEN', 'YOUR_API_TOKEN')
ENDPOINT = os.environ.get(
    'DATAROBOT_ENDPOINT', 'https://app.datarobot.com/api/v2'
)  # or your dedicated endpoint

# --- Connect to DataRobot ---
print("Connecting to DataRobot...")
dr.Client(token=API_TOKEN, endpoint=ENDPOINT)

# --- Prepare and Upload Data ---
# Toy dataset for a classification problem (e.g., customer churn).
# It only illustrates the expected shape; a real project needs far more rows.
data = {
    'tenure': [12, 24, 1, 45, 30, 5, 60, 35],
    'monthly_charges': [50.5, 75.2, 20.0, 90.8, 80.1, 30.5, 105.0, 85.5],
    'total_charges': [600.0, 1800.5, 20.0, 4000.0, 2400.3, 150.0, 6300.0, 3000.0],
    'churn': [0, 0, 1, 0, 0, 1, 0, 1]
}
df = pd.DataFrame(data)
dataset_name = 'customer_churn_demo.csv'
df.to_csv(dataset_name, index=False)

print(f"Uploading dataset: {dataset_name}")
project = dr.Project.create(
    sourcedata=dataset_name,
    project_name='Customer Churn Prediction Demo'
)
print(f"Project '{project.project_name}' created with ID: {project.id}")

# --- Run AutoML ---
# DataRobot now analyzes the data, engineers features, and trains many models.
# On a GPU-enabled instance, this process can be significantly faster.
print("Starting Autopilot modeling process...")
project.set_target(
    target='churn',
    mode=dr.AUTOPILOT_MODE.FULL_AUTO,
    worker_count=-1  # Use all available workers
)

# You can monitor the project progress in the UI or via the API
project.wait_for_autopilot()
print("Autopilot completed!")
```

In this example, setting `worker_count=-1` tells DataRobot to use all available workers. In an environment powered by NVIDIA GPUs, this means the platform can train multiple models in parallel, which can drastically reduce the time it takes to get from raw data to a leaderboard of high-performing models.

Implementation: From GPU-Accelerated Training to Optimized Deployment

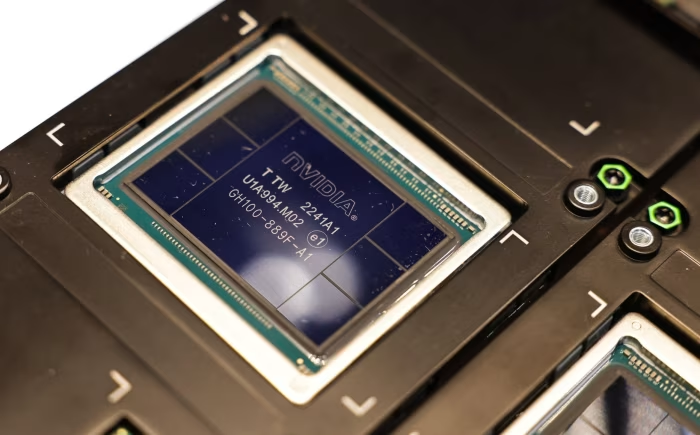

Once the AutoML process completes, you are presented with a leaderboard of models, ranked by a chosen metric (e.g., LogLoss, AUC). The next critical steps are to understand the best model, prepare it for production, and deploy it. This is where the integration with tools like NVIDIA Triton Inference Server becomes invaluable for achieving enterprise-grade performance.
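The ranking step itself can be sketched without the platform. The snippet below is a minimal illustration, assuming each leaderboard entry is a plain dictionary with a nested `metrics` mapping; the model names and scores are made up for the example, and `rank_models` is a hypothetical helper, not part of the DataRobot client. The point it makes is that "best" depends on the metric's direction: LogLoss is minimized, AUC is maximized.

```python
# Metrics where a smaller value means a better model (illustrative subset).
LOWER_IS_BETTER = {"LogLoss", "RMSE", "MAE"}


def rank_models(models, metric, partition="validation"):
    """Return the models sorted best-first for the given metric."""
    # Skip entries that have no score for this metric or partition.
    scored = [
        m for m in models
        if m["metrics"].get(metric, {}).get(partition) is not None
    ]
    reverse = metric not in LOWER_IS_BETTER  # e.g. AUC: higher is better
    return sorted(
        scored, key=lambda m: m["metrics"][metric][partition], reverse=reverse
    )


# Hypothetical leaderboard entries with made-up scores.
leaderboard = [
    {"model_type": "Elastic-Net Classifier",
     "metrics": {"LogLoss": {"validation": 0.412}, "AUC": {"validation": 0.861}}},
    {"model_type": "Light Gradient Boosted Trees",
     "metrics": {"LogLoss": {"validation": 0.388}, "AUC": {"validation": 0.874}}},
    {"model_type": "Keras Neural Network",
     "metrics": {"LogLoss": {"validation": None}, "AUC": {"validation": None}}},
]

best_by_logloss = rank_models(leaderboard, "LogLoss")[0]
best_by_auc = rank_models(leaderboard, "AUC")[0]
print(best_by_logloss["model_type"], "|", best_by_auc["model_type"])
```

Sorting explicitly by a named metric avoids relying on whatever order a leaderboard happens to be returned in.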

Selecting and Deploying the Best Model

The DataRobot platform not only trains models but also provides deep insights into their behavior through features like SHAP explainability, feature impact, and lift charts. Programmatically, you can retrieve the best model and deploy it to a dedicated prediction server with a few lines of code. Behind the scenes, DataRobot’s MLOps capabilities handle the containerization, scaling, and endpoint creation. When integrated with NVIDIA’s software, this deployment can be automatically optimized for GPU inference.

```python
import datarobot as dr
import pandas as pd

# Assume 'project' is the project object from the previous step.
# If running this script separately, connect and fetch the project first:
# dr.Client(token=API_TOKEN, endpoint=ENDPOINT)
# project = dr.Project.get('YOUR_PROJECT_ID')

# --- Get the Best Model from the Leaderboard ---
print("Fetching the model leaderboard...")
leaderboard = project.get_models()

# The leaderboard is sorted by the project metric, so the first model is usually the best
best_model = leaderboard[0]
print(
    f"Best model found: {best_model.model_type} "
    f"with validation score: {best_model.metrics['LogLoss']['validation']}"
)

# --- Deploy the Model ---
# This creates a scalable REST API endpoint for the model.
# DataRobot MLOps handles the underlying infrastructure, which can be
# GPU-powered for low-latency predictions.
print("Deploying the best model...")
prediction_server = dr.PredictionServer.list()[0]  # Get the default prediction server

deployment = dr.Deployment.create_from_learning_model(
    model_id=best_model.id,
    label='Churn Production Model',
    description='API for predicting customer churn',
    default_prediction_server_id=prediction_server.id
)
print(f"Deployment created with ID: {deployment.id}")
print(f"Prediction server URL: {prediction_server.url}")

# --- Make Predictions ---
# Prepare some new data for scoring
new_data = pd.DataFrame({
    'tenure': [3, 50],
    'monthly_charges': [45.0, 95.5],
    'total_charges': [135.0, 4750.0]
})

# The Batch Prediction API can score a pandas DataFrame directly;
# score_pandas returns the job and the scored DataFrame.
print("\nMaking predictions on new data...")
job, predictions = dr.BatchPredictionJob.score_pandas(deployment.id, new_data)
print(predictions)
```

The beauty of this workflow is its simplicity. Complex processes like model containerization, REST API generation, and resource management are handled automatically. For organizations leveraging platforms like AWS SageMaker or Azure Machine Learning, DataRobot can integrate with these environments, ensuring that models are deployed within an existing cloud governance framework while still benefiting from GPU acceleration.
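Applications that do not use the Python client typically call the deployment over HTTP. The sketch below only builds such a request and does not send it. The URL path, the `DataRobot-Key` header, and the JSON payload shape are assumptions based on the commonly documented real-time prediction route, so check them against your own installation; `build_prediction_request` and every value passed to it are hypothetical.

```python
import json
import urllib.request


def build_prediction_request(server_url, deployment_id, api_token, rows,
                             datarobot_key=None):
    """Build (but do not send) a POST request for real-time scoring.

    Assumed route: <server>/predApi/v1.0/deployments/<id>/predictions
    """
    url = (
        f"{server_url.rstrip('/')}/predApi/v1.0/"
        f"deployments/{deployment_id}/predictions"
    )
    headers = {
        "Authorization": f"Bearer {api_token}",
        "Content-Type": "application/json; charset=UTF-8",
    }
    if datarobot_key:
        # Assumed to be required only on some managed installations.
        headers["DataRobot-Key"] = datarobot_key
    body = json.dumps(rows).encode("utf-8")
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


# Placeholder values; none of these identify a real server or deployment.
request = build_prediction_request(
    server_url="https://example.invalid",
    deployment_id="abc123",
    api_token="YOUR_API_TOKEN",
    rows=[{"tenure": 3, "monthly_charges": 45.0, "total_charges": 135.0}],
)
print(request.get_method(), request.full_url)
```

Sending the request would then be a matter of passing it to `urllib.request.urlopen` (or an equivalent HTTP client) and parsing the JSON response.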

Advanced Techniques: Custom Models and Generative AI Workflows

While AutoML is powerful for structured data problems, enterprises are increasingly adopting custom models from the open-source ecosystem, especially for unstructured data and generative AI tasks. The latest advancements in MLOps platforms focus on providing a governed, scalable environment for these custom models, bridging the gap between open-source flexibility and enterprise requirements.

Integrating a Hugging Face Transformer Model

Imagine you want to deploy a custom sentiment analysis model from Hugging Face. An advanced MLOps platform allows you to bring this model into its managed environment. You can package the model with its dependencies, define a standard inference schema, and deploy it just like an AutoML model. This approach provides centralized monitoring, governance, and data drift detection for all models, regardless of their origin.

The process often involves creating a custom model environment with a `start_server.sh` script and a Python hook for predictions. Here is a conceptual example of the Python prediction code you might package in a custom model artifact. This code would be part of a larger package uploaded to the platform.

```python
import os

import pandas as pd
import torch
from transformers import pipeline


class CustomHuggingFaceModel:
    def __init__(self):
        """
        Loads the model into memory. This is called once when the model is deployed.
        The platform can provision a GPU for this environment.
        """
        # Check if a GPU is available and use it
        device = 0 if torch.cuda.is_available() else -1
        print(f"Using device: {'GPU' if device == 0 else 'CPU'}")

        # Load a pre-trained sentiment analysis model from Hugging Face.
        # The model files would be packaged in the custom model artifact.
        model_path = os.environ.get(
            "MODEL_PATH", "distilbert-base-uncased-finetuned-sst-2-english"
        )
        self.sentiment_pipeline = pipeline(
            "sentiment-analysis",
            model=model_path,
            device=device
        )

    def predict(self, data):
        """
        Makes predictions on incoming data.
        'data' is a pandas DataFrame.
        """
        # The platform expects a DataFrame and will return one
        if not isinstance(data, pd.DataFrame):
            raise ValueError("Input data must be a pandas DataFrame")

        # The first column is assumed to hold the text to classify
        text_column = data.columns[0]
        texts = data[text_column].tolist()

        # Run inference
        predictions = self.sentiment_pipeline(texts)

        # Format the output to match platform requirements
        labels = [p['label'] for p in predictions]
        scores = [p['score'] for p in predictions]
        result_df = pd.DataFrame({
            'text': texts,
            'predicted_label': labels,
            'confidence_score': scores
        })
        return result_df


# This part is for local testing and would not be called by the platform directly
if __name__ == '__main__':
    model = CustomHuggingFaceModel()
    test_data = pd.DataFrame({'review_text': [
        "This product is absolutely fantastic! I highly recommend it.",
        "I am very disappointed with the quality and customer service."
    ]})
    predictions = model.predict(test_data)
    print(predictions)
```

By deploying this custom model through a platform like DataRobot, you gain immense benefits. The platform can leverage NVIDIA Triton Inference Server with TensorRT optimization to serve the model with extremely low latency. This is crucial for real-time applications. Furthermore, all prediction requests and responses are logged, enabling automatic drift detection and performance monitoring, features often missing in bespoke deployment scripts.

This same pattern applies to the latest generative AI models from sources like OpenAI, Cohere, or Mistral AI. You can create custom “connectors” or use platform-native integrations to build applications using Retrieval-Augmented Generation (RAG). In a RAG workflow, GPU acceleration is critical not just for the LLM inference but also for the vector database lookups, which often rely on GPU-accelerated libraries like FAISS. The entire pipeline, from vectorizing documents with Sentence Transformers to querying a vector store like Pinecone or Milvus, can be orchestrated and monitored within the MLOps environment.
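The retrieval half of a RAG workflow can be illustrated with a toy, dependency-free sketch. It is a stand-in only: the bag-of-words `embed` function replaces a real embedding model such as Sentence Transformers, the linear scan replaces a vector index such as FAISS, and the documents are invented. All function names here are hypothetical.

```python
import math
from collections import Counter


def embed(text):
    """Toy 'embedding': a bag-of-words count vector (stand-in for a real model)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query (brute-force scan)."""
    q = embed(query)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query, documents, k=2):
    """Assemble the retrieved context and the question into one LLM prompt."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


docs = [
    "Refunds are processed within five business days.",
    "GPU workers are billed per hour of use.",
    "Churn models are retrained every month.",
]
top = retrieve("how are gpu workers billed", docs, k=1)
prompt = build_prompt("how are gpu workers billed", docs, k=1)
print(prompt)
```

In a production pipeline the similarity search is the part that benefits from a GPU-accelerated index, while the prompt assembly step stays essentially the same.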

Best Practices for Optimization and Governance

Leveraging a GPU-accelerated AutoML and MLOps platform is not just about speed; it’s about building a sustainable, governed, and efficient AI practice. Here are some key best practices to consider:

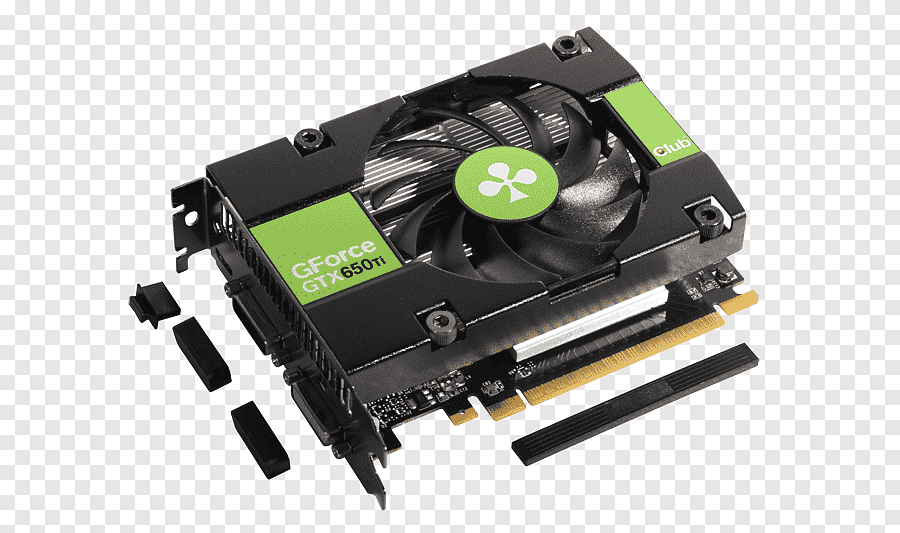

1. Right-Size Your Compute

While GPUs offer incredible speed, they are a premium resource. Use them strategically. For initial data exploration and training of simpler models (e.g., Elastic-Net, LightGBM), CPU-based workers may be more cost-effective. Reserve GPU-powered workers for training deep learning models (e.g., using Keras or PyTorch backends) and for deploying models that require low-latency inference.
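The trade-off reduces to simple arithmetic: a GPU worker is cheaper for a job only when its speedup exceeds the ratio of GPU to CPU hourly price. The sketch below works through that rule with hypothetical rates and speedups; none of the numbers are measured or quoted prices.

```python
def job_cost(cpu_hours, hourly_rate, speedup=1.0):
    """Cost of a job that takes `cpu_hours` on CPU, run on a worker `speedup`x faster."""
    return cpu_hours / speedup * hourly_rate


def cheaper_on_gpu(cpu_hours, cpu_rate, gpu_rate, gpu_speedup):
    """True when the GPU run costs less than the CPU run for the same job."""
    return job_cost(cpu_hours, gpu_rate, gpu_speedup) < job_cost(cpu_hours, cpu_rate)


# Hypothetical prices: the GPU worker costs 6x the CPU worker per hour.
CPU_RATE = 0.50   # currency units per hour (assumed)
GPU_RATE = 3.00   # currency units per hour (assumed)

# A gradient-boosting job with a modest 2x GPU speedup (assumed).
boosting_on_gpu = cheaper_on_gpu(10, CPU_RATE, GPU_RATE, gpu_speedup=2)

# A deep learning job with a 20x GPU speedup (assumed).
deep_learning_on_gpu = cheaper_on_gpu(10, CPU_RATE, GPU_RATE, gpu_speedup=20)

print(boosting_on_gpu, deep_learning_on_gpu)
```

With these assumed figures the break-even speedup is 6x, so the 2x job stays on CPU and the 20x job moves to GPU. Latency requirements can still justify a GPU even when the cost comparison does not.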

2. Embrace MLOps from Day One

Don’t treat deployment and monitoring as an afterthought. Use the integrated MLOps capabilities to track model versions, monitor for data drift and accuracy degradation, and set up automated retraining triggers. Tools like MLflow and Weights & Biases have championed this experiment-tracking and model-registry approach, which is now a core component of enterprise-grade platforms.
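To make data drift concrete, here is a minimal sketch of the Population Stability Index (PSI), one common drift statistic. It is shown as an illustration of the idea, not as the exact calculation any particular platform performs; the `psi` helper, the bin count, and the sample data are assumptions.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a training sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the training range fall into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


training_tenure = list(range(100))                    # feature values at training time
live_same = list(range(100))                          # live traffic, unchanged
live_shifted = [v + 40 for v in training_tenure]      # live traffic, shifted upward

print(psi(training_tenure, live_same), psi(training_tenure, live_shifted))
```

A frequently quoted rule of thumb treats PSI above roughly 0.25 as significant drift, which is the kind of threshold that could feed a retraining trigger.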

3. Standardize on a Flexible Architecture

Your platform should be able to handle both AutoML models and custom-built models. This hybrid approach provides the best of both worlds: rapid development for standard problems using AutoML, and the flexibility to incorporate cutting-edge open-source models for specialized tasks. Standardizing deployment through a single platform simplifies security, governance, and cost management.

4. Optimize for Inference

Training is only half the battle. Inference is where the model delivers value, and it often runs 24/7. Use tools like ONNX (Open Neural Network Exchange) to create a portable model format, and then leverage inference optimizers like NVIDIA TensorRT to run models at reduced precision (e.g., FP16 or INT8) and fuse adjacent layers. Depending on the model and hardware, this can lead to a 5-10x improvement in inference speed and a significant reduction in computational cost, all while being managed by a serving engine like Triton.
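The core idea behind INT8 quantization can be shown in a few lines. This is a simplified sketch of symmetric per-tensor quantization on a made-up weight list; it is not how TensorRT calibrates or executes a model, and the helper names are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # avoid a zero scale
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.05, 0.0, 0.64]  # illustrative values only
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(q, scale, max_error)
```

Each weight now fits in one byte instead of four, and the rounding error per weight is bounded by half the scale. Whether that loss of precision is acceptable has to be validated against the model's accuracy on held-out data.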

Conclusion: The Future of Enterprise AI is Integrated and Accelerated

The convergence of advanced AutoML platforms like DataRobot with the hardware and software ecosystem from NVIDIA represents a fundamental maturation of the enterprise AI market. It signals a move away from fragmented, do-it-yourself toolchains toward integrated, reliable, and incredibly powerful systems. For developers and data scientists, this means less time spent on infrastructure management and more time focused on solving business problems. For organizations, it means a faster, more scalable, and more governable path to realizing the transformative potential of AI.

By leveraging GPU-accelerated training, developers can iterate on models more quickly. By deploying on optimized inference servers, they can serve predictions with the speed and reliability that modern applications demand. And by managing the entire lifecycle through a unified MLOps platform, they can ensure that their AI investments are robust, transparent, and aligned with business objectives. As AI continues to evolve, particularly with the rise of generative models, this powerful synergy between intelligent software and accelerated hardware will be the engine that drives the next wave of enterprise innovation.