From Data to Deployment: Building and Serving a Custom LLM with Python and FastAPI

The artificial intelligence landscape is no longer dominated solely by massive, general-purpose models from large tech corporations. A powerful new trend is emerging: the creation of smaller, specialized Large Language Models (LLMs) trained on domain-specific data. These “homegrown” models can outperform general-purpose models on niche tasks, give teams greater control over data privacy, and often cost far less to operate. By fine-tuning open-source models on curated datasets, developers can build efficient AI systems tailored to their own needs.

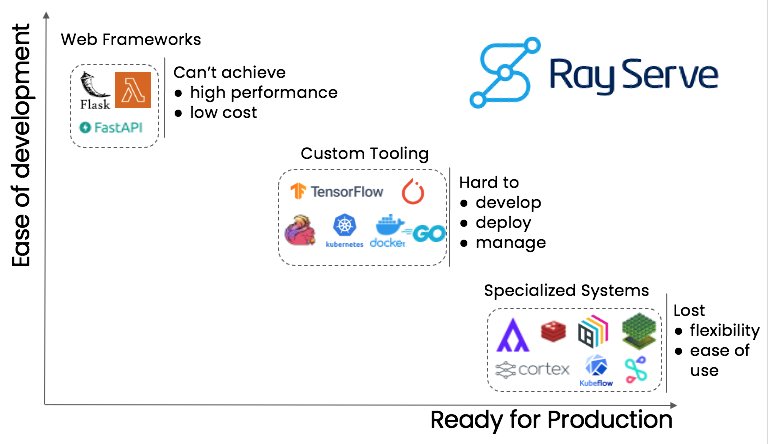

However, building a model is only half the battle. To be truly useful, it must be deployed as a robust, scalable, and accessible service. This is where modern machine learning libraries and web frameworks work together. FastAPI, a high-performance framework for building APIs with Python, has rapidly become a favorite for deploying ML models. Its asynchronous request handling, automatic documentation, and Pydantic-based data validation make it well suited to production inference endpoints. This article walks through the entire lifecycle: fine-tuning a base LLM on custom data, optimizing it for efficient inference, and deploying it as an API using FastAPI.

The Foundation: Fine-Tuning a Model on Custom Data

The first step in creating a specialized LLM is to select a powerful, open-source base model and fine-tune it on a dataset relevant to your target domain. This process adapts the model’s general knowledge to the specific vocabulary, style, and patterns of your data, resulting in significantly better performance on specialized tasks.

Choosing a Base Model and Preparing Data

The AI community, fueled by releases from organizations such as Meta AI and Mistral AI, offers a wide range of openly available models. The Hugging Face Hub is the primary resource for finding models like Mistral 7B, Llama 3, or smaller, more efficient models like Phi-3 or Gemma. The choice of model usually comes down to a trade-off between output quality and the compute and memory you can afford.

For our example, let’s imagine we’ve scraped a dataset of technology discussions (similar to Hacker News). The goal is to create a model that can generate insightful, context-aware comments about tech topics. The data needs to be preprocessed and formatted into a suitable structure for training, often a simple text file or a structured dataset format like JSON Lines.
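As a rough sketch of that formatting step, the snippet below cleans a few scraped records and writes them as JSON Lines with a single `text` field per line. The record layout (`title`, `comment`), the helper names, and the output location are hypothetical; adapt them to however your own scrape is stored.

```python
import json
import re
import tempfile
from pathlib import Path

# Hypothetical scraped records; a real scrape would supply many more.
raw_records = [
    {"title": "Show HN: A tiny key-value store",
     "comment": "Nice work!   How does it handle\ncompaction?"},
    {"title": "Rust 2024 edition", "comment": ""},  # empty comment, skipped below
    {"title": "Why SQLite is enough",
     "comment": "We run it in production   and it has been solid."},
]


def normalize(text):
    """Collapse runs of whitespace (including newlines) into single spaces."""
    return re.sub(r"\s+", " ", text).strip()


def to_training_text(record):
    """Join title and comment into one training string, or None if unusable."""
    comment = normalize(record["comment"])
    if not comment:
        return None
    return f"Title: {normalize(record['title'])}\nComment: {comment}"


def write_jsonl(records, path):
    """Write one JSON object per line and return how many lines were written."""
    written = 0
    with Path(path).open("w", encoding="utf-8") as f:
        for record in records:
            text = to_training_text(record)
            if text is None:
                continue
            f.write(json.dumps({"text": text}, ensure_ascii=False) + "\n")
            written += 1
    return written


# Written to the system temp directory here purely for illustration.
output_path = Path(tempfile.gettempdir()) / "hacker_news_dataset.jsonl"
count = write_jsonl(raw_records, output_path)
print(f"Wrote {count} examples to {output_path}")
```

Keeping every example under one `text` key matches what the tokenization step in the fine-tuning script reads from each row.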

The Fine-Tuning Process with Hugging Face

The transformers library from Hugging Face provides a high-level API for fine-tuning. The process typically involves using the Trainer API, which handles the complexities of the training loop, optimization, and evaluation. Underneath sits a deep learning framework such as PyTorch or TensorFlow; the examples in this article use PyTorch.

Here’s a conceptual example of how you might set up a fine-tuning script using the transformers library. This snippet outlines the key components needed to start the process.

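    import torch
    from datasets import load_dataset
    from peft import LoraConfig, get_peft_model, prepare_model_for_kbit_training
    from transformers import (
        AutoModelForCausalLM,
        AutoTokenizer,
        BitsAndBytesConfig,
        DataCollatorForLanguageModeling,
        Trainer,
        TrainingArguments,
    )

    # 1. Configuration
    model_name = "mistralai/Mistral-7B-v0.1"
    dataset_path = "path/to/your/hacker_news_dataset.jsonl"
    output_dir = "./results/fine-tuned-mistral-hn"

    # 2. Load Tokenizer and Model
    # Use 4-bit quantization to reduce memory usage during training
    quantization_config = BitsAndBytesConfig(
        load_in_4bit=True,
        bnb_4bit_quant_type="nf4",
        bnb_4bit_compute_dtype=torch.bfloat16,
    )

    tokenizer = AutoTokenizer.from_pretrained(model_name)

    # Reuse the end-of-sequence token for padding if no pad token exists;
    # this avoids resizing the embeddings of a quantized model
    if tokenizer.pad_token is None:
        tokenizer.pad_token = tokenizer.eos_token

    model = AutoModelForCausalLM.from_pretrained(
        model_name,
        quantization_config=quantization_config,
        device_map="auto",  # Automatically distribute model across GPUs
    )

    # A 4-bit model cannot be trained directly because its quantized weights
    # stay frozen. Attach small trainable LoRA adapters instead (the QLoRA
    # approach; requires the peft package). The LoRA hyperparameters below are
    # illustrative starting points, not tuned values.
    model = prepare_model_for_kbit_training(model)
    lora_config = LoraConfig(
        r=16,
        lora_alpha=32,
        lora_dropout=0.05,
        target_modules=["q_proj", "k_proj", "v_proj", "o_proj"],
        task_type="CAUSAL_LM",
    )
    model = get_peft_model(model, lora_config)

    # 3. Load and Process Dataset (each JSON line needs a "text" field)
    dataset = load_dataset("json", data_files=dataset_path, split="train")

    def tokenize_function(examples):
        return tokenizer(examples["text"], truncation=True, max_length=512)

    tokenized_dataset = dataset.map(
        tokenize_function, batched=True, remove_columns=dataset.column_names
    )

    # Pads each batch and copies input_ids into labels for causal LM training
    data_collator = DataCollatorForLanguageModeling(tokenizer=tokenizer, mlm=False)

    # 4. Set Training Arguments
    training_args = TrainingArguments(
        output_dir=output_dir,
        num_train_epochs=1,
        per_device_train_batch_size=4,
        gradient_accumulation_steps=2,
        learning_rate=2e-4,
        logging_dir="./logs",
        logging_steps=50,
        save_steps=500,
        bf16=True,  # Mixed precision; matches bnb_4bit_compute_dtype above
    )

    # 5. Initialize Trainer
    trainer = Trainer(
        model=model,
        args=training_args,
        train_dataset=tokenized_dataset,
        data_collator=data_collator,
    )

    # 6. Start Fine-Tuning
    print("Starting fine-tuning...")
    trainer.train()

    # 7. Save the fine-tuned model
    # With LoRA this writes only the adapter weights. Recent transformers
    # releases can load such a directory directly when peft is installed;
    # alternatively, merge the adapter into the base model before saving.
    print("Saving the final model...")
    model.save_pretrained(output_dir)
    tokenizer.save_pretrained(output_dir)

Throughout this process, experiment-tracking tools such as Weights & Biases or MLflow are invaluable for logging runs, visualizing metrics, and managing model artifacts.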
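Returning to the training arguments for a moment: `per_device_train_batch_size` and `gradient_accumulation_steps` together determine how many examples contribute to each optimizer update. The sketch below works through that arithmetic. The dataset size and the single-GPU setup are made-up values for illustration, and the step count is an approximation, since exact rounding depends on the trainer implementation.

```python
import math


def effective_batch_size(per_device_batch_size, gradient_accumulation_steps, num_devices=1):
    """Number of examples that contribute to one optimizer update."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


def approx_optimizer_steps_per_epoch(num_examples, per_device_batch_size,
                                     gradient_accumulation_steps, num_devices=1):
    """Approximate optimizer updates needed for one pass over the data."""
    batch = effective_batch_size(
        per_device_batch_size, gradient_accumulation_steps, num_devices
    )
    return math.ceil(num_examples / batch)


# Batch settings from the training arguments; 10,000 examples is hypothetical.
batch = effective_batch_size(per_device_batch_size=4, gradient_accumulation_steps=2)
steps = approx_optimizer_steps_per_epoch(10_000, 4, 2)
print(f"Effective batch size: {batch}, optimizer steps per epoch: about {steps}")
```

With these numbers, a `save_steps` value of 500 would produce roughly two intermediate checkpoints per epoch, which is a useful sanity check before starting a long run.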

Optimization: Preparing the Model for Efficient Inference

A fine-tuned model, especially a large one, is often too slow and memory-intensive for direct deployment in a production environment. Optimization is a critical intermediate step to reduce the model’s size and increase its inference speed without significantly sacrificing accuracy.

The Power of Quantization

Quantization is a popular technique that reduces the precision of the model’s weights, typically from 16- or 32-bit floating-point numbers (FP16/BF16 or FP32) to 8-bit integers (INT8) or even 4-bit formats (INT4). This drastically reduces the model’s memory footprint and can lead to significant speedups, especially on hardware with native low-precision support. Libraries like bitsandbytes and AutoGPTQ, and toolkits such as OpenVINO and TensorRT, provide tools for post-training quantization.
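To put rough numbers on this, the sketch below estimates the weight memory of a hypothetical 7-billion-parameter model at several precisions and then runs a toy symmetric (absmax) INT8 round trip on a handful of values. It is a simplified illustration, not how bitsandbytes or any particular library implements quantization: real schemes quantize in blocks, store extra scaling metadata, and the estimate ignores activations and the KV cache.

```python
def weight_memory_gib(num_parameters, bits_per_weight):
    """Approximate memory needed for the weights alone, in GiB."""
    return num_parameters * bits_per_weight / 8 / 1024**3


def quantize_absmax_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(quantized, scale):
    """Recover approximate float values from the quantized integers."""
    return [q * scale for q in quantized]


# Hypothetical model size; real 7B-class models differ slightly.
for name, bits in [("FP32", 32), ("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    print(f"{name}: {weight_memory_gib(7e9, bits):.2f} GiB")

weights = [0.1, -1.0, 0.73, 0.002]
quantized, scale = quantize_absmax_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"quantized={quantized}, max round-trip error={max_error:.5f}")
```

The round-trip error is bounded by half the scale factor, which is why a single large outlier weight can degrade precision for every other value sharing its scale.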

Loading an Optimized Model for Inference

Once the model is fine-tuned and saved, you can reload it in a quantized format for your inference service. This is the model that your FastAPI application will use to serve requests. The following code demonstrates how to load the previously fine-tuned model with 4-bit quantization for efficient inference.

    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    # Path to your fine-tuned model
    model_path = "./results/fine-tuned-mistral-hn"

    # Define the quantization configuration for inference
    quantization_config = BitsAndBytesConfig(
        load_in_4bit=True,
        bnb_4bit_compute_dtype=torch.bfloat16,
    )

    # Load the tokenizer
    tokenizer = AutoTokenizer.from_pretrained(model_path)

    # Load the fine-tuned model with quantization
    # (bitsandbytes 4-bit loading typically expects a CUDA-capable GPU)
    model = AutoModelForCausalLM.from_pretrained(
        model_path,
        quantization_config=quantization_config,
        device_map="auto",  # Place the weights on the available GPU(s)
    )
    model.eval()
    print("Optimized model loaded successfully!")

    def generate_text(prompt, max_new_tokens=100):
        """A helper function to generate text using the loaded model."""
        inputs = tokenizer(prompt, return_tensors="pt").to(model.device)

        # Generate output without tracking gradients
        with torch.no_grad():
            outputs = model.generate(
                **inputs,
                max_new_tokens=max_new_tokens,
                no_repeat_ngram_size=2,
            )

        # Decode and return the generated text (the prompt is included)
        generated_text = tokenizer.decode(outputs[0], skip_special_tokens=True)
        return generated_text

    # Example usage
    prompt = "The future of AI in software development is"
    response = generate_text(prompt)
    print(f"Prompt: {prompt}")
    print(f"Response: {response}")

This optimized model is now ready to be integrated into a web service. For more advanced optimization, developers often export models to formats like ONNX, which can unlock further performance gains across different hardware platforms.

Serving Intelligence: Building the API with FastAPI

With an optimized model ready, we can now build the API endpoint. FastAPI has two main advantages for ML deployment: its asynchronous support handles concurrent requests efficiently, and its Pydantic integration provides automatic data validation and API documentation.

Setting Up the FastAPI Application

The core of our service will be a FastAPI application that exposes a single endpoint. This endpoint will accept a text prompt, pass it to our model, and return the generated text. We’ll use Pydantic to define the structure of our request and response data, ensuring our API is robust and well-documented.

Here is a complete, self-contained example of a FastAPI server for our LLM. Save this as main.py.

    import torch
    import uvicorn
    from fastapi import FastAPI, HTTPException
    from pydantic import BaseModel
    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    # --- 1. Model Loading (executed once at startup) ---
    MODEL_PATH = "./results/fine-tuned-mistral-hn"  # IMPORTANT: Update with your model path

    # Use global variables to hold the model and tokenizer
    model = None
    tokenizer = None

    app = FastAPI(
        title="Homegrown LLM API",
        description="An API for interacting with a fine-tuned Mistral model.",
        version="1.0.0",
    )

    # Note: on_event is deprecated in recent FastAPI releases in favor of
    # lifespan handlers; it is kept here for brevity.
    @app.on_event("startup")
    def load_model():
        """
        Load the model and tokenizer at application startup.
        This is more efficient than loading it on every request.
        """
        global model, tokenizer
        print(f"Loading model from: {MODEL_PATH}")
        try:
            quantization_config = BitsAndBytesConfig(
                load_in_4bit=True,
                bnb_4bit_compute_dtype=torch.bfloat16,
            )
            tokenizer = AutoTokenizer.from_pretrained(MODEL_PATH)
            model = AutoModelForCausalLM.from_pretrained(
                MODEL_PATH,
                quantization_config=quantization_config,
                device_map="auto",
            )
            print("Model and tokenizer loaded successfully!")
        except Exception as e:
            print(f"Error loading model: {e}")
            # Re-raising aborts startup so the server never runs without a model
            raise RuntimeError(f"Failed to load model from {MODEL_PATH}") from e

    # --- 2. Pydantic Models for Request and Response ---
    class GenerationRequest(BaseModel):
        prompt: str
        max_new_tokens: int = 100

    class GenerationResponse(BaseModel):
        generated_text: str
        prompt: str

    # --- 3. API Endpoint ---
    # Declared with plain "def" rather than "async def": model.generate() is a
    # blocking call, and FastAPI runs plain "def" endpoints in a worker thread
    # so they do not stall the event loop.
    @app.post("/generate", response_model=GenerationResponse)
    def generate_text_endpoint(request: GenerationRequest):
        """
        Accepts a prompt and returns generated text from the model.
        """
        if model is None or tokenizer is None:
            raise HTTPException(status_code=503, detail="Model is not loaded yet.")
        try:
            # Prepare inputs for the model
            inputs = tokenizer(request.prompt, return_tensors="pt").to(model.device)

            # Generate text
            with torch.no_grad():
                outputs = model.generate(**inputs, max_new_tokens=request.max_new_tokens)

            # Decode the output
            response_text = tokenizer.decode(outputs[0], skip_special_tokens=True)
            return GenerationResponse(generated_text=response_text, prompt=request.prompt)
        except Exception as e:
            raise HTTPException(status_code=500, detail=f"An error occurred during text generation: {e}")

    # --- 4. Run the application ---
    if __name__ == "__main__":
        # To run: uvicorn main:app --reload
        uvicorn.run(app, host="0.0.0.0", port=8000)

To run this server, first install the necessary libraries (fastapi, uvicorn, torch, transformers, bitsandbytes, accelerate, plus peft if your model directory contains a LoRA adapter rather than full weights) and then execute uvicorn main:app --reload in your terminal. You can then access the interactive API documentation at http://127.0.0.1:8000/docs to test your endpoint.
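Beyond the interactive docs, the endpoint can be called from any HTTP client. The following is a minimal client sketch using only the Python standard library; it assumes the server is running locally on port 8000 and exposes the `/generate` route with the `prompt` and `max_new_tokens` fields described above. The helper names are illustrative.

```python
import json
import urllib.request

# Assumed local address of the FastAPI server.
API_URL = "http://127.0.0.1:8000/generate"


def build_request(prompt, max_new_tokens=100, url=API_URL):
    """Build a JSON POST request matching the GenerationRequest schema."""
    payload = json.dumps(
        {"prompt": prompt, "max_new_tokens": max_new_tokens}
    ).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, max_new_tokens=100, timeout=120):
    """Send the prompt to the server and return the generated text."""
    request = build_request(prompt, max_new_tokens)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["generated_text"]


# Example call (requires the server to be running):
# print(generate("The future of AI in software development is", max_new_tokens=50))
```

A generous timeout is deliberate here: generation on a 7B model can take several seconds per request, so client defaults tuned for ordinary web APIs may be too short.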

Advanced Techniques and Best Practices

Moving from a simple prototype to a production-ready service requires attention to detail, performance, and scalability. For high-throughput scenarios, specialized serving engines are often necessary.

High-Performance Inference Servers

While FastAPI is excellent for wrapping the model logic, demanding applications often call for a dedicated inference server. Tools such as vLLM or NVIDIA’s Triton Inference Server are designed for high-concurrency, low-latency LLM serving. They implement advanced techniques like paged attention and continuous batching to maximize GPU utilization. You can still use FastAPI as a front-end gateway to orchestrate calls to these backends.
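The gateway pattern can be sketched as a thin translation layer. The example below assumes a backend that exposes an OpenAI-compatible completions endpoint, as vLLM's server does; the backend URL, port, and model name are placeholders, and the function names are hypothetical. Inside a FastAPI route you would call `forward_to_backend` instead of running `model.generate` locally.

```python
import json
import urllib.request

# Assumed address and model name of the inference backend (placeholders).
BACKEND_URL = "http://localhost:8001/v1/completions"
BACKEND_MODEL = "fine-tuned-mistral-hn"


def to_backend_payload(prompt, max_new_tokens):
    """Translate the gateway's request fields into a completions payload."""
    return {"model": BACKEND_MODEL, "prompt": prompt, "max_tokens": max_new_tokens}


def from_backend_response(body, prompt):
    """Translate a completions response into the gateway's response shape.

    The completion text excludes the prompt, so it is prepended here to keep
    the same contract as an endpoint that returns prompt plus continuation.
    """
    completion = body["choices"][0]["text"]
    return {"prompt": prompt, "generated_text": prompt + completion}


def forward_to_backend(prompt, max_new_tokens=100, timeout=120):
    """Send the request to the backend and return the translated response."""
    data = json.dumps(to_backend_payload(prompt, max_new_tokens)).encode("utf-8")
    request = urllib.request.Request(
        BACKEND_URL,
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return from_backend_response(body, prompt)
```

Keeping the translation functions separate from the network call makes the gateway easy to unit test and lets the public API stay stable if the backend is swapped later.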

Containerization with Docker

Packaging your application, model, and dependencies into a Docker container is a standard best practice. It ensures consistency across development, staging, and production environments and simplifies deployment. A Dockerfile for our FastAPI app would specify the base Python image, copy the application code and model files, install dependencies, and define the command to start the Uvicorn server.

Here’s an example Dockerfile to containerize our application:

    # Use an official NVIDIA CUDA image as a base for GPU support
    FROM nvidia/cuda:12.1.0-base-ubuntu22.04

    # Set environment variables to prevent interactive prompts
    ENV DEBIAN_FRONTEND=noninteractive
    ENV PYTHONUNBUFFERED=1

    # Install Python and pip
    RUN apt-get update && \
        apt-get install -y python3.10 python3-pip && \
        rm -rf /var/lib/apt/lists/*

    # Set working directory
    WORKDIR /app

    # Copy requirements file and install dependencies
    COPY requirements.txt .
    RUN pip3 install --no-cache-dir -r requirements.txt

    # Copy the application code and model files
    COPY ./main.py .

    # IMPORTANT: Ensure your model directory is copied into the container
    # This can be a large directory, consider your build context
    COPY ./results /app/results

    # Expose the port the app runs on
    EXPOSE 8000

    # Command to run the application
    CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "8000"]

Integration with the Broader AI Ecosystem

A deployed LLM API is often just one component of a larger system. Frameworks such as LangChain and LlamaIndex excel at building complex applications on top of LLMs, such as Retrieval-Augmented Generation (RAG) systems. Your FastAPI endpoint can be wrapped as a custom LLM provider within these frameworks. For RAG, you would also need a vector database; popular choices include Pinecone, Milvus, and Chroma.
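To make the RAG idea concrete without committing to any particular framework, here is a deliberately simplified sketch: it ranks a few in-memory documents by word overlap with the question and builds a prompt that could be sent to the `/generate` endpoint. The documents, function names, and prompt template are all hypothetical, and word overlap is only a stand-in for the embedding similarity search that a vector database would provide.

```python
import re

# Hypothetical knowledge base; a real system would store embeddings
# for these documents in a vector database.
DOCUMENTS = [
    "FastAPI validates request bodies with Pydantic models.",
    "Quantization stores model weights in fewer bits to save memory.",
    "Docker images bundle an application with its dependencies.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=1):
    """Return the top_k documents sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_rag_prompt(query, documents, top_k=1):
    """Prepend the retrieved context to the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


question = "How does quantization save memory?"
prompt = build_rag_prompt(question, DOCUMENTS)
print(prompt)
```

The resulting string is what you would pass as the `prompt` field of a generation request, so the model answers with the retrieved context in view.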

Conclusion: Your Gateway to Custom AI Solutions

We have journeyed through the complete lifecycle of creating and deploying a custom LLM: from fine-tuning an open-source model on a specialized dataset to optimizing it for performance and serving it via a robust FastAPI endpoint. This process democratizes access to powerful, domain-specific AI, enabling developers to build intelligent applications that were once the exclusive domain of large research labs. The combination of the Hugging Face ecosystem for training and FastAPI for deployment provides a powerful, flexible, and scalable stack for bringing your AI ideas to life.

As a next step, consider exploring more advanced deployment strategies on platforms like AWS SageMaker or Azure Machine Learning, building interactive user interfaces with Gradio or Streamlit, or integrating your model into a larger RAG pipeline. The world of custom AI is vast and rapidly evolving, and with these tools and techniques, you are well-equipped to build the next generation of intelligent applications.