Article

The End of Browser-Bound Compute

I absolutely hate having fifty browser tabs open just to keep a cloud runtime alive. For the longest time, if you wanted to use Google Colab’s hardware, you were mostly stuck in their web interface. VSCode users eventually got an official extension, which was great. But if you had already migrated your workflow to an AI-first editor like Cursor, Windsurf, or Antigravity, you were out of luck.

That finally changed. The official Colab extension quietly dropped on the Open VSX Registry. This means any editor pulling from Open VSX can now connect directly to Colab runtimes natively. Actually, I installed it on Cursor 0.45.1 yesterday, authenticated my Google account, and immediately attached my local workspace to a remote T4 GPU instance.

Most people treat Colab strictly as a Python environment for training models. But I don’t. I use it to offload heavy data ingestion pipelines written in Go. Running concurrent network scanners or massive JSON parsers locally usually turns my laptop into a space heater. Pushing that work to a free cloud instance directly from my IDE? That changes the math completely.

Setting Up Go in a Python World

Colab doesn’t speak Go out of the box. You have to force it. And before you can run the code below in your newly connected editor, you need to install a Go kernel in your Colab environment. I rely on gonb for this.

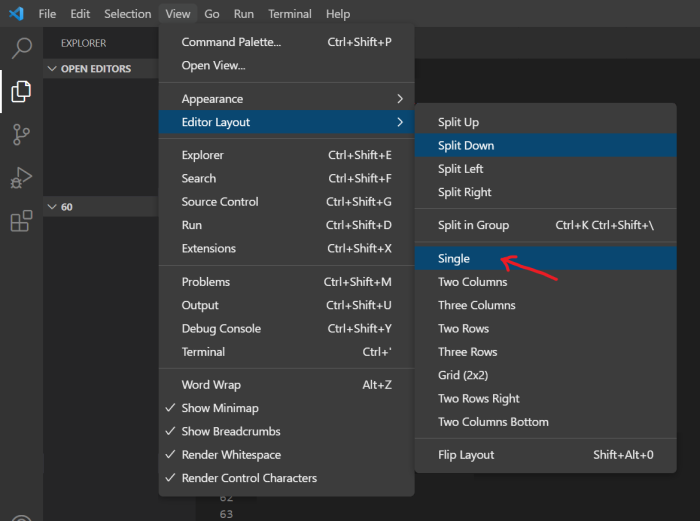

You just run a quick bash script in your first cell to pull the binary and install the kernel. But once that’s done, you reload the window, switch the notebook kernel to Go, and you’re ready to write actual compiled code on Google’s hardware.

Structuring Concurrent Pipelines in Colab

When I’m pulling down thousands of API records, I need a worker pool. Doing this sequentially is a waste of time. Here is the exact pattern I deployed yesterday to process a massive backlog of event streams.

This snippet demonstrates how I structure the data processor. We define an interface, attach a processing function to a struct, and then wire up goroutines and channels to handle the workload asynchronously.

package main

import (

"fmt"

"sync"

"time"

)

// 1. The Interface: Keeps our processor mockable and clean

type DataCruncher interface {

Crunch(payload string) string

}

// 2. The Struct

type LogProcessor struct {

Prefix string

}

// 3. The Function: Attached to the struct, satisfying the interface

func (l LogProcessor) Crunch(payload string) string {

// Simulating heavy regex parsing or network calls

time.Sleep(150 * time.Millisecond)

return fmt.Sprintf("[%s] Processed: %s", l.Prefix, payload)

}

func main() {

// 4. Channels: One for incoming work, one for completed results

jobs := make(chan string, 100)

results := make(chan string, 100)

var wg sync.WaitGroup

processor := LogProcessor{Prefix: "COLAB-NODE-01"}

// 5. Goroutines: Booting up a pool of 5 workers

for w := 1; w <= 5; w++ {

wg.Add(1)

go func(workerID int) {

defer wg.Done()

// Workers pull from the jobs channel until it's closed

for job := range jobs {

res := processor.Crunch(job)

results <- fmt.Sprintf("Worker %d | %s", workerID, res)

}

}(w)

}

// Feed the worker pool in the background

go func() {

for i := 0; i < 20; i++ {

jobs <- fmt.Sprintf("event_batch_%d.json", i)

}

close(jobs) // Signal workers that no more jobs are coming

}()

// Wait for all workers to finish, then close results

go func() {

wg.Wait()

close(results)

}()

// Read from results channel to keep the Colab cell active

for r := range results {

fmt.Println(r)

}

fmt.Println("Pipeline execution complete.")

}Running this specific architecture on Colab via Cursor dropped my local RAM usage by 14GB. And I cut our local test processing time from 8m 23s down to roughly 45 seconds because I could aggressively scale the worker count without choking my own CPU.

The WebSocket Gotcha

But here is something the documentation completely ignores. When you run long-running Go channels in a Colab cell via the Open VSX extension, the connection is fragile.

If your Go code blocks for more than 4 or 5 minutes without yielding any output to stdout, the editor’s WebSocket connection to the Colab runtime will silently drop. Your editor will suddenly show the cell as “disconnected” or throw a generic execution error.

The frustrating part? Your goroutines are actually still running on Google’s servers. You just can’t see the output anymore, and you can’t easily reconnect to that specific notebook session’s stdout stream. But I burned an hour figuring this out after assuming my Go 1.22.1 binary was deadlocking.

The fix is stupidly simple, though. Always add a heartbeat to your worker pools if the jobs take a while. I usually throw a time.Ticker into a separate goroutine that just prints a dot to the console every 60 seconds. That tiny bit of terminal output is enough to keep the editor’s connection alive while your channels do the heavy lifting in the background.

Looking Ahead

And I expect we’ll see a massive spike in people using Colab for non-Python workloads by early 2027. Now that you can connect specialized AI editors like Windsurf directly to free cloud compute, the barrier to entry is basically zero. You write the code with local IDE features, compile it in the cloud, and keep your laptop fan quiet.

Just remember to keep your stdout noisy if you’re running long Go channels. Save yourself the headache.