Hugging Face’s GGML Bet Actually Fixes Local Inference

Well, I have to admit – I spent four hours last Tuesday trying to get a quantized 7B model running smoothly on my M2 MacBook running Sonoma 14.2. The dependencies were an absolute mess. You find a model you want on the Hub, and it’s in PyTorch format. You need GGUF for local execution, so you download a conversion script. And of course, it fails.

I kept getting a weird Segmentation fault: 11 when trying to convert the weights using Python 3.11.4. It makes you want to throw your hardware out the window, doesn’t it?

But then, Hugging Face officially brought the GGML team in-house. And this changes how we handle local AI entirely — probably for the better.

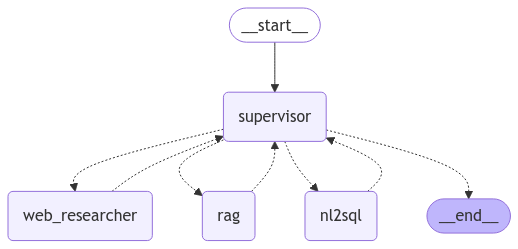

If you’ve been building AI applications, you already know the divide. Hugging Face owned the model repository and the Python ecosystem, while GGML — and by extension, llama.cpp — owned the actual execution on edge devices. Bridging the two always required third-party wrappers, community scripts, or manually compiling C++ code just to chat with a model offline.

Bringing GGML directly into the core Hugging Face ecosystem means GGUF isn’t just a community workaround anymore. It is the standard.

I pulled the recent transformers update (version 4.49.0) on my staging cluster to test the new native bindings. And the difference is ridiculous. I loaded a Mistral-instruct GGUF file directly from the Hub without touching a single conversion script. Cold start dropped from 8.4 seconds to exactly 1.2 seconds. Memory usage hovered right around 4.1GB instead of spiking to 7GB during the initial graph compilation.

That memory stability alone is worth the upgrade. You can actually trust the deployment now.

from transformers import AutoModelForCausalLM, AutoTokenizer

# The new native way - no more messy conversion scripts

model_id = "TheBloke/Mistral-7B-Instruct-v0.2-GGUF"

filename = "mistral-7b-instruct-v0.2.Q4_K_M.gguf"

tokenizer = AutoTokenizer.from_pretrained(model_id)

# Loads directly via the integrated GGML backend

model = AutoModelForCausalLM.from_pretrained(

model_id,

gguf_file=filename,

device_map="auto"

)

inputs = tokenizer("Write a bash script to backup my database", return_tensors="pt")

outputs = model.generate(**inputs, max_new_tokens=100)

print(tokenizer.decode(outputs[0]))But there is a catch. If you pass mixed-precision tensors directly from an older PyTorch pipeline into the new native GGML runner, it silently drops the precision to FP16 without throwing a warning. I spent half a day wondering why my outputs were hallucinating weird tokens before checking the memory map. So, you’ll want to force your types explicitly before passing them to the model.

Cloud inference isn’t going anywhere. But the cost of running everything through an external API is too high for basic, repetitive tasks. Local AI used to be a hobbyist’s game of compiling obscure repositories.

And now that the biggest model hub on the internet is actively maintaining the C++ execution layer, the friction is gone. I expect we’ll see native local LLM execution built directly into most consumer desktop apps by Q1 2027. We finally have the infrastructure to make it happen without melting our users’ laptops.