Debugging Multi-Agent Chaos with LangSmith

So there I was, staring at my terminal at 11:30 PM last Tuesday. My local orchestration script was quietly burning through $40 of API credits an hour. Two agents—a researcher and a synthesizer—were caught in a polite, infinite loop of “Could you clarify?” and “Here is the clarification.”

Building multi-agent systems is the current obsession. You spin up a few specialized roles, give them tools, let them talk to each other, and hope for the best. But when they fail, they don’t throw a clean exception. They just hallucinate confidently or get stuck in a conversational cul-de-sac.

When a single script fails, you read the stack trace. When a multi-agent swarm fails, you’re looking at a distributed system where the components communicate in natural language. It’s a debugging nightmare.

The Visibility Problem

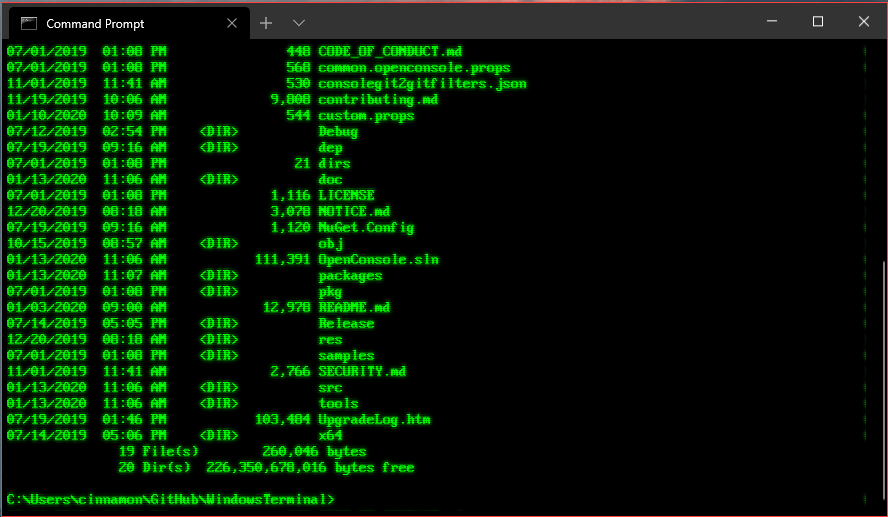

I’ve been working on a production agentic system for the past few months. We use a supervisor agent that delegates tasks to specialized workers. On paper, it’s brilliant. In practice, on my M3 Mac running Python 3.12.2, it was a black box.

Before I got serious about observability, my debugging process was basically tailing console logs and trying to guess which agent decided to format a JSON payload incorrectly. I kept getting RateLimitError: 429 because the worker agents would panic and ping the Anthropic API 400 times in two minutes trying to fix a single missing comma.
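One cheap guard against that kind of retry storm is a hard cap on repair attempts, with backoff between them. This is a minimal sketch of the idea rather than the code from my system: `call_model` is a hypothetical stand-in for whatever function actually hits the LLM API, and the attempt limit and delays are arbitrary.

```python
import json
import time


def parse_with_bounded_retries(call_model, prompt, max_attempts=3,
                               base_delay=1.0, sleep=time.sleep):
    """Ask for JSON, retrying a fixed number of times with exponential backoff.

    `call_model` is a hypothetical callable: prompt string in, raw text out.
    """
    last_error = None
    for attempt in range(max_attempts):
        raw = call_model(prompt)
        try:
            return json.loads(raw)
        except json.JSONDecodeError as exc:
            last_error = exc
            if attempt < max_attempts - 1:
                # Wait longer after each failure: base_delay, 2x, 4x, ...
                sleep(base_delay * 2 ** attempt)
    # Give up loudly instead of hammering the API.
    raise ValueError(f"no valid JSON after {max_attempts} attempts") from last_error
```

The `sleep` parameter is only there so the backoff can be swapped out in tests.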

I finally wired up LangSmith properly. And I don’t just mean slapping LANGCHAIN_TRACING_V2=true in my .env file and calling it a day. I mean actually using the multi-agent tracing features they’ve been pushing heavily over the last few months.
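For reference, the "calling it a day" baseline is just a few environment variables. Here is a minimal sketch of setting them from Python, assuming the classic `LANGCHAIN_*` variable names; the project name is a placeholder and the `configure_tracing` helper is made up for illustration.

```python
import os

# Baseline tracing config. The project name is a placeholder value.
TRACING_DEFAULTS = {
    "LANGCHAIN_TRACING_V2": "true",
    "LANGCHAIN_PROJECT": "multi_agent_prod",
}


def configure_tracing(env=os.environ):
    """Fill in tracing defaults without overwriting values that are already set.

    Returns True if an API key is present, False otherwise.
    """
    for key, value in TRACING_DEFAULTS.items():
        env.setdefault(key, value)
    # The API key should come from the real environment or a secrets manager,
    # never from a hard-coded default.
    return "LANGCHAIN_API_KEY" in env
```

Calling `configure_tracing()` before any agents are built is enough to turn tracing on; everything after this point is about making those traces readable.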

The Async Gotcha Nobody Mentions

Here is a specific edge case that drove me crazy. The documentation barely mentions this, but if you run agents asynchronously—say, using asyncio.gather to have three worker agents scrape different pages simultaneously—your LangSmith traces will often turn into an unreadable, flattened list of LLM calls.

The parent-child relationship gets completely severed. You lose the waterfall view. You just see 50 disconnected API calls and have no idea which agent triggered which call.
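To make the difference concrete: a trace is essentially a list of runs that each point at a parent. The sketch below uses invented run records (the `id`, `parent_run_id`, and `name` fields are assumptions about what an exported run looks like, and the data is made up). When the parent links survive you can rebuild the waterfall; when they are lost, every call becomes its own root.

```python
from collections import defaultdict


def render_waterfall(runs):
    """Return indented lines showing each run under its parent."""
    children = defaultdict(list)
    for run in runs:
        children[run.get("parent_run_id")].append(run)

    lines = []

    def walk(parent_id, depth):
        for run in children.get(parent_id, []):
            lines.append("  " * depth + run["name"])
            walk(run["id"], depth + 1)

    walk(None, 0)  # roots are the runs with no parent
    return lines


# Invented example data: one supervisor, two workers, one LLM call each.
linked = [
    {"id": 1, "parent_run_id": None, "name": "Supervisor"},
    {"id": 2, "parent_run_id": 1, "name": "Worker_Agent[Find X]"},
    {"id": 3, "parent_run_id": 2, "name": "llm_call"},
    {"id": 4, "parent_run_id": 1, "name": "Worker_Agent[Analyze Y]"},
    {"id": 5, "parent_run_id": 4, "name": "llm_call"},
]
# The same runs with the parent links lost, as in the flattened view.
severed = [{**run, "parent_run_id": None} for run in linked]

print("\n".join(render_waterfall(linked)))
print("---")
print("\n".join(render_waterfall(severed)))
```

The second printout is the flattened list: same five runs, no indentation, no way to tell which worker made which call.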

To fix this, you have to explicitly pass the run_tree context or ensure your callbacks are properly configured for async execution. If you’re building custom agents without relying entirely on pre-built LangGraph abstractions, you need to manually manage the trace hierarchy.
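Stripped of any tracing library, managing the hierarchy by hand just means every child records the ID of the run that spawned it. Here is a minimal, library-free sketch of that idea. The `Span` class and `start_span` helper are invented for illustration and are not LangSmith APIs.

```python
import asyncio
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    name: str
    parent_id: Optional[str] = None
    id: str = field(default_factory=lambda: uuid.uuid4().hex)


SPANS = []  # stand-in for a trace backend


def start_span(name, parent=None):
    """Record a span, linking it to its parent when one is given."""
    span = Span(name=name, parent_id=parent.id if parent else None)
    SPANS.append(span)
    return span


async def worker(task, parent):
    # The parent is passed in explicitly, so the link survives concurrency.
    span = start_span(f"worker:{task}", parent=parent)
    await asyncio.sleep(0)  # stand-in for the real LLM call
    start_span("llm_call", parent=span)
    return task.upper()


async def supervisor(tasks):
    root = start_span("supervisor")
    results = await asyncio.gather(*(worker(t, root) for t in tasks))
    return root, results


root, results = asyncio.run(supervisor(["find x", "analyze y"]))
```

Every worker span ends up pointing at `root`, and every `llm_call` points at the worker that made it, regardless of how the event loop interleaves them.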

    import asyncio

    from langchain_core.runnables import RunnableConfig, RunnableLambda
    from langchain_core.tracers.context import tracing_v2_enabled

    # `my_custom_agent` is your own Runnable-style agent, defined elsewhere.


    async def run_worker_agent(task: str, parent_config: RunnableConfig):
        # If you don't pass the parent's config, this breaks out of the trace tree.
        # Its callbacks carry the supervisor's run, so the worker nests under it.
        response = await my_custom_agent.ainvoke(
            {"input": task},
            config={**parent_config, "run_name": "Worker_Agent"},
        )
        return response


    async def _supervisor_step(tasks: list[str], config: RunnableConfig):
        # `config` is injected by RunnableLambda and already points at the
        # supervisor's own run, so it is what the workers must inherit.
        return await asyncio.gather(
            *(run_worker_agent(t, config) for t in tasks)
        )


    async def supervisor_logic():
        tasks = ["Find X", "Analyze Y", "Summarize Z"]
        # The supervisor creates the parent context
        with tracing_v2_enabled(project_name="multi_agent_prod"):
            # Wrapping the step in a RunnableLambda gives the trace a real parent run
            results = await RunnableLambda(_supervisor_step).ainvoke(
                tasks, config={"run_name": "Supervisor"}
            )
        return results

Once I fixed the context propagation, I could actually see the waterfall. I immediately spotted the problem. The “summarizer