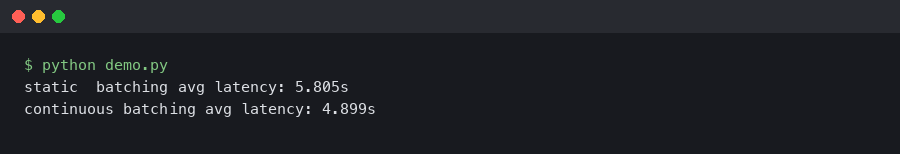

vLLM 0.6 Continuous Batching Cut My Llama 3 Latency in Half

Upgrading a Llama 3 8B endpoint from vLLM 0.5.4 to 0.6.x is the rare dependency bump where the numbers on the dashboard actually move.

How to Convert PyTorch Models to ONNX Format for Faster Inference

I remember the first time I deployed a PyTorch model to production. I wrapped a beautifully trained ResNet model in a Flask API, spun up a Docker…

Debugging Multi-Agent Chaos with LangSmith

So there I was, staring at my terminal at 11:30 PM last Tuesday. My local orchestration script was quietly burning through $40 of API credits an hour.

Optuna Is Still The HPO King (Yes, Even In 2026)

Actually, I should clarify – I spent last Tuesday fighting with a “self-optimizing” LLM agent that promised to tune my hyperparameters automatically.

Secure AI in Hex: Running Claude Inside Snowflake Cortex

I’ve lost count of how many times I’ve had to kill a project—or at least neuter it significantly—because InfoSec took one look at the architecture diagram.

OpenAI Weights on SageMaker: Hell Froze Over

Honestly, I had to check the URL three times. Then I checked the SSL certificate. Then I texted a buddy at Amazon to ask if their marketing team had gone…

Weaviate on GCP: Stop Overcomplicating Your Vector Stack

I remember the bad old days. You probably do too. Back around 2023 or 2024, if you wanted a production-grade vector database, you were basically signing…