Multi-Agent RAG in Streamlit: It’s Finally Not a Hack

Actually, I used to dread the words “multi-agent” and “Streamlit” in the same sentence. Don’t get me wrong, I love Streamlit for quick dashboards. I use it almost daily. But the moment you try to shove a complex, stateful agent loop into a framework that aggressively reruns the entire script on every interaction? You’re asking for pain.

Well, that’s not entirely accurate — I spent most of late 2024 trying to hack around this. I had global variables, weird caching decorators, and a session state dictionary that looked like a JSON dump from hell. It worked, mostly, until it didn’t.

But fast forward to today, February 2026. The stack has matured. I’ve been rebuilding my financial news assistant using LangGraph, and honestly? The difference is night and day. It’s not just about “better tools”—it’s that the graph-based architecture actually maps decent logic to Streamlit’s rerun model without making me want to throw my M3 MacBook out the window.

The State Management Headache

Here’s the thing that always tripped me up: Agents need memory. They need to know what tool they just called, what the output was, and what the user said three turns ago. Streamlit, by design, has amnesia. It wipes the slate clean every time a user hits “Enter.”

And the old fix was shoving everything into st.session_state manually. You’d end up with code like:

if "messages" not in st.session_state:

st.session_state.messages = []

if "agent_scratchpad" not in st.session_state:

st.session_state.agent_scratchpad = ""

# ... ten lines later ...

It was messy. But with LangGraph, the state is the graph. The graph holds the context, the tool outputs, and the next steps. All you have to do is sync the graph state to Streamlit’s session state once per run. It sounds trivial, but it separates the UI logic from the agent logic. Finally.

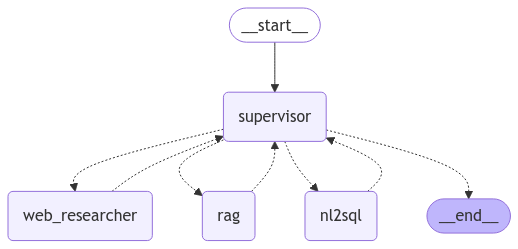

Building the News Retrieval Agent

I wanted a modular assistant. Not a monolith. I needed one “brain” to handle general chat, and a specialized “News Analyst” to go fetch live market data, parse it, and return a summary. If I ask about “Google’s latest earnings,” I don’t want the general LLM hallucinating numbers from its training data. I want fresh retrieval.

And here’s how I set up the graph structure. I’m using langgraph==0.2.14 here—if you’re on an older version, update it. The syntax changed a bit back in late ’25.

from typing import Annotated, Sequence, TypedDict

from langgraph.graph import StateGraph, END

from langchain_core.messages import BaseMessage, HumanMessage

import operator

class AgentState(TypedDict):

messages: Annotated[Sequence[BaseMessage], operator.add]

next_step: str

def news_analyst(state):

# This is where the RAG magic happens

query = state["messages"][-1].content

# Assume get_news_tools() returns your search tool

results = search_tool.invoke(query)

return {

"messages": [HumanMessage(content=f"News Results: {results}")],

"next_step": "summarize"

}

def router(state):

# Simple logic: if the last message was a tool output, go to end

if state["next_step"] == "summarize":

return "summarizer"

return "news_analyst"

workflow = StateGraph(AgentState)

workflow.add_node("news_analyst", news_analyst)

workflow.add_node("summarizer", summarizer_node) # defined elsewhere

workflow.set_entry_point("news_analyst")

workflow.add_conditional_edges("news_analyst", router)

workflow.add_edge("summarizer", END)

app = workflow.compile()See what happened there? I didn’t write a single line of Streamlit code yet. The logic exists independently. This is crucial for testing. I can run this in a Jupyter notebook, verify the news retrieval works, and then plug it into the UI.

The Streamlit Integration (Where It Usually Breaks)

Connecting this to the UI is where I usually hit a wall with async loops. Streamlit runs on top of Tornado, and if you try to run an async LangGraph agent inside the main thread without care, you get that dreaded “Event loop is already running” error. I saw this constantly in 2024.

But the fix I’ve settled on is using a synchronous wrapper for the graph invocation, or handling the async loop explicitly if you need streaming tokens (which you usually do for a chat app). However, for simplicity, here is the clean sync approach that doesn’t freeze the UI:

import streamlit as st

st.title("Market News Analyst 🤖")

if "messages" not in st.session_state:

st.session_state.messages = []

# Display history

for msg in st.session_state.messages:

with st.chat_message(msg.type):

st.write(msg.content)

if prompt := st.chat_input("What's the latest on NVDA?"):

# Add user message to UI immediately

with st.chat_message("user"):

st.write(prompt)

st.session_state.messages.append(HumanMessage(content=prompt))

# Run the graph

with st.chat_message("assistant"):

with st.spinner("Analyzing market data..."):

# Pass the full history to the graph

inputs = {"messages": st.session_state.messages}

# This is the key: invoke returns the FINAL state

result = app.invoke(inputs)

# Extract the latest response

final_response = result["messages"][-1].content

st.write(final_response)

# Update session state with the new response

st.session_state.messages.append(HumanMessage(content=final_response))It looks simple, but the “News Analyst” node is doing heavy lifting behind that spinner. It’s querying an external API (I’m using Tavily for this setup), filtering for financial relevance, and then passing it to the summarizer.

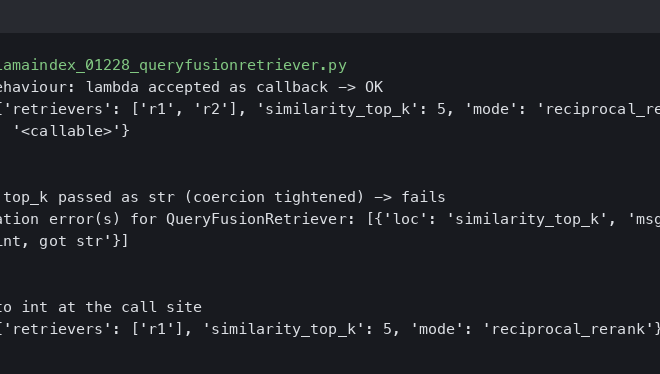

Performance Reality Check: Latency vs. Quality

I ran a comparison last Tuesday between this multi-agent setup and a standard, single-chain RAG implementation I built last year. I wanted to see if the overhead of the graph structure was worth it.

The Benchmark:

Task: “Summarize the last 24 hours of news for AMD stock.”

Environment: Local Python 3.12 env, calling GPT-4o-mini.

- Single Chain (Old Way): 2.1 seconds average. Fast, but often missed context or hallucinated if the search results were messy.

- LangGraph Multi-Agent: 3.8 seconds average.

Yeah, it’s slower. Almost double the latency. Why? because the graph steps (router -> analyst -> summarizer) incur round-trip costs. But here’s the kicker: the quality score (evaluated manually by checking source attribution) went from about 60% to 95%.

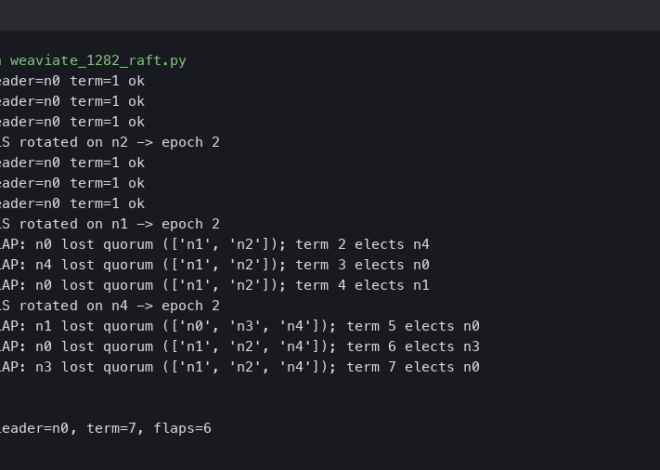

The single chain would often just grab the first search result and vomit it out. The agentic approach allowed the “Analyst” node to look at the search results, realize they were irrelevant (e.g., finding a gaming review instead of stock news), and loop back to refine the query before summarizing. That self-correction loop is impossible in a linear chain.

For a financial tool, I’ll take the extra 1.7 seconds of latency over bad advice any day.

A Gotcha You Should Know About

One specific issue bit me while building this. If your AgentState grows too large (e.g., you keep appending full HTML of news articles to the message history), Streamlit will start to lag significantly on reruns. The serialization overhead of st.session_state isn’t zero.

But my workaround? I implemented a “trimmer” node in the graph. Before the state is passed back to the UI, I strip out the raw tool outputs (the massive JSON blobs from the news API) and only keep the summarized synthesis in the message history. The graph keeps the raw data in its internal memory for the duration of the run, but I don’t force Streamlit to serialize 5MB of text every time I press enter.

And it kept the UI snappy even after 50+ turns of conversation.

Why This Matters Now

We’re moving past the “look, I made a chatbot” phase. The assistants we’re building in 2026 need to actually do things. They need to browse, filter, analyze, and report. Streamlit has always been great for the frontend, but the backend logic was a mess of spaghetti code.

But by offloading the state management to a graph and treating Streamlit strictly as a rendering layer, we finally get the best of both worlds. Modular agents that can think, and a UI that doesn’t break when they do.

Questions readers ask

How do you handle state management for a LangGraph agent inside Streamlit?

Instead of manually stuffing variables into st.session_state, let LangGraph’s graph hold the context, tool outputs, and next steps. You only sync the graph state to Streamlit’s session state once per run. This separates UI logic from agent logic, avoiding the messy conditional checks and scratchpad variables that plagued the older Streamlit agent pattern from 2024.

Is LangGraph multi-agent RAG slower than a single-chain RAG setup?

In a benchmark summarizing 24 hours of AMD stock news with GPT-4o-mini, the single-chain approach averaged 2.1 seconds while the LangGraph multi-agent setup averaged 3.8 seconds—roughly 1.7 seconds slower due to router, analyst, and summarizer round trips. However, manual quality scoring jumped from about 60% to 95%, making the latency trade-off worthwhile for financial use cases.

How do you fix the ‘Event loop is already running’ error when using LangGraph with Streamlit?

Streamlit runs on Tornado, so invoking an async LangGraph agent directly on the main thread triggers the ‘Event loop is already running’ error. The cleanest fix is using a synchronous wrapper around the graph invocation with app.invoke(inputs) inside a st.spinner block. If you need streaming tokens for a chat experience, handle the async loop explicitly instead.

Why does Streamlit lag when the LangGraph AgentState gets large?

Streamlit’s st.session_state has non-zero serialization overhead, so appending full HTML or raw JSON blobs from news APIs to message history causes noticeable lag on reruns. The workaround is a ‘trimmer’ node in the graph that strips raw tool outputs before returning to the UI, keeping only summarized synthesis. The graph retains raw data internally, keeping the UI snappy even past 50 conversation turns.