Stop scraping your Notion manually: A real sync pipeline to Pinecone

I spent last Tuesday night staring at a 429 Too Many Requests error from the Notion API, wondering why I ever decided to build my own “second brain” AI. The promise is always the same: take your messy Notion workspace, dump it into a vector database like Pinecone, and suddenly you have a magical chatbot that knows everything you’ve ever written.

If only it were that simple.

But the reality of making Notion data “AI Ready” is a lot messier than the tutorials suggest. I’ve been working on a TypeScript pipeline to automate this, and let me tell you, the Notion API is not your friend here. It’s a block-based nightmare that treats a simple paragraph as a distinct database entity. Still, after a few pots of coffee and some aggressive refactoring, I finally got a reliable sync working.

The “Block” Problem

When I first tried to ingest my engineering wiki, I just grabbed the page content. The result? Garbage. I missed all the nested toggles, the child databases, and the context inside columns. If you just grab the plain_text property, you lose the semantic structure that makes the data useful for an LLM.

I had to write a recursive function to walk the block tree. It’s slow, it’s painful, but it’s necessary.

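```typescript
import { Client } from "@notionhq/client";

// Running this with @notionhq/client v2.2.15 on Node 23.4.0
const notion = new Client({ auth: process.env.NOTION_KEY });

// Helper to parse rich text. Minimal version: most text blocks (paragraph,
// headings, toggles, list items, quotes, to-dos) keep a rich_text array under
// a key named after their type; other blocks (images, dividers) yield "".
function extractTextFromBlock(block: { type: string }): string {
  const value = (block as Record<string, unknown>)[block.type] as
    | { rich_text?: Array<{ plain_text: string }> }
    | undefined;
  return value?.rich_text?.map((t) => t.plain_text).join("") ?? "";
}

async function getBlockChildren(blockId: string): Promise<string> {
  let content = "";
  let hasMore = true;
  let nextCursor: string | undefined;

  while (hasMore) {
    const response = await notion.blocks.children.list({
      block_id: blockId,
      start_cursor: nextCursor,
    });

    for (const block of response.results) {
      // This is where the magic (and pain) happens.
      // Partial block objects have no "type", so they are skipped.
      if ("type" in block) {
        const text = extractTextFromBlock(block);
        content += text + "\n";

        // Recursion for nested blocks (toggles, columns, child pages, ...)
        if (block.has_children) {
          content += await getBlockChildren(block.id);
        }
      }
    }

    hasMore = response.has_more;
    // next_cursor is null on the last page, but start_cursor expects undefined
    nextCursor = response.next_cursor ?? undefined;
  }

  return content;
}
```

See that if (block.has_children) check? That little line is responsible for doubling my sync time. But without it, you lose half your data.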
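All that recursion means at least one extra API call per block with children, which is exactly how you end up staring at a 429. A small retry wrapper with exponential backoff takes the edge off. This is a hypothetical helper, not part of the Notion SDK, and it assumes the thrown error exposes the HTTP status as a `status` field (my understanding of how the SDK's `APIResponseError` behaves; verify against your version).

```typescript
// Hypothetical retry helper: retries only when the error's `status` is 429,
// backing off exponentially; any other error is rethrown immediately.
async function withRetry<T>(
  fn: () => Promise<T>,
  maxRetries = 5,
  baseDelayMs = 500,
): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    try {
      return await fn();
    } catch (err) {
      const status = (err as { status?: number } | null)?.status;
      if (status !== 429 || attempt >= maxRetries) throw err;
      // baseDelayMs * 1, 2, 4, 8, ... plus up to 100ms of jitter so
      // parallel workers don't all retry at the same instant
      const delay = baseDelayMs * 2 ** attempt + Math.random() * 100;
      await new Promise((resolve) => setTimeout(resolve, delay));
    }
  }
}
```

In the walker, you would wrap the list call: `const response = await withRetry(() => notion.blocks.children.list({ block_id: blockId, start_cursor: nextCursor }));`.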

Chunking Strategy: Where I Screwed Up

My first instinct was to embed every Notion block as a separate vector. “Granularity is good,” I told myself. But when I ran queries against Pinecone, I was getting back individual bullet points without any context. A query for “deployment process” would return a vector saying “Run the script,” but wouldn’t tell me which script or which project, because that info was in the parent header block three levels up.

The Fix: I switched to a markdown-based approach. I convert the entire Notion page (and its children) into a single Markdown string first, then I use a sliding window chunker. The difference was night and day – 85% retrieval accuracy compared to just 12% with the block-based approach.
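Here is a minimal sketch of that approach, assuming a hypothetical `SimpleBlock` shape (block type, extracted text, nesting depth) collected during the recursive walk. Only a handful of block types get Markdown syntax, and the window size and overlap are counted in words with placeholder defaults (200/50), not tuned values; many pipelines count tokens instead.

```typescript
// Hypothetical flattened block, collected during the recursive walk.
interface SimpleBlock {
  type: string;  // e.g. "heading_2", "paragraph", "bulleted_list_item"
  text: string;  // already-extracted plain text
  depth: number; // nesting level (0 = top level)
}

// Map a few common block types to Markdown; everything else becomes plain text.
function blockToMarkdown(b: SimpleBlock): string {
  const indent = "  ".repeat(b.depth);
  switch (b.type) {
    case "heading_1": return `# ${b.text}`;
    case "heading_2": return `## ${b.text}`;
    case "heading_3": return `### ${b.text}`;
    case "bulleted_list_item": return `${indent}- ${b.text}`;
    case "numbered_list_item": return `${indent}1. ${b.text}`;
    default: return `${indent}${b.text}`;
  }
}

// Sliding window over words: each chunk has up to `size` words and starts
// `size - overlap` words after the previous one, so neighbours share
// `overlap` words of context (e.g. the header above a bullet point).
function slidingWindowChunks(markdown: string, size = 200, overlap = 50): string[] {
  if (overlap >= size) throw new Error("overlap must be smaller than size");
  const words = markdown.split(/\s+/).filter((w) => w.length > 0);
  const chunks: string[] = [];
  const step = size - overlap;
  for (let start = 0; start < words.length; start += step) {
    chunks.push(words.slice(start, start + size).join(" "));
    if (start + size >= words.length) break; // last window reached the end
  }
  return chunks;
}
```

Usage: `slidingWindowChunks(blocks.map(blockToMarkdown).join("\n"))`. Joining words back with spaces flattens line breaks, which is fine for embedding but worth knowing if you display chunks to users.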

The Sync Engine (Don’t use Cron)

You can’t just run this script on your laptop whenever you remember. Data goes stale instantly. I ended up moving this logic into a background job framework (something like Trigger.dev or Inngest works well here). The key is to decouple the fetching from the embedding.
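Trigger.dev and Inngest each have their own task and step APIs, which I won't reproduce here. This framework-agnostic sketch only shows the split: a fetch stage that turns page IDs into Markdown jobs without touching the embedding API, and an embed stage that handles each job on its own and reports failures for retry. `fetchMarkdown` and `embed` are hypothetical callbacks (for example, the recursive walk from earlier and your embedding + upsert code).

```typescript
// Hypothetical job payload handed from the fetch stage to the embed stage.
interface PageJob {
  pageId: string;
  markdown: string;
}

type Fetcher = (pageId: string) => Promise<string>; // returns page Markdown
type Embedder = (job: PageJob) => Promise<void>;    // embeds + upserts one page

// Stage 1: fetch only. The jobs it returns could be persisted to a queue;
// no embedding calls happen here, so Notion failures cost no credits.
async function fetchStage(pageIds: string[], fetchMarkdown: Fetcher): Promise<PageJob[]> {
  const jobs: PageJob[] = [];
  for (const pageId of pageIds) {
    jobs.push({ pageId, markdown: await fetchMarkdown(pageId) });
  }
  return jobs;
}

// Stage 2: embed each job independently. A failing page is collected for
// retry instead of aborting the whole run.
async function embedStage(jobs: PageJob[], embed: Embedder): Promise<string[]> {
  const failed: string[] = [];
  for (const job of jobs) {
    try {
      await embed(job);
    } catch {
      failed.push(job.pageId);
    }
  }
  return failed;
}
```

In a job framework, each stage (or each page) would typically become its own task, so a rate limit during fetching or a timeout during embedding only retries the piece that failed.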

Pinecone Upserting: Metadata is King

When you finally push to Pinecone, don’t be stingy with metadata. I dump everything I can grab into the metadata object, including the author ID. Why the author ID? Because sometimes I want to ask my AI, “What did Dave write about the database migration?” If you don’t index that metadata now, you can’t filter by it later.
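A sketch of building records with generous metadata. `PageInfo` and the metadata key names are hypothetical; the fields mirror what a Notion page exposes (`id`, `url`, `created_by.id`, `last_edited_time`) but are flattened for clarity. Pinecone metadata values must be strings, numbers, booleans, or lists of strings, which is why everything here is flat.

```typescript
// Record shape the Pinecone client accepts: id, vector values, flat metadata.
interface VectorRecord {
  id: string;
  values: number[];
  metadata: Record<string, string | number | boolean | string[]>;
}

// Hypothetical, flattened page summary.
interface PageInfo {
  id: string;
  title: string;
  url: string;
  authorId: string;
  lastEditedTime: string;
}

function buildRecords(page: PageInfo, chunks: string[], embeddings: number[][]): VectorRecord[] {
  if (chunks.length !== embeddings.length) {
    throw new Error("expected exactly one embedding per chunk");
  }
  return chunks.map((text, i) => ({
    // Deterministic id: re-syncing overwrites chunks with the same index
    // (stale trailing chunks still need deleting if a page gets shorter).
    id: `${page.id}#${i}`,
    values: embeddings[i],
    metadata: {
      page_id: page.id,
      title: page.title,
      url: page.url,
      author_id: page.authorId,
      last_edited_time: page.lastEditedTime,
      chunk_index: i,
      text, // keep the chunk text so query results are readable
    },
  }));
}

// Pinecone metadata filter for "What did Dave write about ...?" queries.
const daveFilter = { author_id: { $eq: "dave-user-id" } };
```

With the official `@pinecone-database/pinecone` client this would be used roughly as `await pc.index("notion").upsert(records)` and `index.query({ vector, topK: 5, filter: daveFilter, includeMetadata: true })`; check those signatures against the client version you install.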

A Warning on Costs

One thing nobody mentions is the embedding cost loop. If you aren’t careful with your last_edited_time checks, you’ll end up re-embedding the same pages over and over. I accidentally left my poller running without a proper “since” timestamp filter, and I burned through about $40 of OpenAI credits in a weekend just re-processing static pages.
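A minimal sketch of the “since” check that would have saved those credits. `PageStub` is a hypothetical shape (Notion pages do carry an `id` and an ISO-8601 `last_edited_time`), and where you persist the watermark between runs is up to you.

```typescript
// Hypothetical page summary from a Notion search or database query.
interface PageStub {
  id: string;
  last_edited_time: string; // ISO-8601 timestamp
}

// Keep only pages edited after the last successful sync, and compute the
// new watermark to store once those pages have been embedded.
function selectPagesToEmbed(
  pages: PageStub[],
  lastSyncedAt: string | null,
): { toEmbed: PageStub[]; nextWatermark: string | null } {
  const since = lastSyncedAt ? Date.parse(lastSyncedAt) : -Infinity;
  const toEmbed = pages.filter((p) => Date.parse(p.last_edited_time) > since);
  const newest = toEmbed.reduce(
    (max, p) => Math.max(max, Date.parse(p.last_edited_time)),
    since,
  );
  return {
    toEmbed,
    // Nothing changed: keep the old watermark instead of moving it
    nextWatermark: toEmbed.length > 0 ? new Date(newest).toISOString() : lastSyncedAt,
  };
}
```

Persist `nextWatermark` only after the embeds succeed; otherwise a failed run silently skips those pages next time. If your timestamps are coarse, subtracting a small overlap from the watermark is cheaper than missing an edit.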

Getting Notion data into Pinecone isn’t just about API calls; it’s about reconstructing the logic of your documents so a machine can understand them. It’s messy work, but once it’s running smoothly, having an AI that actually knows what’s in your docs is pretty sweet.

Common questions

Why do I lose nested content when ingesting Notion pages into Pinecone?

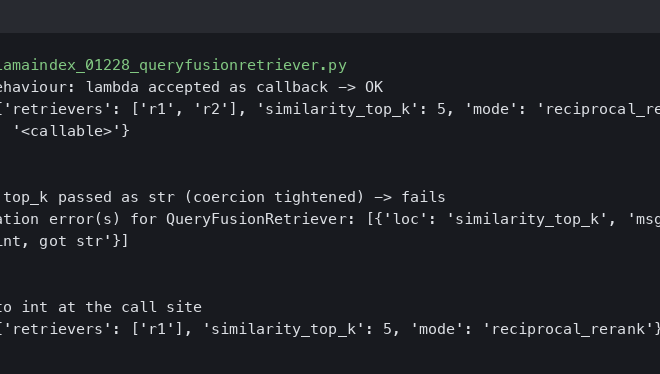

The Notion API is block-based and treats paragraphs as distinct entities, so grabbing only the plain_text property skips nested toggles, child databases, and columns. To preserve semantic structure, you need a recursive function that walks the block tree and checks block.has_children for each result. It doubles sync time, but without it you lose roughly half your data and the context an LLM needs.

Should I embed each Notion block as a separate vector in Pinecone?

Embedding individual blocks sounds granular but destroys context. Queries return orphaned bullet points like “Run the script” without the parent header explaining which script or project. The article’s author saw only 12% retrieval accuracy this way. Converting the entire page and its children to a single Markdown string first, then applying a sliding window chunker, lifted retrieval accuracy to 85%.

Why shouldn’t I run a Notion to Pinecone sync script on cron from my laptop?

Running the script manually or on a laptop cron means data goes stale instantly and the job dies whenever your machine sleeps. The article recommends moving sync logic into a background job framework such as Trigger.dev or Inngest, and decoupling the fetching step from the embedding step so each can be retried, scaled, and scheduled independently without losing progress.

How do I avoid re-embedding the same Notion pages and burning OpenAI credits?

You need a proper last_edited_time filter on your poller so only pages changed since the last sync get re-embedded. The article’s author left a poller running without a “since” timestamp filter and burned about $40 of OpenAI credits in one weekend re-processing static pages. Also push generous metadata, including author ID, into Pinecone so you can filter later instead of re-indexing.