Massive AI Models Are Failing. Small Fast.ai Builds Win.

I was staring at my AWS bill last Tuesday, trying to figure out how a simple image classification microservice managed to rack up $840 in three weeks. The answer was annoying but obvious. I was relying on a massive, expensive cloud endpoint for a job a local model could do practically for free.

We are seeing this exact problem play out across the entire tech industry right now. Huge, heavily funded AI products are quietly shutting down or scaling back. The math simply doesn’t work. You can’t spend millions on server compute when user downloads fall off a cliff after the initial novelty fades. The economics of running heavy generative models for everyday tasks are completely broken.

Which brings me back to the Fast.ai community.

Jeremy Howard and the Fast.ai crowd have been right about this for years. While everyone else was scrambling to hook their apps into the largest language and vision models available, Fast.ai kept teaching people how to train small, highly specific models on cheap hardware. Now that the venture capital subsidies for those big API calls are drying up, the Fast.ai approach looks less like a learning exercise and more like a survival strategy.

The Local Compute Reality Check

I decided to rip out the expensive third-party calls in my project. I spent maybe 45 minutes writing a script to handle the quality control image sorting we needed. I didn’t need a multi-billion parameter beast to tell me if a manufactured part had a scratch on it.

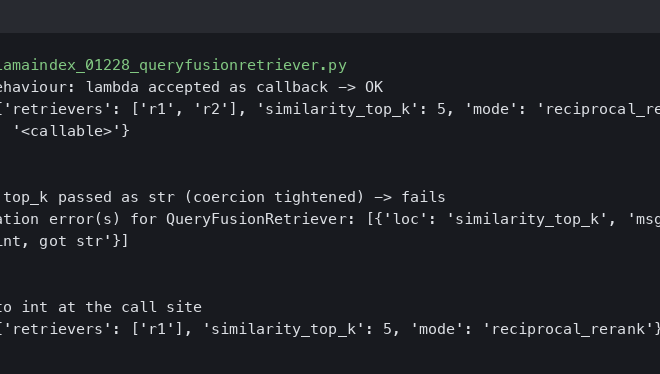

I just needed a basic convolutional neural network. So I set up a fresh environment with fastai 2.7.14 and PyTorch 2.3.0.

```python
from fastai.vision.all import *

# Point to the local folder of good/defective part images
# (from_folder labels each image by its parent folder name)
path = Path('./manufacturing_dataset')

# Set up the data loaders with basic augmentation
dls = ImageDataLoaders.from_folder(
    path,
    valid_pct=0.2,
    item_tfms=Resize(224),
    batch_tfms=aug_transforms()
)

# Use a lightweight pre-trained model
learn = vision_learner(dls, resnet34, metrics=error_rate)

# Plot loss against learning rate to sanity-check the base_lr used below
learn.lr_find()

# Train the new head for one frozen epoch, then all layers for 4 epochs
learn.fine_tune(4, base_lr=2e-3)

# Export for production
learn.export('qc_model.pkl')
```

I ran this on my local workstation, an RTX 4090 with 64GB RAM. It chewed through the data in minutes.
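For completeness, here is a minimal sketch of how the exported file might be used for sorting at inference time. The function names, the `incoming_parts` folder, and the `.jpg` extension are hypothetical, not from my actual service. It also assumes the default export location: `learn.export` writes under the learner's path, which with the script above should be the dataset folder.

```python
from pathlib import Path


def load_qc_model(model_path):
    """Load an exported learner. fastai is imported lazily so the
    helpers below can be imported and tested without it installed."""
    from fastai.vision.all import load_learner
    return load_learner(model_path)


def classify_images(learner, image_paths):
    """Return (filename, predicted label, confidence) for each image."""
    results = []
    for img_path in image_paths:
        # predict() returns (decoded label, class index, per-class probabilities)
        label, idx, probs = learner.predict(img_path)
        results.append((Path(img_path).name, str(label), float(probs[int(idx)])))
    return results


def run_batch(model_path, image_dir):
    """Classify every .jpg in image_dir and print one line per image."""
    learner = load_qc_model(model_path)
    images = sorted(Path(image_dir).glob("*.jpg"))
    for name, label, confidence in classify_images(learner, images):
        print(f"{name}: {label} ({confidence:.1%})")
```

Calling `run_batch("manufacturing_dataset/qc_model.pkl", "incoming_parts")` would then print a label and confidence per part image. Calling `predict` once per image is the simplest option, not necessarily the fastest. For large batches, building a test dataloader and calling `get_preds` is probably the better route.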

The Benchmark That Made Me Feel Stupid

Here is the specific breakdown that made me regret not doing this months ago.

I ran our standard 12,500-image dataset through the commercial API we were using. It took 4.2 hours due to rate limits and cost about $60 just for that one batch. Fine-tuning the local Fast.ai model on that same dataset took 8 minutes. Inference now takes roughly 14 milliseconds per image.
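If you want to check a per-image latency figure like that on your own hardware, a rough timing harness is enough. This is a sketch rather than the exact script I used. `measure_latency_ms` is a hypothetical helper that times any prediction callable, for example `learn.predict`, over a list of inputs after a few warm-up calls.

```python
import statistics
import time


def measure_latency_ms(predict_fn, inputs, warmup=3):
    """Time predict_fn on each item of a list and summarize in milliseconds.

    The first `warmup` items are run once untimed so that one-off costs
    (model loading, GPU initialization) do not skew the numbers.
    """
    for item in inputs[:warmup]:
        predict_fn(item)

    timings = []
    for item in inputs:
        start = time.perf_counter()
        predict_fn(item)
        timings.append((time.perf_counter() - start) * 1000.0)

    return {
        "calls": len(timings),
        "median_ms": statistics.median(timings),
        "mean_ms": statistics.fmean(timings),
    }
```

With a loaded learner, something like `measure_latency_ms(learn.predict, image_paths)` would report the median and mean time per image. The median is usually the more honest number, because a single slow call can drag the mean up.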

Frequently asked questions

Why are massive AI models failing while small Fast.ai builds succeed?

The economics of running heavy generative models for everyday tasks are broken. Venture capital subsidies for large API calls are drying up, and user downloads drop sharply after initial novelty fades. Meanwhile, Fast.ai’s approach of training small, highly specific models on cheap hardware has proven sustainable. What once looked like a learning exercise now functions as a survival strategy for developers facing unsustainable cloud compute bills.

How much faster is a local Fast.ai model compared to a commercial image classification API?

Running a 12,500-image dataset through a commercial API took 4.2 hours due to rate limits and cost around $60 for that single batch. Fine-tuning a pre-trained ResNet34 on the same dataset with Fast.ai took just 8 minutes on a local workstation. After training, inference dropped to roughly 14 milliseconds per image, eliminating both the rate-limiting bottleneck and the per-call charges entirely.
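To put those figures on a per-image footing, the arithmetic can be sketched as follows. The inputs are the numbers quoted above. The local batch time is an extrapolation that assumes images are processed one at a time at 14 ms each, and it leaves out the one-off 8 minutes of fine-tuning.

```python
# Figures quoted in the article
IMAGES = 12_500
API_HOURS = 4.2
API_COST_USD = 60.0          # approximate cost of one batch
LOCAL_MS_PER_IMAGE = 14.0    # approximate local inference latency

# Commercial API, including rate-limit waiting
api_seconds_per_image = API_HOURS * 3600 / IMAGES   # about 1.21 s
api_cost_per_image = API_COST_USD / IMAGES          # about $0.0048

# Local model (assumption: sequential, one image per call)
local_batch_minutes = LOCAL_MS_PER_IMAGE * IMAGES / 1000 / 60   # about 2.9 min

# Ratio of per-image wall-clock time, API versus local
speedup = api_seconds_per_image * 1000 / LOCAL_MS_PER_IMAGE     # about 86x

print(f"API:   {api_seconds_per_image:.2f} s/image, ${api_cost_per_image:.4f}/image")
print(f"Local: {local_batch_minutes:.1f} min for the full batch")
print(f"Speedup: roughly {speedup:.0f}x")
```

On these numbers the local model is roughly 86 times faster per image, and the full batch would finish in about three minutes instead of 4.2 hours.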

What Fast.ai code trains a ResNet34 model for quality control image sorting?

Using fastai 2.7.14 and PyTorch 2.3.0, you point ImageDataLoaders.from_folder at a dataset path with valid_pct=0.2, Resize(224), and aug_transforms(). Then create vision_learner(dls, resnet34, metrics=error_rate), call learn.lr_find() to check the learning rate, run learn.fine_tune(4, base_lr=2e-3), and finally call learn.export('qc_model.pkl') for production. The whole script takes roughly 45 minutes to write.

What hardware do you need to train a Fast.ai image classifier locally?

The author ran training on a local workstation equipped with an RTX 4090 GPU and 64GB of RAM, which fine-tuned the model on the 12,500-image dataset in about 8 minutes. The approach uses a lightweight pre-trained model (resnet34) rather than a multi-billion-parameter beast, meaning you do not need massive cloud infrastructure. A basic convolutional neural network handles tasks like detecting scratches on manufactured parts without expensive endpoints.