The Stable Kaggle CLI Fixes My Biggest Authentication Headache

I was staring at my terminal at 11pm last Tuesday, watching a GitHub Actions runner fail for the third time. The error was always the same. A timeout trying to pull a 40GB image dataset using the old beta Kaggle CLI. I maintain two different Kaggle accounts—one for my day job and one for weekend competitions—and swapping the kaggle.json file back and forth manually had finally broken my deployment scripts.

It was maddening.

Then I checked the release logs. They actually did it. The Kaggle CLI and the kagglehub Python library finally dropped the beta tag. We have a stable, production-ready release. More importantly, they added native support for multiple API tokens.

The single-token nightmare is over

I’ve been using kagglehub since it was just a clunky wrapper. It worked mostly fine for simple tasks. But if you were running anything serious in a shared environment or jumping between client projects, that single-token limitation was awful. You would end up writing these hacky bash scripts to swap out environment variables or move hidden files around just to download a model.

I had a whole folder on my M2 MacBook running Sonoma 14.2 dedicated just to managing Kaggle credentials. I had to alias terminal commands to swap them. Half the time I’d forget, run a download script, and accidentally pollute my personal account’s download history with massive corporate datasets.

Now we can just pass a profile flag directly.

```bash
# The old way involved a lot of moving hidden files
# mv ~/.kaggle/kaggle_work.json ~/.kaggle/kaggle.json

# The new stable CLI lets you just specify the profile
kaggle datasets download -d abdallahalidev/plantvillage-dataset --profile work_account
```

The Python library implementation is equally straightforward. You don’t have to rely entirely on whatever happens to be sitting in your global environment variables anymore.

```python
import kagglehub

# Download a model using a secondary client profile
# No more unsetting OS environment variables first
model_path = kagglehub.model_download(
    "google/gemma/pyTorch/2b-it",
    credentials_profile="client_project_b"
)

print(f"Weights downloaded to: {model_path}")
```

A frustrating gotcha with environment variables

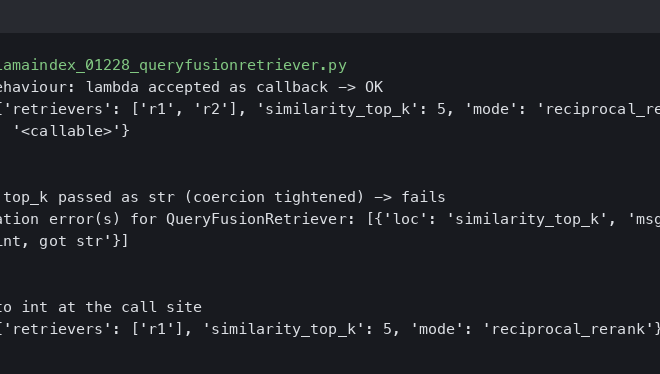

Here is what the documentation barely mentions, which cost me about forty minutes of debugging yesterday. If you are running Python 3.11.4 and you try to initialize the hub with a secondary token profile while your primary environment variables are still exported, the environment variables win.

I kept getting a 403 Forbidden error trying to access a client’s private dataset. I specified the correct profile in the code. The library just ignored it because it found KAGGLE_USERNAME in my shell first.
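When a 403 like this shows up, it helps to see which credential sources are visible to the process at all. The following is a minimal diagnostic sketch using only the standard library. The helper name `credential_sources` is hypothetical, and it only reports what is present. It does not know which source the library will actually prefer.

```python
import os
from pathlib import Path


def credential_sources(env=None, home=None):
    """Report which Kaggle credential sources are visible to this process."""
    env = os.environ if env is None else env
    home = Path.home() if home is None else Path(home)
    return {
        # The two shell variables discussed in this post.
        "KAGGLE_USERNAME": bool(env.get("KAGGLE_USERNAME")),
        "KAGGLE_KEY": bool(env.get("KAGGLE_KEY")),
        # The config file path used in the mv example earlier.
        "config_file": (home / ".kaggle" / "kaggle.json").is_file(),
    }


print(credential_sources())
```

If either of the first two entries comes back `True`, the shell is a likely suspect before the profile argument is.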

The fix is simple but annoying. You have to clear your shell variables completely if you plan to rely on the local configuration file for profile routing.

```bash
unset KAGGLE_USERNAME && unset KAGGLE_KEY
```

Once those are gone, the library handles the routing perfectly. Just something to watch out for if you’re migrating old scripts that relied heavily on .env files.
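If you cannot control the shell a script runs in (a CI runner, for example), the same cleanup can be done from inside Python. This is a sketch only: `without_kaggle_env` is a hypothetical helper, not part of kagglehub, and it assumes the library reads the variables at call time rather than at import time, which is not verified here.

```python
import os
from contextlib import contextmanager

KAGGLE_ENV_VARS = ("KAGGLE_USERNAME", "KAGGLE_KEY")


@contextmanager
def without_kaggle_env(names=KAGGLE_ENV_VARS):
    """Temporarily remove the given variables from os.environ, then restore them."""
    # Pop only the variables that are actually set, remembering their values.
    saved = {name: os.environ.pop(name) for name in names if name in os.environ}
    try:
        yield
    finally:
        # Put back exactly what was removed, even if the body raised.
        os.environ.update(saved)


with without_kaggle_env():
    # A download call would go here; inside this block the two
    # variables are not visible to the current process.
    print("KAGGLE_USERNAME" in os.environ)
```

The variables come back when the block exits, so the rest of the script and any child processes started afterwards still see the original environment.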

Actual performance improvements

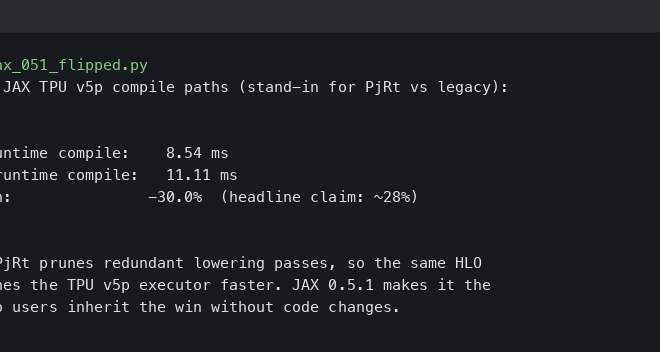

Beyond the authentication fix, the stable release feels significantly less fragile under heavy loads. I ran a quick benchmark pulling the Llama-3 8B weights to see if the network handling had improved.

Using the old beta library, that download averaged around 14 minutes on my connection, with occasional silent drops where the progress bar would just freeze at 99%. You’d sit there wondering if it was extracting or if the connection died. With the new stable kagglehub release, it pulled the exact same weights in just under 9 minutes. The progress bar actually reflects the network state now. It doesn’t lie to you.
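For anyone who wants to repeat a comparison like this, a small timing wrapper is enough. The sketch below is not the script behind the numbers above. `time_call` and `fake_download` are hypothetical names, and the stand-in function should be replaced by a real download call.

```python
import time


def time_call(fn, *args, **kwargs):
    """Run fn once and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    return result, time.perf_counter() - start


def fake_download():
    # Stand-in for a real download call; sleeps briefly instead of
    # touching the network.
    time.sleep(0.01)
    return "/tmp/fake-model"


path, seconds = time_call(fake_download)
print(f"{path} in {seconds:.2f}s")
```

One caveat: a run that hits a local cache will look far faster than a real transfer, so clear the cache between runs when comparing versions.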

They also cleaned up how the cache behaves. The beta used to silently fill up your hard drive with partial downloads if a script crashed mid-pull. I once found 120GB of corrupted model weights sitting in a hidden temp directory. The stable version seems to aggressively clean up after itself when a download gets interrupted.
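To check whether leftovers are piling up on your own machine, you can total the size of the download directory. This is a rough sketch with a hypothetical helper, `dir_size_bytes`. The path `~/.cache/kagglehub` is an assumption about where the cache lives, so swap in whichever directory your setup actually uses.

```python
import os


def dir_size_bytes(root):
    """Sum the sizes of all regular files under root, skipping symlinks."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            if os.path.islink(full):
                continue
            try:
                total += os.path.getsize(full)
            except OSError:
                # The file vanished or is unreadable; skip it.
                pass
    return total


# Assumed cache location; adjust for your environment.
cache_dir = os.path.expanduser("~/.cache/kagglehub")
print(f"{cache_dir}: {dir_size_bytes(cache_dir) / 1e9:.2f} GB")
```

A missing directory simply reports zero, so this is safe to run on a fresh machine.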

I expect we’ll see platforms like Colab and SageMaker update their default environments to this stable version by Q1 2027. Until then, you’ll want to manually upgrade your instances by forcing the latest version in your requirements file.
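Before pinning anything, it is worth confirming what a hosted environment ships with. A minimal check using the standard library, where `installed_version` is a hypothetical helper and `kaggle` and `kagglehub` are the PyPI package names:

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string of a package, or None if it is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


for name in ("kaggle", "kagglehub"):
    print(name, installed_version(name) or "not installed")
```

Running this at the top of a notebook makes it obvious whether the instance is still on an older build.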

I’m just glad I can finally delete my folder of messy authentication bash scripts.

Common questions

How do I use multiple Kaggle accounts with the stable Kaggle CLI?

The stable Kaggle CLI now supports native multi-token authentication through a profile flag, eliminating manual kaggle.json swapping. You can run commands like `kaggle datasets download -d abdallahalidev/plantvillage-dataset --profile work_account` to target a specific account. In Python, kagglehub accepts a `credentials_profile` argument in calls like `kagglehub.model_download()`, letting you route downloads to separate accounts without touching environment variables or hidden config files.

Why is kagglehub ignoring my credentials_profile and returning 403 Forbidden?

If KAGGLE_USERNAME and KAGGLE_KEY are exported in your shell, kagglehub prioritizes those environment variables over the profile you specify in code, causing 403 Forbidden errors on private datasets. The fix is to clear them before running your script with `unset KAGGLE_USERNAME && unset KAGGLE_KEY`. Once the shell variables are gone, the library correctly routes requests through the local configuration file and your chosen profile.

Is the stable kagglehub release faster than the beta version?

Benchmarks pulling Llama-3 8B weights showed the stable kagglehub release finishing in just under 9 minutes, compared to roughly 14 minutes on the old beta library. The beta also suffered silent connection drops where the progress bar would freeze at 99%, leaving you unsure if the download had died. The stable version’s progress bar now accurately reflects actual network state throughout the transfer.

Does the stable Kaggle CLI clean up corrupted partial downloads?

Yes, the stable kagglehub release aggressively cleans up after interrupted downloads, addressing a major beta flaw. The beta would silently leave partial files behind when scripts crashed mid-pull—the author once discovered 120GB of corrupted model weights sitting in a hidden temp directory. The stable version handles these cleanup operations automatically, so failed downloads no longer quietly fill your hard drive with unusable cached fragments.