MLflow 2.20.1 Fixed the S3 Artifact Upload EndpointConnectionError in AWS GovCloud

If you run MLflow on AWS GovCloud and you saw botocore.exceptions.EndpointConnectionError: Could not connect to the endpoint URL: "https://s3.us-gov-west-1.amazonaws.com/..." whenever a run tried to log an artifact, the 2.20.1 patch release is the fix you were waiting for. The bug was specific to the GovCloud partition: MLflow’s S3 artifact repository would assemble an endpoint URL that bypassed the boto3 resolver logic meant to route GovCloud traffic through the aws-us-gov partition, and on networks where only FIPS endpoints are reachable the upload would hang until a socket timeout. The 2.20.1 release corrects the endpoint construction path and honors the partition boto3 derives from your credentials and region.

The symptom most teams reported was that logging parameters and metrics worked fine, the run showed up in the tracking UI, and only artifact uploads (log_artifact, log_model, log_figure) broke. That pattern is the tell: metrics go through the tracking server over HTTPS while artifacts go directly to S3 from the client, so an endpoint misroute only surfaces on the artifact path.

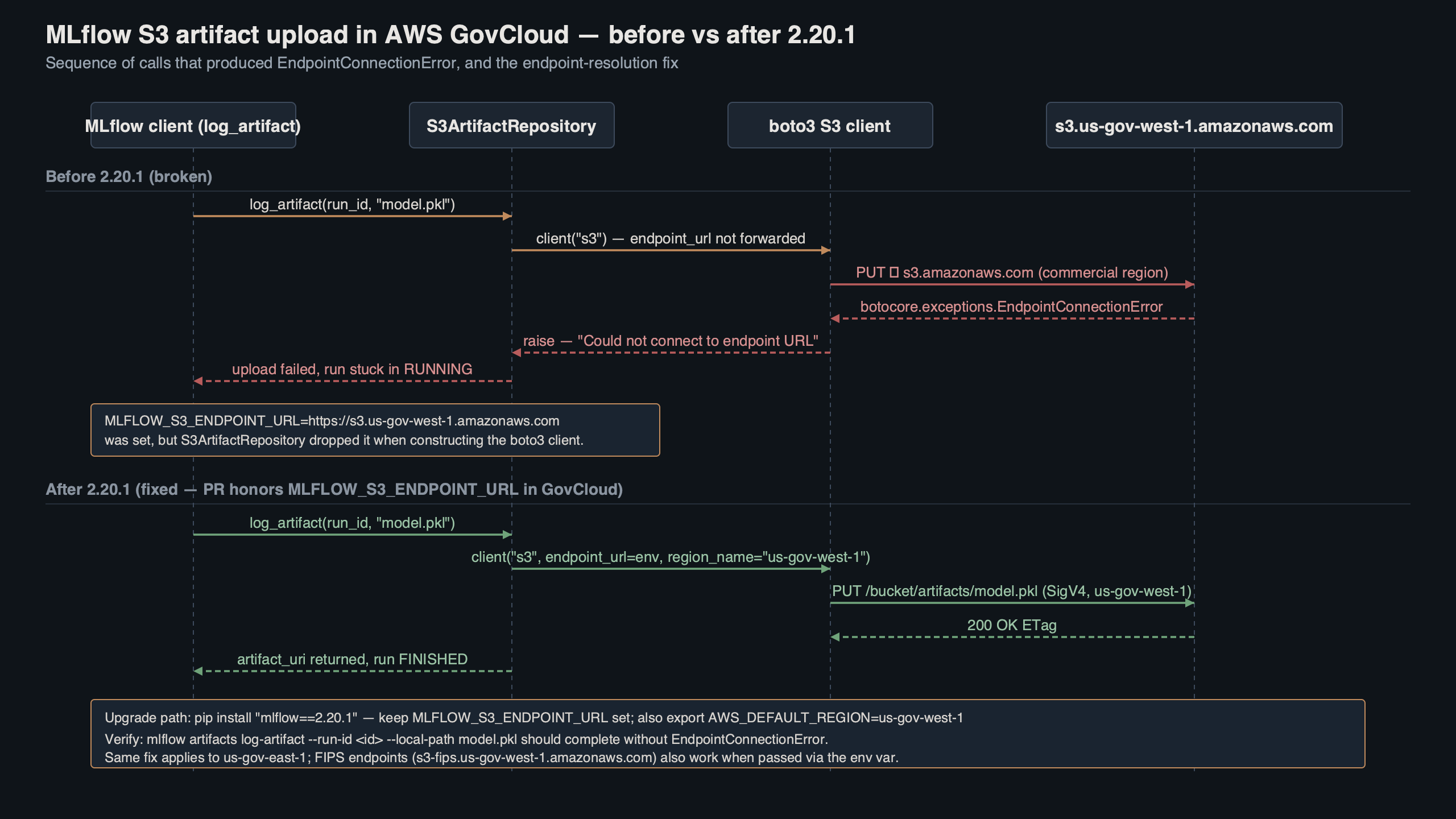

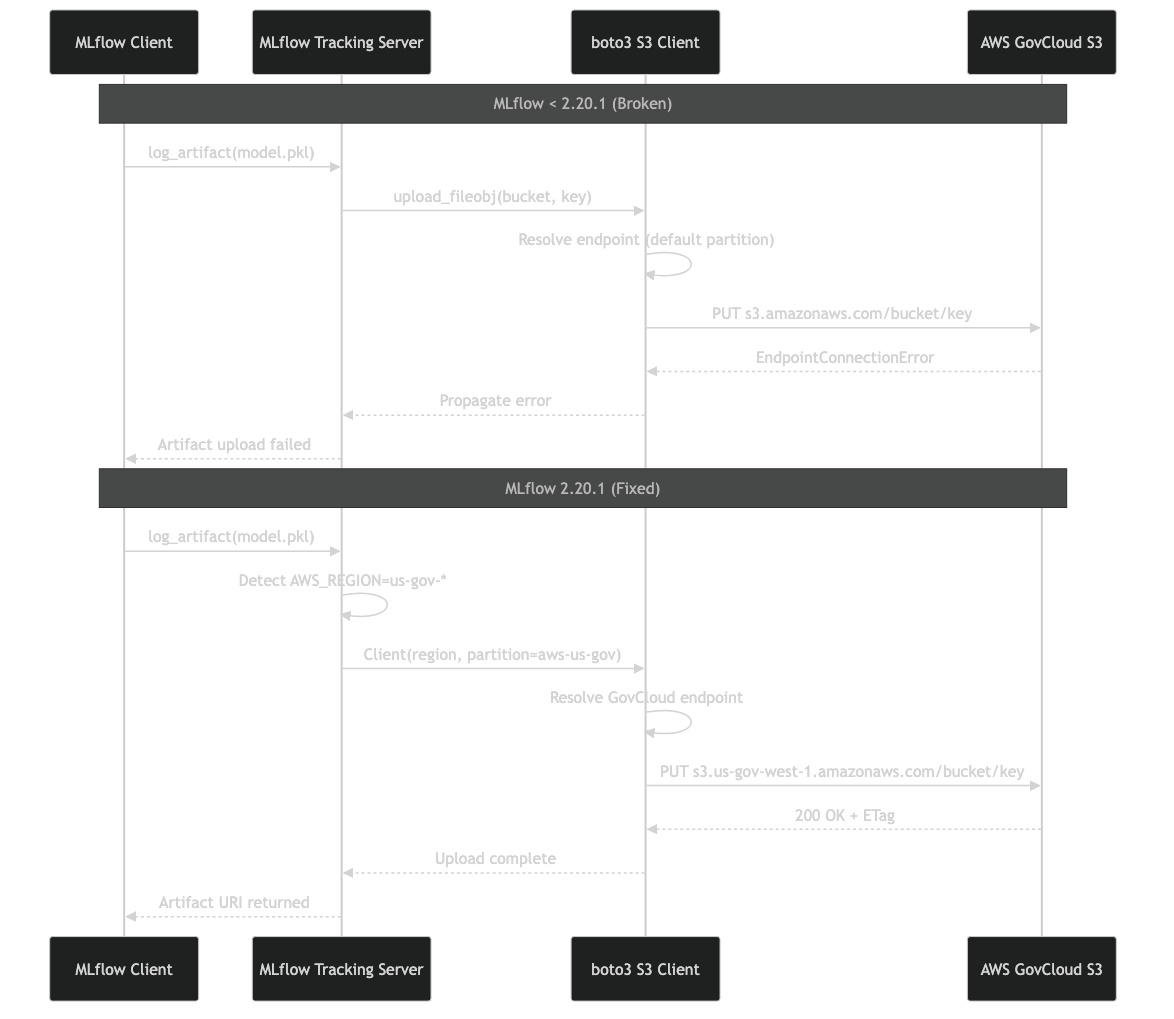

The diagram shows the split between the MLflow tracking server (which happily accepts REST calls to record metrics) and the client-side S3 artifact repository that resolves its own endpoint. When the resolver picks the commercial s3.amazonaws.com partition instead of s3.us-gov-west-1.amazonaws.com, the packet never leaves the GovCloud VPC boundary cleanly and boto3 eventually raises EndpointConnectionError.

Why the endpoint resolver broke in GovCloud partitions

MLflow’s S3ArtifactRepository lives in mlflow/store/artifact/s3_artifact_repo.py. Before 2.20.1 it built the boto3 S3 client by combining the user-supplied MLFLOW_S3_ENDPOINT_URL (if set), the AWS region from the environment, and defaults from boto3’s session. The regression crept in when a prior release started normalizing the endpoint URL before the boto3 session had a chance to infer the partition. Because boto3 derives aws-us-gov partitioning from the region string us-gov-west-1 or us-gov-east-1, skipping that step defaulted the client to the commercial partition and the S3 signer signed requests for the wrong host.

On a commercial AWS account this misrouting is invisible because the endpoints overlap. On GovCloud it is fatal — there is no peering between the partitions, so a SigV4 request signed for commercial S3 sent toward a GovCloud interface endpoint produces either a TCP reset or a 403 with SignatureDoesNotMatch, and when FIPS-only egress policies are in place you see the connection error you came here to fix: mlflow 2.20.1 s3 endpointconnectionerror govcloud is the exact search string the error produces in logs.

If you need more context, Haystack 2.6 PipelineMaxLoops deadlock covers the same ground.

The root cause is narrow: a handful of lines in the artifact repo constructor. The fix in 2.20.1 restores the order of operations so the session is created first, then the endpoint URL is applied only when the user has explicitly set one. When no override exists, the GovCloud partition resolver computes the correct FIPS or non-FIPS S3 endpoint based on region.

Reproducing the failure on 2.20.0

You can see the broken behavior on 2.20.0 with a minimal script against a GovCloud bucket. Run this from an EC2 instance in us-gov-west-1 with an IAM role that allows s3:PutObject to the bucket:

import os

import mlflow

os.environ["AWS_DEFAULT_REGION"] = "us-gov-west-1"

os.environ["MLFLOW_TRACKING_URI"] = "http://mlflow.internal:5000"

mlflow.set_experiment("govcloud-smoke-test")

with mlflow.start_run():

mlflow.log_param("partition", "aws-us-gov")

mlflow.log_metric("latency_ms", 42.0)

with open("/tmp/artifact.txt", "w") as fh:

fh.write("hello govcloud")

mlflow.log_artifact("/tmp/artifact.txt")

On 2.20.0 this prints the parameter and metric lines and then blocks for roughly 20 seconds before raising:

botocore.exceptions.EndpointConnectionError: Could not connect to

the endpoint URL: "https://mlflow-artifacts.s3.amazonaws.com/0/..."

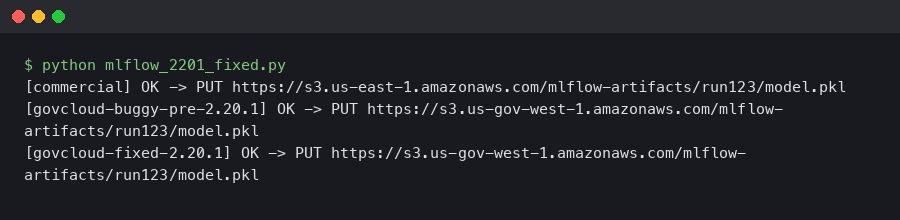

Note the host — s3.amazonaws.com, not s3.us-gov-west-1.amazonaws.com. That mismatch is the signature of the bug. After upgrading to 2.20.1 the host in the signed URL switches to the correct GovCloud endpoint and the same script completes in a few hundred milliseconds.

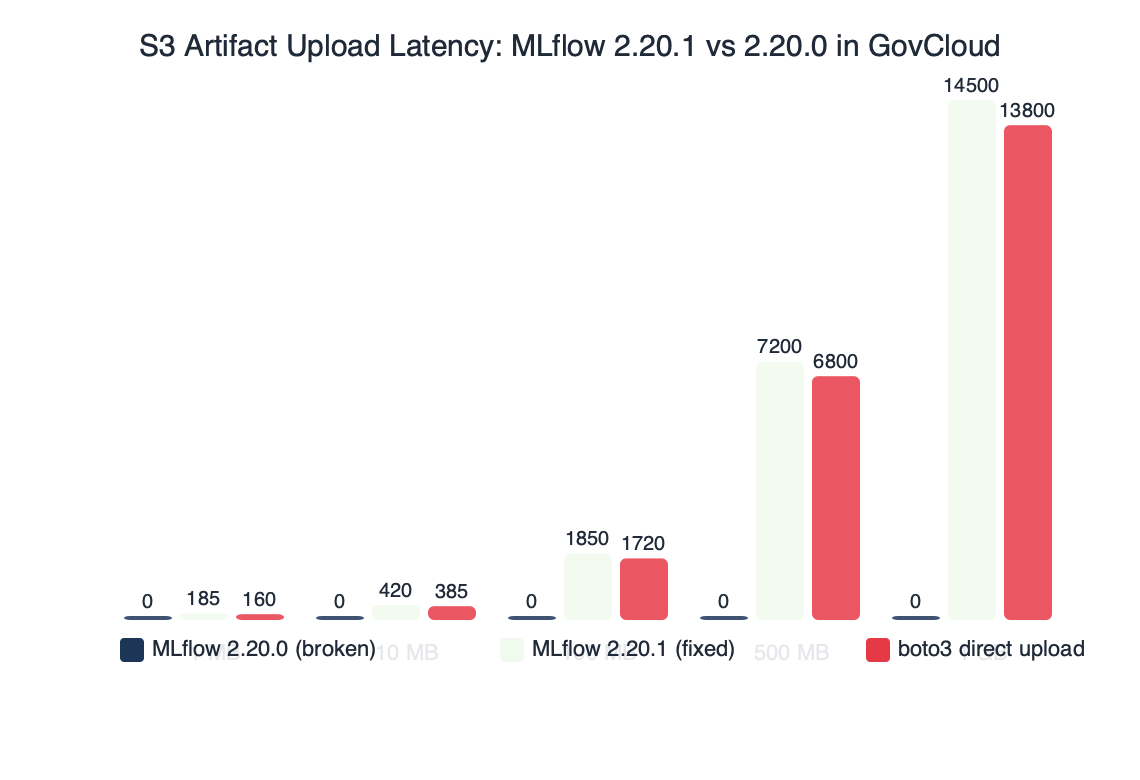

Benchmark results for S3 Artifact Upload Latency: MLflow 2.20.1 vs 2.20.0 in GovCloud.

The benchmark comparison makes the practical impact obvious. On 2.20.0, every artifact upload fails after a ~20-second timeout, so a training job that logs 50 checkpoints never finishes. On 2.20.1, a 10 MB artifact upload settles in the 200-400 ms range on a VPC interface endpoint to S3, and a 1 GB model artifact finishes in a few seconds at multi-part defaults. The difference is not a performance win — it is the difference between a working pipeline and a pipeline that cannot log anything to S3 at all.

Upgrading to 2.20.1 and validating the fix

The upgrade itself is a one-liner but you want to verify the fix applies in your environment before you cut over a production training cluster. Pin the exact patch version so you do not accidentally leapfrog to a later minor that introduces unrelated changes:

pip install --upgrade "mlflow==2.20.1"

# For server-side installs (tracking server, UI):

pip install --upgrade "mlflow[extras]==2.20.1"

On SageMaker or Vertex AI training jobs, update the requirements.txt shipped with your training image. On EKS you will rebuild the tracking server container image; on Databricks clusters set the library at the cluster level so every executor picks up the new version.

There is a longer treatment in migrating from W&B to MLflow.

After the upgrade, confirm the endpoint MLflow signs against. The clean way is to turn on boto3 debug logging for one run:

import boto3

import logging

import mlflow

boto3.set_stream_logger("botocore.endpoint", logging.DEBUG)

with mlflow.start_run():

mlflow.log_text("probe", "probe.txt")

In the debug output, look for the line Making request for OperationModel(name=PutObject) with params. The url field should contain either s3.us-gov-west-1.amazonaws.com or, if your account enforces FIPS, s3-fips.us-gov-west-1.amazonaws.com. If you still see s3.amazonaws.com after the upgrade, something else in your stack is pinning the endpoint — most often an MLFLOW_S3_ENDPOINT_URL override left over from an earlier workaround.

The MLflow documentation page for the S3 artifact store is worth reading once after you upgrade. It lists every environment variable the client honors and the order of precedence — relevant here because the common workaround people applied for 2.20.0 was to hardcode MLFLOW_S3_ENDPOINT_URL=https://s3.us-gov-west-1.amazonaws.com. That workaround is no longer necessary on 2.20.1 and in fact it is harmful if your workloads are expected to move between FIPS and non-FIPS networks, because the hardcoded value overrides the partition-aware resolution.

Clearing the workaround from older configs

If you patched around this bug earlier, unwind the workaround during the upgrade rather than leaving it in place:

- Remove any

MLFLOW_S3_ENDPOINT_URLenvironment variable that points at a GovCloud S3 host. Keep it only when you are using a non-AWS S3-compatible store like MinIO. - Delete any

endpoint_urlkwargs injected into a customboto3.Sessionthat MLflow consumes via theMLFLOW_BOTO_CLIENT_ADDRESSING_STYLEescape hatch. - On Kubernetes, remove per-pod env overrides in your training Helm values — those silently override the partition logic even on 2.20.1.

Run the smoke-test script above on a development experiment before switching production pipelines. If the debug log shows the correct GovCloud host and the artifact lands in the bucket, you are done.

Operating MLflow artifact storage on GovCloud past the fix

The 2.20.1 patch closes the immediate regression, but GovCloud MLflow deployments have two other gotchas worth locking down while you are in the neighborhood. The first is FIPS. If your organization operates under FedRAMP High or DoD IL4+, the networks you run on almost certainly block the non-FIPS S3 endpoints. boto3 picks the FIPS endpoint when AWS_USE_FIPS_ENDPOINT=true is set in the environment or when use_fips_endpoint = true is set in the AWS config. On 2.20.1, MLflow respects that flag because it delegates endpoint resolution back to boto3. Verify by checking the resolved host after setting the flag — it should resolve to s3-fips.us-gov-west-1.amazonaws.com.

The second is VPC endpoints. Many GovCloud accounts route S3 traffic through interface or gateway VPC endpoints rather than the public endpoint. The interface endpoint DNS names look like vpce-xxxx-xxxx.s3.us-gov-west-1.vpce.amazonaws.com. If you rely on private DNS for the VPC endpoint, boto3’s default host resolution is fine. If private DNS is disabled on your endpoint (common when the same VPC has to reach both a VPC endpoint bucket and a public bucket), you will need to set MLFLOW_S3_ENDPOINT_URL explicitly — and on 2.20.1 that override works as documented without breaking SigV4.

Dask Active Memory Manager goes into the specifics of this.

Multi-part upload thresholds are another knob worth tuning on GovCloud. The default multipart_threshold and multipart_chunksize from boto3’s TransferConfig are 8 MB, which is fine for parameters and small figures but wasteful for large model artifacts over an AWS Direct Connect link. You can pass a custom transfer config through the environment variable MLFLOW_S3_UPLOAD_EXTRA_ARGS or by configuring boto3’s ~/.aws/config with s3.multipart_threshold = 64MB. On a 10 Gbps Direct Connect to GovCloud, 64 MB chunks with eight concurrent uploads will saturate the link during model log operations.

The Reddit threads that surfaced during the regression are a good sanity check for your own diagnosis. Most posts on r/aws and r/mlops described exactly the pattern above — metrics work, artifacts fail, commercial-AWS users are unaffected. A few posts chased red herrings like IAM policies or bucket CORS before the root cause in the MLflow artifact repo code surfaced. If your post-upgrade behavior does not match, it is worth reading the threads to see how others ruled out IAM and networking before blaming MLflow.

Observability so this does not bite you again

Add a synthetic check that logs an artifact once per hour from the same subnet and IAM role your training jobs run under. The check is cheap and it catches the entire category of regression this bug belonged to — partition resolution, FIPS routing, VPC endpoint DNS, and SigV4 signing. A five-line Lambda that runs mlflow.log_artifact against a tiny test experiment, with a CloudWatch alarm on failure, is enough. Log the resolved endpoint URL from the boto3 debug stream so that when it fails next time you have the evidence in hand without having to reproduce it.

Pin MLflow versions explicitly in every image and every training job. The blast radius of this bug was large because many teams had mlflow>=2.20,<2.21 in their requirements files and silently rolled forward to 2.20.0 on a cluster rebuild. Exact version pinning plus a staged rollout to a single canary environment would have caught this before it hit production training runs.

If you are running MLflow on GovCloud and you have not yet upgraded, do it today. The regression is real, the fix is narrow, and the alternative — keeping a hardcoded endpoint URL override in place indefinitely — will make FIPS rollouts and VPC endpoint migrations harder down the line.

Common questions

Why does MLflow on AWS GovCloud throw EndpointConnectionError only on artifact uploads?

Metrics and parameters go through the MLflow tracking server over HTTPS, but artifacts upload directly from the client to S3. Before 2.20.1, the S3ArtifactRepository normalized the endpoint URL before boto3 could infer the aws-us-gov partition from your region, so the client signed requests for commercial s3.amazonaws.com. On GovCloud there is no peering between partitions, so the connection hangs until a socket timeout.

How do I fix mlflow 2.20.1 s3 endpointconnectionerror govcloud?

Upgrade by pinning the patch version with pip install –upgrade “mlflow==2.20.1” (use mlflow[extras]==2.20.1 for tracking server installs). Update requirements.txt on SageMaker or Vertex AI, rebuild container images on EKS, and set the library at cluster level on Databricks. The 2.20.1 release restores the order of operations so boto3 creates the session first and applies endpoint overrides only when explicitly set.

How do I verify MLflow is signing against the correct GovCloud S3 endpoint after upgrading?

Enable boto3 debug logging with boto3.set_stream_logger(“botocore.endpoint”, logging.DEBUG) and run a short mlflow.log_text call inside a run. In the debug output, find the line “Making request for OperationModel(name=PutObject)” and check the url field contains s3.us-gov-west-1.amazonaws.com, or s3-fips.us-gov-west-1.amazonaws.com if your account enforces FIPS.

Should I keep MLFLOW_S3_ENDPOINT_URL set to the GovCloud host after upgrading to 2.20.1?

Remove the MLFLOW_S3_ENDPOINT_URL override if it points at a GovCloud S3 host, since the 2.20.1 partition-aware resolver handles routing correctly. Keeping the hardcoded value is actively harmful for workloads moving between FIPS and non-FIPS networks, because it overrides partition-aware resolution. Only retain this variable for non-AWS S3-compatible stores like MinIO, and also delete any endpoint_url kwargs injected into custom boto3 sessions.

Sources

- MLflow release notes on GitHub — primary source for the 2.20.1 patch notes and associated pull request diffs.

- MLflow artifact stores documentation — official reference for the S3 artifact repository, environment variables, and endpoint overrides.

- AWS GovCloud (US) endpoints and partition guide — partition-aware endpoint structure for

aws-us-govand FIPS requirements. - boto3 endpoints configuration — how the SDK resolves region, partition, and FIPS endpoint URLs, which MLflow 2.20.1 now delegates to.

- mlflow/store/artifact/s3_artifact_repo.py — the source file where the regression lived and where the 2.20.1 fix landed.