How I Cut FLUX.1 Inference to 3 Seconds with TensorRT

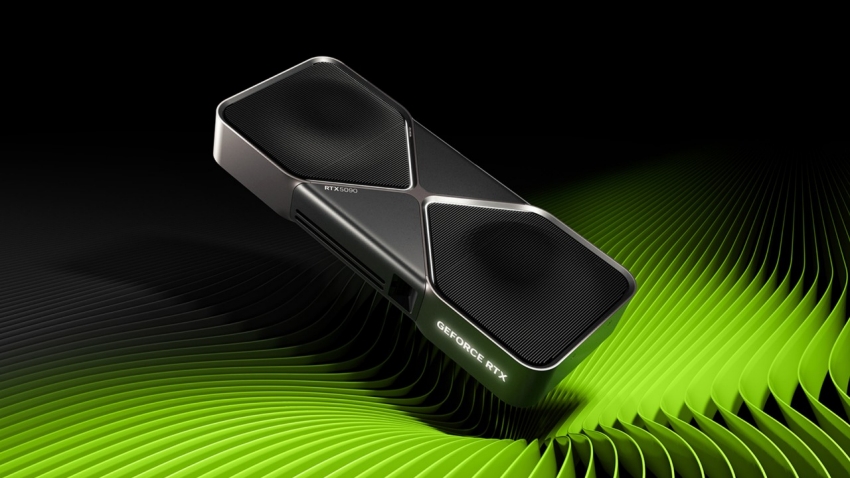

I was staring at my terminal at 1:30 AM last Thursday, watching my RTX 4090 scream at 98% utilization while spitting out a single 1024×1024 image every 15 seconds. FLUX.1 is gorgeous, but running it locally via standard PyTorch felt like trying to commute in a tank.

If you’ve messed with FLUX.1 since the weights dropped, you know the drill. The prompt adherence is insanely accurate. The text rendering actually works. But the compute cost? Brutal. On my setup (Ubuntu 24.04, Python 3.11.8, 64GB RAM), a standard 20-step generation was eating up around 14.2 seconds. I wanted to build a rapid-iteration sketching tool for a client. Waiting 14 seconds per frame meant the project was dead in the water.

Then I finally got the new NVIDIA TensorRT acceleration pipeline working.

The Compilation Nightmare

I saw the recent updates about NVIDIA pushing RTX AI acceleration specifically for FLUX.1. I figured it would be a quick package update and I’d be off to the races. I was completely wrong.

Here is the massive gotcha they don’t warn you about in the standard quickstart docs. When you compile the TensorRT engine for the FLUX context batching, it spikes your system RAM hard. I’m not talking about VRAM—I mean your actual system memory.

I hit an Error: ENOMEM crash three times in a row. My 64GB of system memory wasn’t enough to handle the engine building phase for the FP8 quantized model. I had to temporarily bump my Linux swap file to 128GB just to get through the 40-minute compilation process. If you are trying to build this engine on a machine with 32GB of RAM, do not even attempt it without setting up a massive swap drive first.
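A pre-flight check along these lines can save a wasted build attempt. This is a minimal sketch, not part of NVIDIA's tooling: the function names are made up for illustration, and the 180 GiB threshold is an assumption set just below the 64GB RAM plus 128GB swap setup that worked here, since the real peak was never measured. It only parses the standard Linux `/proc/meminfo` format.

```python
# ASSUMPTION: not a measured requirement, just slightly under the
# 64GB RAM + 128GB swap configuration that got through the build.
ASSUMED_REQUIRED_GIB = 180.0


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo-style text into {field_name: numeric_value}.

    Memory fields are reported in kB; a few fields (e.g. HugePages_Total)
    are plain counts with no unit.
    """
    values = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])
    return values


def ram_plus_swap_gib(meminfo: dict[str, int]) -> float:
    """Total physical RAM plus total swap, converted from kB to GiB."""
    total_kb = meminfo.get("MemTotal", 0) + meminfo.get("SwapTotal", 0)
    return total_kb / 1024**2


def check_build_headroom(meminfo_text: str, required_gib: float) -> bool:
    """Return True if RAM + swap meets the assumed requirement."""
    return ram_plus_swap_gib(parse_meminfo(meminfo_text)) >= required_gib
```

On the build machine, passing the contents of `/proc/meminfo` and `ASSUMED_REQUIRED_GIB` to `check_build_headroom` before kicking off the compile gives a quick yes/no. Tune the threshold to whatever your own build turns out to need.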

Running the Compiled Engine

Once you actually survive the compilation step, the implementation is fairly straightforward. I’m using TensorRT 10.0.1 here alongside the updated diffusers library.

```python
import torch
from diffusers import FluxPipeline
from trt_flux_wrapper import TensorRTFluxPipeline  # NVIDIA's helper class

# Load the pre-built TRT engine we suffered to compile
engine_path = "./models/flux1-dev-trt-fp8-engine"
print("Loading TRT engine... this will hang for about 8 seconds.")

pipe = TensorRTFluxPipeline.from_engine(
    engine_path,
    torch_dtype=torch.float16
)
pipe.to("cuda")

prompt = "A neon-lit cyberpunk coffee shop, high contrast, highly detailed, 8k resolution"

# The actual generation call
with torch.inference_mode():
    image = pipe(
        prompt,
        num_inference_steps=20,
        guidance_scale=3.5
    ).images[0]

image.save("output_fast.png")
```

A quick note on the code above: the initial load time into VRAM takes noticeably longer than a standard PyTorch model load. Don’t kill the script thinking it froze. It’s just mapping the engine.
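If you want proof the script is alive rather than frozen, timing the load and the generation separately helps. Here is a small sketch using only the standard library. The `stopwatch` helper and `fake_stage` are hypothetical names, and the `time.sleep` calls stand in for the real engine load and `pipe(...)` call.

```python
import time
from contextlib import contextmanager


@contextmanager
def stopwatch(label, timings):
    """Store the wall-clock duration of the enclosed block in timings[label]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        # Recorded even if the block raises, so failed stages still show up.
        timings[label] = time.perf_counter() - start


def fake_stage(seconds):
    """Hypothetical stand-in for a slow stage such as the engine load."""
    time.sleep(seconds)


timings = {}
with stopwatch("load", timings):
    fake_stage(0.05)  # in the real script: the from_engine(...) call
with stopwatch("generate", timings):
    fake_stage(0.02)  # in the real script: the pipe(...) call

for label, seconds in timings.items():
    print(f"{label}: {seconds:.2f}s")
```

Keeping the two numbers apart also matters for benchmarking: the one-time load cost shouldn't get folded into the per-image figure.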

The Payoff

Once the engine was loaded, the difference was stupid.

I ran the exact same 20-step prompt from my baseline test. The generation finished in 3.1 seconds, down from 14.2. That’s roughly a 4.6x speedup just from swapping the backend. Better yet, it dropped my active VRAM usage by about 38% during inference. Instead of constantly threatening to OOM my 24GB card and crashing my desktop environment, it hovered comfortably around 14.5GB.

I also tested it on a batch size of 4. The standard PyTorch implementation took just over 52 seconds. The TensorRT engine chewed through the batch in 11.4 seconds.
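For anyone who wants to sanity-check the numbers, here they are worked through. This only uses the figures reported in this post; the implied PyTorch VRAM number is derived from the "about 38%" drop, not measured separately, and the variable names are just for illustration.

```python
# Figures reported in this post.
pytorch_single_s = 14.2
trt_single_s = 3.1
pytorch_batch4_s = 52.0   # "just over 52 seconds", so treat as a lower bound
trt_batch4_s = 11.4
trt_vram_gb = 14.5
vram_reduction = 0.38     # "about 38%"

single_speedup = pytorch_single_s / trt_single_s
batch_speedup = pytorch_batch4_s / trt_batch4_s
trt_per_image_in_batch_s = trt_batch4_s / 4
# Derived, not measured: the PyTorch VRAM level that a 38% drop to 14.5GB implies.
implied_pytorch_vram_gb = trt_vram_gb / (1 - vram_reduction)

print(f"single-image speedup: {single_speedup:.2f}x")               # 4.58x
print(f"batch-of-4 speedup:   {batch_speedup:.2f}x")                # 4.56x
print(f"TRT per image in batch: {trt_per_image_in_batch_s:.2f}s")   # 2.85s
print(f"implied PyTorch VRAM: {implied_pytorch_vram_gb:.1f}GB")     # 23.4GB
```

The single-image and batch speedups land within a few hundredths of each other, and the implied 23.4GB baseline lines up with a 24GB card that was constantly on the edge of OOM.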

Look, if you just want to generate a funny picture of a cat in an astronaut suit once a week, don’t bother with TensorRT. The 40-minute compilation time and the massive storage footprint for the engine files aren’t worth it. Stick to the cloud APIs or standard local inference.

But if you’re building local UI tools, running bulk generations, or trying to squeeze every drop of performance out of your hardware, the upfront pain of compiling the engine pays off immediately. Just make sure you fix your swap space before you start the build.
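To put a rough number on "pays off", here is a back-of-the-envelope break-even sketch using the timings from this post. It is deliberately simplified: it ignores the engine load time, failed build attempts, and disk footprint, and `break_even_images` is a hypothetical helper name.

```python
import math


def break_even_images(compile_s: float, baseline_s: float, fast_s: float) -> int:
    """Number of images after which the time saved covers the compile cost."""
    saved_per_image_s = baseline_s - fast_s
    if saved_per_image_s <= 0:
        raise ValueError("the fast path must be faster than the baseline")
    return math.ceil(compile_s / saved_per_image_s)


compile_s = 40 * 60  # the 40-minute engine build
images = break_even_images(compile_s, baseline_s=14.2, fast_s=3.1)
print(f"break-even after {images} images")  # 217
```

At around 217 single-image generations the build has paid for itself in wall-clock time. That is a once-a-week hobbyist's output for years, or an afternoon of work in a rapid-iteration tool.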

FAQ

How much faster is FLUX.1 with TensorRT compared to standard PyTorch?

On an RTX 4090 running a 20-step generation at 1024×1024, standard PyTorch took about 14.2 seconds per image, while the TensorRT-compiled engine finished the same prompt in 3.1 seconds, roughly a 4.6x speedup. Batch generation showed similar gains: a batch of 4 images dropped from just over 52 seconds in PyTorch to 11.4 seconds with TensorRT.

Why does compiling a FLUX.1 TensorRT engine crash with ENOMEM on 64GB of RAM?

Building the TensorRT engine for FLUX context batching spikes system RAM (not VRAM) hard. For the FP8 quantized model, the roughly 40-minute build needed more than the 64GB of system memory available, which triggered ENOMEM crashes three times in a row. The fix was temporarily bumping the Linux swap file to 128GB. On a 32GB machine, do not attempt the build without a massive swap drive configured first.

How much VRAM does FLUX.1 use with TensorRT on a 24GB RTX 4090?

Active VRAM usage during inference dropped roughly 38% compared to standard PyTorch, hovering comfortably around 14.5GB on a 24GB card. Before the switch, the PyTorch implementation constantly threatened to OOM the 24GB card and crash the desktop environment. The reduced footprint makes it far safer for sustained local UI work and bulk generations on a single consumer GPU.

Is TensorRT worth it for occasional FLUX.1 image generation?

For one-off casual use — like generating a funny cat-in-an-astronaut-suit image once a week — TensorRT isn’t worth it. The 40-minute compilation time and the massive storage footprint of the engine files outweigh the gains; cloud APIs or standard local inference are better. TensorRT pays off when building local UI tools, running bulk generations, or squeezing maximum performance from the hardware.